The AI landscape has shifted dramatically. Three major players now compete for developer attention: Anthropic’s Claude, OpenAI’s GPT series, and the disruptive newcomer DeepSeek.

But which model actually delivers? The answer isn’t straightforward. Each brings different strengths to the table, and your best choice depends entirely on what you’re building.

Let’s break down how these models stack up across the metrics that actually matter.

Model Lineups: What You’re Actually Choosing Between

Understanding the current model offerings is step one. These companies don’t just have “one model”—they’ve built entire families with different performance tiers.

Claude’s Current Roster

Anthropic offers three main models as of early 2026. Claude Opus 4.6 represents their most intelligent model, specifically designed for building agents and complex coding tasks. Claude Sonnet 4.6 balances speed with intelligence, making it their recommended daily driver. Claude Haiku 4.5 is the fastest option with near-frontier intelligence.

Claude Opus 4.6 and Claude Sonnet 4.6 (and some Sonnet 4.x variants) support a 1M token context window in beta across the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry.

OpenAI’s Expanded Lineup

OpenAI’s portfolio has grown substantially. Their flagship GPT-5.2 targets coding and agentic tasks across industries, with a 400,000 token context window and knowledge cutoff of August 31, 2025. Input costs $1.75 per million tokens, with output at $14.00 per million tokens. Cached input drops to just $0.18 per million tokens.

GPT-4.1 serves as their smartest non-reasoning model, with an impressive 1,047,576 token context window and knowledge cutoff of June 01, 2024. Standard pricing sits at $2.00 per million input tokens and $8.00 per million output tokens.

The lineup extends downward with GPT-5-mini ($0.25 input, $2.00 output per million tokens) and GPT-5-nano ($0.05 input, $0.40 output per million tokens) for budget-conscious applications.

DeepSeek’s Lean Approach

DeepSeek keeps things simpler. Their DeepSeek-V3.2 comes in two modes: deepseek-chat (non-thinking mode) and deepseek-reasoner (thinking mode). Both run on the same base model with a 128K context window.

According to the official DeepSeek API documentation, deepseek-chat defaults to 4K max output (8K maximum), while deepseek-reasoner allows 32K default output (64K maximum). The pricing structure is remarkably aggressive: $0.028 per million input tokens with cache hits, $0.28 per million standard input tokens, and $0.42 per million output tokens.

DeepSeek-V3.2-Speciale pushes reasoning capabilities even further, achieving gold-level performance in competitions like IMO, CMO, ICPC World Finals, and IOI 2025. It’s currently API-only without tool-use support.

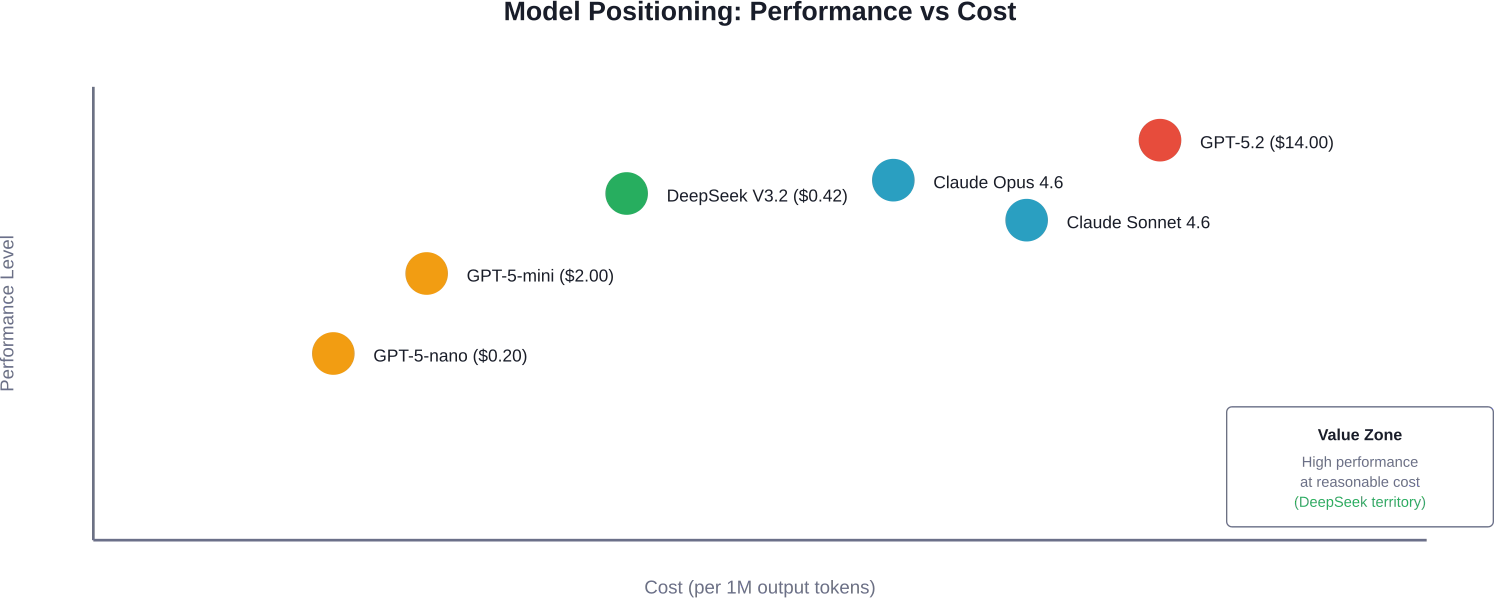

Cost-performance positioning of major AI models as of early 2026, showing DeepSeek’s competitive pricing advantage

Coding Performance: Where the Rubber Meets the Road

Developers care about one thing above all: can this model actually write good code?

According to research from arXiv comparing these models on coding tasks, DeepSeek achieved competitive performance at dramatically lower costs, while Claude generally costs significantly more per token. That’s a compelling value proposition for cost-conscious teams.

Real talk: the gap between these models on coding tasks has narrowed dramatically. GPT-4.1 provides balanced coding capabilities with strong Azure integration if you’re already in the Microsoft ecosystem. Claude Opus excels at understanding complex codebases and providing thoughtful refactoring suggestions.

But here’s where it gets interesting. According to benchmark data, DeepSeek R1 achieved 65.9 on LiveCodeBench (Pass@1-COT), with OpenAI o1-1217 at 63.4 and Claude-3.5-Sonnet at 33.8, while GPT-4o-0513 scored 34.2.

| Model | HumanEval Score | LiveCodeBench | Best Use Case

|

|---|---|---|---|

| DeepSeek R1 | 85%+ | 65.9 | Budget-conscious coding tasks |

| GPT-5.2 | High | ~63-65 | Agentic coding workflows |

| Claude Opus 4.6 | Competitive | N/A | Complex refactoring |

| OpenAI o1-1217 | High | 63.4 | Reasoning-heavy tasks |

What About Real-World Coding?

Benchmarks tell one story. Actual development work tells another.

Community discussions reveal that Claude tends to excel at maintaining consistent code style across large projects. GPT-5 handles complex architectural decisions well, especially when you need to reason through multiple implementation approaches. DeepSeek surprises developers with its ability to understand context despite its lower price point.

The truth? For straightforward CRUD applications and standard web development patterns, all three perform admirably. The differences emerge when you’re debugging subtle concurrency issues or refactoring legacy systems.

Reasoning Capabilities: How Deep Do They Think?

OpenAI’s o-series models were explicitly trained to “think longer” and produce chain-of-thought reasoning before answering. This yields strong logical reasoning on complex problems.

DeepSeek V3.2 in reasoning mode (deepseek-reasoner) competes directly in this space. The model achieved gold-level results in mathematical olympiads and competitive programming contests. DeepSeek-V3.2-Speciale maxes out reasoning capabilities to rival advanced models like Gemini-3.0-Pro, though it requires higher token usage.

Claude’s approach differs slightly. Rather than extended chain-of-thought visible to users, Claude uses adaptive thinking—dynamically deciding when and how much to think based on task complexity.

According to academic research from arXiv, when comparing these models on scientific computing tasks, each showed distinct reasoning patterns. The study evaluated performance across multiple domains, finding that model choice significantly impacted results depending on the specific reasoning type required.

The Pricing Reality Check

Cost matters. Especially when you’re processing millions of tokens monthly.

Let’s get specific with the numbers from official pricing pages.

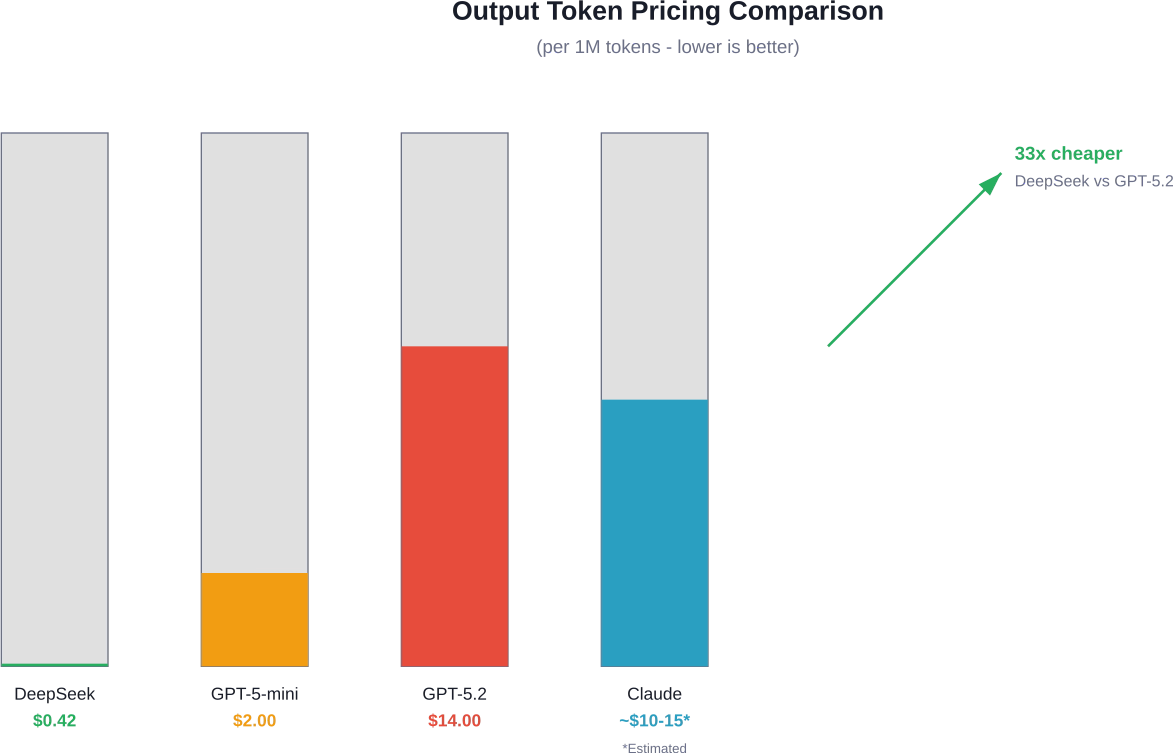

OpenAI Pricing Structure

GPT-5.2 standard processing costs $1.75 per million input tokens and $14.00 per million output tokens. Cached input drops to $0.175 per million tokens. The Batch API offers 50% savings, making input $0.875 and output $7.00 per million tokens.

GPT-5-mini provides a more economical option at $0.25 input and $2.00 output per million tokens (standard rates). GPT-5-nano undercuts everything at $0.025 input and $0.20 output per million tokens.

The pro models cost substantially more. GPT-5.2-pro runs $21.00 input and $168.00 output per million tokens.

Claude’s Pricing (Based on Historical Patterns)

While official current pricing for Claude Opus 4.6 wasn’t specified in the documentation provided, research from arXiv noted that Claude generally costs more than other AI approaches for similar tasks.

Current Claude API pricing information is available from Anthropic’s official documentation.

DeepSeek’s Aggressive Pricing

DeepSeek undercuts everyone substantially. According to their official API documentation, standard pricing is $0.28 per million input tokens and $0.42 per million output tokens. With cache hits, input drops to just $0.028 per million tokens.

That’s roughly 5-50 times cheaper than comparable models, depending on configuration.

Output token pricing comparison showing DeepSeek’s dramatic cost advantage over competing models

Context Windows and Memory

How much information can these models hold in their “working memory” during a conversation?

- Claude leads with a 1 million token context window in beta. That’s enough to fit several full novels or an entire large codebase. This makes Claude particularly effective for tasks requiring analysis of massive documents or long-running conversations.

- GPT-5.2 offers 400,000 tokens, while GPT-4.1 offers 1,047,576 token context window. These are both substantial—more than enough for most real-world applications.

- DeepSeek V3.2 provides 128K tokens, which is smaller but still adequate for the majority of tasks. Most developers won’t hit this limit in typical usage.

The practical impact? If you’re building tools that analyze entire repositories, process long legal documents, or maintain very long conversations, Claude or GPT-4.1 have the edge. For standard chatbot applications or focused coding tasks, DeepSeek’s 128K works fine.

Ecosystem and Integration

Models don’t exist in isolation. Integration matters.

OpenAI’s Ecosystem Advantage

OpenAI’s models integrate deeply with Microsoft Azure, GitHub Copilot, and countless third-party tools. The GPT ecosystem is mature, with extensive documentation, community resources, and pre-built integrations.

Function calling, structured outputs, fine-tuning, distillation, and predicted outputs are all supported. The v1/chat/completions endpoint has become a de facto standard that many tools support.

Claude’s Growing Presence

Claude is available through multiple channels: Claude API directly from Anthropic, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. This multi-cloud approach provides flexibility.

Anthropic has introduced Agent Skills, which are modular capabilities that extend Claude’s functionality. Each skill packages instructions, metadata, and optional resources that Claude uses automatically when relevant.

DeepSeek’s Compatibility Play

DeepSeek’s API intentionally mimics OpenAI’s format. According to their official documentation, you can use the OpenAI SDK or any OpenAI-compatible software with DeepSeek by simply changing the base_url to https://api.deepseek.com and providing a DeepSeek API key.

This compatibility means many existing tools work with DeepSeek immediately, lowering the switching cost.

Safety, Alignment, and Transparency

Not all models approach safety the same way.

- Claude has built a reputation for careful safety alignment. Anthropic’s Constitutional AI approach aims to make models helpful, harmless, and honest. In practice, this sometimes means Claude refuses requests that other models would attempt, which some users find overly cautious.

- OpenAI employs extensive reinforcement learning from human feedback (RLHF) and safety testing. They’ve been more willing to push boundaries while maintaining guardrails.

- DeepSeek published technical documentation explaining their model mechanisms and training methods, promoting transparency. However, as a newer entrant, their long-term safety track record is still being established.

For enterprise applications in regulated industries, Claude’s conservative approach may be advantageous. For research and experimentation, GPT’s balance of capability and safety works well. DeepSeek’s open approach appeals to developers who want to understand what’s happening under the hood.

Enterprise Considerations: Which Model for Business?

Choosing an AI model for enterprise use involves different criteria than personal projects.

Total Cost of Ownership

Don’t just look at per-token pricing. Consider volume discounts, caching benefits, and the cost of developer time. A model that costs 3x more but reduces debugging time by 40% may be the better investment.

DeepSeek’s pricing makes it attractive for high-volume applications where cost per interaction dominates. Claude’s accuracy may justify higher costs for customer-facing applications where errors are expensive. GPT’s ecosystem integration can reduce development time, offsetting higher API costs.

Reliability and Uptime

OpenAI has faced occasional outages during peak usage. Claude’s multi-cloud availability through AWS, GCP, and Azure provides redundancy options. DeepSeek, as a newer service, has limited track record data.

For mission-critical applications, multi-model strategies are becoming common. Use Claude as primary with GPT as fallback, or route simple queries to DeepSeek and complex ones to more expensive models.

Data Privacy and Compliance

Check each provider’s data handling policies carefully. Claude through Amazon Bedrock or Google Vertex AI may offer different compliance certifications than using the direct API. OpenAI’s Azure deployment provides enterprise-grade security features. DeepSeek’s data policies should be reviewed based on your specific regulatory requirements.

| Factor | Best for Claude | Best for GPT | Best for DeepSeek

|

|---|---|---|---|

| Budget Priority | Low | Medium | High |

| Ecosystem Integration | Medium | High | Medium |

| Safety Requirements | High | Medium | Medium |

| Context Window Needs | Very High (1M) | High (400K-1M) | Medium (128K) |

| Reasoning Tasks | High | Very High | High |

| Documentation Quality | High | Very High | Good |

Known Limitations and Weaknesses

Every model has blindspots. Knowing them helps you work around them.

Claude’s Quirks

Claude can be overly cautious, refusing benign requests due to safety filters. It sometimes provides more verbose explanations than necessary. The higher pricing limits use cases where cost per token is critical.

GPT’s Challenges

GPT models occasionally “hallucinate” information confidently. The reasoning models can be slower due to extended thinking time. Pricing for pro versions puts them out of reach for many applications.

DeepSeek’s Growing Pains

As a newer platform, DeepSeek has less community knowledge and fewer third-party integrations. The smaller context window limits some applications. Long-term reliability and support remain questions as the service matures.

Performance Benchmarks: The Numbers

Benchmarks provide standardized comparison points, though real-world performance varies.

Research from Georgetown University’s Center for Security and Emerging Technology emphasizes that evaluations are “still at a very early stage” and should be interpreted carefully. Popular benchmarks include MMLU (Measuring Massive Multitask Language Understanding) with multiple-choice questions from professional exams, and GPQA (Graduate-Level Google-Proof Q&A) with expert-written questions.

According to various sources, DeepSeek V3 competes effectively on coding benchmarks while maintaining dramatically lower costs. GPT-5 series models lead on reasoning-heavy evaluations. Claude performs strongly on nuanced language tasks and long-document understanding.

The takeaway? Benchmark scores matter, but they don’t tell the whole story. Test models on your specific use cases before committing.

User Experience and Interface

Developer experience matters as much as raw capability.

OpenAI’s playground and documentation are polished and comprehensive. The API is well-documented with extensive examples. For GPT-5.2, the free tier is not supported; usage tiers have defined TPM limits (e.g., Tier 5 shows up to 40,000,000 TPM).

Claude’s documentation is similarly thorough, with clear model comparison tables and feature descriptions. The multi-cloud approach means you might interact with Claude through different interfaces depending on your deployment choice.

DeepSeek’s documentation is functional but less extensive. The OpenAI compatibility helps, as many tutorials and examples work with minimal modification.

Which Model Should You Actually Choose?

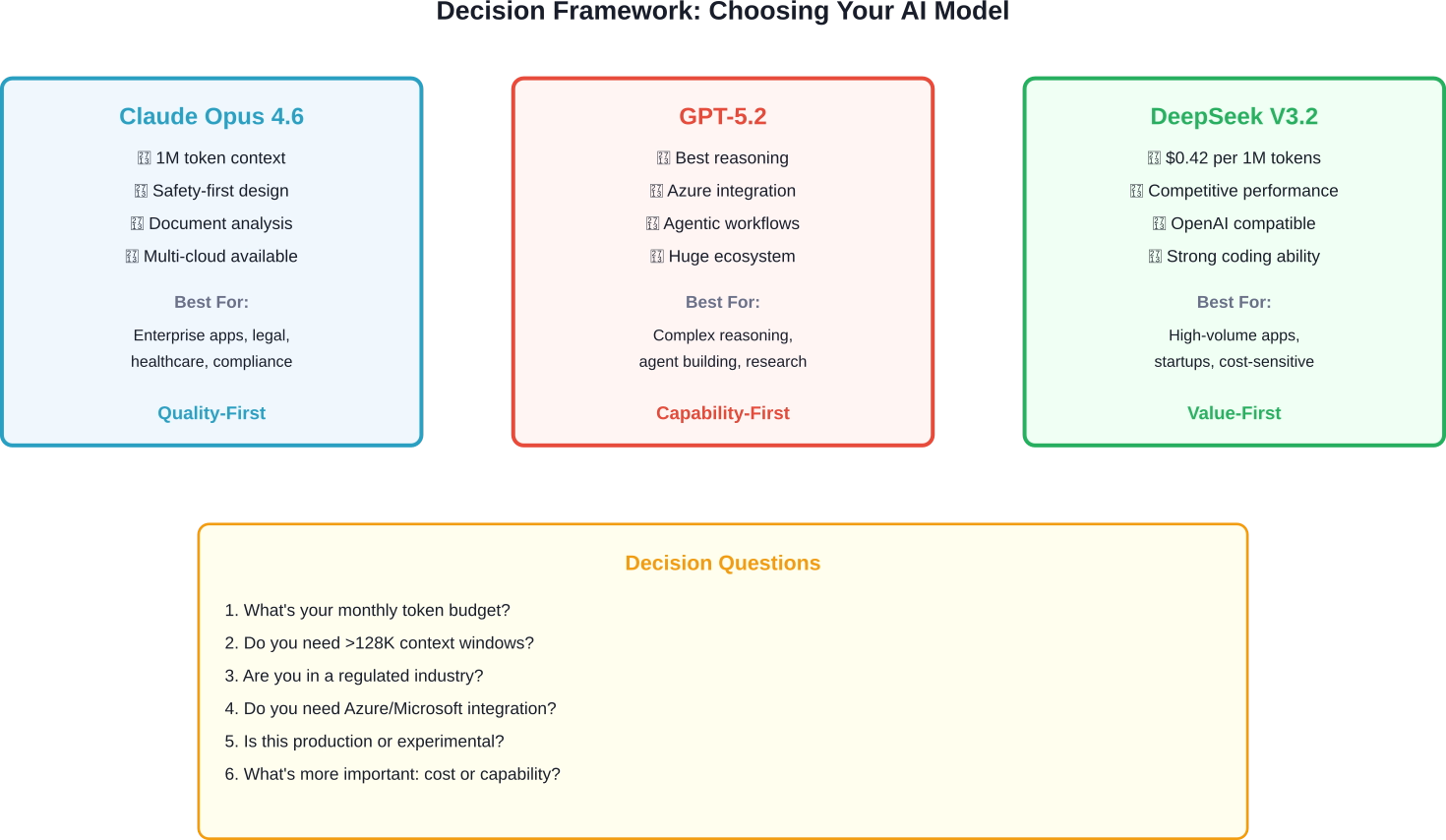

Here’s the thing: there’s no universal “best” model. Your choice depends on your specific needs.

Choose Claude If…

You need maximum context window for processing large documents. Safety and careful output generation are priorities. You’re building applications in sensitive domains where conservative behavior is advantageous. Budget is less constrained than quality requirements.

Choose GPT If…

You need deep ecosystem integration with Microsoft, Azure, or GitHub tools. Reasoning capabilities are central to your use case. You want the most extensive documentation and community support. You’re building agentic systems that need sophisticated planning.

Choose DeepSeek If…

Cost efficiency is a primary concern. You’re processing high volumes where per-token costs add up quickly. You need competitive performance without premium pricing. You’re comfortable with a newer platform and want OpenAI compatibility.

Framework for selecting the right AI model based on your specific requirements and constraints

Multi-Model Strategies

Many sophisticated applications don’t pick just one model.

A routing approach can optimize both cost and quality. Use DeepSeek for simple queries, Claude for complex analysis requiring long context, and GPT for tasks needing deep reasoning. This requires building routing logic but can reduce costs by 60% or more while maintaining quality.

Another strategy: use cheaper models for generating initial drafts, then use premium models for refinement and quality checking. Or run the same prompt through multiple models and use voting or ensemble methods for critical decisions.

The overhead of managing multiple models is decreasing as tools like Crazyrouter and similar services make it easy to test different models with the same code.

Navigating the AI Frontier with AI Superior

As the gap between reasoning capabilities and cost-efficiency narrows, the challenge for most enterprises shifts from choosing a model to successfully implementing it. At AI Superior, our team of Ph.D.-level Data Scientists and Software Engineers specializes in bridging this gap through end-to-end AI application development and strategic consulting. We help organizations move beyond simple API calls by building custom, high-performance systems that integrate these frontier models into existing workflows, ensuring that your choice of architecture—whether it involves Claude’s massive context windows or DeepSeek’s cost-effective reasoning—translates into tangible business value.

Our systematic approach focuses on identifying the specific areas where machine learning can drive long-term efficiency, from computer vision to predictive analytics. We understand that in a landscape as volatile as 2026, a “one-size-fits-all” model strategy rarely works. Instead, our team works closely with you through a rigorous discovery and MVP process to scale solutions that are robust, reliable, and tailored to your industry’s unique regulatory and data requirements.

Future Outlook: What’s Coming

The AI model landscape continues evolving rapidly.

- OpenAI continues releasing incremental improvements to their model family. The gap between reasoning models and standard models appears to be narrowing. Pricing pressure from competitors like DeepSeek may force adjustments.

- Anthropic is expanding Claude’s availability across cloud providers and adding features like Agent Skills. The 1 million token context window in beta suggests they’re pushing boundaries on input handling.

- DeepSeek is positioned as a disruptor, proving that competitive performance doesn’t require premium pricing. Their V3.2-Speciale model achieving gold-level results in programming competitions demonstrates they’re not just about cost—they’re pushing capability too.

Expect continued model improvements, pricing competition, and consolidation of capabilities across providers. The differences between these models will likely narrow on benchmarks while diverging on specialized use cases.

Conclusion: Making Your Choice

The competition between Claude, GPT, and DeepSeek benefits everyone. Prices are dropping, capabilities are increasing, and the gap between premium and budget options is narrowing.

Your decision ultimately comes down to priorities. If you’re building something where intelligence matters more than cost—research applications, complex reasoning tasks, sophisticated agents—GPT-5.2 or Claude Opus 4.6 justify their premium pricing.

If you’re processing high volumes and need cost efficiency without sacrificing too much capability, DeepSeek offers remarkable value. The $0.42 per million output tokens pricing changes the economics of AI applications.

And increasingly, the smart move isn’t choosing one model—it’s architecting your application to use the right model for each task.

The best way forward? Test all three on your specific use cases. Most offer free tiers or credits for initial testing. Run your actual prompts, measure the outputs, calculate the costs, and make your decision based on data rather than marketing claims.

Ready to start testing? Check the official documentation for Claude API, OpenAI Platform, and DeepSeek API to get your keys and begin experimenting today.

Frequently Asked Questions

Is DeepSeek as good as GPT-4 or Claude?

For many tasks, yes. DeepSeek V3.2 achieves competitive performance on coding benchmarks like HumanEval while costing dramatically less. Research data shows it reached strong performance levels on HumanEval at significantly lower costs than Claude. However, GPT and Claude may still have advantages on tasks requiring maximum reasoning capability or very long context windows beyond 128K tokens.

Which AI model is best for coding in 2026?

It depends on your specific needs. DeepSeek R1 scored highest on LiveCodeBench (65.9), making it excellent for coding tasks at low cost. GPT-5.2 excels at agentic workflows and complex architectural decisions. Claude Opus 4.6 is strong for understanding and refactoring large codebases. For most developers, DeepSeek offers the best value, while GPT provides the best ecosystem integration.

How much does it cost to use these AI models?

Pricing varies significantly. According to official pricing pages, DeepSeek costs $0.28 input and $0.42 output per million tokens (standard rates). GPT-5.2 costs $1.75 input and $14.00 output per million tokens. GPT-5-mini is $0.25 input and $2.00 output per million tokens. Claude pricing varies by deployment method—check Anthropic’s official documentation for current rates. DeepSeek is roughly 5-50 times cheaper than comparable models.

Can I use DeepSeek with my existing OpenAI code?

Yes. According to DeepSeek’s official API documentation, their API uses an OpenAI-compatible format. You can use the OpenAI SDK or any OpenAI-compatible software with DeepSeek by changing the base_url to https://api.deepseek.com and providing your DeepSeek API key. Most existing code should work with minimal modifications.

Which model has the longest context window?

Claude currently offers a 1 million token context window in beta. GPT-4.1 provides 1,047,576 tokens, slightly exceeding Claude. GPT-5.2 offers 400,000 tokens. DeepSeek V3.2 has 128K tokens, which is smaller but sufficient for most applications. For tasks requiring analysis of extremely large documents or very long conversations, Claude or GPT-4.1 have the advantage.

Are these models safe for enterprise use?

All three have been deployed in enterprise environments, but with different considerations. Claude emphasizes safety alignment and is popular in regulated industries. OpenAI offers enterprise deployments through Azure with additional security features. DeepSeek is newer with less established track record. For enterprise use, evaluate each provider’s data handling policies, compliance certifications, and service level agreements based on your specific requirements. Multi-cloud deployments of Claude through AWS, GCP, or Azure may provide additional compliance options.