Key Points: AI cost estimation in 2026 spans multiple dimensions: training costs from $50,000 to millions depending on model complexity, cloud infrastructure reaching $250,000+ annually for large-scale deployments, and hidden expenses like data acquisition, risk management, and ongoing inference costs. Organizations must account for both direct technical costs and indirect factors like regulatory compliance, cybersecurity, and resource allocation when budgeting AI projects.

How much does it actually cost to build and deploy an AI system in 2026? The answer isn’t what most people expect.

Market studies show that AI development costs range from $50k – $500k+ depending on the complexity and scope of the project. But here’s the thing—that’s just the starting point. The real cost picture involves infrastructure, data acquisition, ongoing management, and a host of hidden expenses that catch organizations off guard.

This guide breaks down the complete cost structure of AI projects with real pricing data from cloud providers, development frameworks, and industry deployments. No fluff, just the numbers and frameworks needed for accurate budgeting.

The Real Cost Components of AI Development

When CFOs ask about AI costs, they’re usually thinking about model development. That’s actually just one piece—and often not the biggest one.

According to the National Institute of Standards and Technology’s AI Risk Management Framework, effective AI governance requires comprehensive resource planning that extends far beyond initial development. The framework emphasizes that trustworthy AI systems demand ongoing investment in monitoring, validation, and risk mitigation.

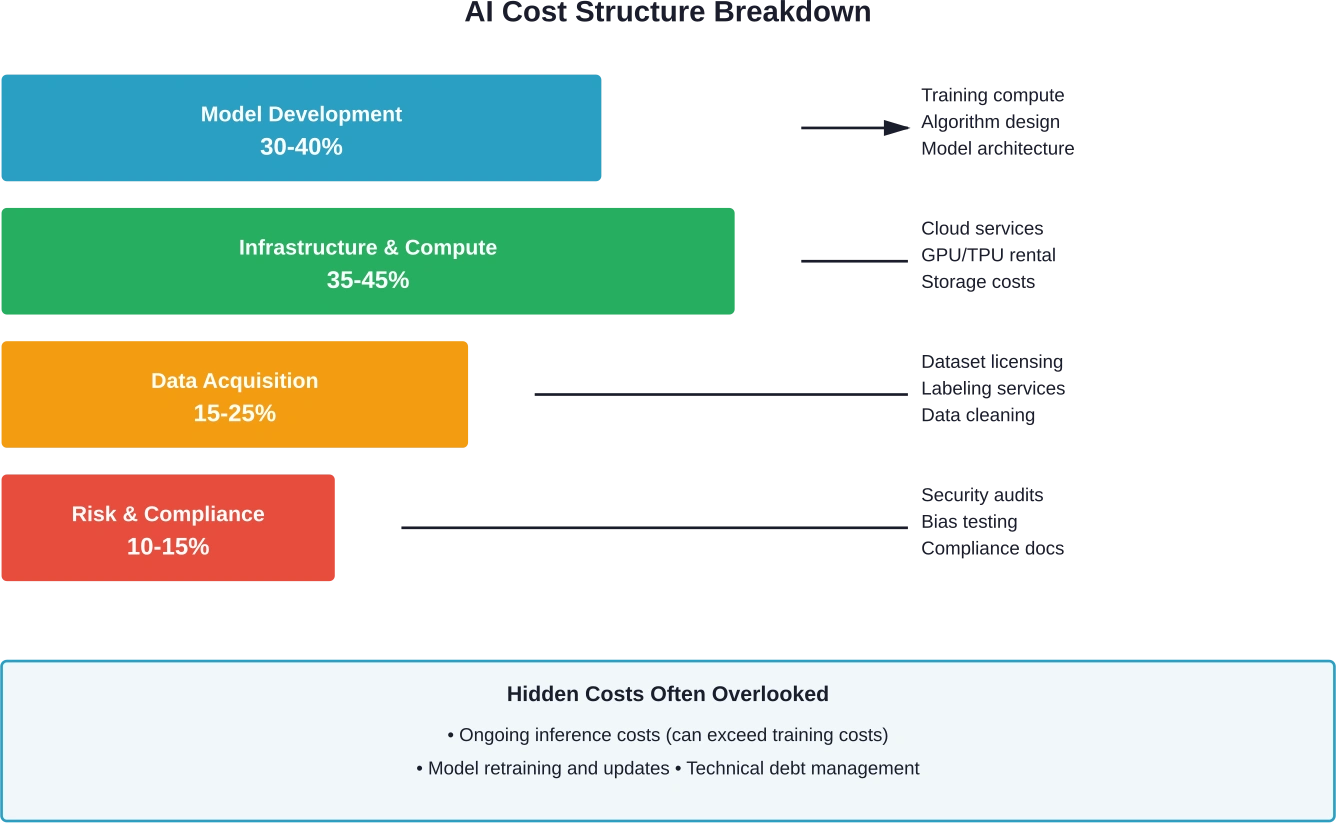

Breaking down the actual cost structure reveals four major categories:

Model Complexity and Training Costs

The complexity of AI models accounts for 30-40% of total project costs. Building large-scale models from scratch requires vast computational resources and significant financial investment.

Research on resource costs published by the Sustainable AI Lab at Bonn University found that training a model like GPT-4 requires between 1,174 and 8,800 A100 GPUs depending on Model FLOPs Utilization (MFU) and hardware lifespan. That corresponds to extracting and eventually disposing of up to 7 tons of toxic elements—a hidden environmental and financial cost rarely included in initial estimates.

But wait. Recent cost accounting research reveals a major loophole organizations use to obscure true development expenses: model distillation. DeepSeek-V3, for instance, was produced partly by distilling the more powerful DeepSeek-R1, yet its widely quoted $6 million budget doesn’t include the parent model’s development expenses.

Cloud Infrastructure and Compute Costs

Infrastructure represents the most predictable, and often the largest, ongoing expense. An example AWS cost estimate for AI infrastructure shows monthly costs easily reaching five figures:

| Service Component | Monthly Cost (USD) | 12-Month Cost (USD) |
|---|---|---|
| Amazon EC2 (compute instances) | 20,959.76 | 251,517.10 |
| Elastic Block Store (EBS) | 1,233.29 | 14,799.48 |
| S3 Standard (storage) | 471.04 | 5,652.48 |
| VPN Connection | 275.00 | 3,300.00 |
| Total Infrastructure | 22,939.09 | 275,269.06 |

These infrastructure costs assume 12 hours of daily usage over 30 days. Running systems 24/7 would roughly double the EC2 compute line, which makes up over 90% of the total, so the overall bill would come close to doubling.
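A minimal Python sketch of the annualization and the 24/7 adjustment, using the line items from the table. It assumes only the EC2 line scales with running hours and treats EBS, S3, and the VPN connection as fixed monthly charges; `monthly_total` is a hypothetical helper, not part of any AWS tool.

```python
# Monthly line items from the AWS example table (USD).
# Assumption: only EC2 compute scales with running hours; EBS, S3,
# and the VPN line are treated as fixed monthly charges.
MONTHLY_COSTS = {
    "ec2": 20_959.76,
    "ebs": 1_233.29,
    "s3": 471.04,
    "vpn": 275.00,
}
HOURS_PER_DAY_BASELINE = 12  # the table's usage assumption


def monthly_total(hours_per_day: float = HOURS_PER_DAY_BASELINE) -> float:
    """Estimate the monthly bill when EC2 usage scales linearly with hours."""
    scale = hours_per_day / HOURS_PER_DAY_BASELINE
    total = 0.0
    for item, cost in MONTHLY_COSTS.items():
        total += cost * scale if item == "ec2" else cost
    return round(total, 2)


baseline = monthly_total(12)
always_on = monthly_total(24)
print(f"12 h/day: ${baseline:,.2f}/month, ${baseline * 12:,.2f}/year")
print(f"24/7:     ${always_on:,.2f}/month, ${always_on * 12:,.2f}/year")
```

Under these assumptions, always-on operation comes to about $43,900 per month, roughly 1.9 times the 12-hour baseline. Annualizing the rounded monthly total lands a few cents away from the table's 12-month figure, presumably a rounding difference in the source.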

Data Acquisition and Preparation

Data represents the least understood input in AI production. As AI labs exhaust public data sources, they’re turning to proprietary data with deals reaching hundreds of millions of dollars.

Research from Open Data Labs on ‘The Economics of AI Training Data: A Research Agenda’ establishes data economics as a coherent field, documenting how data is currently exchanged and priced. The challenge? Data doesn’t behave like traditional production inputs. Its value changes based on context, quality, recency, and uniqueness—making cost estimation particularly complex.

Real talk: most organizations underestimate data costs by 50% or more. Acquisition is just the beginning. Cleaning, labeling, validation, and ongoing updates all carry substantial price tags.

Risk Management and Compliance Costs

The National Institute of Standards and Technology published a Generative AI Profile in July 2024 (NIST.AI.600-1) as a companion to the AI Risk Management Framework. This cross-sectoral resource emphasizes that generative AI introduces unique risks requiring specific mitigation strategies and associated costs.

Risk management isn’t optional anymore. It’s a mandatory budget line item. Organizations must account for:

- Security vulnerability assessments and remediation

- Bias testing and fairness audits

- Regulatory compliance documentation

- Model monitoring and drift detection

- Incident response planning

Research cited in a Brookings Institution report found that companies with high cybersecurity exposure significantly underperform in the stock market, with roughly 0.33% lower returns per month. These digital vulnerabilities impose real economic costs that don't neatly appear in GDP, but they directly affect AI project budgets.

Platform-Specific Pricing Models

Different AI platforms and services use wildly different pricing structures. Understanding these models is essential for accurate cost estimation.

API-Based Model Pricing

For organizations using pre-trained models through APIs, costs scale with usage volume. The FinOps Foundation provides comparison data for monthly costs assuming 12 hours daily usage over 30 days:

| Platform & Model | Monthly Cost (USD) | Use Case |
|---|---|---|
| OpenAI GPT-3.5 Turbo 16K | $90.00 | General-purpose, cost-effective |
| OpenAI GPT-4 8K | $2,700.00 | Complex reasoning tasks |
| Amazon Bedrock Cohere Command | $117.00 | Enterprise integration |
| Amazon Bedrock Claude Instant | $187.20 | Fast response applications |

Notice the 30x price difference between GPT-3.5 and GPT-4? That’s not a typo. Model capability directly drives cost—which means choosing the right model for each task matters enormously for budget control.
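To see how much model selection matters, here is a small Python sketch that blends the table's monthly figures across a routing policy. It assumes each table figure represents the full workload on that one model and that cost scales linearly with the share of requests routed to it; the 80/20 split is a hypothetical example, and `blended_monthly_cost` is an illustrative helper.

```python
# Monthly costs from the FinOps Foundation comparison (USD),
# each assuming the full workload runs on that one model.
MODEL_MONTHLY_COST = {
    "gpt-3.5-turbo-16k": 90.00,
    "gpt-4-8k": 2_700.00,
}


def blended_monthly_cost(traffic_share: dict[str, float]) -> float:
    """Estimate monthly spend when traffic is split across models.

    Assumption: cost scales linearly with the share of requests each
    model handles (same request mix and token counts per request).
    """
    if abs(sum(traffic_share.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(MODEL_MONTHLY_COST[m] * share for m, share in traffic_share.items())


ratio = MODEL_MONTHLY_COST["gpt-4-8k"] / MODEL_MONTHLY_COST["gpt-3.5-turbo-16k"]
print(f"GPT-4 8K costs {ratio:.0f}x GPT-3.5 Turbo 16K")

# Hypothetical policy: 80% of requests to the cheaper model, 20% to GPT-4.
routed = blended_monthly_cost({"gpt-3.5-turbo-16k": 0.8, "gpt-4-8k": 0.2})
print(f"Blended: ${routed:,.2f}/month vs ${MODEL_MONTHLY_COST['gpt-4-8k']:,.2f} all-GPT-4")
```

With this split the sketch estimates about $612 per month, under a quarter of the all-GPT-4 cost. Real savings depend on whether the cheaper model can actually handle the requests routed to it.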

The Inference Cost Trap

Here’s what catches most organizations off guard: inference costs can dwarf training expenses.

Research on supervised training economics found that zero-shot vision-language models demonstrate 52.3% accuracy on diverse product categories. However, the analysis showed that custom model training only justifies investment beyond 55 million inferences—equivalent to processing 151,000 images daily for one year.

Below that threshold? Building and running a custom model costs more than its accuracy gains are worth. Organizations would save money using off-the-shelf solutions despite lower accuracy.

This fundamentally changes how cost estimation should work. The question isn’t just “what does training cost?” It’s “at what usage volume does custom development become cost-effective?”
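The break-even question reduces to a one-line formula: custom development pays off once per-inference savings over an API cover the up-front training cost, N* = C_train / (c_api - c_custom). The Python sketch below implements that cost-only comparison (it puts no value on accuracy differences) with hypothetical prices, then converts the cited 55 million threshold into a daily volume.

```python
def break_even_inferences(
    training_cost: float,
    api_cost_per_1k: float,
    custom_cost_per_1k: float,
) -> float:
    """Inference volume at which a custom model's up-front training cost is
    recovered by its lower per-inference serving cost.

    Cost-only comparison: it ignores the value of any accuracy difference.
    """
    savings_per_1k = api_cost_per_1k - custom_cost_per_1k
    if savings_per_1k <= 0:
        return float("inf")  # custom serving never gets cheaper than the API
    return training_cost / savings_per_1k * 1_000


# Hypothetical inputs, for illustration only.
n = break_even_inferences(training_cost=200_000, api_cost_per_1k=4.0, custom_cost_per_1k=1.5)
print(f"Break-even: {n:,.0f} inferences ({n / 365:,.0f} per day over one year)")

# The cited 55 million threshold, expressed as a daily volume over one year:
print(f"{55_000_000 / 365:,.0f} per day")
```

With these made-up inputs the break-even lands at 80 million inferences, about 219,000 per day for a year. Dividing the cited 55 million by 365 gives roughly 150,700 per day, consistent with the ~151,000 daily figure from the research.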

Cost Estimation Frameworks for 2026

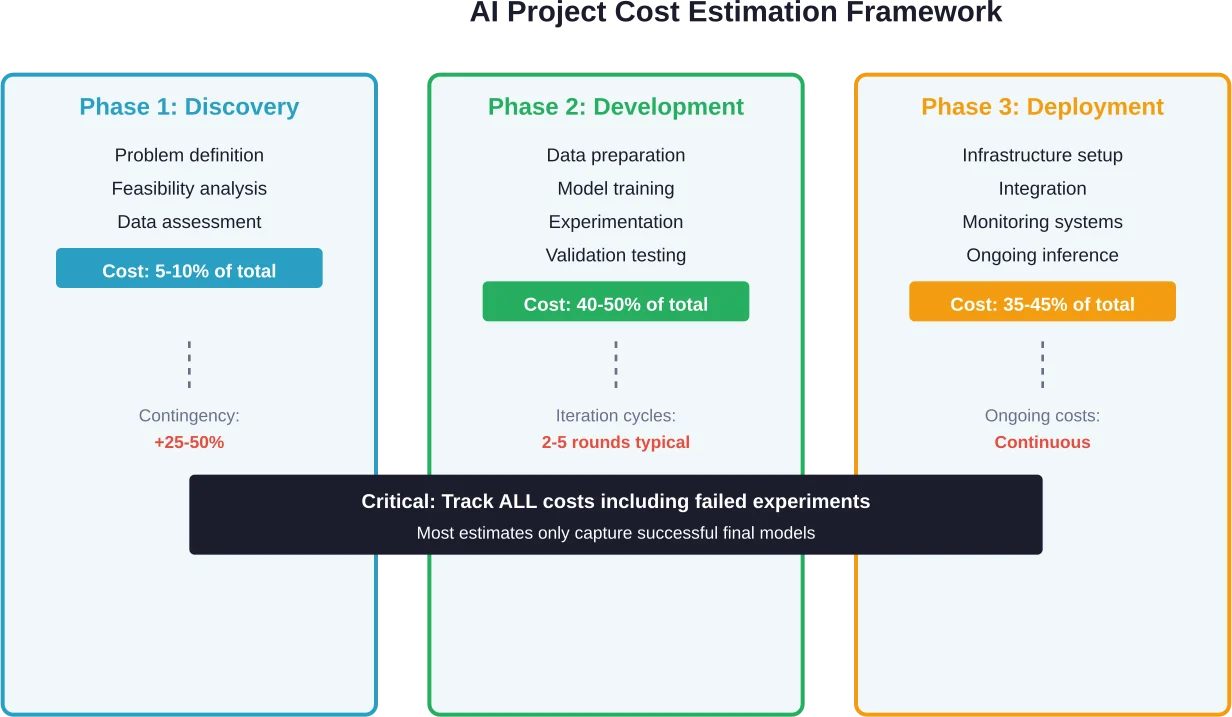

Traditional cost estimation approaches fail for AI projects because they treat development as a linear process. AI doesn’t work that way.

Effective cost estimation requires accounting for iteration, experimentation, and the inherent uncertainty in model performance. Several frameworks have emerged to address these challenges:

The Seven Principles of AI Cost Accounting

Research on AI cost and compute accounting proposes seven principles for accurate cost tracking. These principles address technical ambiguities that create loopholes undermining regulatory effectiveness and accurate budgeting.

The core insight? Narrow accounting can obscure total development costs. Organizations must track:

- All upstream model development (including parent models used for distillation)

- Full compute resources (not just final training runs)

- Data acquisition and processing costs

- Failed experiments and abandoned approaches

- Ongoing inference and serving costs

Most cost estimates focus only on the final successful model. That’s like estimating pharmaceutical R&D costs by looking only at approved drugs while ignoring the 90% that failed in trials.
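One way to keep the narrow and full views side by side is a ledger that tags each cost with whether a "final run only" estimate would include it. The Python sketch below is illustrative: the `CostEntry` fields, category names, and dollar figures are hypothetical and only show how quickly the excluded categories can outweigh the headline number.

```python
from dataclasses import dataclass


@dataclass
class CostEntry:
    category: str          # e.g. "final_training_run", "failed_experiment"
    amount_usd: float
    in_narrow_view: bool   # would a "final run only" estimate include it?


def summarize(ledger: list[CostEntry]) -> tuple[float, float]:
    """Return (narrow_total, full_total) for a cost ledger."""
    narrow = sum(e.amount_usd for e in ledger if e.in_narrow_view)
    full = sum(e.amount_usd for e in ledger)
    return narrow, full


# Hypothetical figures, chosen only to illustrate the gap.
ledger = [
    CostEntry("final_training_run", 100_000, True),
    CostEntry("parent_model_for_distillation", 250_000, False),
    CostEntry("exploratory_compute", 60_000, False),
    CostEntry("data_acquisition_and_processing", 80_000, False),
    CostEntry("failed_experiment", 120_000, False),
    CostEntry("first_year_inference", 90_000, False),
]
narrow, full = summarize(ledger)
print(f"Narrow: ${narrow:,.0f}  Full: ${full:,.0f}  ({full / narrow:.1f}x)")
```

Here the full picture is seven times the headline figure. That ratio is an artifact of the made-up numbers; the point is that every tracked category gets an explicit line instead of disappearing from the estimate.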

Project Type and Complexity Multipliers

Not all AI projects cost the same. Research on project cost prediction found that certain project types are particularly prone to cost overruns.

Solar and wind power projects consisting of many identical components tend to have accurate cost estimates. But projects where each implementation differs significantly—like IT projects, major events, or complex systems—frequently exceed budgets. The Sydney Opera House famously experienced massive cost overruns because each aspect was sufficiently unique that prior project data didn’t transfer well.

AI projects fall into this high-uncertainty category. Cost estimation must include substantial contingency buffers—typically 25-50% above initial estimates for novel AI applications.

Build a Structured AI Cost Model with AI Superior

AI project budgets often fail because companies underestimate data preparation, experimentation cycles, and infrastructure costs. AI Superior focuses on technical due diligence before implementation begins.

Their cost estimation process includes:

- Business goal clarification

- Feasibility and risk analysis

- Technical architecture definition

- Development and maintenance forecasting

If you need a grounded AI cost estimate instead of broad industry averages, request a structured assessment from AI Superior.

Industry-Specific Cost Variations

AI development costs vary dramatically across industries due to different data requirements, regulatory constraints, and risk tolerances.

Healthcare and Life Sciences

Healthcare AI projects typically cost 40-60% more than comparable projects in other sectors. Why? Regulatory compliance, data privacy requirements, and the extraordinarily high cost of errors.

Medical data acquisition alone can consume 35-40% of project budgets. Healthcare datasets require extensive annotation by domain experts—physicians reviewing imaging studies or clinical notes—at rates of $150-$400 per hour.
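Expert annotation costs are easy to estimate once you know how long each item takes. This Python sketch multiplies review time by the $150-$400 hourly range cited above; the dataset size (10,000 studies) and review time (3 minutes each) are hypothetical placeholders to replace with your own measurements.

```python
def annotation_cost(
    items: int,
    minutes_per_item: float,
    rate_low: float = 150.0,
    rate_high: float = 400.0,
) -> tuple[float, float, float]:
    """Return (expert_hours, low_cost_usd, high_cost_usd) for one labeling pass."""
    hours = items * minutes_per_item / 60
    return hours, hours * rate_low, hours * rate_high


# Hypothetical: 10,000 imaging studies, about 3 minutes of physician review each.
hours, low, high = annotation_cost(items=10_000, minutes_per_item=3)
print(f"{hours:,.0f} expert hours -> ${low:,.0f} to ${high:,.0f}")
```

That single pass works out to 500 expert hours, or $75,000 to $200,000, before any quality-control review or relabeling.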

Manufacturing and Product Design

AI-driven cost estimation applications in manufacturing represent an interesting use case: using AI to predict the costs of other products.

Three-dimensional product cost estimation systems bring cost insights earlier into engineering workflows. These applications integrate directly with CAD systems to estimate manufacturing costs during the design phase, enabling engineers to optimize for both performance and cost simultaneously.

Manufacturing AI projects benefit from relatively structured data and clear performance metrics. Development costs typically fall in the $75,000-$250,000 range for focused applications.

Financial Services

Financial AI deployments carry unique cost drivers around explainability and audit trails. Regulators require financial institutions to explain AI-driven decisions—particularly for credit, lending, and risk assessment applications.

Building interpretable models that satisfy regulatory requirements while maintaining competitive performance adds 20-35% to development costs compared to pure accuracy-focused approaches.

Economic Impact and Strategic Considerations

Beyond individual project costs, AI investment decisions have broader economic implications that organizations must consider.

Tools vs. Agents: Different Cost Profiles

Research from the RAND Corporation models the economic implications of two contrasting AI development scenarios: restricting AI to purely assistive tools versus enabling autonomous AI agents that can perform tasks independently and self-replicate.

These scenarios aren’t predictions—they’re boundaries for potential economic outcomes. But they matter for cost estimation because the tool vs. agent distinction fundamentally changes cost structures.

AI tools that augment human capabilities require ongoing human oversight and intervention. Costs scale partially with usage but maintain substantial human labor components.

AI agents capable of autonomous operation front-load development costs but potentially reduce ongoing operational expenses. However, they introduce new cost categories around monitoring, safety systems, and managing agent interactions.

National Statistics and Measurement Challenges

A January 2026 report from the Brookings Institution on integrating AI investment into US national statistics highlights a measurement problem: current economic statistics don't adequately capture AI investments and their impacts.

This creates challenges for organizational cost-benefit analysis. Standard ROI calculations assume reliable market comparisons and industry benchmarks. When statistical agencies can’t properly measure AI investments across the economy, individual organizations lack context for evaluating whether their costs are reasonable.

The report recommends new frameworks for counting AI as intangible capital investment—similar to how software and R&D are currently tracked in GDP statistics.

Optimizing AI Development Costs

Smart organizations don’t just estimate costs—they actively manage and reduce them through strategic choices.

Build vs. Buy Decisions

The most impactful cost decision happens before any code gets written: build custom models or use existing solutions?

Custom development makes financial sense when:

- Inference volumes exceed 50-100 million requests annually

- Proprietary data provides substantial competitive advantages

- Existing solutions don’t address specific requirements

- Models will be reused across multiple products or services

Below those thresholds, API-based solutions typically offer better economics despite per-request costs.

Progressive Model Complexity

Start simple. Seriously.

Many projects begin with overly complex model architectures that prove unnecessary. Starting with simpler approaches—traditional machine learning, rule-based systems, or smaller pre-trained models—establishes performance baselines at 10-20% of advanced model costs.

Add complexity only when simpler approaches fail to meet requirements. This progressive strategy typically reduces total project costs by 30-40% while accelerating time to production.
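The progressive strategy amounts to an escalation loop: evaluate the cheapest approach first and move up only if it misses the target. A minimal Python sketch, assuming each candidate has a known cost and an evaluation function that returns a validation score; the candidate names, costs, and scores are hypothetical.

```python
from typing import Callable, Optional

# (name, estimated cost in USD, evaluation function returning a validation score)
Candidate = tuple[str, float, Callable[[], float]]


def escalate(candidates: list[Candidate], target: float) -> tuple[Optional[str], float]:
    """Try approaches from cheapest to most expensive.

    Returns the first approach whose score meets `target` (or None if none
    does) together with the cumulative spend on every approach tried.
    """
    spent = 0.0
    for name, cost, evaluate in sorted(candidates, key=lambda c: c[1]):
        spent += cost
        if evaluate() >= target:
            return name, spent
    return None, spent


# Hypothetical candidates and scores, for illustration only.
candidates: list[Candidate] = [
    ("large_custom_model", 250_000, lambda: 0.90),
    ("rule_based_baseline", 10_000, lambda: 0.71),
    ("small_pretrained_model", 30_000, lambda: 0.86),
]
choice, spent = escalate(candidates, target=0.85)
print(f"Selected {choice} after spending ${spent:,.0f}")
```

Because candidates are sorted by cost, the expensive custom model is never built when a cheaper approach clears the bar. Evaluation itself costs money, which this sketch simply folds into each candidate's estimate.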

Hardware Lifecycle Optimization

Research on AI resource costs found that hardware lifespan assumptions dramatically affect total cost calculations. GPU requirements for training a GPT-4-scale model range from 1,174 to 8,800 A100s, depending on utilization efficiency (MFU) and hardware lifespan.

Organizations can optimize costs through:

- Combined software and hardware optimization strategies

- Spot instance usage for fault-tolerant training workloads

- Reserved capacity for predictable inference loads

- Multi-cloud strategies to leverage competitive pricing

Combined, these optimizations can reduce infrastructure costs by 40-60% compared to on-demand pricing.
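How a purchasing mix translates into savings is a weighted average. The Python sketch below applies per-option discounts to shares of an on-demand workload, starting from the EC2 line in the AWS table. The discount rates and workload mix are placeholder assumptions, not quoted prices; actual spot and reserved discounts vary by provider, region, instance type, and commitment term.

```python
def blended_infra_cost(
    on_demand_cost: float,
    mix: dict[str, float],
    discounts: dict[str, float],
) -> float:
    """Cost after moving shares of an on-demand workload to discounted capacity.

    mix:       fraction of the workload on each purchasing option (sums to 1).
    discounts: fractional discount versus on-demand for each option.
    """
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("workload shares must sum to 1")
    multiplier = sum(share * (1 - discounts[option]) for option, share in mix.items())
    return on_demand_cost * multiplier


ec2_monthly = 20_959.76  # on-demand EC2 line from the AWS table
# Placeholder assumptions: fault-tolerant training on spot, steady inference reserved.
mix = {"spot": 0.4, "reserved": 0.5, "on_demand": 0.1}
discounts = {"spot": 0.70, "reserved": 0.40, "on_demand": 0.0}

optimized = blended_infra_cost(ec2_monthly, mix, discounts)
print(f"${optimized:,.2f}/month, {1 - optimized / ec2_monthly:.0%} below on-demand")
```

With these placeholder numbers the blended multiplier is 0.52, a 48% reduction that falls inside the 40-60% range; a different mix or discount schedule moves the result accordingly.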

Common Cost Estimation Mistakes

Even experienced organizations make predictable errors in AI cost estimation.

Ignoring Data Costs

The Economics of AI Training Data research establishes that despite data’s central role in AI production, it remains the least understood input. As AI labs exhaust public data sources, proprietary data deals now reach hundreds of millions of dollars.

Organizations consistently underestimate data costs because they think in terms of storage rather than acquisition, curation, and maintenance. Data costs should typically represent 15-25% of total project budgets—not the 5-8% most initial estimates allocate.
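Raising the data line also raises the total, so hitting a target share takes a little algebra: if everything else costs O, a data budget D makes up a share s of the new total when D = s * O / (1 - s). A short Python sketch, using a hypothetical $500,000 estimate that allocated 6% to data:

```python
def data_budget_range(
    other_costs: float, low_share: float = 0.15, high_share: float = 0.25
) -> tuple[float, float]:
    """Data budget at which data makes up low_share..high_share of the new total.

    Solves D / (D + other_costs) = s for D, giving D = s * other_costs / (1 - s).
    """
    def solve(share: float) -> float:
        return share * other_costs / (1 - share)

    return solve(low_share), solve(high_share)


# Hypothetical initial estimate: $500,000 total with 6% ($30,000) allocated to data.
total, data_share = 500_000, 0.06
other = total * (1 - data_share)
low, high = data_budget_range(other)
print(f"Data budget: ${low:,.0f} to ${high:,.0f} (was ${total * data_share:,.0f})")
```

Holding the other $470,000 fixed, a 15-25% data share implies roughly $83,000 to $157,000 for data, about 2.8 to 5.2 times the original allocation.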

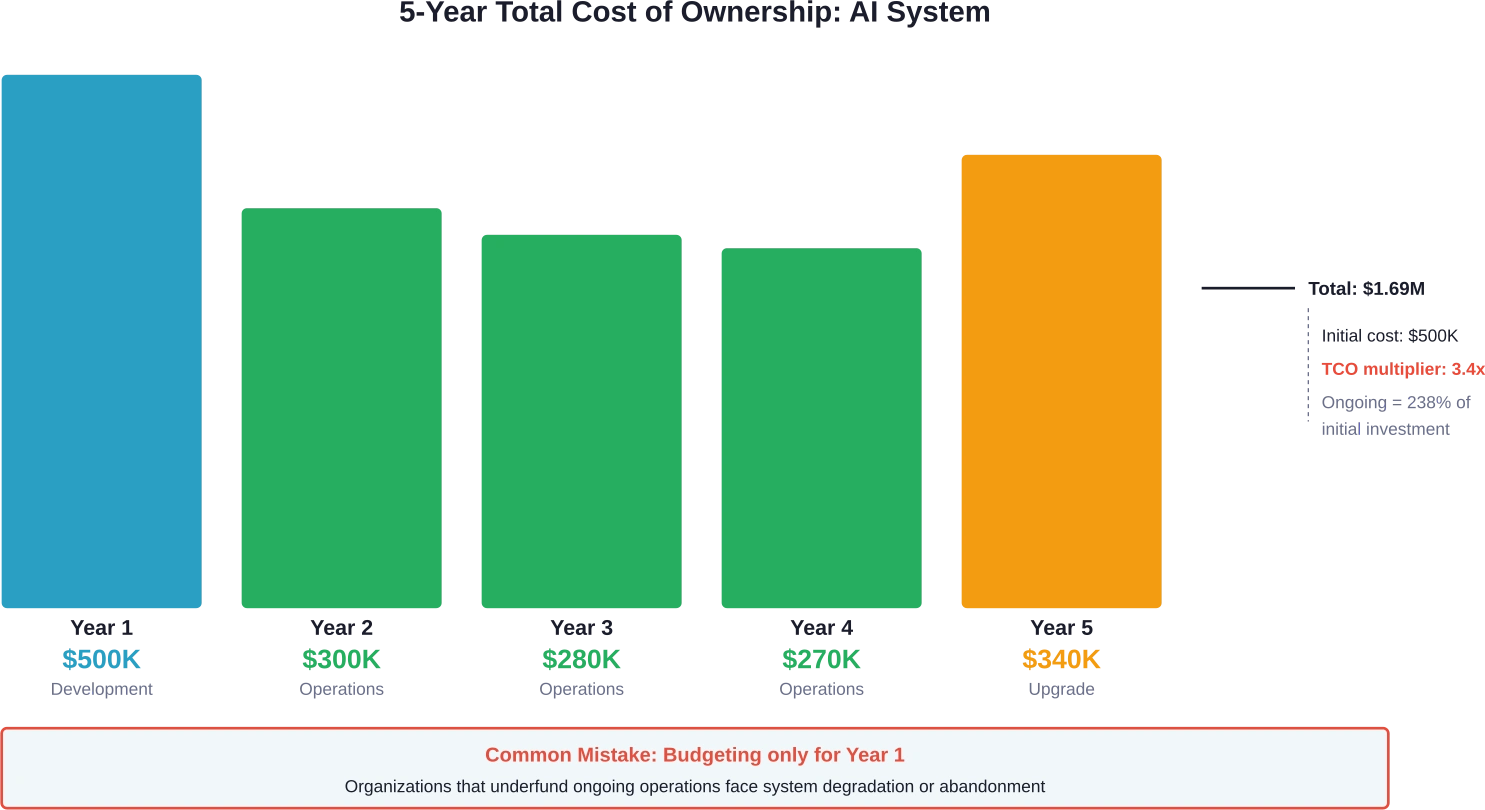

Underestimating Ongoing Costs

Initial development represents just the beginning. Successful AI systems require continuous investment in:

- Model monitoring and drift detection

- Periodic retraining with fresh data

- Infrastructure scaling as usage grows

- Security updates and vulnerability patches

- Performance optimization

Annual ongoing costs typically equal 40-60% of initial development expenses. Over five years, total cost of ownership runs 3-4x the initial project budget.
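The five-year multiple follows from simple arithmetic if ongoing costs are a flat fraction r of the initial budget in each of the five years: TCO = initial * (1 + 5r). A Python sketch, assuming no cost growth or inflation over the period:

```python
def tco_multiple(annual_ongoing_ratio: float, years: int = 5) -> float:
    """Total cost of ownership as a multiple of the initial budget.

    Assumes ongoing costs are a flat fraction of the initial budget in each year.
    """
    return 1 + annual_ongoing_ratio * years


for ratio in (0.40, 0.60):
    multiple = tco_multiple(ratio)
    print(f"r={ratio:.0%}: TCO = {multiple:.1f}x initial; initial build = {1 / multiple:.0%} of TCO")
```

At 40-60% per year this reproduces the 3-4x range, which also means the initial build is only about a quarter to a third of five-year spending.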

Treating AI Like Traditional Software

Traditional software development has relatively predictable costs. Requirements lead to design, design to implementation, implementation to testing, testing to deployment.

AI development doesn’t work that way. Models might not achieve target performance. Data quality issues emerge during training. Business requirements change as stakeholders understand what’s actually possible.

Cost estimates must include iteration budgets and explicit contingencies for experimentation. Fixed-price AI projects almost always exceed budgets or underdeliver on capabilities.

Risk Management and Cost Buffers

The National Institute of Standards and Technology’s AI Risk Management Framework emphasizes that trustworthy AI requires comprehensive risk mitigation—and mitigation costs money.

Quantifying Risk-Related Costs

Risk management represents an investment, not just an expense. Systems developed without adequate risk controls face:

- Potential regulatory fines and penalties

- Reputational damage from model failures

- Legal liability for biased or harmful outputs

- Costly emergency remediation

Allocating 10-15% of project budgets to risk management upfront prevents much larger costs down the road. Organizations that skip this step often spend 3-5x as much addressing problems reactively.

Building Appropriate Contingencies

Based on analysis of AI project outcomes across industries, appropriate contingency buffers are:

| Project Type | Contingency Buffer | Risk Factors |
|---|---|---|
| Well-defined problem, proven approach | 15-25% | Low technical uncertainty |
| Novel application, established methods | 25-40% | Moderate uncertainty |
| Research-stage problem | 50-100% | High technical risk |
| Unprecedented capability | 100%+ | Unknown unknowns |

Organizations uncomfortable with these contingencies should reconsider whether they’re ready for the project. Underfunded AI initiatives fail at much higher rates than properly resourced ones.
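The contingency table can be applied mechanically to a base estimate. A minimal Python sketch; the dictionary keys are shorthand for the table rows, the $300,000 base is hypothetical, and the open-ended "100%+" row is represented with no upper bound.

```python
import math

# Contingency buffers from the table, as (low, high) fractions of the base estimate.
CONTINGENCY = {
    "well_defined": (0.15, 0.25),
    "novel_application": (0.25, 0.40),
    "research_stage": (0.50, 1.00),
    "unprecedented": (1.00, math.inf),  # "100%+": no upper bound
}


def budget_range(base_estimate: float, project_type: str) -> tuple[float, float]:
    """Return (low, high) budgets including the contingency buffer."""
    low, high = CONTINGENCY[project_type]
    return base_estimate * (1 + low), base_estimate * (1 + high)


for kind in CONTINGENCY:
    low, high = budget_range(300_000, kind)  # hypothetical base estimate
    upper = "no upper bound" if math.isinf(high) else f"${high:,.0f}"
    print(f"{kind:>17}: ${low:,.0f} to {upper}")
```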

Future Trends Affecting AI Costs

Cost dynamics are shifting rapidly. Several trends will reshape AI economics over the next 2-3 years.

Capability Doubling and Price Pressure

According to a July 2, 2025 IEEE Spectrum article, LLM benchmarking shows capabilities doubling approximately every seven months. This exponential improvement creates interesting cost dynamics.

On one hand, newer models deliver better performance at similar price points—effectively reducing the cost per unit of capability. On the other, the rapid pace means models become outdated quickly, accelerating replacement cycles and increasing total lifecycle costs.
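The doubling claim compounds quickly. This Python sketch converts a seven-month doubling period into a capability multiplier and, under the simplifying assumption that price per call stays flat while capability improves, the matching drop in cost per unit of capability. Treating "capability" as a single number is itself a simplification of what benchmarks measure.

```python
DOUBLING_MONTHS = 7  # doubling period reported for LLM benchmark capability


def capability_multiplier(months: float, doubling_months: float = DOUBLING_MONTHS) -> float:
    """Capability relative to today after `months`, assuming steady doubling."""
    return 2 ** (months / doubling_months)


for months in (7, 12, 24, 36):
    m = capability_multiplier(months)
    # Assumption: price per call stays flat, so cost per unit of capability scales as 1/m.
    print(f"{months:>2} months: {m:5.1f}x capability, {1 / m:.0%} relative cost per unit")
```

Two years at this pace is roughly a tenfold gain, which is why a model chosen today can look expensive per unit of capability well before it is formally replaced.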

Regulatory Compliance Costs

Brookings Institution research and academic analysis point to growing regulatory scrutiny worldwide, with policymakers increasingly using development cost and compute as proxies for AI capabilities and risks. Such regulations attach requirements to specific cost or compute thresholds.

This regulatory evolution will add new cost categories:

- Mandatory impact assessments

- Third-party audits and certifications

- Enhanced documentation and reporting

- Ongoing compliance monitoring

Organizations should budget an additional 8-12% for compliance-related activities as regulations mature.
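Risk management (10-15%) and compliance (8-12%) allocations both stack on top of the base estimate. A Python sketch that rolls them up, assuming both percentages are applied additively to the same base; compounding them instead would give a slightly higher figure. The $400,000 base is hypothetical.

```python
RISK_SHARE = (0.10, 0.15)        # risk management allocation (low, high)
COMPLIANCE_SHARE = (0.08, 0.12)  # compliance allocation (low, high)


def roll_up(base: float) -> tuple[float, float]:
    """Base estimate plus risk management and compliance allocations.

    Assumption: both percentages are applied additively to the same base.
    """
    low = base * (1 + RISK_SHARE[0] + COMPLIANCE_SHARE[0])
    high = base * (1 + RISK_SHARE[1] + COMPLIANCE_SHARE[1])
    return low, high


low, high = roll_up(400_000)  # hypothetical base estimate
print(f"${low:,.0f} to ${high:,.0f} before any contingency buffer")
```

On a $400,000 base this gives $472,000 to $508,000; contingency buffers would then be applied on top.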

Frequently Asked Questions

How much does it cost to develop a basic AI solution in 2026?

Basic AI solutions using pre-trained models and standard cloud infrastructure typically cost $50,000-$150,000 for initial development. This includes data preparation, model fine-tuning, integration, and basic deployment. However, ongoing operational costs add $15,000-$50,000 annually depending on usage volume and infrastructure requirements.

What percentage of AI project costs go toward infrastructure vs. development?

Infrastructure and compute typically represent 35-45% of total project costs for AI systems. Model development accounts for 30-40%, data acquisition 15-25%, and risk management 10-15%. However, these ratios shift dramatically based on whether organizations build custom models or use API-based services.

How do inference costs compare to training costs?

For high-volume applications, inference costs frequently exceed training expenses over the system’s lifetime. Research shows that custom model training only becomes cost-effective beyond approximately 55 million inferences—equivalent to processing 151,000 items daily for one year. Below that threshold, API-based solutions typically offer better economics despite per-request charges.

What hidden costs do organizations most often overlook?

The most commonly overlooked costs include ongoing model retraining (20-40% of initial development costs annually), data quality maintenance, cybersecurity measures, regulatory compliance documentation, and technical debt management. Organizations also frequently underestimate the cost of failed experiments—which can represent 40-60% of total R&D expenses but rarely appear in project estimates.

How should organizations budget for AI projects with uncertain outcomes?

AI projects require substantial contingency buffers due to inherent uncertainty. Well-defined problems need 15-25% contingencies, novel applications 25-40%, and research-stage problems 50-100% or more. Organizations should also use staged funding approaches, releasing resources incrementally as projects demonstrate progress rather than committing full budgets upfront.

What factors most significantly impact AI development costs?

Model complexity typically accounts for 30-40% of total project costs. Other major factors include data quality and availability, regulatory requirements (especially in healthcare and finance), required inference volumes, and whether organizations build custom models versus using existing solutions. Industry-specific requirements can increase costs 40-60% in highly regulated sectors.

How can organizations reduce AI development costs without sacrificing quality?

Start with simpler models and add complexity only when necessary—this typically reduces costs 30-40%. Use spot instances and reserved capacity to optimize infrastructure spending (40-60% savings). Prioritize build-vs-buy decisions carefully, recognizing that custom development only makes economic sense at high inference volumes. Finally, invest in proper cost tracking and allocation from day one to identify optimization opportunities.

Conclusion: Building Realistic AI Budgets

Accurate AI cost estimation requires looking far beyond initial model development expenses. Infrastructure, data acquisition, ongoing operations, risk management, and regulatory compliance all represent substantial cost centers that organizations frequently underestimate or ignore entirely.

The most dangerous approach? Treating AI development like traditional software projects with fixed requirements and predictable timelines. AI systems require iteration, experimentation, and explicit budgets for uncertainty.

Successful organizations build comprehensive frameworks that track all cost categories, including failed experiments and hidden expenses. They establish realistic contingency buffers based on project uncertainty levels. And they recognize that initial development represents only about a quarter to a third of five-year total cost of ownership.

The good news? Organizations that invest in accurate cost estimation upfront make better build-vs-buy decisions, allocate resources more effectively, and deliver AI systems that actually create value rather than consuming budgets without returns.

Ready to develop a realistic AI budget for your organization? Start by mapping all cost categories outlined in this framework, establishing baseline estimates for your specific use case, and building in appropriate contingencies for technical uncertainty. The investment in thorough cost planning pays dividends throughout the project lifecycle.