Quick Summary: Artificial intelligence cost analytics platforms provide organizations with real-time visibility into AI spending, tracking everything from token usage and model training expenses to GPU consumption across cloud providers. These specialized tools combine granular cost attribution, automated anomaly detection, and predictive forecasting to help businesses optimize their AI budgets and prevent cost overruns before they happen.

AI applications can drain budgets faster than most finance teams realize. Without proper oversight, costs spiral out of control—GPU hours accumulate, API calls multiply, and model training runs longer than expected.

Traditional cloud cost management approaches fall short when dealing with AI workloads. They can’t track token usage, allocate spending across teams, or provide the granular insights needed to optimize model training expenses.

That’s where artificial intelligence cost analytics platforms come in. These specialized tools transform how organizations understand and manage their AI spending, delivering visibility that wasn’t possible just a few years ago.

What Is an AI Cost Analytics Platform

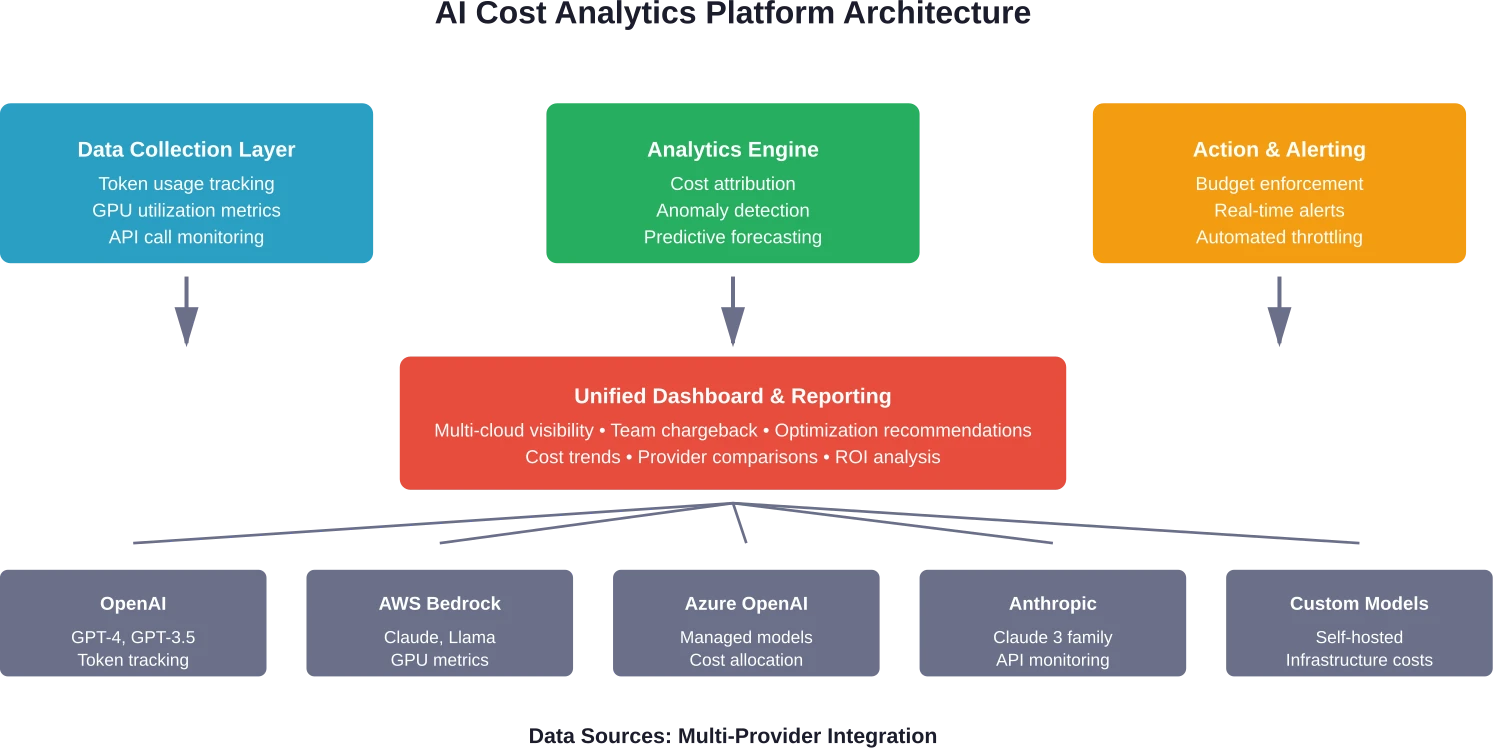

An AI cost analytics platform is purpose-built software that tracks, analyzes, and optimizes spending related to artificial intelligence workloads. Unlike generic cloud cost tools, these platforms understand the unique economics of AI operations.

They monitor specific AI cost drivers: token consumption in language models, GPU utilization during training, inference requests across different providers, and compute resources allocated to various models. The platform aggregates this data and translates it into actionable insights.

Think of it as financial observability for AI infrastructure. Every dollar spent gets attributed to a specific team, project, model, or agent. Cost anomalies get flagged immediately. Budget thresholds trigger alerts before overruns occur.

The value proposition is straightforward. AI applications are resource-intensive, and without proper tracking, organizations waste significant money on inefficient configurations, over-provisioned resources, and poorly optimized models.

Why Traditional Cost Management Falls Short for AI

Cloud cost management tools weren’t designed for AI workloads. They track virtual machines and storage buckets just fine, but AI operations introduce complexities these systems can’t handle.

Token-based pricing from providers like OpenAI or Anthropic doesn’t fit neatly into traditional billing categories. How should costs be allocated when a single application routes requests to several models, each with its own input and output token prices?

GPU utilization presents another challenge. Traditional tools show that GPUs are running, but they don’t reveal whether those resources are being used efficiently. A model might consume expensive GPU hours while producing minimal results—and standard monitoring won’t catch that disconnect.

Model training costs fluctuate wildly based on parameters that generic tools don’t track. Batch size, learning rate adjustments, and hyperparameter tuning all impact expenses, but these variables remain invisible without AI-specific analytics.

Multi-cloud AI deployments compound these problems. Organizations might use Amazon Bedrock for some models, Azure OpenAI for others, and direct API access to multiple providers. Unified visibility across these platforms requires specialized infrastructure.

Core Capabilities of Modern AI Cost Analytics Platforms

The best platforms share several essential capabilities that separate them from basic monitoring tools. These features address the specific challenges of managing AI spending.

Deep Cost Attribution at Every Level

Granular attribution tracks where every dollar goes. Platforms break down costs by team, project, model, individual agent, or even specific API endpoints.

This visibility enables chargeback models where business units pay for their actual AI consumption. Finance teams can finally answer questions like “How much did the customer service chatbot cost last quarter?” or “Which research team is driving our GPU expenses?”

Attribution extends to individual sessions or conversations. Organizations can calculate the exact cost of serving a single customer interaction, making it possible to optimize for profitability at a granular level.
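As a rough illustration of multi-level attribution, the sketch below groups usage records by whichever tags are requested. The record fields (`team`, `model`, `session`, `cost_usd`) and the `attribute` helper are hypothetical, not the schema of any particular platform.

```python
from collections import defaultdict

# Hypothetical usage records; field names are illustrative assumptions.
records = [
    {"team": "support", "model": "model-a", "session": "s1", "cost_usd": 0.012},
    {"team": "support", "model": "model-a", "session": "s1", "cost_usd": 0.008},
    {"team": "support", "model": "model-b", "session": "s2", "cost_usd": 0.030},
    {"team": "research", "model": "model-b", "session": "s3", "cost_usd": 0.250},
]


def attribute(records, *dimensions):
    """Sum cost_usd grouped by the given tag dimensions."""
    totals = defaultdict(float)
    for record in records:
        key = tuple(record[d] for d in dimensions)
        totals[key] += record["cost_usd"]
    return dict(totals)


by_team = attribute(records, "team")
by_session = attribute(records, "team", "session")
```

The same records answer both the team-level chargeback question and the per-session question; only the grouping key changes.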

Real-Time Tracking and Anomaly Detection

Real-time monitoring catches cost spikes as they happen, not days later when the bill arrives. Platforms track token usage, model invocations, and compute consumption continuously.

Automated anomaly detection uses baseline patterns to identify unusual spending. If a particular model suddenly consumes 10x its normal token budget, alerts fire immediately. Teams can investigate before minor issues become major expenses.
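One simple way to build such a baseline is a z-score check against recent history. This is a minimal sketch under that assumption; real platforms may use more sophisticated models, and the function name, threshold, and sample data here are made up for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history, current, threshold=3.0):
    """Return True if `current` sits more than `threshold` standard
    deviations above the mean of `history`."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return current > mu  # flat baseline: any increase is unusual
    return (current - mu) / sigma > threshold


# Hypothetical hourly token counts for one model.
hourly_tokens = [10_200, 9_800, 10_500, 9_900, 10_100, 10_300]

spike = is_anomalous(hourly_tokens, 101_000)   # roughly 10x normal usage
normal = is_anomalous(hourly_tokens, 10_400)   # within usual variation
```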

This capability proves especially valuable for organizations running multiple AI agents or experimenting with different models. Development teams sometimes leave expensive processes running inadvertently—real-time tracking catches these mistakes quickly.

Automated Budget Controls and Enforcement

Budget thresholds prevent cost overruns before they occur. Teams set spending limits at various levels—per project, per team, per model, or per time period.

When spending approaches these limits, the platform can take automated actions. It might send escalating alerts, throttle request rates, or even pause specific workloads until a budget reset occurs.
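The escalation described above can be sketched as a small policy function. The action names and the 80% and 95% cut-offs are illustrative assumptions; an actual platform would make these configurable per project or team.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    ALERT = "alert"
    THROTTLE = "throttle"
    PAUSE = "pause"


def budget_action(spent_usd, limit_usd, alert_at=0.8, throttle_at=0.95):
    """Map current spend against a budget limit to an escalating action."""
    if limit_usd <= 0:
        raise ValueError("limit_usd must be positive")
    ratio = spent_usd / limit_usd
    if ratio >= 1.0:
        return Action.PAUSE      # budget exhausted: stop until reset
    if ratio >= throttle_at:
        return Action.THROTTLE   # close to the limit: slow requests down
    if ratio >= alert_at:
        return Action.ALERT      # approaching the limit: notify owners
    return Action.ALLOW
```

For a critical production workload, a team might choose never to return `PAUSE` and rely on alerts and throttling instead, which is the kind of per-use-case policy the next paragraph describes.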

These controls balance cost management with operational needs. Teams don’t want AI applications to fail unexpectedly, but they also can’t allow unlimited spending. Configurable policies provide the right balance for each use case.

Multi-Cloud and Multi-Provider Support

Organizations rarely use a single AI provider. Modern platforms aggregate costs across OpenAI, Anthropic, Amazon Bedrock, Azure OpenAI, Google Cloud AI, and other services.

Unified dashboards provide a single source of truth for AI spending regardless of where workloads run. Teams can compare costs across providers and identify opportunities to optimize by shifting workloads to more cost-effective options.

This capability matters increasingly as organizations adopt multi-cloud strategies to avoid vendor lock-in and leverage different providers’ strengths for specific use cases.

Predictive Forecasting and Trend Analysis

Historical data powers predictive models that forecast future spending. Platforms analyze usage patterns to project costs weeks or months ahead.

These forecasts help finance teams with budgeting and resource planning. They can anticipate how scaling AI applications will impact costs and make informed decisions about capacity expansion.

Trend analysis reveals how spending patterns change over time. Organizations can track whether optimization efforts are working or if certain projects are consuming an increasing share of resources.
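The simplest form of such a forecast is a straight-line trend fitted to past spending. The sketch below uses ordinary least squares on evenly spaced periods; it is an assumption-laden baseline rather than how any specific platform forecasts, and the spending figures are invented.

```python
def linear_forecast(history, periods_ahead):
    """Fit a least-squares line to `history` (one value per period)
    and extrapolate it `periods_ahead` periods into the future."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two data points")
    x_mean = (n - 1) / 2
    y_mean = sum(history) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(history))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return [intercept + slope * (n - 1 + k) for k in range(1, periods_ahead + 1)]


# Hypothetical monthly AI spend in USD.
monthly_spend = [1000, 1100, 1200, 1300]
next_two_months = linear_forecast(monthly_spend, 2)
```

A linear fit ignores seasonality and step changes such as a new product launch, which is why more capable platforms layer additional factors on top of a trend line.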

Economic Frameworks for Evaluating AI Costs

Understanding AI economics requires more than tracking expenses. Organizations need frameworks that contextualize costs within broader business objectives.

A preprint published on arXiv introduced the concept of Levelized Cost of AI (LCOAI), a standardized metric for evaluating AI deployment costs. This approach parallels how energy industries assess power generation economics: it calculates the total lifecycle cost per unit of useful output.

For AI applications, useful output might mean successful customer interactions, accurate predictions, or completed tasks. The framework accounts for infrastructure, model training, inference costs, and operational overhead, then divides that total by the amount of useful output delivered.
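A simplified reading of the levelized-cost idea can be sketched in a few lines. The function name, the four cost parameters, and the figures are illustrative assumptions based on the description above, not the formula exactly as published.

```python
def levelized_cost(infrastructure_usd, training_usd, inference_usd,
                   overhead_usd, useful_outputs):
    """Total lifecycle cost divided by the number of useful outputs."""
    if useful_outputs <= 0:
        raise ValueError("useful_outputs must be positive")
    total = infrastructure_usd + training_usd + inference_usd + overhead_usd
    return total / useful_outputs


# Hypothetical deployment: $100,000 lifecycle cost, 2 million resolved requests.
cost_per_output = levelized_cost(50_000, 20_000, 25_000, 5_000, 2_000_000)
```

With these invented numbers, each useful output costs $0.05, a figure that can be compared across deployments in a way raw monthly spend cannot.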

Another economic framework focuses on Cost-of-Pass metrics for language models. This approach evaluates models based on the cost required to achieve a successful outcome rather than just the cost per token or API call.

Different models have different accuracy rates. A cheaper model that requires multiple attempts to produce acceptable results might cost more than a premium model that succeeds on the first try. Cost-of-Pass calculations capture this nuance.
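A minimal sketch of that reasoning, assuming each attempt succeeds independently with a fixed probability, so the expected number of attempts is 1 divided by the success rate. The per-attempt prices and success rates are made up for illustration.

```python
def cost_of_pass(cost_per_attempt_usd, success_rate):
    """Expected cost to obtain one successful result, assuming
    independent retries until success."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt_usd / success_rate


# Hypothetical models: the cheap one fails three times out of four.
cheap_model = cost_of_pass(0.002, 0.25)
premium_model = cost_of_pass(0.006, 0.95)
```

Here the cheaper model costs about $0.008 per success while the premium model costs roughly $0.0063, despite being three times more expensive per attempt.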

These frameworks help organizations make better decisions about model selection, provider choice, and optimization priorities. They shift the conversation from “What’s our AI spend?” to “What’s our AI return on investment?”

Build AI Monitoring and Analytics Tools

As AI systems scale, organizations need visibility into performance, infrastructure usage, and operational costs.

AI Superior develops AI platforms and analytics tools that help companies monitor and manage AI workloads.

Typical platform components include:

- model performance monitoring

- infrastructure usage tracking

- operational analytics dashboards

- AI system management tools

These systems help organizations operate AI solutions reliably at scale.

Key Metrics Tracked by AI Cost Analytics Platforms

Effective platforms monitor dozens of metrics, but several stand out as essential for understanding AI economics. These measurements provide the foundation for optimization decisions.

Token Consumption and Pricing Efficiency

For language models, token usage drives the majority of costs. Platforms track tokens consumed per request, per session, per user, and per application.

They also calculate effective token prices across different providers and models. This enables apples-to-apples comparisons even when providers use different pricing structures.
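Because many providers price input and output tokens separately, one way to compare them is a blended price for a given workload mix. The sketch below assumes per-million-token pricing; the prices and token counts are hypothetical, not quotes from any provider.

```python
def request_cost(input_tokens, output_tokens,
                 input_price_per_m, output_price_per_m):
    """Cost in USD of one request, given prices per million tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


def effective_price_per_m(input_tokens, output_tokens,
                          input_price_per_m, output_price_per_m):
    """Blended USD price per million tokens for this input/output mix."""
    total_tokens = input_tokens + output_tokens
    if total_tokens <= 0:
        raise ValueError("request must contain at least one token")
    cost = request_cost(input_tokens, output_tokens,
                        input_price_per_m, output_price_per_m)
    return cost / total_tokens * 1_000_000


# Hypothetical request: 800 input tokens, 200 output tokens,
# priced at $3 (input) and $15 (output) per million tokens.
cost = request_cost(800, 200, 3.0, 15.0)
blended = effective_price_per_m(800, 200, 3.0, 15.0)
```

The blended figure depends on the input-to-output ratio, so two applications on the same model can see quite different effective prices.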

Token efficiency metrics reveal opportunities for optimization. Applications using verbose prompts or generating unnecessarily long responses waste money on every interaction.

GPU Utilization and Compute Efficiency

Training custom models requires significant GPU resources. Platforms monitor GPU utilization rates, idle time, and cost per training hour.

Low utilization indicates inefficient resource allocation. Organizations might be provisioning expensive GPUs that sit idle for large portions of time, or poorly optimized training jobs might fail to saturate available compute capacity.
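To put a number on that waste, idle capacity can be priced directly. This sketch assumes a flat hourly rate and a single average utilization figure; the function names and values are illustrative.

```python
def idle_cost(hours_provisioned, avg_utilization, hourly_rate_usd):
    """USD spent on GPU capacity that was provisioned but not used."""
    if not 0 <= avg_utilization <= 1:
        raise ValueError("avg_utilization must be between 0 and 1")
    return hours_provisioned * (1 - avg_utilization) * hourly_rate_usd


def cost_per_utilized_hour(avg_utilization, hourly_rate_usd):
    """Effective USD price of each hour of GPU work actually done."""
    if not 0 < avg_utilization <= 1:
        raise ValueError("avg_utilization must be in (0, 1]")
    return hourly_rate_usd / avg_utilization


# Hypothetical GPU: provisioned for a 720-hour month at $2.50/hour,
# averaging 35% utilization.
wasted = idle_cost(720, 0.35, 2.50)
effective_rate = cost_per_utilized_hour(0.35, 2.50)
```

In this made-up case, $1,170 of an $1,800 monthly bill pays for idle time, and each hour of real work effectively costs about $7.14.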

Cost per training run metrics help teams understand whether model improvements justify their expenses. A 2% accuracy gain might not warrant a 50% increase in training costs.

Inference Costs and Latency Trade-offs

Inference—running trained models to generate predictions—creates ongoing operational costs. Platforms track inference volume, cost per prediction, and the relationship between latency requirements and expenses.

Faster inference typically costs more. Organizations need visibility into whether their latency requirements justify premium pricing or if slightly slower (but cheaper) alternatives would meet user needs.

Batch inference costs significantly less than real-time predictions for many use cases. Platforms help identify opportunities to shift workloads from expensive real-time APIs to more economical batch processing.

Provider Cost Comparison Metrics

With multiple AI providers in the market, organizations need clear comparisons. Platforms benchmark equivalent workloads across OpenAI, Anthropic, AWS, Azure, and other providers.

These comparisons account for quality differences. The cheapest option isn’t always the best value if it produces inferior results. Platforms measure cost-per-quality-unit rather than just raw price.

According to analysis from Artificial Analysis, intelligence versus cost metrics reveal significant variations across providers. Models with similar capability levels can have dramatically different price points.

Real-World Impact: Cost Optimization in Practice

Theory matters less than results. Some organizations implementing AI cost analytics platforms report cost reductions.

Community discussions highlight cases where manufacturing companies reduced operational costs by identifying peak-hour energy consumption patterns in their AI workloads. By scheduling training jobs during off-peak hours, they cut GPU expenses significantly without impacting performance.

Development teams discover that many production applications use overpowered models for simple tasks. A customer service chatbot might use GPT-4 for routine questions that GPT-3.5 could handle at a fraction of the cost. Platforms make these inefficiencies visible.

Anomaly detection catches runaway costs before they become serious problems. A misconfigured API integration might trigger thousands of unnecessary model calls—without real-time monitoring, this waste continues until the monthly bill arrives.

Budget controls prevent experimentation from spiraling into financial disasters. Research teams can explore new models and approaches within defined spending limits, knowing the platform will prevent accidental overruns.

Choosing the Right AI Cost Analytics Platform

Not all platforms offer the same capabilities or serve the same use cases. Organizations need to evaluate options based on their specific requirements and existing infrastructure.

Integration with Existing Infrastructure

The platform must connect seamlessly with current AI workflows. Deep integrations with major cloud providers, popular model hosting services, and common development frameworks reduce implementation friction.

API compatibility matters for custom applications. Teams building proprietary AI systems need platforms that can ingest data from non-standard sources without extensive custom development.

Authentication and security requirements vary by organization. Enterprise-grade platforms support single sign-on, role-based access controls, and compliance with regulatory frameworks like SOC 2 or GDPR.

Granularity of Cost Attribution

How detailed does cost tracking need to be? Some organizations require attribution down to individual API calls or specific user sessions. Others need only project-level visibility.

More granular attribution typically requires more instrumentation. Teams must decide whether the additional implementation effort justifies the extra visibility.

Multi-tenant applications introduce additional complexity. Platforms must track costs across different customers or business units while maintaining data isolation and privacy.

Scalability and Performance

AI workloads scale rapidly. Platforms must handle increasing data volumes without performance degradation or disproportionate cost increases.

Real-time processing requirements grow with workload size. A platform that handles thousands of daily API calls might struggle with millions—organizations should verify scalability before committing.

Data retention policies impact long-term costs. Platforms that store detailed metrics indefinitely become expensive over time. Clear retention options help manage storage expenses.

Alerting and Automation Capabilities

Alerting sophistication varies widely. Basic platforms send emails when spending exceeds thresholds. Advanced systems integrate with incident management tools, support complex multi-condition alerts, and enable automated remediation workflows.

Customizable alert logic prevents notification fatigue. Teams need the ability to define precisely when and how they’re notified about cost issues.

Automated responses to cost anomalies save money and reduce manual toil. Platforms that can automatically scale down resources, throttle requests, or switch to cheaper providers provide significant operational advantages.

| Capability | Basic Platforms | Advanced Platforms | Enterprise Platforms |
|---|---|---|---|
| Cost Attribution | Project-level tracking | Team and model-level | Per-session granularity |
| Provider Support | 1-2 major providers | 5+ providers | Unlimited via API |
| Real-Time Monitoring | Hourly updates | Minute-level data | Sub-second streaming |
| Anomaly Detection | Static thresholds | Machine learning-based | Contextual AI models |
| Budget Controls | Manual alerts | Automated throttling | Policy-driven orchestration |
| Forecasting | Simple trend lines | Multi-factor predictions | Scenario modeling |
| Integration Options | Basic APIs | Webhooks and SDKs | Custom connectors |
| Pricing | Free or low-cost | Usage-based tiers | Custom enterprise contracts |

Implementation Best Practices

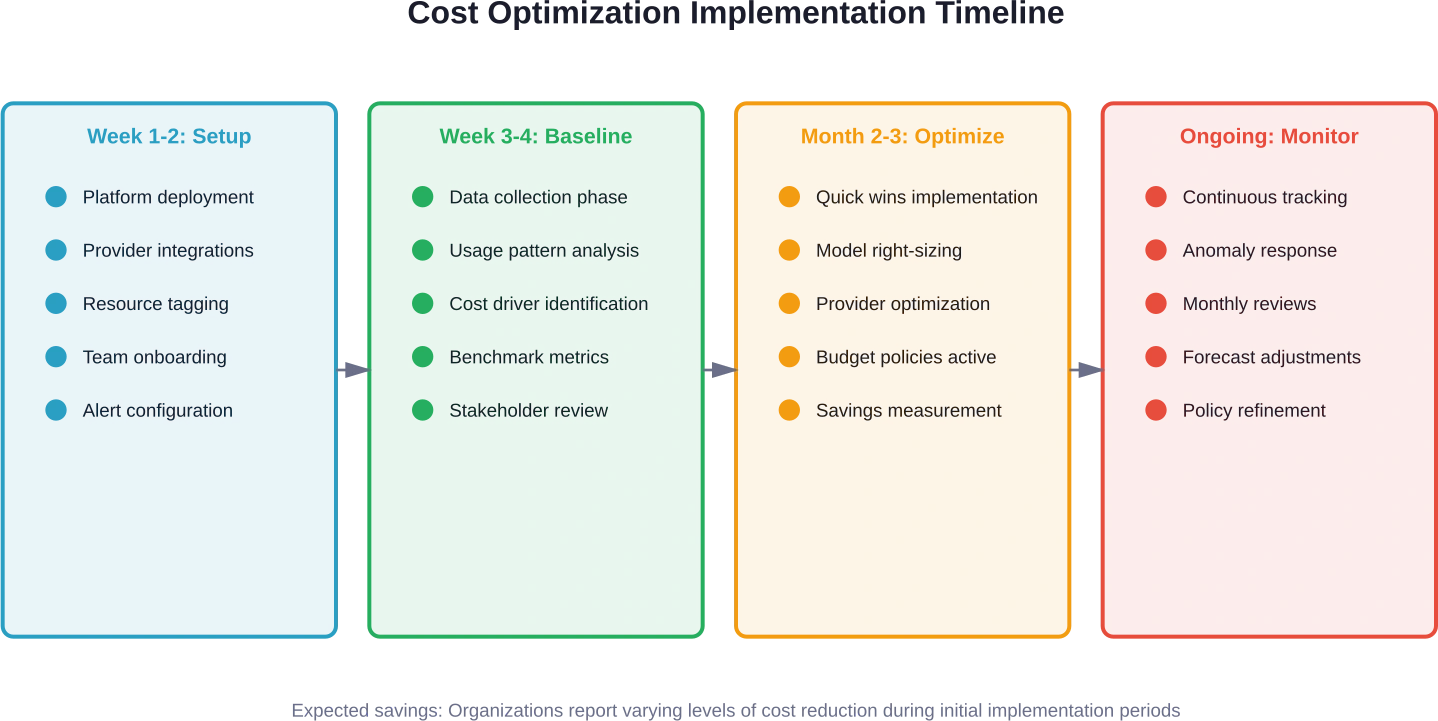

Deploying an AI cost analytics platform requires planning. Organizations that rush implementation often struggle with data quality issues and incomplete visibility.

Start with Comprehensive Instrumentation

Incomplete data leads to incomplete insights. Teams should instrument all AI workloads from the beginning rather than adding tracking incrementally.

This means integrating with every provider, tagging all resources appropriately, and ensuring consistent metadata across different systems. The upfront effort pays dividends when analysis reveals cost drivers across the entire AI portfolio.

Consistent tagging schemes enable meaningful aggregation. Organizations should establish naming conventions for projects, teams, environments, and models before implementation begins.

Define Clear Cost Allocation Models

How should shared resources be allocated? Central AI infrastructure might support multiple business units—organizations need transparent methodologies for distributing costs.

Common approaches include proportional allocation based on usage, dedicated resource pools for each team, or chargeback models where internal customers pay for actual consumption.
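Proportional allocation, the first approach listed, can be sketched as follows. The usage metric (GPU hours here), team names, and cost figure are hypothetical; any consistent usage measure would work the same way.

```python
def allocate_shared_cost(shared_cost_usd, usage_by_team):
    """Split a shared cost across teams in proportion to their usage."""
    total_usage = sum(usage_by_team.values())
    if total_usage <= 0:
        raise ValueError("total usage must be positive")
    return {
        team: shared_cost_usd * usage / total_usage
        for team, usage in usage_by_team.items()
    }


# Hypothetical month: $10,000 of shared infrastructure, usage in GPU hours.
gpu_hours = {"search": 600, "support": 300, "research": 100}
allocation = allocate_shared_cost(10_000, gpu_hours)
```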

Whatever model organizations choose, clarity matters more than perfection. Teams need to understand how their actions impact costs and how expenses are attributed to their budgets.

Establish Baseline Metrics Before Optimization

Optimization efforts need reference points. Before making changes, document current spending patterns, utilization rates, and cost per business outcome.

These baselines enable measuring improvement. Without them, teams can’t prove that optimization efforts delivered value or quantify returns on investment in cost management tools.

Baseline data also helps set realistic targets. Organizations might discover that their costs are already well-optimized, or they might uncover opportunities larger than initially expected.

Create Feedback Loops Between Finance and Engineering

Cost optimization requires collaboration between teams with different expertise. Finance teams understand budgets and spending patterns but lack technical knowledge about AI systems. Engineering teams know how systems work but often lack visibility into financial impacts.

Regular cost review meetings bring these perspectives together. Engineers learn which workloads drive expenses. Finance teams understand technical constraints that limit optimization options.

Shared dashboards and reports ensure everyone works from the same data. When cost anomalies occur, both teams can investigate quickly rather than waiting for end-of-month billing cycles.

Emerging Trends in AI Cost Management

The AI cost analytics space continues to evolve rapidly. Several emerging trends will shape how organizations manage AI spending in coming years.

AI-Powered Cost Optimization

Platforms increasingly use AI to optimize AI costs—meta-optimization, if you will. Machine learning models analyze historical usage patterns and automatically suggest configuration changes.

These systems might recommend switching to different models based on accuracy requirements, adjusting batch sizes for training jobs, or shifting workloads between providers based on real-time pricing.

The goal is moving from visibility to autonomous optimization. Rather than just showing teams where money is spent, platforms will automatically implement cost-saving measures within defined policies.

Standardized Cost Metrics Across the Industry

As mentioned earlier, research into frameworks like LCOAI aims to standardize how organizations evaluate AI costs. Industry adoption of common metrics would enable better benchmarking and decision-making.

The U.S. National Science Foundation has invested in AI research since the 1960s, and contemporary research directions include making AI systems more economically efficient and accessible. Standardized metrics support these goals by creating common language around AI economics.

Organizations could compare their costs against industry benchmarks, identify whether they’re paying premium or discount rates, and make data-driven decisions about provider selection.

Integration with FinOps Practices

FinOps—the practice of bringing financial accountability to cloud spending—is expanding to encompass AI workloads. Organizations are incorporating AI cost management into broader cloud financial operations.

This integration creates unified visibility across infrastructure, applications, and AI. Finance teams get a complete picture of technology spending rather than managing AI costs separately from other cloud resources.

Cross-functional FinOps teams include AI specialists who understand model training economics, token-based pricing, and GPU utilization patterns. This expertise ensures AI workloads receive appropriate financial oversight.

Focus on Carbon Cost and Sustainability

AI workloads consume significant energy. Training large models requires thousands of GPU hours, generating substantial carbon emissions.

Cost analytics platforms are beginning to track environmental impact alongside financial costs. Organizations can see the carbon footprint of different models and providers, enabling sustainability-informed decisions.

This capability matters for companies with carbon reduction commitments. Being able to choose lower-emission AI options or schedule training during periods of cleaner grid electricity helps meet environmental goals.

Common Challenges and How to Overcome Them

Organizations implementing AI cost analytics platforms encounter predictable obstacles. Understanding these challenges helps teams prepare and respond effectively.

Incomplete or Inconsistent Tagging

Cost attribution depends on proper resource tagging. When teams don’t tag resources consistently, spending can’t be accurately allocated.

The solution involves establishing tagging policies before deployment and enforcing them through automation. Cloud governance tools can prevent resources from being created without required tags.

Regular audits identify untagged or incorrectly tagged resources. Automated remediation can apply default tags to resources that lack proper metadata.
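A tag audit of this kind can be very small. The sketch below assumes a required tag set and an in-memory inventory of resources; the tag names and resource IDs are hypothetical, and a real audit would read them from a cloud provider's inventory.

```python
# Assumed tagging policy: every resource must carry these tags.
REQUIRED_TAGS = {"team", "project", "environment", "model"}


def missing_tags(resource_tags):
    """Return the sorted list of required tags that are absent or empty."""
    return sorted(tag for tag in REQUIRED_TAGS if not resource_tags.get(tag))


def audit(resources):
    """Map each non-compliant resource ID to its missing tags."""
    report = {}
    for resource_id, tags in resources.items():
        missing = missing_tags(tags)
        if missing:
            report[resource_id] = missing
    return report


# Hypothetical inventory.
resources = {
    "gpu-1": {"team": "research", "project": "ranker",
              "environment": "prod", "model": "model-a"},
    "gpu-2": {"team": "research"},
}
report = audit(resources)
```

The resulting report can drive either a notification to the owning team or an automated step that applies default tags.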

Resistance from Development Teams

Engineers sometimes view cost tracking as bureaucratic overhead that slows development. They worry that budget controls will interfere with experimentation and innovation.

Overcoming this resistance requires demonstrating value rather than imposing restrictions. Show teams how cost visibility helps them optimize their own work and secure budget for future projects.

Involve engineers in setting budget policies rather than dictating from above. When teams participate in defining reasonable limits, they’re more likely to support the process.

Data Silos Across Multiple Platforms

Organizations often use multiple AI providers, cloud platforms, and development environments. Data lives in different systems with incompatible formats.

Robust integration capabilities address this challenge. Platforms must support diverse data sources and normalize information into consistent formats.

Custom connectors and APIs enable integration with proprietary systems. Organizations with unique infrastructure need platforms that accommodate non-standard data sources.

Alert Fatigue and False Positives

Overly sensitive alerting creates noise that teams learn to ignore. When every small cost fluctuation triggers notifications, important signals get lost.

Careful threshold tuning reduces false positives. Alerts should fire for genuinely anomalous conditions, not normal usage variations.

Context-aware alerting uses machine learning to understand normal patterns. Rather than static thresholds, intelligent alerts adapt to usage patterns and trigger only for truly unusual events.

The ROI of AI Cost Analytics Platforms

Investing in cost analytics platforms requires justification. Organizations need to understand the financial return on these tools.

Direct savings can come from reduced waste. Organizations report identifying inefficient workloads, over-provisioned resources, and unnecessary API calls; eliminating them may reduce spending.

Indirect benefits include improved budget predictability. Finance teams can forecast AI expenses accurately rather than dealing with surprise bills. This predictability enables better planning and resource allocation.

Faster innovation cycles represent another benefit. When teams have clear cost visibility and budget guardrails, they can experiment confidently without fear of creating financial disasters. This encourages exploration of new AI capabilities.

Operational efficiency improves when automated systems handle cost monitoring and optimization. Engineering teams spend less time manually tracking expenses and more time building features.

For many organizations, direct cost savings alone can cover the platform’s cost within months. The additional operational and strategic benefits make the ROI compelling.

| Benefit Category | Typical Impact | Measurement Method | Time to Realize |
|---|---|---|---|
| Direct Cost Savings | 20-40% reduction in AI spend | Month-over-month expense comparison | 1-3 months |
| Budget Predictability | ±5% forecast accuracy | Variance between forecast and actual | 2-4 months |
| Anomaly Prevention | Avoid 10-50% cost overruns | Detected anomalies vs. costs prevented | Ongoing |
| Resource Optimization | 15-25% efficiency gain | Output per dollar spent | 2-5 months |
| Time Savings | 5-10 hours per week | Reduction in manual cost tracking | 1-2 months |
| Faster Experimentation | 30-50% more iterations | Number of experiments within budget | 3-6 months |

Looking Ahead: The Future of AI Cost Management

AI adoption continues accelerating, and cost management capabilities must keep pace. Several developments will shape the next generation of cost analytics platforms.

Deeper integration with AI development workflows will make cost tracking invisible. Instead of separate platforms, cost visibility will be embedded directly into development environments, testing frameworks, and deployment pipelines.

Real-time cost feedback during development helps engineers make cost-aware decisions before code reaches production. IDE plugins might show the projected cost of a model configuration change while developers are still writing code.

Increased sophistication in cost-performance trade-off analysis will help organizations find optimal balances. Platforms will recommend specific configurations that achieve quality targets at minimal cost.

Expanded coverage of emerging AI technologies ensures platforms remain relevant as the field evolves. Support for new model types, training approaches, and deployment patterns will appear as they gain adoption.

The U.S. National Science Foundation continues investing in AI research, including work on making AI more accessible and economically viable. These research directions will inform future platform capabilities.

Better support for distributed AI workloads accommodates edge computing and federated learning scenarios. As AI moves beyond centralized cloud deployments, cost tracking must follow.

Frequently Asked Questions

What’s the difference between cloud cost management and AI cost analytics platforms?

Cloud cost management tools track general infrastructure spending like virtual machines, storage, and networking. AI cost analytics platforms specifically understand AI workload economics—token consumption, model training costs, GPU utilization, and inference expenses. They provide granularity and context that generic cloud tools can’t match for AI applications.

How much do AI cost analytics platforms typically cost?

Pricing varies significantly by platform, features, scale, and use case; consult individual provider websites for current information. Many platforms offer usage-based pricing tied to AI spending volume or number of tracked resources. Enterprise plans with advanced features typically require custom contracts.

Can these platforms work with custom or self-hosted AI models?

Yes, most advanced platforms support custom models through APIs and SDKs. Organizations running self-hosted models can instrument their infrastructure to send cost data to analytics platforms. This requires more integration work than managed services but provides equivalent visibility into infrastructure costs, compute utilization, and resource consumption.

How quickly can organizations implement an AI cost analytics platform?

Basic implementation typically takes 1-2 weeks for platform deployment, provider integrations, and initial configuration. Comprehensive instrumentation across all AI workloads might require several weeks depending on infrastructure complexity. Organizations usually see initial insights within the first week and meaningful optimization opportunities within 2-4 weeks of complete data collection.

What level of technical expertise is required to manage these platforms?

Basic cost tracking and reporting features require minimal technical knowledge—finance teams can interpret dashboards and reports without engineering backgrounds. Advanced features like custom integrations, complex budget policies, and automated optimization typically require involvement from engineers who understand the underlying AI infrastructure. Most organizations use cross-functional teams combining finance and engineering expertise.

Do AI cost analytics platforms support multi-cloud environments?

Leading platforms provide unified visibility across multiple cloud providers including AWS, Azure, Google Cloud, and specialized AI services like OpenAI and Anthropic. They aggregate costs from different sources into single dashboards, enabling comparison and optimization across providers. Multi-cloud support is essential for organizations pursuing vendor diversification strategies.

How do these platforms handle data privacy and security?

Enterprise-grade platforms implement comprehensive security controls including encryption in transit and at rest, role-based access controls, audit logging, and compliance with standards like SOC 2, ISO 27001, and GDPR. They typically don’t require access to model training data or inference payloads—only metadata about resource usage and costs. Organizations should verify specific security features and compliance certifications with individual providers.

Taking Action on AI Cost Management

AI spending will only increase as organizations deploy more sophisticated applications. Proactive cost management separates efficient operations from budget disasters.

Start by assessing current visibility into AI costs. Can the finance team explain what’s driving AI expenses? Can engineering teams see how their decisions impact spending? If answers are unclear, it’s time to implement proper tracking.

Evaluate AI cost analytics platforms based on specific organizational needs rather than generic feature lists. The right solution depends on infrastructure complexity, team size, compliance requirements, and existing tooling.

But don’t wait for perfect information before beginning. Even basic cost tracking delivers immediate value. Organizations can start with limited instrumentation and expand coverage over time.

The economics of AI continue evolving. Preprints on arXiv covering frameworks like LCOAI and Cost-of-Pass metrics demonstrate growing sophistication in how the industry thinks about AI costs. Organizations that adopt these analytical approaches gain competitive advantages.

Federal investment continues driving AI innovation, with the National Science Foundation and partner agencies funding research institutes focused on advancing AI capabilities. As AI becomes more powerful and accessible, cost management becomes more critical—not less.

The organizations that master AI cost analytics won’t just save money. They’ll make better decisions about model selection, understand true AI ROI, and experiment more confidently. These advantages compound over time.

Start by documenting current AI spending and identifying major cost drivers. Then evaluate platforms that address specific challenges. The initial effort pays for itself quickly through reduced waste and improved efficiency.

AI promises to transform industries and create new capabilities. Effective cost management ensures organizations can afford to realize that promise.