Quick Summary: LLM chatbot pricing in 2026 ranges from free tiers with limited access to enterprise plans exceeding $3,000 monthly. Token-based API costs vary dramatically: OpenAI’s GPT-5.2 Pro charges $21/$168 per million tokens, while GPT-5.2 standard charges $1.75/$14, and DeepSeek V3.2-Exp costs $0.28 per million input tokens (cache-miss) and $0.42 per million output tokens. The right choice depends on usage volume, features needed, and whether you need subscription chatbot access or direct API integration.

The LLM chatbot market has exploded, and with it, a pricing landscape that can confuse even experienced developers. If someone asked what an AI chatbot costs in 2026, the honest answer is: anywhere from zero dollars to six figures annually.

That massive range exists because “LLM chatbot pricing” encompasses two fundamentally different approaches. First, there are subscription-based chatbot platforms where teams pay monthly fees for ready-to-use conversational AI. Second, there are token-based API services where developers build custom solutions and pay per usage.

Understanding which model fits specific needs—and what the true costs look like—requires cutting through marketing fluff and examining real numbers. The pricing structures have evolved significantly since 2025, with new models entering the market and established providers adjusting their rates.

How LLM Chatbot Pricing Actually Works

Before diving into specific costs, it helps to understand the two dominant pricing frameworks that shape this market.

Subscription-Based Chatbot Platforms

These services offer complete chatbot solutions with interfaces, integrations, and support built in. Teams pay a recurring fee—usually monthly—and get access to a platform that handles the technical complexity.

According to recent market analysis, subscription chatbot pricing typically follows this structure:

| Pricing Model | How It Works | Typical Cost Range |
|---|---|---|
| Subscription (SaaS) | Fixed monthly plans with usage caps | $30–$1,500/month |
| Usage-Based | Pay per conversation, resolution, or token | $0.50–$5 per conversation |
| Custom Enterprise | Negotiated pricing with dedicated resources | $3,000–$50,000+/month |
| Per-User/Seat | Cost per team member accessing platform | $15–$200/user/month |

The subscription approach works well for businesses that want predictable costs and minimal technical overhead. But here’s the catch: these platforms often impose strict limits on monthly conversations, active chatbots, or training data volume.

Token-Based API Pricing

For developers building custom solutions, API access offers more flexibility but introduces variable costs. Every interaction with an LLM gets measured in tokens—roughly equivalent to word fragments.

Token pricing splits into two components: input tokens (the prompt sent to the model) and output tokens (the response generated). Output tokens nearly always cost more because generating text requires more computational resources than processing it.

The math gets interesting fast. A typical customer service conversation might consume 500 input tokens and generate 300 output tokens. At different provider rates, that single interaction could cost anywhere from fractions of a cent to several cents.
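
The per-conversation arithmetic can be sketched in a few lines of Python. The rates are the per-million-token prices quoted in this article; the `RATES` dictionary and the `conversation_cost` function are illustrative names, not part of any provider's API.

```python
# Illustrative sketch: cost of one exchange at per-million-token rates.
# Rates (USD per 1M tokens: input, output) are the figures quoted in this
# article; check each provider's pricing page before relying on them.
RATES = {
    "GPT-5.2 Pro": (21.00, 168.00),
    "GPT-5.2": (1.75, 14.00),
    "DeepSeek V3.2-Exp": (0.28, 0.42),
}


def conversation_cost(input_tokens: int, output_tokens: int,
                      input_rate: float, output_rate: float) -> float:
    """Return the USD cost of one exchange given per-million-token rates."""
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


if __name__ == "__main__":
    # The 500-input / 300-output conversation described above.
    for model, (in_rate, out_rate) in RATES.items():
        cost = conversation_cost(500, 300, in_rate, out_rate)
        print(f"{model:<18} ${cost:.6f} per conversation")
```

At these rates the same exchange costs about 6 cents on GPT-5.2 Pro, about half a cent on GPT-5.2, and under three hundredths of a cent on DeepSeek V3.2-Exp.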

Major LLM API Pricing Comparison

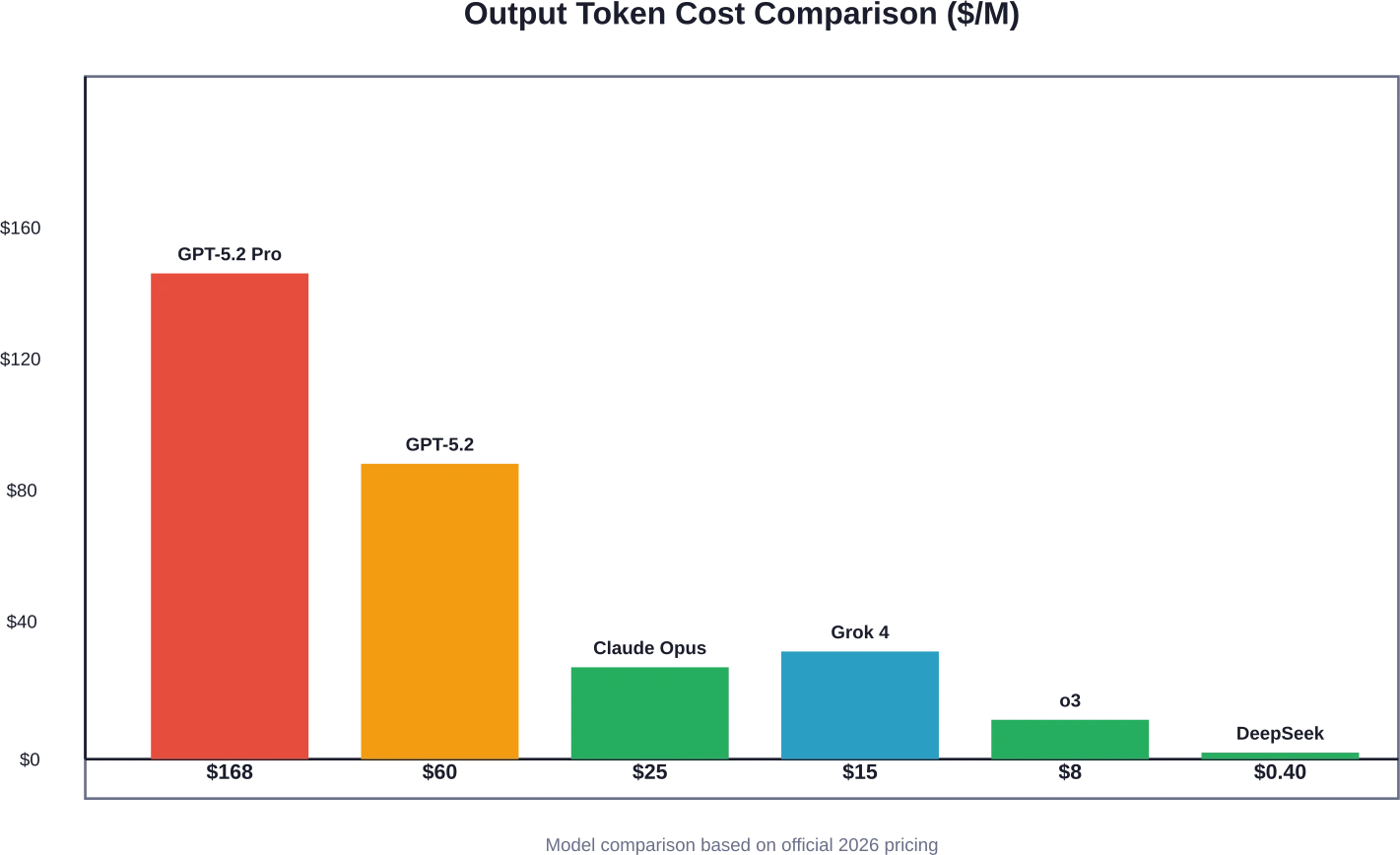

The token-based pricing landscape shifted dramatically in early 2026. New models launched, competitors undercut each other, and capability improvements changed cost-per-value calculations.

OpenAI Pricing Structure

OpenAI operates both subscription plans for ChatGPT access and per-token API pricing for developers. According to OpenAI’s official pricing page, their subscription tiers for ChatGPT include:

- Free: Limited access to GPT-5.2 with message caps and slower responses

- Go: Expanded access with more messages and uploads

- Plus, Pro, Team, Enterprise: Progressive tiers with higher limits and additional features

For API access, OpenAI’s February 2026 pricing shows significant variation across model tiers:

| Model | Input ($/M tokens) | Output ($/M tokens) | Use Case |
|---|---|---|---|
| GPT-5.2 Pro | $21.00 | $168.00 | Maximum capability tasks |
| GPT-5.2 | $1.75 | $14.00 | Latest flagship model |
| GPT-4.1 Mini | $0.40 | $1.60 | Cost-efficient tasks |
| o1 (reasoning) | $15.00 | $60.00 | Complex problem-solving |
| o3 (reasoning) | $2.00 | $8.00 | Next-gen reasoning |

Community discussions noted that o1 costs significantly more than o3, though the pricing relationship reflects different computational architectures rather than capability hierarchy.

OpenAI also offers specialized models like chatgpt-image-latest at $5 input and $10 output per million tokens, designed for multimodal interactions.

Anthropic Claude Pricing

Anthropic’s Claude models have gained traction for their strong performance in coding and analysis tasks. According to Anthropic’s announcement dated February 5, 2026, Claude Opus 4.6 pricing remains at $5 per million input tokens and $25 per million output tokens.

That makes Claude Opus substantially cheaper than some of OpenAI’s models. For a developer running 10 million input tokens and 5 million output tokens monthly on each provider’s top-tier model, the cost difference is significant:

- GPT-5.2 Pro: (10 × $21) + (5 × $168) = $1,050

- Claude Opus 4.6: (10 × $5) + (5 × $25) = $175

Claude also introduced a 1M token context window in beta, allowing longer conversations without context truncation. The history is still billed as input tokens on each request, so the larger window prevents lost context rather than lowering per-turn token counts.

Anthropic provides cost monitoring tools through the Claude Console, where developers can track usage patterns and set spend limits. For Anthropic Claude Code, developers can use the /cost command to view detailed token usage statistics for current sessions, helping identify optimization opportunities.

Google Gemini Pricing

Google’s Gemini models offer competitive pricing, though specific 2026 rates vary by model tier and region. Based on competitor analysis, Gemini models typically position themselves between OpenAI’s premium tiers and budget alternatives.

Gemini’s advantage lies in integration with Google Cloud infrastructure and services, making it attractive for organizations already invested in that ecosystem.

xAI Grok Pricing

According to competitor analysis, xAI launched Grok 4 models with pricing at $3 per million input tokens and $15 per million output tokens. This positions Grok 4 as cheaper than Claude Opus 4.6 ($5/$25) but more expensive than standard GPT-5.2 ($1.75/$14), and far below GPT-5.2 Pro.

xAI also offers Grok 4 Fast and Grok 4.1 Fast at dramatically lower rates: $0.20 input and $0.50 output per million tokens. These fast variants sacrifice some capability for speed and cost efficiency.

DeepSeek Pricing Disruption

China-based DeepSeek has undercut nearly every competitor with its V3.2-Exp model variant. DeepSeek V3.2-Exp costs $0.28 per million input tokens (cache-miss) and $0.42 per million output tokens.

That pricing represents an order of magnitude reduction compared to premium Western models. For high-volume applications, DeepSeek’s rates could translate to thousands of dollars in monthly savings.

The trade-offs include potential latency from Chinese servers, data residency concerns for regulated industries, and questions about long-term pricing sustainability.

Subscription Chatbot Platform Costs

For businesses that prefer turnkey solutions over API development, subscription platforms bundle the LLM access with interfaces, analytics, and integrations.

Small Business Pricing

Entry-level plans typically serve solopreneurs or small teams testing chatbot capabilities. These starter plans often cost $30–$150 monthly and include:

- 1–3 active chatbots

- Limited monthly conversations (often 500–5,000)

- Basic integrations (website, Facebook Messenger)

- Standard response templates

- Email support

The constraints here matter. A small e-commerce site handling 100 customer inquiries daily would exhaust a 500-conversation cap in five days and even a 3,000-conversation cap by the end of a 30-day month, with no headroom for spikes. Once limits are exceeded, platforms either charge overage fees or pause the chatbot, and neither option is great for customer experience.

Mid-Market Solutions

Growing companies typically need plans in the $300–$1,000 monthly range. At this tier, capabilities expand substantially:

- 5–10 chatbots with more sophisticated logic

- 15,000–50,000 monthly conversations

- CRM and helpdesk integrations

- Custom training on company-specific data

- Analytics and conversation insights

- Priority support with faster response times

This tier works for companies with established customer bases but not yet at enterprise scale. The pricing starts to reflect the value of automation—a single support representative costs $3,000–$5,000 monthly in salary and benefits, so even a chatbot handling 30% of inquiries can justify the investment.

Enterprise Chatbot Pricing

Large organizations often pay $3,000–$50,000+ monthly for enterprise-grade chatbot platforms. At this level, pricing typically shifts to custom quotes based on:

- Unlimited or very high conversation volumes

- White-label branding options

- Advanced security and compliance features

- Dedicated account management

- Custom model training and fine-tuning

- SLA guarantees for uptime and response speed

- Multi-language support

Enterprise deals often include professional services—implementation support, custom integration development, and ongoing optimization consulting. These services can add tens of thousands in one-time or recurring costs.

Hidden Costs That Inflate LLM Chatbot Pricing

The advertised price rarely tells the complete story. Several hidden or semi-hidden costs can double the actual expense of running LLM chatbots.

Context Window and Token Waste

Every conversation with an LLM includes not just the latest user message but also conversation history for context. A 10-turn conversation might send thousands of tokens of context with each new message.

A larger context window does not remove this cost: with most chat APIs the history is still sent, and billed as input tokens, on every turn. What a large window such as Claude Opus 4.6’s 1M-token context does provide is room to keep long conversations intact without truncating or summarizing earlier turns.
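
To see how re-sent history inflates input tokens, here is a small simulation. It assumes a stateless chat API where every request carries the full prior conversation, and it uses hypothetical figures of 100 tokens per user message and 150 tokens per assistant reply; real conversations vary.

```python
def cumulative_input_tokens(turns: int, user_tokens: int, reply_tokens: int,
                            system_tokens: int = 0) -> int:
    """Total input tokens billed over a conversation when each request
    re-sends the system prompt and all previous messages."""
    total = 0
    history = system_tokens
    for _ in range(turns):
        history += user_tokens   # the new user message joins the context
        total += history         # the whole context is sent as input
        history += reply_tokens  # the reply is appended for the next turn
    return total


if __name__ == "__main__":
    billed = cumulative_input_tokens(turns=10, user_tokens=100, reply_tokens=150)
    new_text = 10 * 100
    print(f"billed input tokens: {billed}, new user text: {new_text}")
```

Under these assumptions a 10-turn conversation bills 12,250 input tokens for only 1,000 tokens of new user text, which is why trimming or summarizing history matters at scale.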

Prompt Caching Costs

Some providers offer prompt caching to reduce costs when repeatedly sending the same context. OpenAI and Anthropic both support forms of caching, but the pricing models differ.

Cached tokens cost less than fresh ones, but not all content qualifies for caching. Understanding when caching applies—and optimizing prompts to maximize cache hits—requires technical sophistication that smaller teams may lack.
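
A rough way to reason about caching is to split input tokens into cached and fresh portions. The sketch below is an assumption-laden illustration: the cache hit fraction and the discounted `cached_rate` are hypothetical inputs, it ignores any surcharge a provider may apply when writing to the cache, and `effective_input_cost` is not a real API.

```python
def effective_input_cost(input_tokens: int, cache_hit_fraction: float,
                         base_rate: float, cached_rate: float) -> float:
    """USD cost of input tokens when a fraction is served from a prompt cache.

    Rates are USD per 1M tokens. `cached_rate` is a hypothetical discounted
    price; cache-write surcharges are deliberately left out.
    """
    if not 0.0 <= cache_hit_fraction <= 1.0:
        raise ValueError("cache_hit_fraction must be between 0 and 1")
    cached = input_tokens * cache_hit_fraction
    fresh = input_tokens - cached
    return (fresh * base_rate + cached * cached_rate) / 1_000_000


if __name__ == "__main__":
    # 10M input tokens at a $5.00 base rate, with an assumed $0.50 cached rate.
    for hit in (0.0, 0.3, 0.6):
        cost = effective_input_cost(10_000_000, hit, 5.00, 0.50)
        print(f"cache hit {hit:.0%}: ${cost:.2f}")
```

With a 60% hit rate and these assumed prices, input spend falls from $50 to $23, so the payoff depends heavily on how much of each prompt is stable enough to cache.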

Integration and Development Time

API-based approaches save on subscription fees but introduce development costs. Building a production-ready chatbot requires:

- Backend infrastructure for API calls

- User interface development

- Conversation flow logic and error handling

- Security implementation for user data

- Monitoring and logging systems

- Ongoing maintenance as APIs evolve

For a mid-sized development team, this might represent 200–500 hours of work initially, plus 10–20 hours monthly for maintenance. At typical developer rates, that translates to $20,000–$50,000 in initial costs and $1,500–$3,000 monthly ongoing.

Data Preparation and Training

General-purpose LLMs perform well out of the box, but domain-specific performance often requires fine-tuning or retrieval-augmented generation (RAG) systems.

Building a RAG system means:

- Collecting and cleaning company documentation

- Chunking content appropriately

- Generating and storing embeddings

- Implementing retrieval logic

- Testing and iterating on retrieval quality

This work isn’t free. Organizations often spend weeks or months getting knowledge bases production-ready.
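
The steps listed above can be illustrated with a toy, dependency-free sketch. It substitutes word-count vectors and cosine similarity for a real embedding model and vector store, so it only shows the shape of the pipeline (chunk, index, retrieve). All function names and sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40) -> list[str]:
    """Split text into chunks of at most `max_words` words."""
    words = text.split()
    return [" ".join(words[i:i + max_words])
            for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the `top_k` chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]


docs = [
    "Refunds are issued within 14 days of a return request.",
    "Standard shipping takes 3 to 5 business days.",
    "Support is available by email on weekdays.",
]
chunks = [c for d in docs for c in chunk_text(d)]

if __name__ == "__main__":
    print(retrieve("how long does shipping take", chunks, top_k=1))
```

A production system would replace `embed` with a real embedding model and add evaluation of retrieval quality, which is where most of the preparation time tends to go.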

Monitoring and Quality Assurance

LLMs occasionally generate incorrect, inappropriate, or off-brand responses. Enterprise deployments require:

- Conversation monitoring systems

- Human review processes for flagged interactions

- A/B testing different prompts and models

- Regular audits for quality and compliance

These operational costs add up. A company might need 0.5–2 FTE dedicated to chatbot quality management, depending on conversation volume and risk tolerance.

Choosing the Right Pricing Model

With such varied options, how should organizations decide between subscription platforms versus API development, or between premium models versus budget alternatives?

Usage Volume Calculations

Start by estimating conversation volume and token consumption. For a customer service chatbot:

- Estimate daily conversations (existing ticket volume provides a baseline)

- Calculate average tokens per conversation (500–2,000 is typical depending on complexity)

- Add 30–50% buffer for growth and unexpected spikes

Then calculate costs across different providers. A company handling 10,000 conversations monthly at 1,000 tokens each (500 input, 500 output) would consume:

- 5 million input tokens monthly

- 5 million output tokens monthly

At different provider rates:

| Provider/Model | Monthly Cost | Annual Cost |
|---|---|---|
| GPT-5.2 Pro | $945 | $11,340 |
| Claude Opus 4.6 | $150 | $1,800 |
| Grok 4 | $90 | $1,080 |
| o3 | $50 | $600 |
| DeepSeek V3.2 | $3.50 | $42 |
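
The table can be reproduced with a short script, which also makes it easy to plug in different volumes or a growth buffer. The rates are those quoted in this article; `monthly_cost` and its `buffer` parameter are illustrative names.

```python
# USD per 1M tokens (input, output), as quoted in this article.
RATES = {
    "GPT-5.2 Pro": (21.00, 168.00),
    "Claude Opus 4.6": (5.00, 25.00),
    "Grok 4": (3.00, 15.00),
    "o3": (2.00, 8.00),
    "DeepSeek V3.2": (0.28, 0.42),
}


def monthly_cost(conversations: int, input_per_conv: int, output_per_conv: int,
                 in_rate: float, out_rate: float, buffer: float = 0.0) -> float:
    """Monthly USD cost; `buffer` is a fractional allowance for growth
    and spikes (0.3 means 30% extra)."""
    input_millions = conversations * input_per_conv / 1_000_000
    output_millions = conversations * output_per_conv / 1_000_000
    base = input_millions * in_rate + output_millions * out_rate
    return base * (1 + buffer)


if __name__ == "__main__":
    # 10,000 conversations at 500 input + 500 output tokens each.
    for model, (in_rate, out_rate) in RATES.items():
        monthly = monthly_cost(10_000, 500, 500, in_rate, out_rate)
        print(f"{model:<16} ${monthly:>8,.2f}/month  ${monthly * 12:>10,.2f}/year")
```

Passing `buffer=0.3` applies the 30% growth allowance suggested earlier; for Claude Opus 4.6 that raises the monthly estimate from $150 to $195.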

That calculation reveals massive differences. But wait—price isn’t everything.

Quality Versus Cost Trade-Offs

Cheaper models often mean lower quality outputs. For use cases where accuracy matters—medical advice, legal information, financial guidance—spending more for better models reduces risk.

Some developers have reported that memory costs can spiral unexpectedly when building chatbots with long conversation histories, especially with models that don’t support efficient context management.

Testing different models against specific use cases provides the clearest answer. Run pilot projects with 100–500 real conversations across multiple models, measuring:

- Response accuracy and relevance

- User satisfaction scores

- Conversation resolution rates

- Escalation to human agents

The model that provides acceptable quality at the lowest cost wins. Sometimes that’s a premium model; sometimes a mid-tier option performs just as well.

Build Versus Buy Decision

Should organizations build custom chatbots using APIs or buy subscription platforms?

Subscription platforms make sense when:

- Technical resources are limited

- Speed to market matters more than customization

- Conversation volume fits within platform limits

- Standard integrations cover needed use cases

API development makes sense when:

- Unique workflows require custom logic

- High volume makes subscription costs prohibitive

- Deep integration with existing systems is essential

- Technical team has bandwidth for development

The crossover point often occurs around 25,000–50,000 monthly conversations. Below that threshold, subscription platforms offer better economics. Above it, custom API implementations usually cost less despite development overhead.

Managing and Optimizing LLM Costs

Once deployed, several strategies help control ongoing expenses.

Prompt Engineering for Efficiency

Well-crafted prompts reduce token waste and improve output quality. Techniques include:

- Using concise system messages that establish context without excessive words

- Implementing few-shot learning with 2–3 examples rather than 10+

- Structuring outputs with JSON or other formats to minimize verbose explanations

- Breaking complex tasks into smaller steps when possible

A 20% reduction in average tokens per conversation translates directly to 20% cost savings.

Model Selection by Task

Not every task requires the most capable model. Intelligent routing can save substantial costs:

- Use cheaper models for simple FAQs and routing decisions

- Reserve expensive models for complex reasoning or generation

- Implement confidence scoring to determine when to escalate to premium models

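A tiered approach might use GPT-4.1 Mini for 70% of conversations and GPT-5.2 for the remaining 30% that need advanced capabilities. If both tiers see similar token counts per conversation, that cuts average cost by roughly 60% compared with sending everything to GPT-5.2.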
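
The savings from a tiered split can be estimated as a weighted average. This sketch assumes both tiers see the same token counts per conversation and an equal split between input and output tokens; `blended_cost` is an illustrative helper, and the rates are the ones quoted in this article.

```python
def blended_cost(cheap_share: float, cheap_rates: tuple[float, float],
                 premium_rates: tuple[float, float]) -> float:
    """Average USD cost of 1M input plus 1M output tokens when `cheap_share`
    of traffic goes to the cheap model and the rest to the premium model."""
    cheap = sum(cheap_rates)
    premium = sum(premium_rates)
    return cheap_share * cheap + (1 - cheap_share) * premium


GPT_41_MINI = (0.40, 1.60)   # USD per 1M tokens (input, output)
GPT_52 = (1.75, 14.00)

if __name__ == "__main__":
    routed = blended_cost(0.70, GPT_41_MINI, GPT_52)
    premium_only = blended_cost(0.0, GPT_41_MINI, GPT_52)
    saving = 1 - routed / premium_only
    print(f"routed: ${routed:.3f}, premium only: ${premium_only:.2f}, "
          f"saving: {saving:.0%}")
```

Under these assumptions the 70/30 split saves about 61%; a different routing share or a more output-heavy workload shifts the figure.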

Caching and Context Optimization

Leveraging prompt caching when available reduces costs for repeated context. Strategic use of cached content can cut token expenses significantly.

Usage Monitoring and Alerts

Both OpenAI and Anthropic provide usage monitoring tools. Setting up alerts prevents surprise bills when usage spikes unexpectedly.

Key metrics to monitor:

- Daily token consumption trends

- Cost per conversation over time

- Model selection distribution

- Error rates that trigger retries and waste tokens

Anthropic’s Claude Console provides detailed cost and usage reporting visible to developers, billing managers, and administrators, enabling proactive cost management.
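
For teams that export daily cost figures into their own tooling, a simple projection can flag overspend early. The sketch below is provider-agnostic and hypothetical: the daily costs are assumed to come from whatever usage export is available, and the 80% alert threshold is an arbitrary choice.

```python
def projected_monthly_spend(daily_costs: list[float],
                            days_in_month: int = 30) -> float:
    """Project month-end spend from the average of observed daily costs."""
    if not daily_costs:
        return 0.0
    return sum(daily_costs) / len(daily_costs) * days_in_month


def should_alert(daily_costs: list[float], monthly_budget: float,
                 threshold: float = 0.8) -> bool:
    """True when projected spend reaches `threshold` of the monthly budget."""
    return projected_monthly_spend(daily_costs) >= monthly_budget * threshold


if __name__ == "__main__":
    observed = [4.0, 5.0, 6.0]  # hypothetical USD spend for the first 3 days
    print(projected_monthly_spend(observed))             # projection in USD
    print(should_alert(observed, monthly_budget=150.0))  # budget check
```

A linear projection is crude, since it ignores weekly cycles and growth, but it is usually enough to catch a runaway retry loop or an unexpected traffic spike before the invoice arrives.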

Enterprise Considerations and Volume Discounts

Large organizations often negotiate better rates than published API pricing suggests.

Custom Enterprise Agreements

Companies committing to significant volume—often $50,000+ annually—can negotiate:

- Volume discounts of 10–30%

- Custom rate tiers based on committed spend

- SLA guarantees for uptime and latency

- Dedicated support and technical account management

- Private deployment options for data sensitivity

OpenAI, Anthropic, and other major providers all offer enterprise plans, though pricing details aren’t publicly disclosed.

Data Residency and Compliance

Regulated industries face additional constraints. Healthcare organizations need HIPAA compliance; financial services require SOC 2; European companies must consider GDPR data residency rules.

Enterprise agreements often include:

- Business Associate Agreements (BAAs) for healthcare

- Data processing agreements specifying data handling

- Regional deployment options to keep data in specific jurisdictions

- Zero data retention policies

Claude Code supports zero data retention options for teams concerned about data privacy.

These compliance features sometimes carry premium pricing or minimum spend commitments.

Emerging Models and Future Pricing Trends

The LLM landscape evolves rapidly. Several trends are shaping pricing for 2026 and beyond.

Open Source Competition

Models like GLM-5 and Qwen3.5 represent increasingly capable open-source alternatives. Organizations with technical resources can self-host these models, eliminating per-token costs entirely.

The trade-off is infrastructure expense. Running a 40B parameter model requires significant GPU resources—often $500–$2,000 monthly in cloud GPU costs or substantial capital investment for on-premises hardware.

For very high volume deployments (millions of daily conversations), self-hosting can achieve better economics than API services despite infrastructure overhead.

Specialized Models

Task-specific models optimized for narrow use cases often provide better value than general-purpose flagships. OpenAI’s o3 reasoning model costs less than o1 while delivering improved performance for certain analytical tasks.

As providers release more specialized models, organizations can optimize costs by matching models to specific use case requirements rather than using expensive flagship models for everything.

Multimodal Pricing Evolution

Models handling images, audio, and other modalities introduce additional pricing complexity. OpenAI’s Realtime API charges differently for text, audio, and image tokens, with audio tokens in user messages costing 1 token per 100ms and assistant audio tokens at 1 token per 50ms.

For voice-based chatbots, these rates add up quickly. A 5-minute conversation involves 300,000ms of audio. At OpenAI’s Realtime API rates (1 token per 100ms for user audio, 1 token per 50ms for assistant audio), this could translate to 3,000–6,000 tokens depending on conversation split, before any text processing.
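
The audio token estimate follows directly from the two rates above. This is a minimal sketch that takes the article's quoted rates as given and assumes user and assistant speech do not overlap; `audio_tokens` is an illustrative name.

```python
USER_MS_PER_TOKEN = 100       # 1 token per 100 ms of user audio
ASSISTANT_MS_PER_TOKEN = 50   # 1 token per 50 ms of assistant audio


def audio_tokens(user_ms: int, assistant_ms: int) -> int:
    """Estimate audio tokens for a voice conversation from speaking time."""
    return user_ms // USER_MS_PER_TOKEN + assistant_ms // ASSISTANT_MS_PER_TOKEN


if __name__ == "__main__":
    total_ms = 5 * 60 * 1000  # a 5-minute conversation
    for user_share in (1.0, 0.5, 0.0):
        user_ms = int(total_ms * user_share)
        tokens = audio_tokens(user_ms, total_ms - user_ms)
        print(f"user speaks {user_share:.0%} of the time: {tokens} tokens")
```

An even split between speakers lands in the middle of the range at 4,500 audio tokens.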

Calculating Return on Investment

Understanding costs is only half the equation. The other half is quantifying the value chatbots provide.

Support Cost Reduction

The most straightforward ROI calculation involves displaced support tickets. If a chatbot handles 40% of incoming inquiries and each human-handled ticket costs $5–$15 in labor, the savings add up quickly.

For a company processing 5,000 monthly support tickets at $8 average cost:

- Total monthly support cost: $40,000

- Chatbot handling 40%: 2,000 tickets automated

- Savings: 2,000 × $8 = $16,000 monthly

If the chatbot costs $2,000 monthly (including development and API expenses), the net savings is $14,000 monthly or $168,000 annually.
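
The same calculation, written so the inputs can be swapped for a different ticket volume or deflection rate. `support_roi` is an illustrative helper, and the figures are the ones used in the example above.

```python
def support_roi(monthly_tickets: int, cost_per_ticket: float,
                automation_rate: float, chatbot_monthly_cost: float) -> dict:
    """Estimate savings from tickets a chatbot resolves without a human."""
    automated = monthly_tickets * automation_rate
    gross = automated * cost_per_ticket
    net = gross - chatbot_monthly_cost
    return {
        "automated_tickets": automated,
        "gross_monthly_savings": gross,
        "net_monthly_savings": net,
        "net_annual_savings": net * 12,
    }


if __name__ == "__main__":
    result = support_roi(monthly_tickets=5_000, cost_per_ticket=8.0,
                         automation_rate=0.40, chatbot_monthly_cost=2_000.0)
    for name, value in result.items():
        print(f"{name}: {value:,.0f}")
```

The estimate is most sensitive to the automation rate, so it is worth re-running with a pessimistic figure before committing to a budget.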

Revenue Impact

For sales and lead generation chatbots, ROI calculations shift to conversion improvements:

- Increased engagement from 24/7 availability

- Faster response times reducing abandonment

- Better qualification of leads before human handoff

- Upsell and cross-sell recommendations

Even small improvements in conversion rates can justify chatbot investment. A 2% relative lift in conversions on $1M of monthly revenue adds about $20,000 per month, far exceeding typical chatbot costs.

Intangible Benefits

Some chatbot value is harder to quantify:

- Improved customer satisfaction from instant responses

- Consistent brand voice across all interactions

- Freed-up human agents for complex high-value cases

- Data collection and insights from conversation patterns

These factors matter for long-term competitiveness even if they don’t show up directly in financial calculations.

Stop Overpaying for LLM Chatbots and Build It Right

LLM chatbot costs depend heavily on how the system is designed. Model choice, training strategy, token usage, and infrastructure all affect the final price. Many companies discover that using generic models without optimization quickly increases operational costs.

AI Superior works with businesses that need custom LLM systems built for real production use. The company develops and fine-tunes large language models, prepares training data, and optimizes deployment so chatbots remain accurate and cost-efficient as usage grows. Their team of PhD-level data scientists and engineers focuses on building AI systems tailored to specific workflows rather than relying on one-size-fits-all models.

Planning an LLM chatbot? Talk to AI Superior before you commit to an expensive architecture and get a clear view of what your chatbot should actually cost to build and run.

Real-World Cost Examples

To make pricing concrete, consider a few realistic scenarios:

Scenario 1: Small E-Commerce FAQ Bot

- Volume: 2,000 conversations monthly

- Approach: Subscription platform

- Cost: $79/month platform fee

- Result: Handles 60% of product questions, reducing email support volume by half

Scenario 2: Mid-Size SaaS Support

- Volume: 15,000 conversations monthly

- Approach: Custom API integration with Claude Opus

- Token usage: 12M input, 8M output monthly

- API cost: (12 × $5) + (8 × $25) = $260/month

- Development: $30,000 initial build, $2,000 monthly maintenance

- First-year cost: $30,000 + (($260 + $2,000) × 12) = $57,120

- Ongoing annual cost: $27,120

- Result: Handles 45% of tier-1 support, saves 2 FTE
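
Scenario 2's totals can be checked with a small total-cost-of-ownership sketch. The helper names are illustrative, and the inputs are the scenario's own figures.

```python
def api_monthly_cost(input_millions: float, output_millions: float,
                     in_rate: float, out_rate: float) -> float:
    """Monthly API spend in USD from token volume in millions."""
    return input_millions * in_rate + output_millions * out_rate


def first_year_cost(build_cost: float, api_monthly: float,
                    maintenance_monthly: float) -> float:
    """One-time build cost plus twelve months of recurring costs."""
    return build_cost + (api_monthly + maintenance_monthly) * 12


if __name__ == "__main__":
    api = api_monthly_cost(12, 8, in_rate=5.00, out_rate=25.00)
    year_one = first_year_cost(30_000, api, 2_000)
    recurring = (api + 2_000) * 12
    print(f"API: ${api:,.0f}/month, first year: ${year_one:,.0f}, "
          f"ongoing: ${recurring:,.0f}/year")
```

Note that API spend is only about 11% of recurring costs in this scenario; maintenance dominates, so model price is not always the biggest lever.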

Scenario 3: Enterprise Multi-Channel Assistant

- Volume: 200,000 conversations monthly across web, mobile, and voice

- Approach: Hybrid model using DeepSeek for simple queries, GPT-5.2 Pro for complex

- Token usage: 120M input (80M DeepSeek, 40M GPT-5.2 Pro), 80M output (50M DeepSeek, 30M GPT-5.2 Pro)

- API cost: DeepSeek: (80 × $0.28) + (50 × $0.42) = $43.40; GPT-5.2 Pro: (40 × $21) + (30 × $168) = $5,880

- Total monthly API cost: $5,923.40

- Infrastructure: $5,000 monthly (load balancing, monitoring, databases)

- Team: 2 FTE for maintenance and optimization = $20,000 monthly

- Total monthly cost: $30,923.40

- Result: Handles 70% of customer interactions, displacing 8 support FTE

These examples illustrate how costs scale with volume and sophistication.

Common Pricing Questions

Do free LLM options exist?

Yes, several providers offer free tiers. According to OpenAI’s pricing page, their Free plan provides limited access to GPT-5.2 with message caps and slower responses. This works for experimentation but not production deployments.

Open-source models can be self-hosted at zero software licensing cost, but infrastructure expenses remain.

How do enterprise discounts work?

Enterprise customers committing to high volume can negotiate custom rates, often 10–30% below published API pricing. These deals typically require minimum annual spend commitments of $50,000–$100,000+.

What happens if usage exceeds plan limits?

Subscription platforms usually either charge overage fees (often at higher per-unit rates) or pause service until the next billing cycle. API services continue working but accumulate charges beyond any committed spend.

Can costs be predicted accurately?

Usage estimation improves with time, but variability remains. Unexpected viral content, seasonal spikes, or changes in user behavior can cause 2–5× usage swings. Building in a 30–50% buffer helps avoid surprises.

Are there regional pricing differences?

Some providers adjust pricing by region, though major API services like OpenAI and Anthropic use unified global rates. Data residency requirements sometimes force use of regional deployments that carry premium pricing.

Frequently Asked Questions

What is the average cost of an AI chatbot in 2026?

The average cost varies dramatically by approach. Entry-level subscription plans for small businesses run $30–$150 monthly. Mid-market solutions cost $300–$1,000 monthly. Enterprise deployments often exceed $3,000 monthly. For API-based implementations, costs depend on volume: typical ranges are $100–$5,000 monthly for most organizations, with high-volume enterprise deployments sometimes reaching $20,000+ monthly in token costs alone.

How much does ChatGPT API cost compared to Claude?

As of February 2026, OpenAI’s GPT-5.2 Pro costs $21 per million input tokens and $168 per million output tokens, while Anthropic’s Claude Opus 4.6 costs $5 input and $25 output per million tokens. Claude is substantially cheaper: for 10 million input and 5 million output tokens monthly, GPT-5.2 Pro costs $1,050 versus Claude at $175, about 83% less.

What factors affect LLM chatbot pricing the most?

The primary cost drivers are conversation volume, tokens per conversation, model selection, and implementation approach. A company using premium models like GPT-5.2 Pro at high volume can pay roughly 75–400× more per token than one using budget models like DeepSeek (75× on input, 400× on output) for similar conversation counts. Context window size, caching efficiency, and whether custom development is needed also significantly impact total cost of ownership.

Is it cheaper to build a custom chatbot or use a platform?

For volumes below 25,000 monthly conversations, subscription platforms usually cost less when factoring in development time. Above that threshold, custom API implementations become more economical despite initial development costs of $20,000–$50,000. The crossover point depends on technical team availability and specific feature requirements. Custom solutions offer more flexibility but require ongoing maintenance.

Do LLM providers offer free tiers?

Yes, most major providers offer limited free access. OpenAI provides a Free plan with restricted access to GPT-5.2, message caps, and slower responses. These free tiers work for testing and experimentation but impose limits that make them impractical for production use. Once conversation volumes reach hundreds or thousands monthly, paid plans become necessary.

How can I reduce LLM API costs without sacrificing quality?

Several strategies reduce costs while maintaining quality: use tiered model routing (cheaper models for simple queries, premium models for complex ones), optimize prompts to reduce token waste, leverage prompt caching when available, trim or summarize conversation history so less context is re-sent on each turn, and test multiple models to find the best value-to-performance ratio for specific use cases. Many organizations achieve 30–50% cost reductions through these optimizations.

What hidden costs should I budget for beyond API pricing?

Beyond direct API or subscription costs, budget for development time ($20,000–$50,000 initial for custom solutions), ongoing maintenance ($1,500–$3,000 monthly), infrastructure for hosting and monitoring ($500–$5,000 monthly depending on scale), data preparation and knowledge base creation (weeks to months of effort), and quality assurance including human review processes. Hidden costs often double or triple the apparent price of LLM services.

Making Your LLM Chatbot Pricing Decision

The LLM chatbot pricing landscape in 2026 offers more options than ever—and more complexity. The spread between budget and premium options has widened, with choices now spanning from DeepSeek’s $0.28/$0.42 per million tokens to OpenAI’s GPT-5.2 Pro at $21/$168.

No single answer fits every use case. Small businesses testing conversational AI benefit from subscription platforms that bundle technology and support for predictable monthly fees. Growing companies with moderate volume often find mid-tier platforms or API implementations with cost-effective models like Claude Opus or o3 offer the best value. Large enterprises with technical resources can optimize costs through custom development, model routing, and volume negotiations.

The key is starting with clear usage estimates, testing multiple approaches with real workloads, and measuring not just costs but outcomes—support tickets resolved, conversion rates improved, customer satisfaction enhanced. Those metrics determine true ROI.

One thing is certain: the pricing will continue evolving. New models launch monthly, existing providers adjust rates, and open-source alternatives improve. Organizations that build flexible architectures allowing easy model switching position themselves to optimize costs as the market shifts.

Ready to explore LLM chatbot options for your specific needs? Start by calculating your expected monthly conversation volume and token consumption. Test free tiers from multiple providers with representative use cases. Then choose the solution that delivers acceptable quality at manageable cost—not necessarily the cheapest option or the most expensive, but the one that provides the best value for your particular requirements.