Quick Summary: LLM costs in the UK vary significantly based on provider and usage. As of 2026, businesses face charges ranging from fractions of a penny per token for smaller models to several pounds for complex queries on enterprise systems. The University of Manchester has developed frameworks reducing the resource demands of control techniques for LLMs by over 90%, potentially lowering operational costs dramatically. UK AI adoption continues growing, with natural language processing and text generation being the most common uses, with 85% of AI adopters currently using AI for these purposes.

Large Language Models have moved from experimental tech to business essentials across the UK. But here’s the thing—costs can spiral quickly if organisations don’t understand the pricing structures.

The UK AI sector saw substantial growth between 2023 and 2024, according to government data. With that expansion comes a pressing question: what does it actually cost to run these systems?

Understanding LLM expenses isn’t just about the per-token charges. Infrastructure, testing, control mechanisms, and energy consumption all factor into the total cost of ownership. And those numbers matter whether running a startup in Manchester or managing enterprise operations in London.

Understanding LLM Pricing Models in the UK

Most LLM providers charge based on token consumption. A token roughly equals four characters or three-quarters of a word in English.

Pricing structures typically separate input tokens (the prompt sent to the model) from output tokens (the response generated). Output tokens generally cost more because they require more computational resources.

The UK market sees several pricing approaches. Some providers offer tiered subscriptions with included token allowances. Others use pure pay-as-you-go models. Enterprise contracts often include volume discounts and dedicated capacity guarantees.

Token-Based Pricing Explained

Token-based billing means costs scale directly with usage. A simple query might consume 50-100 tokens total. Complex document analysis could run into thousands.

Real talk: most businesses underestimate their token consumption in the first quarter of deployment. Testing and development environments can burn through budgets surprisingly fast.

Here’s where it gets interesting. The University of Manchester researchers developed new software frameworks—LangVAE and LangSpace—that reduce hardware and energy resource needs for controlling and testing LLMs by over 90%. That’s not just a marginal improvement. It’s transformative for organisations worried about spiralling operational costs.

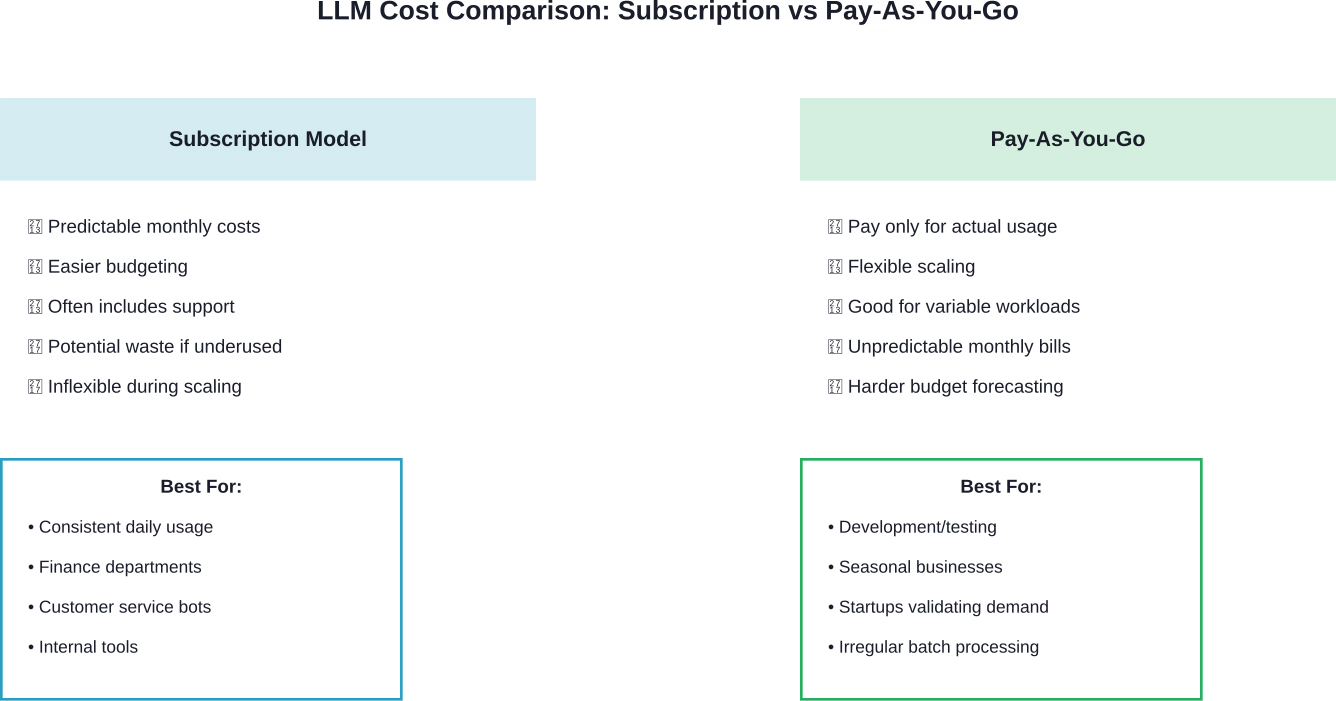

Subscription vs Pay-As-You-Go

Subscription models provide predictability. Fixed monthly costs work well for budgeting. But they can be wasteful if actual usage doesn’t match the tier purchased.

Pay-as-you-go offers flexibility. Organisations only pay for what they consume. The downside? Costs become harder to forecast, especially during scaling periods.

Many UK businesses adopt hybrid approaches. Base subscriptions cover predictable workloads. Overflow usage gets billed at pay-as-you-go rates.

UK AI Adoption Patterns and Cost Implications

Government data shows AI adoption varies considerably by organisation size and sector. Large and mid-sized businesses lead adoption rates, particularly in information and communication, finance, real estate, and business services.

Natural language processing and text generation are the most common uses, with 85% of AI adopters currently using AI for these purposes. That makes sense—these use cases deliver immediate value without extensive customisation.

But adoption patterns reveal something important about costs. Sectors with higher adoption rates have learned to manage expenses through specialisation and optimisation. They’re not just throwing models at problems.

Sector-Specific Usage Patterns

Financial services firms typically run high-volume, relatively standardised queries. Think fraud detection, compliance monitoring, document classification. These workloads benefit from dedicated capacity and negotiated rates.

Healthcare and legal sectors face different dynamics. Their queries tend to be longer and more complex. Accuracy matters more than speed. Specialised models often perform better than general-purpose alternatives.

Research from the Regulatory Genome Project at Cambridge Judge Business School demonstrates this precisely. Their analysis indicates that for Human-in-the-Loop systems to be truly effective, evaluation must consider total end-to-end efficiency. Specialised models aren’t just more accurate—they’re cheaper in isolation.

The research indicates that specialised models’ near-instantaneous processing time makes the entire workflow faster and more responsive. That speed translates directly into cost savings when billing by the token.

Cost Reduction Strategies for UK Organisations

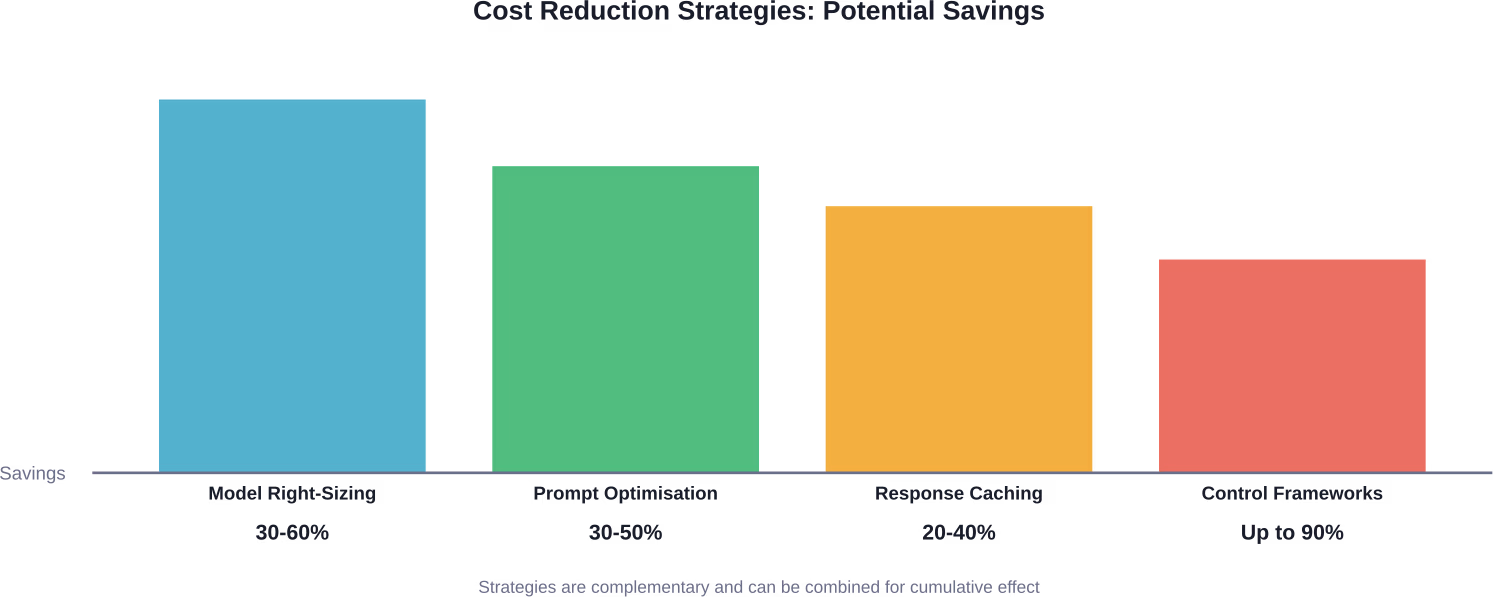

Smart organisations don’t just accept vendor pricing as fixed. Multiple strategies can dramatically reduce LLM operational expenses.

Model Selection and Right-Sizing

Not every task needs the largest, most capable model. Smaller models handle many routine tasks adequately at a fraction of the cost.

Consider a tiered approach. Route simple queries to lightweight models. Reserve premium models for complex reasoning tasks that genuinely require advanced capabilities.

Research from Cambridge Judge Business School confirms specialised AI models hold significant advantages for precision tasks. While general models at scale have their place, matching model capability to task requirements optimises both performance and cost.

Prompt Engineering and Optimisation

Inefficient prompts waste tokens. Verbose instructions, unnecessary examples, and poorly structured queries all inflate costs.

Effective prompt engineering reduces token consumption without sacrificing output quality. That means shorter prompts, clearer instructions, and strategic use of system messages.

Testing shows well-optimised prompts can reduce token usage by 30-50% compared to naive approaches. Over thousands of daily queries, those savings compound significantly.

Caching and Response Reuse

Many organisations query LLMs with identical or near-identical inputs repeatedly. Caching responses eliminates redundant API calls entirely.

Semantic caching takes this further. When a new query closely matches a previous one, the cached response might suffice. This approach requires careful implementation to avoid serving stale or inappropriate responses, but the cost savings can be substantial.

Advanced Control Frameworks

The University of Manchester breakthrough deserves special attention. Their LangVAE and LangSpace frameworks reduce resource demands for LLM control by over 90%.

These frameworks build compressed language representations from LLMs, making control and testing processes drastically more efficient. For organisations prioritising explainability and reliability—particularly in regulated sectors like healthcare and energy—this technology could fundamentally alter the cost equation.

The research team’s approach addresses a critical bottleneck. Examining and adjusting LLM behaviour traditionally requires massive computational resources. By compressing language representations, the frameworks make these processes accessible to organisations without hyperscale infrastructure.

Infrastructure and Hidden Costs

Token charges represent only part of total LLM expenses. Infrastructure, integration, monitoring, and maintenance all add up.

API Management and Monitoring

Effective cost management requires visibility into usage patterns. API management platforms track consumption, identify anomalies, and enforce rate limits.

Without proper monitoring, organisations often discover budget overruns only when invoices arrive. Real-time tracking enables proactive intervention before costs spiral.

Integration and Development Costs

Building LLM functionality into existing systems requires developer time. Depending on complexity, integration projects can range from a few days to several months.

Community discussions highlight this reality. Development and testing environments consume significant token budgets. Organisations should account for these expenses separately from production usage.

Energy and Environmental Considerations

LLMs consume considerable energy, both in training and inference. While cloud providers handle infrastructure, those costs ultimately flow through to customers.

The Manchester research directly addresses this concern. Reducing resource demands by 90% means corresponding reductions in energy consumption. For organisations with sustainability commitments, these efficiency gains matter beyond just financial savings.

Specialised vs General Models: Cost-Benefit Analysis

The debate between specialised and general models has real cost implications.

General models offer versatility. One API, multiple use cases. Simplified architecture. But they’re often oversized for specific tasks, consuming more tokens than necessary.

Specialised models excel at precision tasks. Research from Cambridge Judge Business School demonstrates they’re not just more accurate—they’re cheaper for targeted applications. Near-instantaneous processing means lower per-query costs and better resource utilisation.

The optimal strategy typically combines both. General models handle diverse, unpredictable queries. Specialised models tackle high-volume, domain-specific work.

| Factor | General Models | Specialised Models |

|---|---|---|

| Upfront Cost | Lower (no training required) | Higher (requires training/fine-tuning) |

| Per-Query Cost | Higher (larger token consumption) | Lower (optimised for specific tasks) |

| Accuracy | Good across diverse tasks | Excellent for target domain |

| Speed | Variable | Near-instantaneous for trained tasks |

| Flexibility | High (handles varied queries) | Low (optimised for specific domain) |

| Break-Even Point | N/A | Typically 10,000+ queries/month |

UK Public Sector AI Adoption and Cost Considerations

The public sector faces unique constraints around AI deployment. Budget limitations, procurement processes, and public trust all factor into adoption decisions.

Research from Nesta reveals that less than half (40%) of the UK public trust the public sector to use AI responsibly. That trust deficit complicates deployment, potentially requiring additional oversight and transparency mechanisms—all of which add costs.

The AI Incubator for Artificial Intelligence, working with Nesta’s Centre for Collective Intelligence Design, has trialled approaches to involve the public in assessing AI tools for public services. These participatory methods add process overhead but may prove essential for building the trust necessary for successful deployment.

Government Procurement Challenges

Public sector procurement prioritises transparency and value for money. Standard commercial pricing models don’t always align with these requirements.

Fixed-price contracts provide budget certainty but may not accommodate the variable nature of token-based pricing. Some government departments negotiate hybrid arrangements with usage caps and overage provisions.

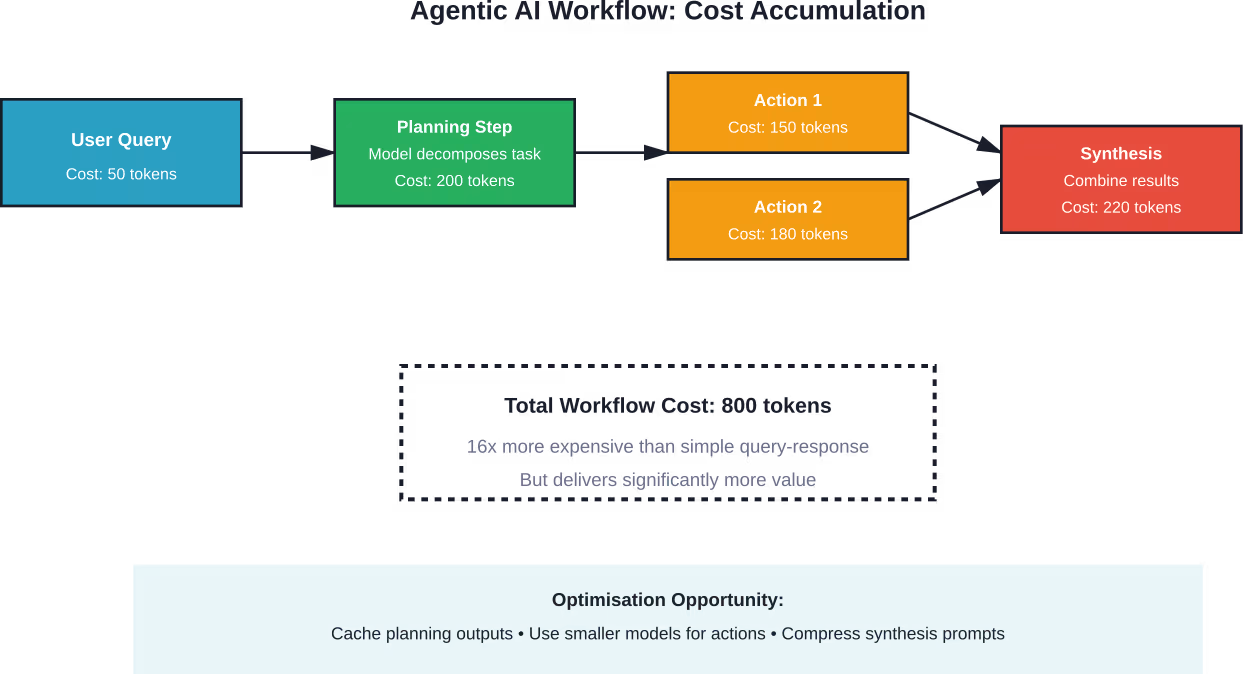

Agentic AI Systems and Multi-Step Workflows

Agentic AI systems that handle multi-step tasks represent the next frontier of complexity—and cost management challenges.

These systems make multiple LLM calls to complete single user requests. Each step consumes tokens. Complex workflows can quickly multiply costs compared to simple query-response patterns.

Human-in-the-Loop workflows add another dimension. While they improve accuracy and build trust, they also introduce human labour costs alongside computational expenses.

The Regulatory Genome Project research on HITL systems emphasises that evaluation must consider total end-to-end efficiency. A model that’s slightly more expensive per query might reduce overall costs if it eliminates human review steps.

Future Cost Trends and Predictions

LLM costs have generally trended downward as competition increases and efficiency improves. But predicting future pricing remains challenging.

Several factors point toward continued cost reductions. Model compression techniques improve efficiency. Hardware advances reduce computational requirements. Increased competition pressures providers to lower prices.

However, demand for more sophisticated capabilities could offset these savings. As organisations deploy LLMs for increasingly complex tasks, they may consume more tokens per interaction even as per-token prices decline.

The Open Source Factor

Open source LLMs introduce competitive pressure on commercial pricing. Organisations willing to manage their own infrastructure can potentially reduce costs significantly.

But self-hosting isn’t free. Hardware, energy, maintenance, and expertise all have costs. For many UK businesses, commercial APIs remain more economical than self-hosted alternatives, particularly at lower usage volumes.

Research from the Generative AI Laboratory at the University of Edinburgh highlights the strain AI puts on open access resources. The cost of providing free resources is skyrocketing, creating tension between the ideals of openness and economic sustainability.

Budgeting and Cost Forecasting Best Practices

Effective LLM cost management starts with realistic budgeting. But many organisations struggle to forecast expenses accurately.

Establishing Usage Baselines

Before committing to production deployment, run extended pilots. Track actual token consumption across representative workloads. Usage patterns often differ dramatically from initial estimates.

Consider seasonal variations. Many business applications show cyclical usage patterns. Budget for peak periods, not just averages.

Building in Buffer and Contingency

Token consumption can spike unexpectedly. System bugs, user behaviour changes, or expanded use cases can all drive costs above projections.

Generally speaking, adding 20-30% contingency to LLM budgets provides reasonable protection against overruns. Tighter contingencies work for mature deployments with established usage patterns.

Regular Review and Optimisation Cycles

LLM costs aren’t set-and-forget. Regular reviews identify optimisation opportunities. Query patterns change. New, more efficient models become available. Pricing structures evolve.

Quarterly cost reviews work well for most organisations. High-volume users may benefit from monthly analysis.

Cut LLM Costs Before You Commit

LLM costs in the UK often escalate at the data and training stages, especially when models are built without a clear structure. AI Superior focuses on building and deploying LLM systems end-to-end, starting with data collection, preprocessing, and model design through to training and fine-tuning. Instead of treating these as separate steps, the work is aligned from the start, which helps avoid rework and keeps budgets more predictable.

The team typically works with companies that need production-ready systems, not experiments – combining AI consulting with full development to match models to real business use cases. If you are planning an LLM project or trying to control costs across data, training, and deployment, it makes sense to get a second opinion early. Reach out to AI Superior to review your approach before costs lock in.

Frequently Asked Questions

How much do LLMs typically cost UK businesses per month?

Costs vary enormously based on usage volume and model selection. Small businesses running basic chatbots might spend £50-200 monthly. Mid-sized organisations with multiple applications typically see £500-5,000 in monthly costs. Large enterprises with extensive deployments can spend tens of thousands monthly. Token-based pricing means costs scale directly with usage, making generalisations difficult without knowing specific workload characteristics.

Are subscription plans or pay-as-you-go pricing better for UK companies?

It depends on usage predictability. Subscriptions work well for consistent, predictable workloads and provide budget certainty. Pay-as-you-go suits variable usage patterns, testing environments, and organisations validating demand. Many UK businesses use hybrid approaches—base subscriptions for predictable work with pay-as-you-go coverage for overflow. Analyse historical usage patterns to determine which model aligns with actual consumption patterns.

Can smaller models reduce costs without sacrificing quality?

Absolutely. Research from Cambridge confirms specialised models often outperform general models for specific tasks while costing less. Not every query needs the largest available model. Simple classification, routine customer service responses, and straightforward data extraction work well with smaller models. Reserve premium models for complex reasoning tasks that genuinely require advanced capabilities. Testing different models against actual workloads identifies optimal cost-performance balances.

What hidden costs should UK organisations budget for beyond API charges?

Integration and development time represent significant upfront costs. API management and monitoring tools add ongoing expenses. Testing and development environments consume tokens separately from production usage. Human review costs for Human-in-the-Loop workflows can exceed computational expenses. Training staff to work effectively with LLMs requires time investment. Finally, organisations should budget for periodic optimisation work to prevent cost creep as usage scales.

How can the University of Manchester’s 90% cost reduction be applied?

The LangVAE and LangSpace frameworks specifically address control and testing resource demands. Organisations prioritising explainability and reliability—particularly in regulated sectors—can adopt these frameworks to compress language representations. This makes examining and adjusting LLM behaviour dramatically more efficient. While the frameworks target specific aspects of LLM operations rather than general inference costs, they can substantially reduce total cost of ownership for organisations requiring rigorous testing and control mechanisms.

What cost benchmarks should UK businesses use for different sectors?

Financial services typically see higher per-employee LLM costs due to compliance, fraud detection, and document processing applications. Healthcare organisations face complex queries requiring sophisticated models, driving up per-interaction costs but handling lower volumes. Retail and e-commerce often run high-volume, lower-complexity workloads with modest per-query costs. Professional services like legal and consulting show variable patterns—some firms use LLMs extensively for research and drafting, others minimally. Rather than sector benchmarks, focus on use-case-specific metrics aligned with business value delivered.

Should UK organisations consider self-hosting open source models?

Self-hosting makes sense for specific scenarios: very high usage volumes where per-token costs become prohibitive, strict data sovereignty requirements, or need for extensive model customisation. However, self-hosting requires hardware investment, energy costs, maintenance expertise, and ongoing model updates. For most UK businesses below 10 million tokens monthly, commercial APIs remain more economical. Organisations should calculate total cost of ownership including infrastructure, personnel, and opportunity costs before committing to self-hosted deployments.

Conclusion

LLM costs in the UK continue evolving as the technology matures and adoption expands. Understanding pricing models, optimising usage, and selecting appropriate models all contribute to cost management.

The Manchester research demonstrating 90% reductions in control resource demands shows that breakthrough efficiency gains remain possible. As frameworks and techniques advance, organisations willing to invest in optimisation can achieve substantial savings.

But cost shouldn’t be the only consideration. The Regulatory Genome Project research on HITL systems reminds us that total end-to-end efficiency matters more than isolated per-token pricing. A slightly more expensive model that eliminates human review steps or reduces error rates may deliver better overall value.

For UK businesses evaluating LLM adoption, start with clear use cases and realistic usage projections. Run pilots to establish baselines. Implement monitoring from day one. And remember—the most expensive mistake isn’t overspending on tokens. It’s deploying systems that don’t deliver business value.

Ready to optimize your LLM costs? Begin by auditing current usage patterns, identifying quick wins through prompt optimisation, and evaluating whether specialised models might serve high-volume use cases more efficiently. The right strategy balances cost efficiency with the value these powerful tools can deliver.