Key Points: AI data annotation costs range from $4-12 per hour for basic tasks, with pricing models including hourly rates, per-unit fees, and project-based contracts. Costs vary by annotation complexity, required expertise, geographic location, quality requirements, and project scale, with industry data showing organizations waste up to 95% of annotations due to inefficient processes.

The foundation of every successful AI model isn’t complex algorithms or massive compute clusters. It’s annotated data. Clean, precise, labeled information that teaches machines what to recognize.

But here’s the catch—data annotation isn’t cheap. And if pricing models aren’t understood properly, costs spiral fast.

In 2026, organizations face mounting pressure to balance quality with budget constraints. Recent market forecasts show the global data annotation market continues to expand, driven by demand for computer vision, natural language processing, and autonomous systems.

The question isn’t whether annotation costs matter. It’s how to predict, control, and optimize them without sacrificing the quality that determines model performance.

Understanding AI Data Annotation Pricing Models

Annotation pricing isn’t one-size-fits-all. Different project types demand different billing structures, and choosing wrong creates budget overruns.

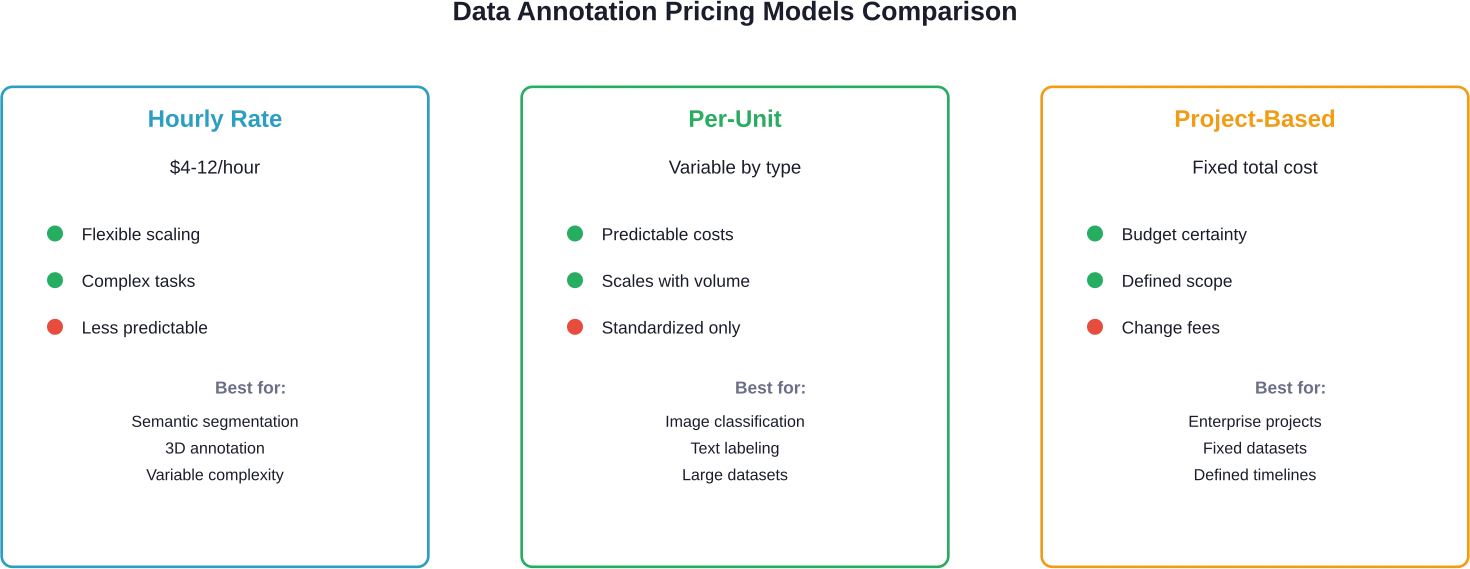

Three primary models dominate the market. Each has distinct advantages depending on project scope, timeline, and complexity.

Hourly Rate Pricing

This model bills based on annotator time rather than output volume. Rates typically range from $4 to $12 per hour, varying by annotator expertise and geographic location.

Hourly pricing works best for complex, variable tasks where annotation time per unit fluctuates significantly—think detailed semantic segmentation or 3D image annotation. When task complexity makes output prediction difficult, hourly rates provide flexibility.

The trade-off? Less predictable total costs for large datasets.

Per-Unit Pricing

Pay-per-annotation models charge fixed rates for each completed item—per bounding box, per labeled image, per transcribed audio segment.

This approach suits standardized tasks with consistent complexity. Simple image classification or basic text labeling benefits from per-unit models because costs scale predictably with dataset size.

Providers typically offer tiered per-unit rates based on volume commitments.

Project-Based Contracts

Fixed-price agreements cover entire annotation projects from start to finish. Vendors quote total costs upfront based on dataset size, annotation type, and quality requirements.

Project-based pricing reduces billing uncertainty but requires detailed scope definition. Changes mid-project often trigger additional fees.

Large enterprises with well-defined datasets favor this model for budget predictability.
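To make the trade-off between hourly and per-unit billing concrete, here is a minimal Python sketch. The dataset size, the $0.25 per-image rate, and the throughput scenarios are illustrative assumptions, not market quotes; only the $12 hourly figure comes from the range quoted above.

```python
def hourly_cost(items: int, items_per_hour: float, hourly_rate: float) -> float:
    """Total cost when billed for annotator time."""
    return items / items_per_hour * hourly_rate


def per_unit_cost(items: int, unit_rate: float) -> float:
    """Total cost when billed per completed annotation."""
    return items * unit_rate


ITEMS = 10_000        # hypothetical dataset size
HOURLY_RATE = 12.0    # top of the $4-12/hour range quoted above
UNIT_RATE = 0.25      # assumed per-image rate, for illustration only

# Throughput is the unknown under hourly billing, so try a few scenarios.
for items_per_hour in (30, 60, 120):
    total = hourly_cost(ITEMS, items_per_hour, HOURLY_RATE)
    print(f"hourly @ {items_per_hour} items/h: ${total:,.2f}")

print(f"per-unit: ${per_unit_cost(ITEMS, UNIT_RATE):,.2f}")
```

Under these assumed numbers, hourly billing only beats the per-unit quote if annotators sustain more than 48 items per hour. That dependence on throughput is why hourly pricing carries more budget risk on large datasets.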

Key Factors Driving Annotation Costs

Understanding pricing models isn’t enough. Multiple variables influence final costs, and several aren’t obvious upfront.

Annotation Complexity and Type

Simple tasks cost less. Period.

Basic image classification—labeling photos as “cat” or “dog”—requires minimal time and expertise. Bounding box annotation costs more because annotators draw precise rectangles around objects.

Semantic segmentation? That’s another level entirely. Pixel-level masks require meticulous work, driving up both time and cost. 3D annotation for autonomous vehicle training sits at the premium end, demanding specialized skills and tools.

Text annotation follows similar patterns. Simple sentiment labeling is cheaper than named entity recognition, which costs less than complex relationship extraction.

Required Expertise and Specialization

Generalist data annotation (basic labeling, ranking, guideline-based tasks) sits on the lower end. Community discussions put rates for this work at roughly $8-20 per hour.

Domain expertise changes everything. Medical image annotation requires radiologists or trained technicians who understand anatomical structures. Legal document classification needs annotators familiar with legal terminology and concepts.

Specialized knowledge commands premium rates, sometimes doubling or tripling costs compared to generalist work.

Geographic Location

Where annotation happens significantly impacts pricing. Labor costs vary dramatically across regions.

North American and Western European annotators typically charge higher rates than other regions for complex tasks. Eastern European teams offer mid-tier pricing. Southeast Asian and African markets provide cost-effective options while maintaining quality through proper training and quality control.

Remote work expansion has increased access to global talent pools, allowing organizations to balance cost and quality across geographies.

Quality Requirements and Validation

Higher accuracy demands cost more. When models need 95%+ annotation precision, multi-layer quality assurance becomes necessary.

Quality assurance typically involves multiple annotators labeling the same data, expert review of discrepancies, and validation rounds. Each QA layer adds 20-40% to base costs.
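As a rough illustration of how QA layers add up, the sketch below treats each layer as a flat uplift on the base cost, using the 20-40% range above. The $10,000 base spend is a hypothetical figure, and real vendors may price QA differently.

```python
def cost_with_qa(base_cost: float, qa_layers: int, uplift_per_layer: float) -> float:
    """Base annotation cost plus a fixed uplift (fraction of base) per QA layer."""
    return base_cost * (1 + qa_layers * uplift_per_layer)


BASE = 10_000.0  # hypothetical base annotation spend

for layers in (0, 1, 2):
    low = cost_with_qa(BASE, layers, 0.20)
    high = cost_with_qa(BASE, layers, 0.40)
    print(f"{layers} QA layer(s): ${low:,.0f} to ${high:,.0f}")
```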

Research on crowd annotations shows that aggregating soft labels from multiple annotators can improve both predictive performance and uncertainty estimation—but requires paying multiple people for the same work.
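One simple way to build a soft label is to turn annotator votes into a probability distribution over classes. The sketch below assumes plain vote counting; the research mentioned above may use more sophisticated aggregation, and the function name is made up for illustration.

```python
from collections import Counter


def soft_label(votes: list[str], classes: list[str]) -> dict[str, float]:
    """Convert annotator votes for one item into per-class probabilities."""
    if not votes:
        raise ValueError("at least one vote is required")
    counts = Counter(votes)
    return {c: counts[c] / len(votes) for c in classes}


# Three annotators labeled the same image; two said "cat", one said "dog".
label = soft_label(["cat", "cat", "dog"], classes=["cat", "dog"])
print(label)
```

The disagreement is kept as a 2/3 versus 1/3 split instead of being collapsed into a single "cat" label, at the price of three paid annotations for one item.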

Dataset Size and Project Scale

Volume creates leverage. Large projects typically unlock volume discounts—sometimes 15-30% off base rates.

But scale also introduces complexity. Coordinating dozens or hundreds of annotators requires project management infrastructure. Training costs for specialized tasks get amortized across more units in large projects, reducing per-unit impact.

Small pilot projects pay premium per-unit costs because setup overhead gets distributed across fewer items.
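The interaction between setup overhead and volume discounts is easy to see with a little arithmetic. In this sketch the $0.30 base rate and $2,000 setup cost are assumptions; the 15% discount is the low end of the range mentioned above.

```python
def effective_unit_cost(items: int, base_unit_rate: float,
                        setup_cost: float, discount: float = 0.0) -> float:
    """Per-item cost after the volume discount, with setup spread over all items."""
    return base_unit_rate * (1 - discount) + setup_cost / items


BASE_RATE = 0.30   # assumed per-item rate
SETUP = 2_000.0    # assumed one-off cost for guidelines, training, tooling

pilot = effective_unit_cost(1_000, BASE_RATE, SETUP)
at_scale = effective_unit_cost(100_000, BASE_RATE, SETUP, discount=0.15)

print(f"pilot (1,000 items):      ${pilot:.3f} per item")
print(f"scale (100,000 items):    ${at_scale:.3f} per item")
```

With these inputs, most of the pilot's per-item cost is setup overhead rather than labeling, which is the effect described above.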

| Cost Factor | Impact Level | Typical Range | Optimization Strategy |
|---|---|---|---|
| Annotation complexity | Very High | 2-10x variation | Pre-label with automation where possible |
| Required expertise | High | 1.5-3x variation | Mix expert review with generalist annotators |
| Geographic location | High | 2-4x variation | Offshore for non-sensitive data |
| Quality requirements | Medium-High | +20-60% cost | Risk-based QA (sample validation vs. full) |
| Dataset volume | Medium | 15-30% discount at scale | Batch work to unlock volume pricing |

Reduce AI Data Annotation Waste with Expert Guidance from AI Superior

Annotation costs depend on dataset size, complexity, quality control requirements, and domain expertise. AI Superior helps companies define annotation strategies that balance accuracy and budget.

They provide:

- Annotation workflow design

- Quality assurance framework

- Tool selection guidance

- Data validation before model training

Before allocating large budgets to labeling, work with AI Superior to define exactly what data is required and avoid unnecessary annotation spend.

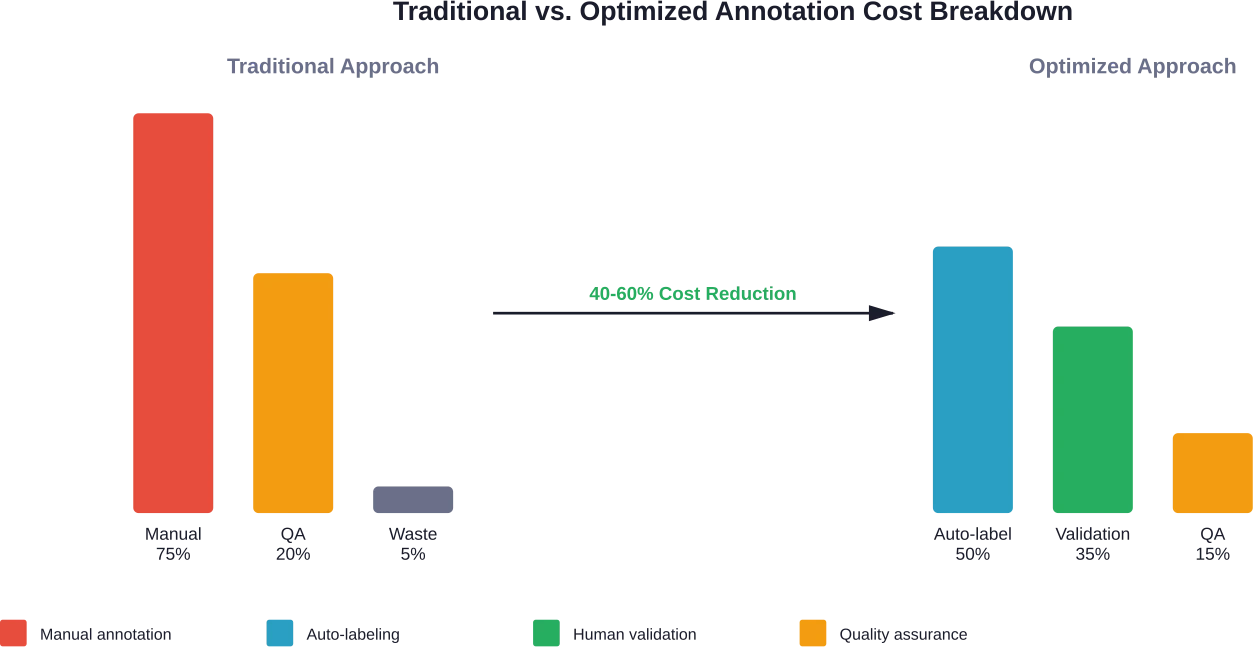

The Hidden Cost Crisis: Wasted Annotations

Here’s something most organizations don’t talk about: waste.

Organizations spend significant resources manually creating data annotations they never use. By some estimates, organizations never use 95% of their data annotations, representing massive resource waste.

One organization reportedly throws away 499 out of every 500 annotations. Let that sink in.

Why does this happen? Several reasons compound:

- Teams over-annotate datasets without clear model requirements.

- Product requirements change mid-project, making existing annotations irrelevant.

- Annotation quality issues force complete re-work.

- Models achieve acceptable performance with far less data than initially annotated.

This waste represents not just monetary loss but opportunity cost—budgets consumed by unnecessary annotation can’t fund model development or infrastructure improvements.
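Waste changes the real price of every annotation that does get used. The sketch below divides the price paid by the fraction of annotations that reach a model; the $0.10 unit cost is a hypothetical figure, while the two utilization rates come from the estimates above.

```python
def cost_per_used_annotation(unit_cost: float, fraction_used: float) -> float:
    """Effective cost of each annotation that actually reaches a model."""
    if not 0 < fraction_used <= 1:
        raise ValueError("fraction_used must be in (0, 1]")
    return unit_cost / fraction_used


UNIT_COST = 0.10  # hypothetical price paid per annotation

# 95% unused means 5% used; 499 of 500 discarded means 1 in 500 used.
for label, used in (("95% unused", 0.05), ("499 of 500 discarded", 1 / 500)):
    cost = cost_per_used_annotation(UNIT_COST, used)
    print(f"{label}: ${cost:.2f} per used annotation")
```

At 5% utilization each useful annotation effectively costs 20 times its sticker price; at 1 in 500 it costs 500 times.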

Auto-Labeling and Efficiency Improvements

Technology is shifting the economics. Auto-labeling uses pre-trained models to generate initial annotations that humans then verify and correct.

This approach can reduce annotation time by 50-80% for suitable tasks. Instead of annotating from scratch, workers validate machine-generated labels—faster and cheaper.

Active learning techniques identify which samples most benefit model training, allowing selective annotation of high-value data points rather than exhaustive labeling. Some research reports acceptable model performance with less than 8% of the data labeled when samples are selected this way, though results depend on the task and dataset.

But here’s the thing—automation works best for established use cases with pre-trained models available. Novel tasks or unique domains still require substantial manual effort.
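A common active learning strategy is uncertainty sampling: send the items the current model is least sure about to annotators first. The sketch below ranks items by prediction entropy. The image IDs and probabilities are invented, and this is one of several selection strategies, not necessarily the method behind the figures cited above.

```python
import math


def entropy(probs: list[float]) -> float:
    """Shannon entropy of a predicted class distribution (higher = less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions: dict[str, list[float]], budget: int) -> list[str]:
    """Return the IDs of the `budget` most uncertain items."""
    ranked = sorted(predictions, key=lambda item: entropy(predictions[item]), reverse=True)
    return ranked[:budget]


# Hypothetical model outputs for four unlabeled images (two classes).
predictions = {
    "img_001": [0.98, 0.02],
    "img_002": [0.55, 0.45],
    "img_003": [0.70, 0.30],
    "img_004": [0.50, 0.50],
}

print(select_for_annotation(predictions, budget=2))  # ['img_004', 'img_002']
```

The confidently predicted `img_001` is left unlabeled, so annotation budget goes to the two items where a human label is most likely to change the model.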

In-House vs. Outsourcing: Cost Comparison

Building internal annotation teams versus outsourcing represents a fundamental budget decision. Neither option universally wins—context matters.

In-House Annotation Costs

Internal teams provide control and domain integration. Annotators sit alongside data scientists, enabling rapid feedback cycles and deep understanding of model requirements.

But full costs include more than salaries. Factor in recruitment, training, management overhead, annotation tools, workspace, and benefits. Total annual cost per annotator for internal teams can be substantial in developed markets.

For organizations with continuous annotation needs and sensitive data that can’t leave internal systems, in-house teams make sense. Upfront investment amortizes across ongoing projects.

Outsourcing Economics

Outsourcing converts fixed costs into variable expenses. Pay only for actual annotation work without maintaining permanent staff during slow periods.

Specialized vendors provide trained teams, established workflows, and quality frameworks. Ramp-up happens faster—vendors can deploy dozens of annotators in days versus months for internal hiring.

Trade-offs include less direct control, potential communication friction, and data security considerations. Highly confidential datasets may face restrictions on external sharing.

Many organizations adopt hybrid models—maintaining small internal teams for sensitive work while outsourcing high-volume, standardized tasks.
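A quick break-even calculation helps frame the in-house versus outsourcing decision. Every number below is an assumption for illustration (team size, fully loaded cost per annotator, vendor rate); substitute real figures before drawing conclusions.

```python
def in_house_annual_cost(annotators: int, loaded_cost_per_annotator: float) -> float:
    """Fixed yearly cost of an internal team (salary, benefits, tools, management)."""
    return annotators * loaded_cost_per_annotator


def break_even_hours(fixed_annual_cost: float, vendor_hourly_rate: float) -> float:
    """Annual annotation hours above which the fixed team costs less than a vendor."""
    return fixed_annual_cost / vendor_hourly_rate


fixed = in_house_annual_cost(annotators=2, loaded_cost_per_annotator=20_000.0)
hours = break_even_hours(fixed, vendor_hourly_rate=12.0)

print(f"in-house fixed cost: ${fixed:,.0f} per year")
print(f"break-even volume:   {hours:,.0f} vendor hours per year")
```

Below the break-even volume, outsourcing is cheaper on paper; above it, the internal team wins, provided it can actually deliver that many hours. The calculation ignores control, security, and ramp-up speed, which often decide the question.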

Budgeting for Data Annotation Projects

Accurate budget forecasting prevents mid-project surprises. Several steps improve estimation accuracy.

Define Scope Precisely

Vague requirements generate vague quotes. Specify annotation types needed, expected quality levels, dataset size, and timeline constraints.

Create annotation guidelines early—even rough drafts help vendors assess complexity. Sample datasets reveal edge cases that impact effort estimates.

Account for Hidden Costs

Budget line items beyond base annotation include:

- Guideline development and refinement

- Annotator training on domain-specific requirements

- Quality assurance and inter-annotator agreement testing

- Re-work for failed validation batches

- Project management and communication overhead

- Tool licenses if using specialized annotation platforms

Add contingency to initial estimates, because annotation projects consistently uncover unexpected complexity.
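The hidden costs and contingency above can be rolled into a single estimate. In this sketch every dollar amount and the 15% contingency rate are placeholder assumptions; the line items mirror the hidden costs named in this section.

```python
def project_budget(base_annotation: float, overheads: dict[str, float],
                   contingency: float) -> float:
    """Base annotation plus overhead line items, with contingency on the subtotal."""
    subtotal = base_annotation + sum(overheads.values())
    return subtotal * (1 + contingency)


# Hypothetical overhead line items, in dollars.
overheads = {
    "guideline_development": 1_500.0,
    "annotator_training": 1_000.0,
    "quality_assurance": 3_000.0,
    "rework": 1_000.0,
    "project_management": 1_500.0,
    "tool_licenses": 500.0,
}

total = project_budget(10_000.0, overheads, contingency=0.15)
print(f"estimated budget: ${total:,.0f}")
```

In this made-up example the overheads nearly double the base annotation spend before contingency is applied, which is why quoting on the base rate alone tends to under-budget.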

Pilot Before Scaling

Small pilot batches—500-1,000 items—reveal actual annotation rates and quality levels before committing to full datasets. Pilots cost more per-unit but prevent expensive mistakes at scale.

Use pilot results to refine guidelines, adjust quality processes, and recalibrate budget estimates based on observed performance.
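Pilot measurements can be turned directly into a full-project estimate. The sketch below assumes throughput observed in the pilot holds at scale and that re-work adds a flat percentage of extra hours; the pilot figures, rate, and re-work share are all hypothetical.

```python
def extrapolate_from_pilot(pilot_items: int, pilot_hours: float, hourly_rate: float,
                           full_items: int, rework_rate: float = 0.0) -> float:
    """Project full-dataset cost from observed pilot throughput."""
    items_per_hour = pilot_items / pilot_hours
    hours = full_items / items_per_hour * (1 + rework_rate)
    return hours * hourly_rate


# Hypothetical pilot: 1,000 items took 25 annotator hours at $12/hour.
estimate = extrapolate_from_pilot(
    pilot_items=1_000, pilot_hours=25.0, hourly_rate=12.0,
    full_items=100_000, rework_rate=0.10,
)
print(f"projected full-dataset cost: ${estimate:,.0f}")
```

Throughput usually shifts once annotators gain experience or hit rarer edge cases, so treat the result as a starting point for the recalibrated budget, not a final quote.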

Consider Iterative Funding

Instead of annotating entire datasets upfront, phase annotation with model development. Annotate minimum viable datasets, train initial models, then annotate additional data based on model performance and error analysis.

Active learning identifies which additional samples matter most, preventing waste on annotations that won’t improve model accuracy.

| Annotation Approach | Typical Cost per 1,000 Images | Timeline | Best Use Case |
|---|---|---|---|
| Simple classification | $50-$150 | 1-2 days | Category labeling, content moderation |
| Bounding boxes | $200-$600 | 3-5 days | Object detection, retail inventory |
| Semantic segmentation | $800-$2,500 | 1-2 weeks | Autonomous vehicles, medical imaging |
| 3D annotation | $2,000-$6,000 | 2-3 weeks | LIDAR, robotics, spatial mapping |
| Video annotation | $1,500-$4,000 | 1-3 weeks | Action recognition, surveillance |
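The per-1,000-image ranges in the table can be scaled to any dataset size. This sketch assumes costs scale linearly, which ignores volume discounts and setup overhead, so it gives a first-pass range only. The dictionary keys are names chosen for this example.

```python
# Typical cost per 1,000 images (low, high) in dollars, copied from the table above.
COST_PER_1000 = {
    "simple_classification": (50, 150),
    "bounding_boxes": (200, 600),
    "semantic_segmentation": (800, 2500),
    "3d_annotation": (2000, 6000),
    "video_annotation": (1500, 4000),
}


def estimate_range(annotation_type: str, images: int) -> tuple[float, float]:
    """Scale the table's per-1,000 range linearly to the given image count."""
    low, high = COST_PER_1000[annotation_type]
    return low * images / 1000, high * images / 1000


for kind in COST_PER_1000:
    low, high = estimate_range(kind, 10_000)
    print(f"{kind}: ${low:,.0f} to ${high:,.0f} for 10,000 images")
```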

Selecting the Right Annotation Partner

For organizations outsourcing annotation, vendor selection impacts both cost and quality outcomes. Several criteria matter beyond price.

Quality Assurance Processes

Ask how vendors measure and maintain quality. Multi-layer validation, inter-annotator agreement metrics, and expert review cycles indicate mature processes.

Request pilot projects to evaluate actual quality before committing to large contracts. Quality issues discovered late cost far more to fix than upfront validation.
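When a vendor reports inter-annotator agreement, Cohen's kappa is one widely used metric for two annotators: it measures agreement beyond what chance alone would produce. The sketch below is a plain-Python version with invented labels; vendors may report other metrics, so ask which one they use.

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    # Share of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


annotator_a = ["cat", "cat", "dog", "dog", "cat", "dog"]
annotator_b = ["cat", "dog", "dog", "dog", "cat", "dog"]
print(f"kappa = {cohens_kappa(annotator_a, annotator_b):.3f}")
```

Here the annotators agree on 5 of 6 items, but half of that agreement would be expected by chance, so kappa comes out at about 0.667. Raw percent agreement alone would overstate quality.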

Domain Expertise

Generalist annotation firms handle standard tasks well. Specialized domains—medical, legal, scientific—benefit from vendors with relevant experience and trained annotators.

Check case studies and references from similar industries. Domain vocabulary and concept understanding significantly impact efficiency.

Scalability and Flexibility

Project needs change. Can vendors rapidly scale capacity up or down? What minimum commitments do contracts require?

Flexible engagement models prevent paying for unused capacity or waiting weeks for volume increases.

Data Security and Compliance

Sensitive datasets need vendors with appropriate security certifications—SOC 2, ISO 27001, HIPAA compliance for healthcare data, GDPR compliance for European data.

Understand where annotation happens geographically and whether that creates regulatory issues. Some industries restrict data processing locations.

Technology Platform

Modern annotation platforms accelerate work through smart tools—pre-labeling suggestions, keyboard shortcuts, automated quality checks.

Vendors using outdated interfaces cost more because annotation takes longer. Request platform demos during evaluation.

Future Trends Impacting Annotation Costs

Several emerging trends will reshape annotation economics over the next few years.

Foundation models and transfer learning reduce annotation requirements for many tasks. Models pre-trained on massive datasets require less task-specific labeled data to achieve good performance.

Synthetic data generation creates labeled training data programmatically, potentially reducing reliance on human annotation for certain computer vision applications.

Improved active learning techniques get smarter about identifying high-value samples, maximizing model improvement per annotation dollar spent.

But the flip side? More complex AI applications—multimodal models, embodied AI, nuanced language understanding—demand richer, more sophisticated annotations. Simple labels won’t suffice.

The annotation market will likely bifurcate: commodity labeling becomes increasingly automated and cheap, while specialized, high-quality annotation for cutting-edge applications commands premium pricing.

Frequently Asked Questions

What’s the average cost of data annotation per hour?

Data annotation costs typically range from $4 to $12 per hour for basic work, varying by geographic location and task complexity. Specialized annotation requiring domain expertise can reach higher rates in developed markets. Rates depend significantly on annotation type, quality requirements, and annotator skill level.

How much does it cost to annotate 10,000 images?

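Image annotation costs depend heavily on complexity and vary by provider and project specifics. Scaling the typical per-1,000-image rates in this article linearly, 10,000 images would run roughly $500-$1,500 for simple classification, $2,000-$6,000 for bounding boxes, $8,000-$25,000 for semantic segmentation, and $20,000-$60,000 for 3D annotation, before any volume discounts.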

Is in-house annotation cheaper than outsourcing?

Not necessarily. While hourly rates may appear lower for internal staff, total cost of in-house teams includes salaries, benefits, training, management, tools, and workspace—which can be substantial. Outsourcing converts these fixed costs to variable expenses and provides faster scaling. In-house makes sense for continuous needs and sensitive data; outsourcing works better for variable workloads.

How can organizations reduce annotation costs without sacrificing quality?

Several strategies reduce costs while maintaining quality: use auto-labeling with human validation to cut manual effort by 50-80%, apply active learning to annotate only high-value samples, implement risk-based quality assurance instead of reviewing everything, start with pilot projects to refine processes, and leverage geographic arbitrage by working with quality offshore teams.

What percentage of annotation budget should go to quality assurance?

Quality assurance typically adds 20-40% to base annotation costs depending on accuracy requirements. Mission-critical applications needing 95%+ precision may allocate 40-60% of budget to multi-layer validation. Less critical applications can use sampling-based QA at 15-25% of total cost. Balance QA investment against downstream cost of errors in production models.

Why do organizations waste so much annotated data?

Industry estimates suggest organizations never use 95% of their annotations due to several factors: over-annotation without clear model requirements, changing product specifications mid-project, quality issues forcing re-work, and models achieving acceptable performance with less data than anticipated. Better planning, iterative annotation aligned with model development, and active learning approaches reduce this waste.

Should annotation costs be considered a one-time or ongoing expense?

Most AI applications require ongoing annotation. Models need updates as concepts evolve, edge cases emerge, and business requirements change. Budget for continuous annotation at 20-40% of initial dataset creation costs annually. Applications in rapidly changing domains need higher ongoing annotation budgets to maintain model relevance and accuracy.

Making Smart Annotation Investment Decisions

Data annotation costs represent significant AI development expenses, but smart approaches prevent budget overruns while maintaining quality.

The key insights: costs vary dramatically with task complexity, required expertise, and quality standards. Geographic location, project scale, and vendor selection all impact the final bill. Organizations waste massive resources on unnecessary annotations through poor planning.

Successful annotation strategies combine automation where appropriate with targeted human validation. Pilot projects validate assumptions before scaling. Iterative approaches aligned with model development prevent over-annotation.

Most importantly—annotation isn’t just a cost center. It’s foundational infrastructure determining model quality, capabilities, and business value. Cutting corners on annotation quality to save costs often generates much larger expenses downstream through model failures, re-work, and missed opportunities.

The question isn’t how to minimize annotation costs. It’s how to optimize annotation investment for maximum model performance per dollar spent. Organizations that understand this distinction build better AI systems more efficiently.

Ready to optimize your annotation budget? Start with a small pilot project, measure actual costs and quality, then scale based on data rather than assumptions. The most expensive annotation mistake is committing to large-scale work before validating your approach.