Quick Summary: The LangChain framework itself is free and open-source, but developing LLM applications involves costs for LLM API calls (typically $0.25-$75 per million tokens), the LangSmith observability platform ($0-$39+ per seat monthly), infrastructure hosting, and developer time. Total monthly costs range from near-zero for prototypes to $10,000+ for production deployments, depending on scale, model choice, and feature complexity.

Building applications with large language models has become mainstream in 2026, and LangChain remains one of the most adopted frameworks for orchestrating LLM workflows. But here’s what catches teams off guard: while the framework is free, the total cost of development and deployment involves multiple layers that aren’t always obvious upfront.

The pricing structure isn’t straightforward. LangChain is open-source, so there’s no license fee. However, teams quickly encounter costs for model API calls, observability tooling, vector databases, hosting infrastructure, and the developer hours required to build production-ready applications.

This guide breaks down every cost component for LangChain-based LLM application development in 2026, from initial prototyping through production scaling.

LangChain Framework Pricing: The Core Components

LangChain itself is completely free. The framework is open-source and available without licensing costs. This applies to both the Python and JavaScript implementations, which developers can install via pip or npm and use immediately.

The framework provides modular components for building LLM applications: chains for sequencing operations, agents for autonomous decision-making, retrievers for document search, and memory systems for conversation context. None of these core capabilities require payment to LangChain.

However, the LangChain ecosystem extends beyond the core framework. LangGraph, a library for building stateful multi-agent workflows, is also open-source and free. LangServe, which converts chains into deployable APIs, follows the same model—free to use, though deploying those APIs will require cloud infrastructure that comes with hosting costs.

LangSmith Observability Platform: Where Subscription Costs Begin

LangSmith is where teams encounter the first direct cost from the LangChain ecosystem. This platform provides tracing, debugging, evaluation, and monitoring capabilities that become essential when moving from prototype to production.

According to the official LangChain pricing page, LangSmith offers three tiers as of 2026:

| Plan | Price per Seat | Base Traces Included | Trace Retention | Best For |
|---|---|---|---|---|
| Developer | $0/month | 5,000/month | 14 days (base) | Solo developers, prototyping |
| Plus | $39/month | 10,000/month | 14 days (base), 400 days (extended) | Small teams, production apps |
| Enterprise | Custom pricing | Custom allocation | Custom retention | Large organizations, compliance needs |

The Developer plan allows one seat for free, making it viable for individual developers getting started. It includes up to 5,000 base traces monthly, with pay-as-you-go pricing beyond that threshold. Base traces cost $2.50 per 1,000 traces and have a 14-day retention period.

Extended traces, which retain data for 400 days, cost $5.00 per 1,000 traces (double the base rate). For applications requiring long-term observability and compliance audit trails, this cost can accumulate significantly.

The Plus plan at $39 per seat per month is where most production teams land. It includes 10,000 base traces monthly, unlimited Fleet agents for autonomous operations, and email support. Teams can add unlimited seats at the same per-seat rate.
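
To see how seats and trace overage combine, here is a minimal Python sketch of a monthly LangSmith estimate. It assumes every trace uses base (14-day) retention, that overage is billed pro rata at the $2.50 per 1,000 rate quoted above, and that the included trace allowance applies per month rather than per seat. The `PLANS` dictionary and the function name are illustrative, not part of any LangSmith SDK.

```python
# Illustrative list prices taken from the plan table above; confirm against
# the current LangSmith pricing page before budgeting.
PLANS = {
    "developer": {"seat_price": 0.0, "included_traces": 5_000, "max_seats": 1},
    "plus": {"seat_price": 39.0, "included_traces": 10_000, "max_seats": None},
}
BASE_TRACE_PRICE_PER_1K = 2.50  # USD per 1,000 base (14-day retention) traces


def langsmith_monthly_cost(plan: str, seats: int, base_traces: int) -> float:
    """Estimate one month's LangSmith bill in USD (hypothetical helper).

    Assumes all traces use base retention and that overage beyond the
    plan's included traces is billed pro rata per trace.
    """
    p = PLANS[plan]
    if p["max_seats"] is not None and seats > p["max_seats"]:
        raise ValueError(f"{plan} plan allows at most {p['max_seats']} seat(s)")
    overage_traces = max(0, base_traces - p["included_traces"])
    seat_cost = seats * p["seat_price"]
    overage_cost = overage_traces / 1_000 * BASE_TRACE_PRICE_PER_1K
    return seat_cost + overage_cost


# A three-person team on Plus sending 50,000 traces a month:
print(langsmith_monthly_cost("plus", seats=3, base_traces=50_000))
```

In that example the trace overage ($100) is close to the seat cost ($117), so sampling or filtering traces can matter as much to the bill as the number of seats.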

Additional LangSmith features include annotation queues for human feedback, prompt management through the Prompt Hub and Playground, and monitoring with alerting capabilities. These tools don’t carry separate costs—they’re bundled into the plan tiers.

LLM API Costs: The Largest Variable in Production Budgets

The most significant ongoing expense for LangChain applications is the cost of calling LLM APIs. This dwarfs the framework and tooling costs for any application running at scale.

LangChain supports integration with dozens of model providers through its standardized interface. Each provider has different pricing structures based on token consumption, model capability, and additional features like caching or batch processing.

2026 Model Pricing Landscape

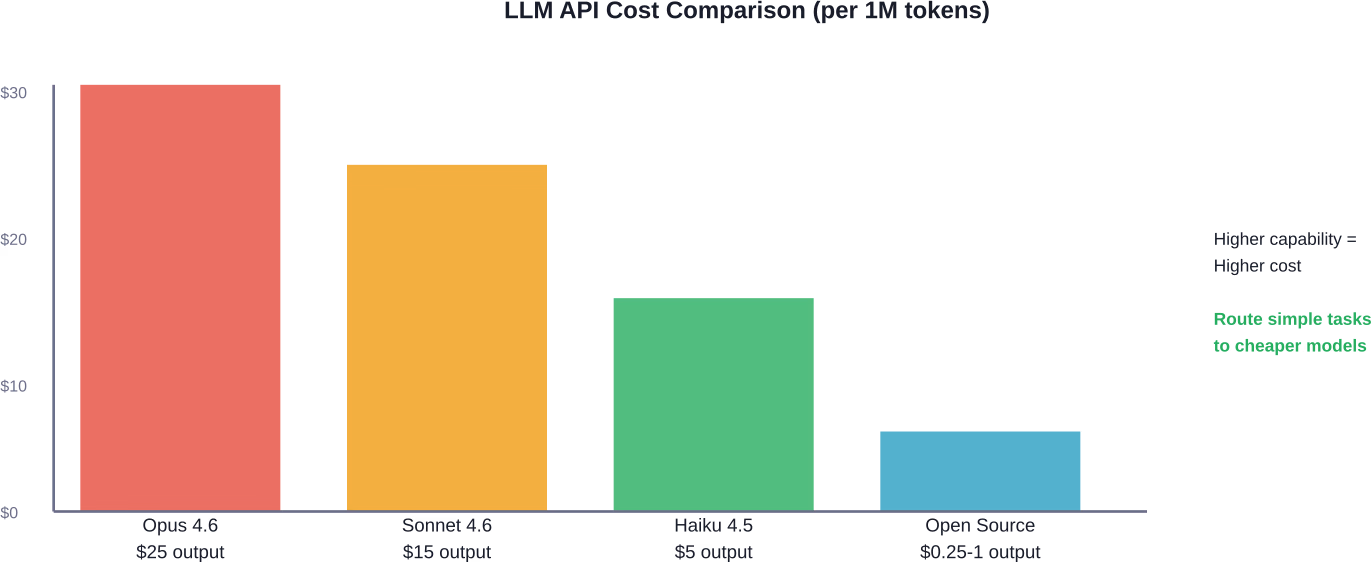

Anthropic’s Claude models represent a common choice for LangChain applications. According to recent pricing documentation, Claude models offer these representative rates per million tokens (pricing subject to change):

- Claude Opus 4.6: $5 input / $25 output

- Claude Sonnet 4.6: $3 input / $15 output

- Claude Haiku 4.5: $1 input / $5 output (older Claude Haiku 3 models: $0.25 input / $1.25 output)

Legacy models from the Claude 4.1 series show higher pricing, with Opus 4.1 at $15 input / $75 output per million tokens. The price evolution demonstrates how newer generations often deliver better performance at lower costs.
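
Because providers bill input and output tokens at different rates, it helps to compute cost per workload rather than compare headline prices. The sketch below encodes the representative Claude rates listed above, using $1 input / $5 output for Haiku 4.5. The dictionary keys are illustrative labels, not official API model identifiers, and the prices should be checked against the provider's current pricing page.

```python
# Representative USD prices per million tokens: (input, output).
# Keys are illustrative labels, not provider model identifiers.
PRICES = {
    "opus-4.6": (5.00, 25.00),
    "sonnet-4.6": (3.00, 15.00),
    "haiku-4.5": (1.00, 5.00),
    "opus-4.1-legacy": (15.00, 75.00),
}


def monthly_api_cost(model: str, queries: int, input_tokens: int, output_tokens: int) -> float:
    """USD cost of `queries` requests with the given average token counts per request."""
    input_price, output_price = PRICES[model]
    total_input = queries * input_tokens
    total_output = queries * output_tokens
    return (total_input * input_price + total_output * output_price) / 1_000_000


# 50,000 queries averaging 2,000 output tokens (input ignored for simplicity):
for model in ("haiku-4.5", "sonnet-4.6", "opus-4.6"):
    print(model, monthly_api_cost(model, 50_000, 0, 2_000))
```

For that workload the three tiers work out to $500, $1,500 and $2,500 per month respectively, before counting input tokens.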

OpenAI models follow similar token-based pricing, though specific 2026 rates vary by model generation. GPT-4 class models typically range from $2.50-$30 per million input tokens depending on capability tier and context window size.

Smaller open-source models accessed through providers like Groq or hosted on infrastructure like AWS Bedrock can reduce per-token costs substantially—sometimes by 80-95% compared to frontier models—though with corresponding capability tradeoffs.

Cost Optimization Strategies

Prompt caching can reduce input costs by up to 90% for repeated context. When applications process similar documents or reuse the same system prompt across requests, the provider caches the processed prompt prefix and bills later reads of it at a steep discount, removing most of the redundant processing cost.
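
The arithmetic behind the "up to 90%" figure can be sketched for a prompt prefix that repeats on every request. This minimal Python example assumes Anthropic-style multipliers (a cache write costs 1.25x the base input price and a cache read 0.1x) and assumes the cache never expires between requests; both are assumptions to check against your provider's current pricing.

```python
def prefix_cost(prefix_tokens: int, requests: int, input_price: float,
                cached: bool = False, write_mult: float = 1.25,
                read_mult: float = 0.10) -> float:
    """USD cost of sending the same prompt prefix on every request.

    input_price is USD per million tokens. With caching, the first request
    writes the prefix to the cache and every later request reads it.
    """
    per_request = prefix_tokens * input_price / 1_000_000
    if not cached or requests == 0:
        return per_request * requests
    first_write = per_request * write_mult
    later_reads = per_request * read_mult * (requests - 1)
    return first_write + later_reads


# A 10,000-token system prompt reused across 1,000 Sonnet-priced requests:
uncached = prefix_cost(10_000, 1_000, 3.00)
cached = prefix_cost(10_000, 1_000, 3.00, cached=True)
print(f"uncached ${uncached:.2f}, cached ${cached:.2f}, saving {1 - cached / uncached:.0%}")
```

Under these assumptions the prefix costs about $30.00 without caching and about $3.03 with it, a saving of roughly 90%.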

Batch API processing, where available, typically offers 50% discounts compared to real-time inference. Applications that can tolerate delayed responses—like document processing pipelines or nightly analysis jobs—benefit significantly from batch pricing.

Combining these strategies compounds savings: where a provider applies both discounts to the same request, they multiply rather than add. According to cost optimization research, teams using prompt caching and batch APIs together can substantially reduce total inference costs compared with standard real-time processing without caching.
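
A sketch of how the two discounts stack on a single workload. It assumes the provider applies the 50% batch discount on top of cached-input pricing (true for some providers, not necessarily all) and that cached input is billed at 10% of the list input price; `job_cost` is a hypothetical helper.

```python
BATCH_MULT = 0.5        # 50% batch discount, as quoted above
CACHE_READ_MULT = 0.10  # assumption: cached input billed at 10% of list price


def job_cost(input_tokens: int, cached_fraction: float, output_tokens: int,
             input_price: float, output_price: float, batch: bool = False) -> float:
    """USD cost of one workload; prices are USD per million tokens.

    cached_fraction is the share of input tokens served from the prompt cache.
    """
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    cost = (fresh * input_price
            + cached * input_price * CACHE_READ_MULT
            + output_tokens * output_price) / 1_000_000
    # Assumption: the batch discount applies to the whole bill, cached reads included.
    return cost * BATCH_MULT if batch else cost


# 100M input tokens (80% cacheable) and 10M output tokens at $3 / $15:
for use_cache in (False, True):
    for use_batch in (False, True):
        fraction = 0.8 if use_cache else 0.0
        cost = job_cost(100_000_000, fraction, 10_000_000, 3.00, 15.00, batch=use_batch)
        print(f"cache={use_cache!s:5} batch={use_batch!s:5} ${cost:,.2f}")
```

Under these assumptions the workload drops from $450 with neither optimization to $234 with caching, $225 with batching, and $117 with both, roughly a 74% reduction.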

Model routing represents another optimization approach. Hierarchical architectures in financial document processing achieved 97.7% of reflexive architecture accuracy at 60.9% of the cost, based on benchmarking data for specialized workflows. LangChain applications can implement similar logic to route simple queries to cheaper models while reserving expensive frontier models for complex reasoning tasks.
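
A minimal routing sketch in Python. The keyword list and word-count threshold are hypothetical heuristics chosen for illustration (a real system might use a cheap classifier model instead), and the model names are labels rather than API identifiers.

```python
from dataclasses import dataclass

# Hypothetical signals that a query needs multi-step reasoning.
COMPLEX_HINTS = ("analyze", "analyse", "compare", "reconcile", "explain why", "step by step")


@dataclass(frozen=True)
class Route:
    model: str   # illustrative model label, not an API model ID
    reason: str


def route(query: str, max_simple_words: int = 40) -> Route:
    """Pick the cheapest model tier that is plausibly good enough for the query."""
    text = query.lower()
    if any(hint in text for hint in COMPLEX_HINTS):
        return Route("opus-4.6", "complex-reasoning keyword")
    if len(text.split()) > max_simple_words:
        return Route("sonnet-4.6", "long query")
    return Route("haiku-4.5", "short, simple query")


print(route("What are your support hours?"))
print(route("Compare Q3 and Q4 cash flow and explain why margins fell."))
```

In a LangChain application, a function like this could run before the model call, for example wrapped in a `RunnableLambda`, with the chosen tier deciding which chat model to invoke.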

Infrastructure and Supporting Service Costs

Beyond the LLM APIs and LangSmith observability, LangChain applications require supporting infrastructure that adds to the total budget.

Vector Database Costs

Applications using retrieval-augmented generation require vector databases to store and search document embeddings. Popular options include Pinecone, Weaviate, Qdrant, and Chroma, each with different pricing models.

Managed vector database services typically charge based on data volume, query volume, and performance tier. Small applications might stay within free tiers offering 1GB storage and limited queries. Production deployments handling millions of vectors can incur $50-$500+ monthly depending on scale and replication requirements.
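
To judge whether a free tier is realistic, a back-of-the-envelope estimate of raw embedding storage helps. This sketch assumes float32 vectors (4 bytes per dimension) and ignores index overhead, metadata, and replicas, so real usage will be higher; 1,536 and 3,072 are the default output dimensions of OpenAI's text-embedding-3-small and text-embedding-3-large models.

```python
def raw_vector_storage_gb(num_vectors: int, dimensions: int, bytes_per_value: int = 4) -> float:
    """Raw size of the embedding values alone, in decimal gigabytes.

    Index structures, metadata and replicas add to this, so treat the
    result as a lower bound on what a vector database will bill for.
    """
    return num_vectors * dimensions * bytes_per_value / 1_000_000_000


for n in (100_000, 1_000_000):
    for dims in (1_536, 3_072):
        print(f"{n:>9,} vectors x {dims:>5,} dims: {raw_vector_storage_gb(n, dims):.2f} GB")
```

A 1GB free tier therefore holds on the order of 100,000 1,536-dimension vectors before overhead, while a million vectors needs several gigabytes.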

Self-hosted vector databases eliminate subscription costs but require infrastructure management. Running Qdrant or Chroma on cloud compute instances shifts the cost to server rental and operational overhead.

Embedding Model Costs

Generating embeddings for vector storage carries its own API costs, though typically much lower than LLM inference. OpenAI’s text-embedding-3-large costs approximately $0.13 per million tokens, while smaller embedding models cost even less.

For applications processing large document collections, embedding costs can accumulate. Processing 10 million tokens of documentation would cost roughly $1.30 with standard embedding APIs—negligible compared to LLM costs but worth tracking for budget accuracy.
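
The embedding arithmetic fits in a small helper. This sketch uses the $0.13 per million token rate quoted above as a default; the `overlap_ratio` parameter is an assumption that models the extra tokens re-embedded when a text splitter produces overlapping chunks.

```python
def embedding_cost(corpus_tokens: int, price_per_million: float = 0.13,
                   overlap_ratio: float = 0.0) -> float:
    """USD to embed a corpus once.

    overlap_ratio approximates extra tokens from overlapping chunks, e.g.
    about 0.25 for 1,000-token chunks with a 200-token overlap, since each
    new chunk advances only 800 tokens.
    """
    billed_tokens = corpus_tokens * (1 + overlap_ratio)
    return billed_tokens * price_per_million / 1_000_000


print(f"${embedding_cost(10_000_000):.2f}")                      # the 10M-token example
print(f"${embedding_cost(10_000_000, overlap_ratio=0.25):.2f}")  # with chunk overlap
```

The first call reproduces the roughly $1.30 figure above; overlap raises it proportionally but keeps it small next to LLM inference.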

Application Hosting

Deploying LangChain applications requires compute resources. Simple chatbots might run on serverless platforms like Vercel or AWS Lambda with minimal costs under $20 monthly. More complex agent systems with continuous operation and state management need persistent servers.

Cloud compute costs vary widely based on requirements. A basic containerized deployment on services like Render or Railway starts around $7-$25 monthly for small instance sizes. Production systems with auto-scaling, load balancing, and high availability can reach $200-$2,000+ monthly depending on traffic and complexity.

Development Team Investment: The Hidden Cost Component

Developer time represents a significant portion of total project cost, though it’s often overlooked when focusing on infrastructure and API expenses.

Building a basic LangChain application—a simple RAG chatbot or document Q&A system—typically requires 40-80 hours for a developer familiar with Python and LLM concepts. At standard contract rates of $75-$150 per hour, this translates to $3,000-$12,000 in development investment.

Complex multi-agent systems with custom tools, sophisticated memory management, and production-grade error handling can require 200-500+ development hours. At the same $75-$150 hourly rates, teams building proprietary agentic workflows can easily invest $15,000-$75,000 before the application processes a single production request.
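
The development estimates in this section are hour ranges multiplied by rate ranges; a tiny helper keeps them consistent when either input changes. The rates default to the $75-$150 contract range quoted above, and the function name is illustrative.

```python
def dev_cost_range(hours: tuple[float, float],
                   rate: tuple[float, float] = (75.0, 150.0)) -> tuple[float, float]:
    """Return (low, high) USD for a (min_hours, max_hours) estimate at (min_rate, max_rate)."""
    (h_low, h_high), (r_low, r_high) = hours, rate
    return h_low * r_low, h_high * r_high


print(dev_cost_range((40, 80)))    # basic RAG chatbot
print(dev_cost_range((200, 500)))  # complex multi-agent system
```

At these rates, 40-80 hours works out to $3,000-$12,000 and 200-500 hours to $15,000-$75,000.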

Ongoing maintenance adds to this total. LLM APIs evolve rapidly, with models being deprecated and new versions released frequently. Keeping applications updated, monitoring performance degradation, and optimizing prompts as model behavior shifts requires continuous developer attention.

Real-World Budget Examples: From Prototype to Production

Understanding abstract cost components is helpful, but real budget scenarios provide clearer planning guidance.

Scenario 1: Solo Developer Prototype

A single developer building a proof-of-concept document analysis tool:

- LangChain framework: $0

- LangSmith Developer plan: $0 (5,000 traces/month)

- Claude Haiku API usage (50,000 queries, avg 2k tokens output): ~$500/month

- Vector database (Chroma self-hosted): $0

- Hosting (Railway hobby tier): $0-$5/month

- Development time (60 hours self-funded): Not invoiced

Total monthly recurring cost: ~$500-$505. Initial development investment: 60 hours of developer time.

Scenario 2: Small Team Customer Support Bot

A startup building an internal customer support assistant with 3 team members:

- LangChain framework: $0

- LangSmith Plus plan (3 seats): $117/month

- Claude Sonnet API usage (200,000 queries, avg 1.5k tokens output): ~$4,500/month

- Pinecone vector database (starter plan): $70/month

- AWS hosting (containerized deployment): $150/month

- Development time (initial 200 hours across team at $75-$150/hour): $15,000-$30,000 one-time

- Ongoing optimization (10 hours/month): $750-$1,500/month

Total monthly recurring cost: ~$5,587-$6,337. Initial development investment: $15,000-$30,000.
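
Scenario totals like these are easy to get wrong by hand, so it can help to keep each line item as a (low, high) pair and sum them. This sketch encodes Scenario 2's recurring items only (the one-time development cost is deliberately left out), and the API figure counts output tokens only, as the scenario does.

```python
def monthly_total(line_items: dict[str, tuple[float, float]]) -> tuple[float, float]:
    """Sum (low, high) monthly USD estimates across line items."""
    low = sum(lo for lo, _ in line_items.values())
    high = sum(hi for _, hi in line_items.values())
    return low, high


# Output tokens only: 200,000 queries x 1,500 tokens at $15 per million.
sonnet_api = 200_000 * 1_500 * 15 / 1_000_000

scenario_2 = {
    "langsmith_plus_3_seats": (3 * 39, 3 * 39),
    "sonnet_api": (sonnet_api, sonnet_api),
    "pinecone_starter": (70, 70),
    "aws_hosting": (150, 150),
    "optimization_10h": (10 * 75, 10 * 150),
}
print(monthly_total(scenario_2))
```

This returns (5587.0, 6337.0), matching the scenario's stated range.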

Scenario 3: Enterprise Document Processing Pipeline

A large organization processing thousands of financial documents daily:

- LangChain framework: $0

- LangSmith Enterprise plan (25 seats): ~$3,000+/month (custom pricing)

- Multi-model routing (Opus for complex, Haiku for simple, batch processing): ~$15,000/month

- Vector database (managed, high availability): $800/month

- Cloud infrastructure (auto-scaling, redundancy): $2,500/month

- Development team (initial build, 500 hours): $50,000-$100,000 one-time

- Ongoing development and optimization (40 hours/month): $3,000-$6,000/month

Total monthly recurring cost: ~$24,300-$27,300. Initial development investment: $50,000-$100,000.

Cost Tracking and Budget Management

Managing LLM application costs requires visibility into token consumption patterns. LangSmith provides built-in cost tracking capabilities that integrate with LangChain applications.

According to the official cost tracking documentation, LangSmith calculates costs based on token usage metadata and configurable pricing models. Teams can set input and output prices per million tokens for each model, with support for detailed token type breakdowns.

For applications calling OpenAI, Anthropic, or models following OpenAI-compatible formats, cost tracking happens automatically when using LangChain integrations or LangSmith wrappers. The platform reads token counts from API responses and applies pricing rules to compute run costs.

Custom cost structures—like non-linear pricing or provider-specific discounts—require manual cost annotation. Teams can attach cost metadata to traces programmatically when automatic calculation doesn’t match billing reality.

The cost calculation works greedily from most-to-least specific token types. If pricing defines $2 per million input tokens with a detailed rate of $1 per million cache-read tokens, and a run uses 20 input tokens with 5 from cache, the system charges $1 per million for the 5 cached tokens and $2 per million for the remaining 15 standard inputs.
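
The greedy rule can be expressed in a few lines. This is a sketch that mirrors the description above, not LangSmith's actual implementation; the function name and the `cache_read` key are illustrative, and prices are in USD per million tokens.

```python
def input_cost(total_input_tokens: int, detail_counts: dict[str, int],
               base_price: float, detail_prices: dict[str, float]) -> float:
    """Charge detailed token types at their own rates first, the rest at the base rate.

    detail_counts should be ordered from most to least specific. Token types
    without a configured detail price fall through to the base input price.
    """
    remaining = total_input_tokens
    cost = 0.0
    for token_type, count in detail_counts.items():
        if token_type in detail_prices:
            charged = min(count, remaining)  # never charge more tokens than exist
            cost += charged * detail_prices[token_type]
            remaining -= charged
    cost += remaining * base_price
    return cost / 1_000_000


# The example above: 20 input tokens, 5 of them cache reads.
print(input_cost(20, {"cache_read": 5}, base_price=2.0, detail_prices={"cache_read": 1.0}))
```

This returns $0.000035: 5 tokens at $1 per million plus 15 tokens at $2 per million.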

Comparing LangChain to Alternative Frameworks

Cost considerations extend to framework selection. LangChain competes with alternatives like Vercel AI SDK and direct OpenAI SDK usage, each with different cost implications.

Vercel AI SDK focuses on streaming and edge deployment, optimized for Next.js applications. The framework itself is free, similar to LangChain. However, it doesn’t include built-in observability like LangSmith, requiring separate monitoring solutions that may carry their own costs.

OpenAI SDK provides direct access to OpenAI models with minimal abstraction. This eliminates framework overhead but requires custom implementation of features LangChain provides out-of-box—chain composition, memory management, tool integration. The development time savings from using LangChain often outweigh any marginal performance gains from direct SDK usage.

Semantic Kernel from Microsoft offers similar orchestration capabilities with tight Azure integration. Teams already invested in the Azure ecosystem may benefit from its built-in connections to Azure services, though the framework itself is open-source and free, like LangChain.

| Framework | Core License | Observability | Best For | Cost Profile |
|---|---|---|---|---|
| LangChain | Free/Open | LangSmith ($0-$39+/seat) | Complex agents, RAG | LLM APIs + optional tooling |
| Vercel AI SDK | Free/Open | Third-party required | Edge streaming, Next.js | LLM APIs + monitoring SaaS |
| OpenAI SDK | Free/Open | Custom implementation | Simple OpenAI-only apps | LLM APIs + dev time |
| Semantic Kernel | Free/Open | Azure Application Insights | Azure-native deployments | LLM APIs + Azure services |

Technical Debt and Long-Term Cost Considerations

Choosing a widely adopted framework like LangChain reduces the risk of building on a framework that is later abandoned.

However, rapid framework evolution introduces version management challenges. LangChain periodically releases breaking changes alongside new capabilities as the LLM landscape shifts, so teams should budget for recurring upgrade work.

Research indicates that self-admitted technical debt appears in 2.4% to 31% of the files in open-source projects, and that more experienced developers tend to introduce most of it. For LLM applications specifically, debt accumulates around prompt management, evaluation methodologies, and model version pinning, all areas where tools like LangSmith provide value but at a subscription cost.

Making the ROI Calculation

Whether LangChain development costs make financial sense depends on the business value generated. A customer support bot that handles 1,000 queries daily and reduces support ticket volume by 30% might justify $5,000 monthly in operational costs if it saves $20,000 per month in support agent salaries.

Document processing pipelines need similar analysis. If an enterprise processes 10,000 financial documents monthly and LLM automation reduces review time from 15 minutes to 3 minutes per document, the labor savings—2,000 hours monthly at $50/hour rates—would be $100,000. Even expensive model usage at $20,000 monthly shows clear ROI.
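
The document-processing calculation as a small helper. The inputs come from the example above; the function is illustrative and only weighs labor savings against model spend, ignoring other recurring costs such as hosting and tooling.

```python
def monthly_roi(documents: int, minutes_before: float, minutes_after: float,
                hourly_rate: float, monthly_llm_cost: float) -> dict[str, float]:
    """Compare labor savings from faster review with monthly LLM spend (USD)."""
    hours_saved = documents * (minutes_before - minutes_after) / 60
    labor_savings = hours_saved * hourly_rate
    return {
        "hours_saved": hours_saved,
        "labor_savings": labor_savings,
        "net_benefit": labor_savings - monthly_llm_cost,
    }


print(monthly_roi(10_000, 15, 3, 50, monthly_llm_cost=20_000))
```

With these inputs the helper reports 2,000 hours saved, $100,000 in labor savings, and an $80,000 net monthly benefit after $20,000 of model usage.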

Prototypes and MVPs require different math. Spending $5,000 on development to validate product-market fit before committing to full-scale deployment makes sense. Spending $50,000 on a prototype without user validation does not.

The key insight: LangChain and LangSmith costs are optimization problems, not fixed expenses. Teams control model selection, caching strategies, batch processing usage, and infrastructure choices. The framework provides flexibility to start cheap and scale strategically as business value proves out.

Keep LangChain Projects Efficient From Day One

LangChain often looks simple at the start, but costs tend to grow once chains, prompts, and model calls scale in real use. AI Superior works on the full LLM application layer behind frameworks like LangChain – model selection, fine-tuning, retrieval setup, and deployment. The focus is not on the framework itself, but on building systems where model calls, data flows, and infrastructure are aligned with the actual use case. That keeps token usage under control and avoids pipelines that generate unnecessary load.

Most cost issues in LangChain setups come from how the system is designed, not the tool you choose. If you want to avoid over-engineered chains and rising inference costs, contact AI Superior to review your LLM application setup before it scales.

Frequently Asked Questions

Is LangChain completely free to use?

Yes, the LangChain framework itself is open-source and free. There are no licensing costs, usage fees, or subscription requirements for the core framework, LangGraph, or LangServe. However, building applications involves costs for LLM API calls, optional LangSmith observability platform, and infrastructure hosting.

How much does LangSmith cost for production applications?

LangSmith offers a free Developer plan with 5,000 traces per month, suitable for solo developers and prototypes. The Plus plan costs $39 per seat per month and includes 10,000 traces monthly, making it appropriate for small teams and production deployments. Enterprise pricing is custom based on scale and requirements. Additional traces beyond plan limits cost $2.50-$5.00 per 1,000 traces depending on retention period.

What are typical LLM API costs for a LangChain application?

LLM API costs vary dramatically based on model choice and usage volume. Claude Haiku 4.5 costs $1 per million input tokens and $5 per million output tokens (older Haiku models start at $0.25 input), Sonnet 4.6 costs $3 input / $15 output, and Opus 4.6 costs $5 input / $25 output. A small chatbot processing 50,000 queries monthly with an average of 2,000 output tokens per query might cost $500-$2,500 monthly in output tokens alone, depending on model tier. Enterprise applications can reach $10,000-$50,000+ monthly at high scale.

Can I reduce LLM costs without sacrificing quality?

Yes, through several optimization strategies. Prompt caching can reduce costs by up to 90% for repeated context. Batch API processing offers 50% discounts for non-real-time workloads. Model routing—using cheaper models for simple tasks and expensive models only for complex reasoning—can reduce costs by 40-60% while maintaining 95%+ accuracy. Combining these techniques can achieve significant cost reduction compared to unoptimized implementations.

Do I need LangSmith to use LangChain in production?

No, LangSmith is optional. LangChain applications run perfectly fine without LangSmith subscriptions. However, LangSmith provides critical observability, debugging, and evaluation capabilities that become valuable for production deployments. Teams can start without it and add LangSmith when application complexity or scale makes debugging and monitoring difficult. The free Developer plan offers limited tracing for testing these capabilities.

How much should I budget for LangChain development?

Development costs depend on application complexity. Simple chatbots or document Q&A systems typically require 40-80 hours of development ($3,000-$12,000 at standard rates). Complex multi-agent systems with custom tools and sophisticated workflows can require 200-500+ hours ($15,000-$75,000+). Ongoing maintenance adds 5-40 hours monthly depending on scale. Monthly operational costs range from $500 for prototypes to $25,000+ for enterprise deployments, with LLM API usage representing 55-99% of recurring expenses.

Are there hidden costs I should watch for?

The most commonly overlooked costs include vector database subscriptions for RAG applications ($0-$500+ monthly), embedding API costs for document processing ($0.10-$0.30 per million tokens), infrastructure hosting beyond serverless free tiers ($20-$2,000+ monthly), and developer time for ongoing prompt optimization and model updates (10-40 hours monthly). Teams also sometimes underestimate token consumption during development and testing, which can add 20-50% to initial budget estimates.

Planning Your LangChain Development Budget

Building LLM applications with LangChain in 2026 requires understanding multiple cost layers. The framework itself eliminates licensing expenses, but the total cost of ownership includes LLM API consumption, optional observability tooling, supporting infrastructure, and developer investment.

Start with the free tier. LangChain’s open-source framework and LangSmith’s Developer plan let teams prototype and validate concepts without upfront costs. This risk-free exploration phase helps determine whether the application generates enough value to justify production investment.

Budget for scale gradually. Begin with cheaper models like Claude Haiku or smaller alternatives. Implement prompt caching and batch processing from the start. Upgrade to more capable models only for specific use cases where the quality improvement justifies the cost increase.

Track everything. Enable LangSmith cost tracking early to understand consumption patterns before they become expensive surprises. Review token usage weekly during development and daily in production. Set up budget alerts before costs exceed acceptable thresholds.
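
A simple budget alert can project month-end spend from month-to-date spend and compare it with a threshold. This sketch assumes a linear daily run rate and that the month-to-date figure comes from your own billing export or LangSmith cost data; the function names and the 80% warning level are hypothetical.

```python
import calendar
from datetime import date


def projected_month_end_spend(spend_to_date: float, today: date) -> float:
    """Linearly extrapolate month-to-date spend to the end of the month."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return spend_to_date / today.day * days_in_month


def budget_status(spend_to_date: float, today: date, monthly_budget: float,
                  warn_at: float = 0.8) -> str:
    """Return 'over', 'warn', or 'ok' based on projected month-end spend."""
    projected = projected_month_end_spend(spend_to_date, today)
    if projected >= monthly_budget:
        return "over"
    if projected >= warn_at * monthly_budget:
        return "warn"
    return "ok"


# $1,000 spent by April 10 projects to $3,000 for the 30-day month.
print(budget_status(1_000.0, date(2026, 4, 10), monthly_budget=3_500.0))
```

A linear projection overreacts to early-month spikes such as a one-off batch job, so some teams may prefer a trailing seven-day run rate once enough history exists.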

Most importantly, measure business value against technical costs. LLM applications should solve problems worth more than their operational expenses. If the math doesn’t work—if API costs exceed the value generated—no amount of optimization will make the project sustainable. But when value exceeds cost, LangChain provides the tooling to build, deploy, and scale efficiently.

Ready to start building? The LangChain framework is available now, completely free. Test the waters with the LangSmith Developer plan, choose a cost-effective model for initial experiments, and scale your investment as your application proves its value. The framework makes it possible to start today for near-zero cost and grow into production at your own pace.