Quick Summary: AI will not replace lawyers entirely, but it will fundamentally transform how legal work is performed. AI excels at automating routine tasks like document review and legal research, but the profession still requires human judgment, ethical reasoning, client relationships, and courtroom advocacy that AI cannot replicate. According to Brookings Institution research, more than 30% of all workers could see generative AI shift half or more of their work tasks, but adaptive capacity varies significantly across the legal profession.

The question isn’t new. Every technological leap—from typewriters to legal databases—has sparked fears about lawyer obsolescence. But artificial intelligence feels different.

This time, the technology doesn’t just speed up existing work. It performs tasks that previously required years of legal training. AI can analyze contracts, predict case outcomes, and draft legal documents in seconds. So the anxiety is understandable.

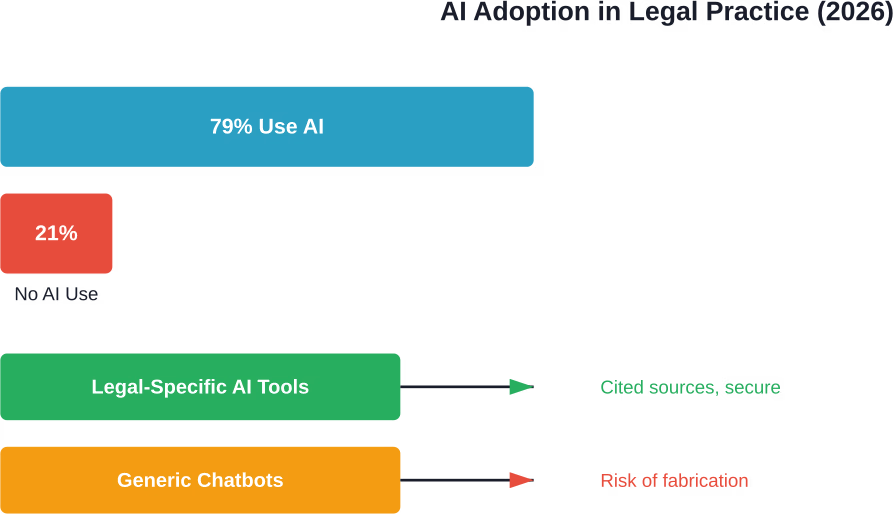

Here’s what’s actually happening: AI adoption in the legal profession has accelerated dramatically. According to the latest Legal Trends Report, 79% of legal professionals now use AI in some form. The gap between firms that embrace it and those that don’t is becoming increasingly clear.

But adoption doesn’t equal replacement. The relationship between AI and lawyers is far more nuanced than simple substitution.

The Current State of AI in Legal Practice

AI has moved beyond the experimental phase in law firms. Legal professionals aren’t debating whether to use AI anymore—they’re figuring out how to use it effectively.

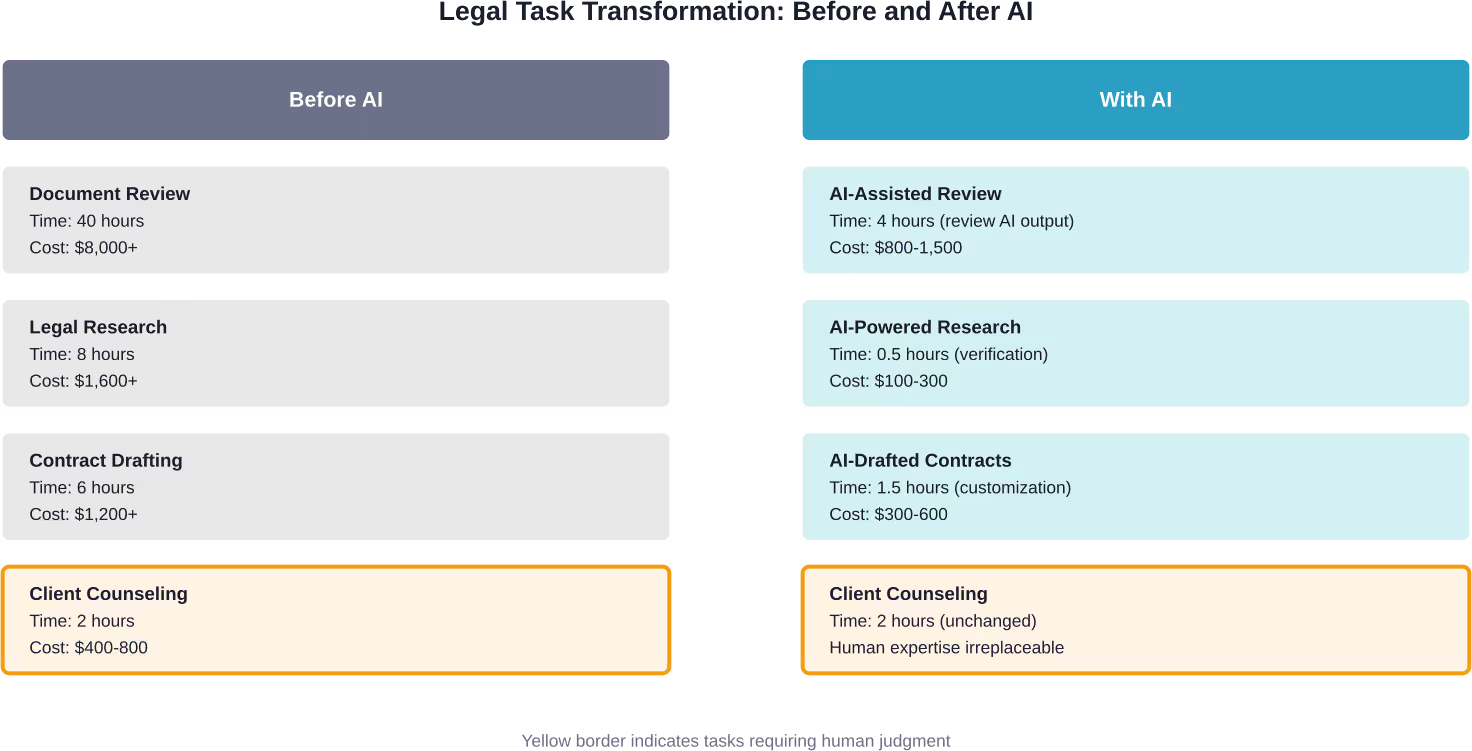

The technology handles specific tasks remarkably well. Document review that once consumed hundreds of billable hours now happens in minutes. Legal research tools powered by AI surface relevant case law faster than any manual search. Contract analysis software identifies problematic clauses across thousands of agreements simultaneously.

And this matters for efficiency. Firms using legal-specific AI solutions report significant time savings on routine work.

But there’s a catch. Generic tools like ChatGPT have created serious problems. The New York State Bar Association documented a case where an attorney admitted to using ChatGPT to “supplement” his legal research. The AI provided fabricated cases. Even after being asked by the court to verify the cases, the attorney went back to ChatGPT, which “complied by inventing a much longer text.”

The court didn’t find this amusing.

This incident highlights a critical distinction: legal-specific AI solutions offer cited sources, data security, and better accuracy for legal work. Generic chatbots lack legal training and carry privacy risks that can destroy careers.

What Research Shows About AI Exposure in Legal Work

The Brookings Institution has conducted extensive research on AI’s potential impact across professions. Their findings on legal work reveal both opportunities and vulnerabilities.

According to Brookings research published in October 2024, generative AI could affect broad swaths of the nation’s workers: more than 30% of all workers could see it shift half or more of their work tasks. The exposure data suggest that middle- to higher-paid occupations, clerical roles, and women are disproportionately exposed.

But here’s where it gets interesting.

A January 2026 Brookings study introduced the concept of “adaptive capacity,” meaning workers’ varied ability to make a job transition if displacement occurs. Among workers in the top quartile of occupational AI exposure, 26.5 million have above-median adaptive capacity.

However, the analysis also finds that some 6.1 million workers (4.2% of the workforce) combine high AI exposure with low adaptive capacity, forming concentrated pockets of potential vulnerability.

For lawyers specifically, the picture is complex. Legal professionals generally fall into the higher adaptive capacity category due to their education, transferable skills, and professional networks. Yet exposure to AI varies widely based on specialization.

Geographic factors matter too. Brookings research from February 2025 found that the geography of generative AI’s workforce impacts will likely differ from that of previous technologies. While 43% of workers in San Jose could see generative AI shift half or more of their work tasks, that share is only 31% in Las Vegas. At the county level, exposure varies widely, from rates of around 40% or more in high-paying urban centers to much lower rates elsewhere.

Which Legal Tasks Face the Highest AI Exposure

Not all legal work faces equal AI disruption. Research and practice reveal clear patterns about which tasks AI handles well and which remain stubbornly human.

High AI exposure tasks include:

- Document review and e-discovery

- Basic legal research and case law searches

- Contract drafting for standard agreements

- Due diligence document analysis

- Initial case assessment and pattern recognition

- Routine correspondence and client updates

Low AI exposure tasks include:

- Courtroom advocacy and oral arguments

- Complex negotiation and settlement discussions

- Client relationship management and counseling

- Ethical judgment in ambiguous situations

- Strategy development for novel legal issues

- Cross-examination and witness preparation

The distinction isn’t random. AI excels at pattern recognition, data processing, and generating text based on training data. It struggles with nuance, persuasion, ethical reasoning, and adapting to unprecedented situations.

| Task Category | AI Capability | Human Advantage | Likely Outcome |
|---|---|---|---|
| Document Review | High speed, pattern detection | Context understanding | AI-assisted, human oversight |
| Legal Research | Rapid case law retrieval | Strategic relevance assessment | AI-assisted, human oversight |
| Client Counseling | Limited empathy, generic advice | Relationship, trust, nuance | Human-led |
| Trial Advocacy | No courtroom presence | Persuasion, adaptation, credibility | Human-led |
| Contract Drafting | Template generation | Custom negotiation, risk assessment | AI-assisted, human oversight |
| Ethical Judgment | Rule-based only | Moral reasoning, professional responsibility | Human-led |

The Entry-Level Lawyer Problem

Here’s a concern that keeps law school deans awake at night: if AI handles the tasks traditionally assigned to junior associates, how do new lawyers develop expertise?

At the 2026 World Economic Forum in Davos, the CEOs of two leading artificial intelligence companies issued a joint warning. Demis Hassabis of Google DeepMind said he expects AI to begin affecting junior-level jobs and internships this year, while Dario Amodei of Anthropic reaffirmed his prediction that 50% of entry-level white-collar jobs could disappear within five years.

This creates a troubling paradox. Junior lawyers historically learned through repetitive tasks—reviewing documents, conducting research, drafting routine motions. These tasks were educational stepping stones, not just billable work.

Now AI performs these tasks faster and cheaper. Firms face economic pressure to use the technology. But if associates never do document review, how do they learn what makes a contract problematic? If they never research case law manually, how do they develop legal reasoning skills?

Molly Kinder, Senior Fellow at Brookings Metro, proposed looking to the medical residency model as a solution. In a January 2026 commentary, she suggested structured training programs that preserve educational value even as AI handles routine work.

The idea makes sense. Medical residents don’t perform every basic task manually—technology assists them. But their training ensures they understand the underlying principles. Legal education may need similar restructuring.

Some firms are already adapting. They’re redesigning associate training to focus on AI supervision, strategic thinking, and client-facing skills rather than pure task execution.

Stanford Law’s liftlab Initiative and the Future of Legal AI

Academic institutions aren’t just studying AI’s impact—they’re actively shaping how it integrates into legal practice.

On September 15, 2025, Stanford Law School announced the launch of the Legal Innovation through Frontier Technology Lab, or liftlab. Led by Professor Julian Nyarko and Executive Director Megan Ma, liftlab explores how artificial intelligence can reshape legal services to make them faster, cheaper, better, and more widely accessible.

The initiative is among the first academic efforts in legal AI to unite research, prototyping, and real-time collaboration with industry. The mission focuses on increasing access to high-quality legal services in the private sector by leveraging artificial intelligence and other frontier technologies.

One particularly interesting project is the “personas project.” The team is attempting to download a senior law firm partner’s experience and activate it in an AI agent. The goal? Taking that rich trove of knowledge—gathered over a career—and putting it into a program to hone legal practice and train new associates.

Think about the implications. If successful, this technology could democratize expertise. A small firm in rural America could access strategic insights that previously required hiring a BigLaw partner.

But it also raises questions. Can experience truly be codified? Does distilling decades of practice into algorithms lose something essential? What happens to mentorship, professional judgment, and the tacit knowledge that comes from navigating hundreds of unique situations?

The work at liftlab acknowledges these tensions. Their approach extends beyond theory to develop, investigate, and evaluate AI in legal education and private practice in sustainable and responsible ways.

Real-World AI Implementation in Law Firms

Theory is one thing. Implementation is messier.

Danielle Benecke, founder and global head of Baker McKenzie’s Applied AI practice, offers a ground-level perspective. Her practice designs and delivers AI-native legal and compliance services for complex, high-stakes matters.

According to Benecke, the current moment involves navigating existential threats for clients while simultaneously building AI-integrated workflows. Law firms aren’t choosing between AI and traditional methods—they’re figuring out how to blend them effectively.

The challenges are practical:

- Data security and client confidentiality in AI systems

- Quality control when AI generates work product

- Professional responsibility and malpractice exposure

- Client expectations around cost and speed

- Training staff to supervise rather than perform tasks

Firms that succeed don’t just buy AI tools. They redesign workflows, retrain staff, establish quality control protocols, and rethink billing structures.

The firms that struggle? They bolt AI onto existing processes without fundamental changes. The technology underperforms, staff resist adoption, and clients don’t see value.

The Billing Model Disruption

Here’s an uncomfortable truth: AI threatens the billable hour.

If research that previously took eight hours now takes thirty minutes, billing becomes awkward. Do firms charge for the actual time spent? The value delivered? The time it would have taken without AI?

Clients increasingly demand transparency. They’re not willing to pay associates’ hourly rates for work that AI performed in seconds.

This forces a shift toward value-based billing, flat fees, and alternative fee arrangements. The change was happening before AI, but the technology accelerates it.
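The three billing questions above can be made concrete with a little arithmetic. The sketch below is only an illustration: the eight-hour and thirty-minute figures come from the example in the text, while the $300 hourly rate and the $1,200 flat fee are hypothetical values chosen for the calculation, not data from any firm.

```python
from dataclasses import dataclass


@dataclass
class ResearchTask:
    hours_without_ai: float  # time the work took before AI (8 hours in the text)
    hours_with_ai: float     # time the work takes with AI (30 minutes in the text)
    hourly_rate: float       # hypothetical associate rate, an assumption


def fee_options(task: ResearchTask, flat_fee: float) -> dict[str, float]:
    """Return the client's bill under three possible billing approaches."""
    return {
        # Bill only the time actually spent with AI assistance.
        "actual_time": task.hours_with_ai * task.hourly_rate,
        # Bill the time the work would have taken without AI.
        "pre_ai_time": task.hours_without_ai * task.hourly_rate,
        # Bill an agreed price for the deliverable (hypothetical amount).
        "flat_fee": flat_fee,
    }


task = ResearchTask(hours_without_ai=8.0, hours_with_ai=0.5, hourly_rate=300.0)
fees = fee_options(task, flat_fee=1200.0)

for name, amount in fees.items():
    print(f"{name}: ${amount:,.2f}")
```

Under these assumed numbers, billing actual time yields $150, billing pre-AI time yields $2,400, and the flat fee sits between the two. That spread is why a negotiated, value-based price becomes the natural meeting point for firm and client.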

For partners accustomed to traditional models, this creates existential questions about firm economics. For clients, it offers opportunities to pay for outcomes rather than hours.

What AI Cannot Replace in Legal Practice

Technology limitations reveal themselves most clearly in what AI consistently fails to do.

Start with judgment. Legal practice constantly requires decisions in ambiguous situations where no clear answer exists. A client asks whether to settle or proceed to trial. The decision depends on risk tolerance, business objectives, emotional factors, opposing counsel’s credibility, and dozens of intangibles.

AI can analyze historical settlement data. It can’t make the call.

Then there’s ethical reasoning. Professional responsibility rules provide frameworks, but applying them to specific situations requires moral judgment. When does aggressive advocacy cross into misconduct? When should confidentiality yield to prevent harm? How does a lawyer balance duties to clients, courts, and justice?

These questions don’t have algorithmic answers.

The Irreplaceable Human Elements

Client relationships remain stubbornly human. People hire lawyers during stressful, consequential moments—divorces, criminal charges, business disputes, estate planning after a death. They need someone who listens, empathizes, and makes them feel heard.

AI can generate empathetic-sounding text. It can’t actually care about outcomes. Clients sense the difference.

Courtroom advocacy presents another barrier. Trials require real-time adaptation, reading judges and jurors, adjusting arguments based on reactions, and building credibility through presence and persuasion.

An AI can’t stand before a jury and earn trust. It can’t read a judge’s facial expression and pivot strategy mid-argument. It can’t cross-examine a hostile witness or deliver a closing argument that moves people.

Negotiation depends on similar skills. Effective negotiators read body language, build rapport, make strategic concessions, and create solutions that satisfy interests rather than just positions. The process is dynamic, interpersonal, and deeply human.

Creative problem-solving in novel situations also eludes AI. When presented with unprecedented legal questions—new technology, evolving social norms, gaps in legislation—lawyers must reason by analogy, draw from multiple domains, and construct arguments without clear precedent.

AI trained on existing data struggles with genuine novelty.

The Transformation, Not Replacement

So here’s where the evidence points: AI won’t replace lawyers, but lawyers who use AI will replace lawyers who don’t.

The profession is transforming in three fundamental ways.

First, the nature of legal work is shifting from task execution to oversight and strategy. Junior associates spend less time reviewing documents and more time analyzing AI output, identifying edge cases, and developing judgment. Senior lawyers focus on complex problem-solving, relationship management, and high-stakes decision-making.

Second, access to legal services may expand. AI-powered tools can deliver basic legal assistance to people who couldn’t afford traditional representation. Document automation, legal chatbots, and self-service platforms bring legal help to underserved populations.

This doesn’t replace lawyers for complex matters. But it fills gaps where no legal assistance existed before.

Third, competitive dynamics are changing. Firms that integrate AI effectively deliver better value—faster turnaround, lower costs, fewer errors. Firms that resist face pressure from clients who expect efficiency gains and competitors who provide them.

The legal profession has weathered technological disruption before. Legal databases didn’t eliminate lawyers—they changed how legal research happens. Email didn’t replace attorneys—it transformed communication. Practice management software didn’t render lawyers obsolete—it improved organization.

AI follows this pattern. It’s a more powerful tool than previous technologies, but still a tool that augments rather than replaces human expertise.

Specialization Will Matter More

Not all legal specialties face equal AI pressure. The variation matters for career planning.

Practice areas most vulnerable to AI disruption:

- High-volume, routine document review

- Basic contract drafting and review

- Straightforward legal research

- Simple estate planning

- Routine compliance work

Practice areas most resistant to AI disruption:

- Complex litigation and trial work

- High-stakes negotiation

- Appellate advocacy

- Novel legal questions and test cases

- Relationship-intensive work like family law

- Crisis management and reputation defense

The pattern is clear. Routine, repetitive, rules-based work faces higher AI exposure. Complex, relationship-dependent, judgment-intensive work remains protected.

Law students making career decisions should consider this landscape. Specializing in practice areas that leverage uniquely human skills provides more job security than focusing on tasks that AI handles well.

| Legal Specialty | AI Exposure Level | Key AI Impact | Human Advantage |
|---|---|---|---|
| Contract Review | High | Automated clause identification | Strategic negotiation, custom terms |
| E-Discovery | Very High | Document classification, relevance | Strategy, privilege review |
| Trial Litigation | Low | Research assistance only | Advocacy, jury persuasion, presence |
| Family Law | Low | Form generation | Client counseling, emotional support |
| Tax Law | Medium | Compliance automation, calculations | Planning, interpretation, advocacy |
| Criminal Defense | Low | Case law research | Courtroom advocacy, plea negotiation |
| Corporate M&A | Medium | Due diligence, document drafting | Deal strategy, relationship management |
| IP Litigation | Medium | Prior art searches, claim analysis | Technical expertise, trial work |

How Lawyers Should Adapt to the AI Era

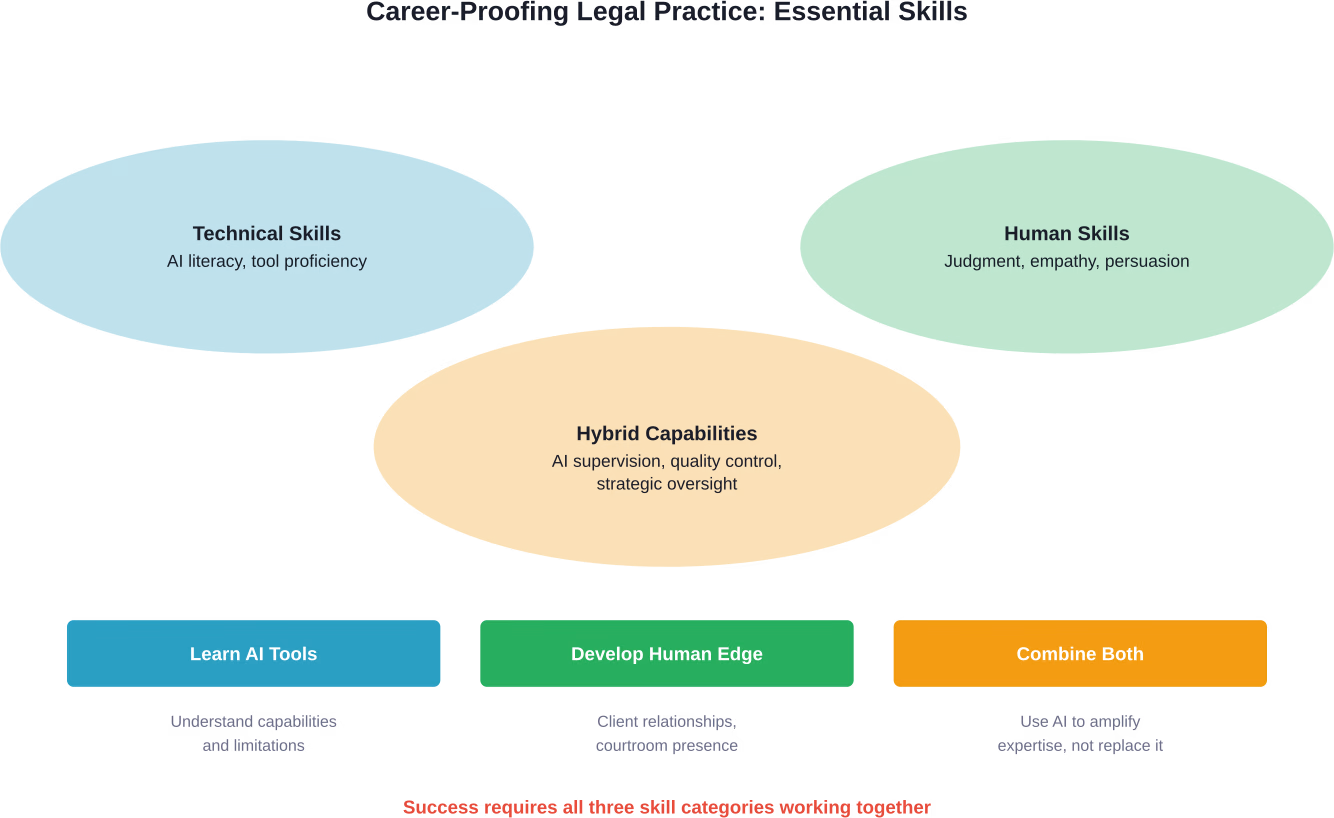

Passive resistance isn’t a viable strategy. Lawyers who want to thrive need proactive adaptation.

Start with AI literacy. Legal professionals don’t need to become programmers, but they should understand what AI can and cannot do, recognize its limitations, and identify appropriate use cases. Ignorance creates vulnerability.

Then develop AI supervision skills. The future lawyer doesn’t perform every task manually—they oversee AI-assisted work, verify outputs, catch errors, and add strategic value. This requires different competencies than traditional legal practice.

Double down on distinctly human skills. Emotional intelligence, persuasive communication, creative problem-solving, and relationship-building become more valuable as AI handles routine tasks. These capabilities differentiate irreplaceable lawyers from vulnerable ones.

Embrace continuous learning. AI technology evolves rapidly. Tools that didn’t exist last year become standard practice today. Lawyers must commit to ongoing education about new capabilities, emerging tools, and best practices.

Rethink service delivery models. Clients want value, not just hours. Lawyers who redesign their practices around outcomes, efficiency, and client experience position themselves competitively. Those who cling to outdated models face pressure.

Practical Steps for Law Firms

Firms need institutional strategies, not just individual adaptation.

First, invest in legal-specific AI solutions. Generic tools carry risks. Purpose-built legal AI offers security, accuracy, and professional responsibility compliance that general chatbots lack.

Second, establish clear AI policies. When can staff use AI? Which tools are approved? What verification is required? How should AI-assisted work be disclosed to clients? Written policies prevent ethical violations and quality problems.

Third, redesign training programs. Don’t just teach associates to use AI tools—help them develop judgment, strategic thinking, and client skills that technology can’t replicate. The goal is producing lawyers who add value beyond what AI provides.

Fourth, experiment with alternative fee arrangements. As efficiency improves, billable hour models become harder to justify. Firms that proactively develop value-based pricing maintain client relationships. Those that wait face pressure.

Fifth, communicate transparently with clients. Explain how AI enhances service quality and efficiency. Address privacy and confidentiality concerns. Share the benefits of technological investment rather than quietly pocketing efficiency gains while maintaining old billing rates.

The Access to Justice Opportunity

Here’s an underappreciated angle: AI might expand legal services to people who desperately need them but can’t afford traditional representation.

The justice gap is enormous. Millions of people face legal problems—evictions, debt collection, family court matters, employment disputes—without any lawyer. They can’t afford $300/hour attorneys. They often don’t qualify for free legal aid. They navigate complex legal systems alone.

AI-powered tools can bridge some of that gap. Automated document preparation helps people file court forms correctly. Legal chatbots answer basic questions about rights and procedures. Self-help platforms guide people through simple legal processes.

This isn’t full representation, and it’s not appropriate for complex matters. But for straightforward situations, AI assistance is vastly better than nothing.

Some critics worry this creates two-tiered justice—AI for the poor, human lawyers for the rich. There’s validity to that concern. But the current system already has tiers. Many people have zero access. AI at least provides something.

The key is ensuring AI tools are accurate, accessible, and clearly limited. People need to understand what automated services can and cannot do. They need pathways to human lawyers when situations exceed AI capabilities.

Done right, AI expands the pie rather than just redistributing existing legal services. More people get basic help. Human lawyers focus on complex, high-value work. The profession’s overall contribution to society increases.

Regulatory and Ethical Considerations

Professional responsibility rules haven’t caught up to AI reality. Lawyers face uncertainty about ethical obligations when using these tools.

Several principles are emerging. Lawyers maintain professional responsibility for AI-assisted work. Delegation to AI doesn’t eliminate the duty of competence. Attorneys must verify outputs, catch errors, and ensure accuracy.

The ChatGPT fabrication cases demonstrate this clearly. Courts hold lawyers accountable for submitted materials regardless of how those materials were generated. “AI did it” isn’t a defense.

Confidentiality obligations also apply to AI tools. Inputting client information into systems without adequate security violates professional responsibility. Lawyers must use tools that protect privileged and confidential data.

Disclosure obligations remain unclear. Must lawyers tell clients when AI assisted their work? Opinions vary. Some jurisdictions may require disclosure. Others may not. The lack of clear guidance creates risk.

Competence requirements are evolving. Some argue lawyers must understand AI tools they use, including limitations and failure modes. Blind reliance on technology without understanding creates malpractice exposure.

Bar associations are developing AI guidance, but slowly. Practitioners need clearer rules about acceptable use, required safeguards, disclosure obligations, and quality control measures.

Looking Ahead: The Legal Profession in 2030

Predictions are hazardous. But current trends suggest where things are heading.

By 2030, AI will likely be ubiquitous in legal practice. Firms that don’t use it will seem as outdated as offices without email. The technology won’t be novel—it’ll be standard infrastructure.

The lawyer’s role will center on judgment, strategy, and relationships. Routine tasks will be almost entirely automated. Junior lawyers won’t spend years doing document review—they’ll train on AI supervision, client interaction, and strategic thinking from day one.

Legal education will look different. Law schools will teach AI literacy alongside traditional subjects. Clinical programs will incorporate technology supervision. Ethics courses will deeply address AI-related professional responsibility.

The job market will be bifurcated. Lawyers with strong AI skills, business acumen, and client relationship abilities will thrive. Those who can only perform tasks that AI handles will struggle. The premium on uniquely human capabilities will increase.

Access to basic legal services will improve for ordinary people. Many simple legal needs will be met through AI-powered platforms. Human lawyers will focus on complex matters that genuinely require expertise.

Billing models will continue shifting toward value-based pricing. Clients will increasingly refuse to pay hourly rates when AI does the work. Firms will compete on outcomes, expertise, and service quality rather than hours billed.

Regulatory frameworks will mature. Bar associations will establish clear AI guidelines. Courts will develop precedents about AI use and accountability. The current wild-west environment will give way to structured norms.

But lawyers will still exist. The profession will transform, not disappear. Human judgment, ethical reasoning, advocacy, and relationship skills will remain essential. Technology will change how lawyers work, not whether they’re needed.

Frequently Asked Questions

Will AI completely replace lawyers in the near future?

No. While AI will automate many routine legal tasks, the profession requires human judgment, ethical reasoning, client relationships, and courtroom advocacy that AI cannot replicate. Research from the Brookings Institution shows that while more than 30% of all workers could see generative AI shift half or more of their work tasks, lawyers generally have high adaptive capacity due to their education and transferable skills. The transformation will change how lawyers work, not eliminate the profession.

Which legal jobs are most at risk from AI?

Entry-level positions focused on routine tasks face the highest exposure. Document review, basic legal research, standard contract drafting, and e-discovery work can now be performed by AI with human oversight. As AI company CEOs warned at the 2026 World Economic Forum in Davos, entry-level workers may feel AI’s impact first as these educational stepping stones become automated.

What percentage of legal professionals currently use AI?

According to the latest Legal Trends Report, 79% of legal professionals now use AI in some form. This represents a dramatic shift from early adoption to mainstream integration. The gap between firms that embrace AI and those that resist is becoming increasingly clear in terms of efficiency and competitiveness.

Can AI handle courtroom litigation and trial work?

No. Trial advocacy requires real-time adaptation, reading judges and jurors, building credibility through presence, delivering persuasive arguments, and conducting cross-examination. These deeply human skills cannot be replicated by AI. Litigation research and preparation may be AI-assisted, but courtroom performance remains exclusively human territory.

Should I avoid law school because of AI?

Not necessarily, but choose your specialization carefully. Practice areas emphasizing uniquely human skills—complex litigation, high-stakes negotiation, relationship-intensive work, and novel legal questions—remain protected from AI disruption. Avoid careers focused solely on routine, repetitive tasks. The legal profession isn’t disappearing, but it’s transforming in ways that make some specialties more resilient than others.

How do I protect my legal career from AI disruption?

Develop AI literacy to understand the technology’s capabilities and limitations. Build supervision skills to oversee AI-assisted work effectively. Double down on distinctly human abilities—emotional intelligence, persuasive communication, creative problem-solving, and client relationship management. Embrace continuous learning as AI tools evolve. Specialize in practice areas that require judgment and personal interaction rather than just task execution.

What’s the biggest risk of using AI in legal practice?

Professional responsibility violations. The documented cases of ChatGPT fabricating legal citations demonstrate that lawyers remain accountable for all submitted work regardless of how it was generated. Generic AI tools that were not built for legal work create risks of inaccuracy, confidentiality breaches, and ethical violations. Legal-specific AI with cited sources, security features, and accuracy safeguards is essential for responsible practice.

Conclusion: Transformation, Not Obsolescence

The evidence is clear. AI will not replace lawyers, but it will fundamentally reshape the profession.

Routine tasks are being automated. Entry-level training must evolve. Billing models are under pressure. Competitive dynamics favor AI adopters. These changes are real and accelerating.

But the core of legal practice remains irreducibly human. Judgment in ambiguous situations. Ethical reasoning beyond rule-following. Client relationships built on trust and empathy. Courtroom advocacy that persuades through presence. Strategic problem-solving in unprecedented circumstances.

No algorithm replicates these capabilities.

The lawyers who thrive will embrace AI as a tool while doubling down on uniquely human skills. They’ll use technology to handle routine work faster and focus their expertise on high-value activities that genuinely require human intelligence.

The lawyers who struggle will resist change, cling to outdated models, and try to compete on tasks that AI performs better.

The choice is adaptation or obsolescence. But obsolescence comes not from AI replacing lawyers—it comes from lawyers who use AI replacing lawyers who don’t.

Legal professionals at every career stage should assess their skills honestly, invest in AI literacy, and develop capabilities that technology can’t match. Law firms should integrate AI strategically while redesigning training, billing, and service delivery for the new reality.

The transformation is underway. The profession’s future depends on how its members respond.

Ready to future-proof your legal practice? Start by evaluating legal-specific AI tools that enhance rather than replace your expertise. The firms making strategic AI investments today are building competitive advantages that will define success tomorrow.