Quick Summary: LLM server costs vary dramatically: cloud APIs like OpenAI charge $0.03-$6 per 1M tokens depending on the model, while self-hosting requires $50,000-$287,000 annually for capable infrastructure. The break-even point typically occurs at 500M+ tokens monthly for enterprise deployments. Cost optimization depends on usage volume, data privacy needs, and whether you prioritize minimal upfront investment or long-term savings.

The economics of running large language models has become a critical business decision. According to competitor content, enterprise spending on LLM APIs doubled to $8.4 billion in 2025, yet many organizations find themselves questioning whether cloud providers or self-hosted infrastructure makes financial sense.

According to competitor content citing Kong’s 2025 Enterprise AI report, 44% of organizations cite data privacy and security as the top barrier to LLM adoption. Every prompt sent to external APIs touches servers outside organizational control. This privacy concern drives many teams toward self-hosting, but infrastructure costs create their own financial challenges.

The math isn’t straightforward. Cloud APIs offer zero upfront costs but compound expenses at scale. Self-hosting demands substantial capital investment but promises long-term savings. The break-even point depends on usage volume, model size, and operational requirements.

Understanding LLM Pricing Models

Cloud providers have standardized around token-based pricing. OpenAI charges $0.03 per 1,000 input tokens and $0.06 per 1,000 output tokens for GPT-4. GPT-3.5 Turbo runs significantly cheaper at $0.0015 per 1,000 input tokens.

But what does that actually mean for real workloads? A single customer support conversation might consume 2,000-5,000 tokens. Scale that to thousands of conversations daily, and costs accumulate fast.

Token costs vary dramatically across providers and models. According to OpenAI’s documentation, audio tokens in the Realtime API are priced at 1 token per 100 milliseconds for user messages, while assistant audio outputs count 1 token per 50 milliseconds. These modality differences create pricing complexity that’s easy to underestimate.

Major Cloud Provider Pricing Structures

Amazon Bedrock follows similar token-based pricing, though rates depend on the specific foundation model selected. Pricing varies by modality, provider, and model tier. Google Cloud’s Vertex AI maintains comparable pricing structures while offering Standard PayGo consumption options that adjust throughput capacity based on organizational spending over 30-day periods.

Here’s the thing though—cloud pricing isn’t just about per-token rates. Providers implement usage tiers, batch processing discounts, and regional variations that complicate direct comparisons.

According to OpenAI’s cost optimization documentation, the Batch API and flex processing provide additional cost reduction mechanisms beyond standard pricing. Batch processing can reduce expenses for non-time-sensitive workloads where latency requirements are flexible.

| Provider | Model Example | Input Cost (per 1M tokens) | Output Cost (per 1M tokens) | Notable Features |

|---|---|---|---|---|

| OpenAI | GPT-4 | $30 | $60 | Realtime API, batch processing |

| OpenAI | GPT-3.5 Turbo | $1.50 | $2.00 | Lower cost, faster inference |

| Amazon Bedrock | Various providers | Varies by model | Varies by model | Multi-provider access |

| Google Vertex AI | Gemini models | Varies by tier | Varies by tier | Usage-based tier upgrades |

Hidden Costs in Cloud LLM Services

Token pricing represents only part of the financial picture. Cloud deployments incur costs that don’t appear on initial pricing pages.

Data egress fees accumulate when transferring large volumes of responses. Storage costs apply to conversation logs and training data. Monitoring and observability tools add overhead. For production systems requiring guaranteed throughput, reserved capacity pricing models replace pay-per-token economics with fixed commitments.

Community discussions on platforms like LocalLLaMA reveal frustration with unpredictable cloud costs. Usage patterns that seem reasonable during testing can explode in production as concurrency increases.

Self-Hosting Infrastructure Costs

The promise of self-hosted LLMs centers on long-term cost savings and data control. But the upfront investment is substantial, and operational expenses persist indefinitely.

Community discussions report that running Qwen-2.5 32B or QwQ 32B on AWS g5.12xlarge instances (4x A10G GPUs) costs approximately $50,000 annually at continuous operation. Llama-3 70B on p4d.24xlarge instances (8x A100 GPUs) reportedly costs around $287,000 per year at continuous operation.

Those numbers assume cloud infrastructure. On-premise hardware changes the economics entirely.

Hardware Requirements and Capital Costs

Modern consumer CPU bandwidth—dual-channel DDR5-6400 delivering around 100 GB/s—falls dramatically short compared to GPU throughput exceeding 1.7 TB/s. Apple Silicon presents an exception with Unified Memory Architecture providing higher bandwidth, but scaling Apple hardware for production workloads faces practical limitations.

The rule of thumb: roughly 0.5GB of VRAM per billion parameters when using 4-bit quantization. Full precision FP16 doubles that requirement. A 70B parameter model in 4-bit quantization needs approximately 35GB+ VRAM minimum. The model must fit in VRAM for reasonable inference speed; otherwise, the system falls back to CPU processing running 10-100x slower.

Community discussions report that minimal internal deployment costs range between $125,000-$190,000 annually, with moderate-scale customer-facing features running $500,000-$820,000 yearly. Core product engines at enterprise scale extend beyond those figures substantially.

Operational Expenses Beyond Hardware

Infrastructure represents only the beginning. Self-hosting requires skilled DevOps personnel, ongoing maintenance, power and cooling, backup systems, and network infrastructure.

Power consumption for GPU servers is substantial. An 8x A100 system can draw 3-5 kW under load, translating to $2,000-$4,000 annually in electricity costs depending on local rates. Cooling requirements add another 30-50% to power consumption.

But wait. Hardware ages. GPUs lose resale value rapidly as newer architectures emerge. A three-year depreciation cycle means capital costs amortize annually, plus eventual replacement expenses.

Breaking Down Total Cost of Ownership

Comparing cloud and self-hosted costs requires calculating total cost of ownership over realistic time horizons. The analysis shifts dramatically based on usage volume.

For low-volume applications processing under 10 million tokens monthly, cloud APIs remain unbeatable economically. At GPT-3.5 Turbo rates of $1.50 per million input tokens, monthly costs stay under $20. No infrastructure investment makes financial sense at this scale.

The calculation changes for moderate usage. Processing 100 million tokens monthly on GPT-3.5 Turbo costs approximately $150-200. Over three years, that’s $5,400-7,200—still well below minimal self-hosting infrastructure.

The Break-Even Point

Analysis suggests break-even typically occurs around 500 million to 1 billion tokens monthly for enterprise deployments. At this volume, cloud costs reach $15,000-60,000 monthly depending on the model used. Annually, that’s $180,000-720,000.

Self-hosted infrastructure costing $125,000-190,000 annually for minimal deployment starts making economic sense. Over three years, on-premise solutions can deliver 30-50% savings compared to cloud services for high-volume workloads.

Sound familiar? This matches patterns reported in community analyses comparing cloud versus on-premise deployments at scale.

| Monthly Token Volume | Cloud API Cost (GPT-3.5) | Cloud API Cost (GPT-4) | Self-Host Estimate | Recommended Approach |

|---|---|---|---|---|

| 10M tokens | $15-20 | $300-600 | N/A | Cloud API |

| 100M tokens | $150-200 | $3,000-6,000 | N/A | Cloud API |

| 500M tokens | $750-1,000 | $15,000-30,000 | $10,400/month | Consider self-hosting |

| 1B+ tokens | $1,500-2,000 | $30,000-60,000 | $10,400-15,800/month | Self-hosting likely cheaper |

Hidden Variables in TCO Calculations

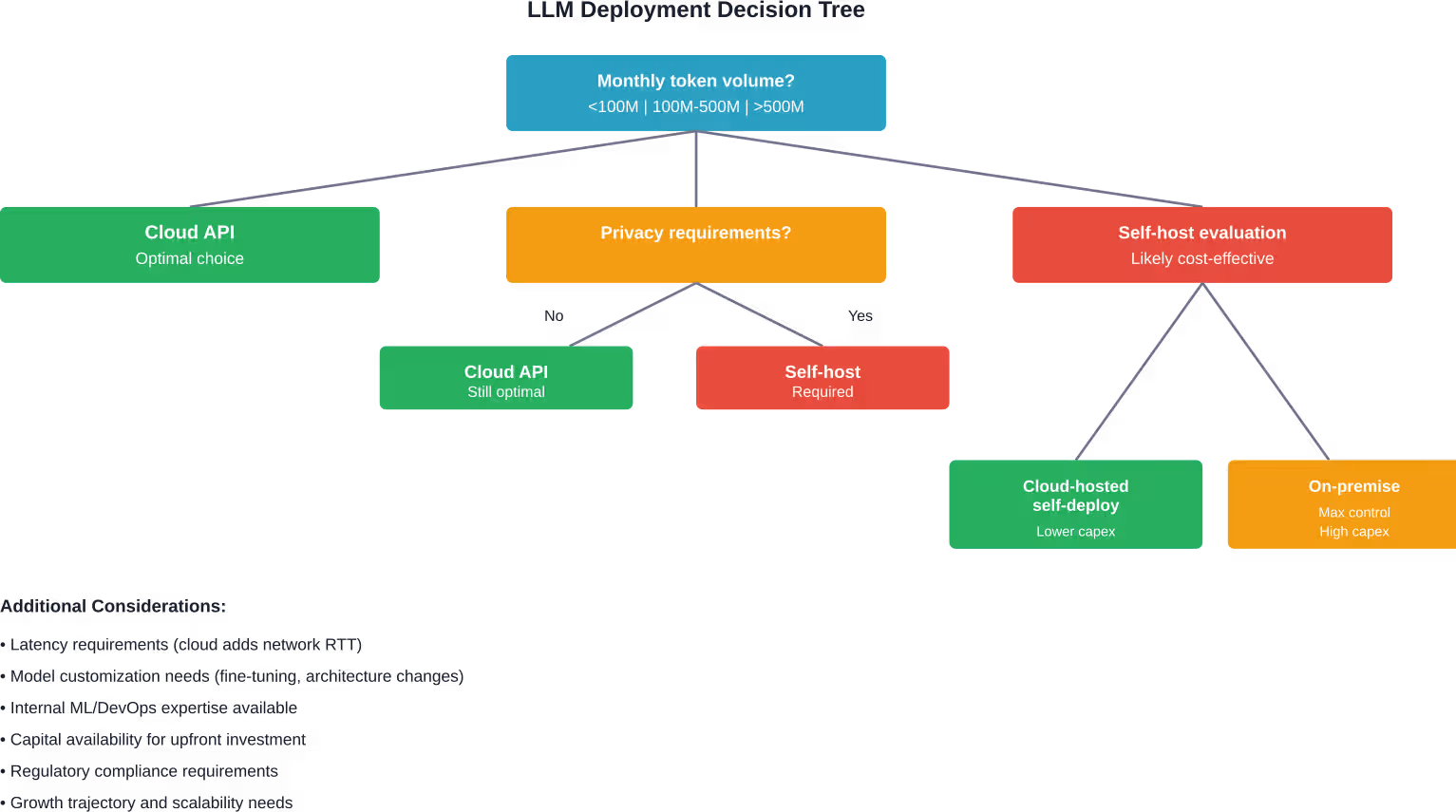

Standard break-even analysis overlooks critical factors. Data privacy requirements may force self-hosting regardless of cost efficiency. Regulatory compliance in healthcare, finance, or government sectors often mandates on-premise infrastructure.

Latency requirements shift the equation. Cloud API calls introduce network round-trip time. For real-time applications requiring sub-100ms response times, local inference becomes necessary independent of cost considerations.

Model customization creates another dimension. Cloud providers offer limited fine-tuning options. Organizations needing extensive model adaptation require infrastructure supporting custom training pipelines, dramatically increasing complexity and cost.

Cost Optimization Strategies

Regardless of deployment choice, cost optimization techniques can reduce LLM expenses substantially. According to OpenAI’s cost optimization documentation, several strategies consistently deliver savings.

Reducing Token Consumption

Every token costs money. Minimizing token usage directly reduces expenses. Shorter prompts deliver the same results with lower costs. Removing unnecessary context, examples, and verbose instructions cuts token counts without sacrificing output quality.

Prompt engineering becomes an economic optimization exercise. Testing different prompt formulations to achieve identical results with fewer tokens generates immediate ROI. A 20% reduction in average prompt length translates directly to 20% cost savings.

Caching frequently used context reduces redundant token processing. Many providers now support prompt caching where repeated context portions don’t count toward token limits on subsequent requests.

Batch Processing and Async Workloads

OpenAI’s Batch API offers significantly reduced pricing for non-time-sensitive workloads. Processing requests asynchronously when latency requirements are flexible unlocks substantial discounts.

The Batch API accepts bulk requests processed within 24-hour windows. For tasks like content analysis, data enrichment, or batch summarization, this approach cuts costs while maintaining throughput.

Similar batch processing capabilities exist across providers. Amazon SageMaker supports batch transform jobs. Google Vertex AI offers batch prediction endpoints with reduced pricing compared to online inference.

Model Selection and Quantization

Smaller models cost less per token and run faster. GPT-3.5 Turbo costs roughly 5% of GPT-4 pricing. For tasks within smaller model capabilities, cost savings compound massively at scale.

For self-hosted deployments, quantization reduces hardware requirements dramatically. 4-bit quantization halves memory needs compared to 8-bit, enabling larger models on equivalent hardware. According to technical discussions, accuracy degradation from quantization remains minimal for most applications.

Research published on arXiv explores LLM shepherding techniques where small language models handle most requests while larger models provide hints only when needed. Even small hints (10-30% of full LLM responses) yield substantial accuracy gains. This hybrid approach can deliver dramatic cost reductions while maintaining output quality.

Optimize Cloud vs Self-Hosting Before Costs Lock In

Choosing between cloud and self-hosted LLM infrastructure is rarely just a pricing decision. Costs depend on how models are trained, deployed, and used over time, including data pipelines, scaling strategy, and system efficiency. AI Superior works across the full lifecycle, from data preparation and model selection to deployment and optimization, helping teams design setups that match real usage instead of theoretical capacity.

In practice, this often means deciding where cloud makes sense, where self-hosting is justified, and how to avoid overpaying in either direction. The focus is on building systems that run reliably in production, not just comparing infrastructure costs. If you are evaluating cloud vs self-hosting or already seeing costs grow, it is worth reviewing your architecture early. Reach out to AI Superior to assess your setup before costs scale further.

Infrastructure Performance Optimization

For self-hosted deployments, hardware utilization directly impacts cost efficiency. According to AWS announcements, Amazon SageMaker Large Model Inference container v15 powered by vLLM 0.8.4 with vLLM V1 engine support delivers the V1 engine, which provides higher throughput than the previous V0 engine.

The V1 engine includes an async mode that directly integrates with vLLM’s AsyncLLMEngine, creating a more efficient background loop that continuously processes incoming requests for higher throughput than the previous Rolling-Batch implementation. These infrastructure improvements translate directly to cost savings by extracting more inference capacity from equivalent hardware.

Hardware Architecture Choices

AWS Graviton processors provide cost-efficient alternatives for smaller models. Analysis from AWS demonstrates that running small language models on Graviton3-based instances (ml.c7g series) with llama.cpp for Graviton-optimized inference and pre-quantized GGUF format models delivers substantial cost savings for appropriate workloads.

Google Cloud’s A4 VMs based on NVIDIA Blackwell architecture represent the latest high-performance option. According to case studies, Baseten achieved over 225% better cost-performance serving popular models like DeepSeek V3, DeepSeek R1, and Llama 4 Maverick on A4 infrastructure compared to previous generation hardware.

Hardware selection depends on model size and throughput requirements. Smaller models under 13B parameters run effectively on CPU-based instances. Mid-size models (13B-70B parameters) benefit from single or multi-GPU setups. Large models above 70B parameters require multi-GPU configurations or model parallelism strategies.

Dynamic Workload Scheduling

Google Cloud’s Dynamic Workload Scheduler optimizes resource utilization across varying traffic patterns. Rather than provisioning for peak capacity continuously, dynamic scheduling scales resources based on actual demand.

This capability matters most for workloads with significant traffic variation. Applications experiencing daily or weekly usage patterns waste resources during low-traffic periods with static provisioning. Dynamic scheduling can reduce infrastructure costs by 40-60% for workloads with pronounced variability.

Real-World Cost Examples

Theoretical analysis only goes so far. Real deployment costs provide concrete reference points.

Community discussions describe minimal production deployments costing $125,000-190,000 annually. This typically supports internal tooling and moderate request volumes—thousands of requests daily rather than millions.

Moderate-scale customer-facing features run $500,000-820,000 yearly according to the same analyses. This scale handles significant production traffic with acceptable latency and availability guarantees.

Enterprise-Scale Deployments

Large organizations running LLMs as core product infrastructure report costs extending well beyond these ranges. Multi-million dollar annual investments become typical for high-volume, low-latency requirements across distributed geographic regions.

Research from arXiv analyzing inference economics provides baseline calculations. Taking the A800 80GB as an example under common assumptions, baseline hourly cost per card approximates $0.79/hour, generally falling within the $0.51-0.99/hour range. Major cloud platforms typically charge multiples of this baseline to cover operational overhead and margin.

These per-card costs multiply across the GPU counts required for larger models. An 8-GPU deployment runs approximately $6.32/hour at baseline rates, translating to $55,366 annually for continuous operation—before factoring in power, cooling, networking, or personnel costs.

Comparing Cloud and On-Premise at Scale

Analysis examining cloud versus on-premise economics finds that on-premise systems delivering equivalent capacity to high-volume cloud deployments require approximately $833,806 in upfront capital costs for H100-based infrastructure.

Over three years, this capital investment amortizes to approximately $277,935 annually. Add operational expenses—power, cooling, maintenance, personnel—and total annual costs reach $350,000-450,000 for enterprise-grade on-premise deployment.

Compare that to cloud API costs at equivalent volumes. Processing 5 billion tokens monthly on GPT-4 runs approximately $150,000-300,000 monthly, or $1.8-3.6 million annually. The on-premise break-even becomes clear at this scale.

| Deployment Scenario | Cloud API Annual Cost | Self-Hosted Cloud Annual Cost | On-Premise Annual Cost |

|---|---|---|---|

| Small (100M tokens/month) | $2,400 | Not economical | Not economical |

| Medium (500M tokens/month) | $12,000-360,000 | $125,000-190,000 | $350,000-450,000 |

| Large (2B tokens/month) | $48,000-1.4M | $287,000-400,000 | $350,000-450,000 |

| Enterprise (5B+ tokens/month) | $1.8M-3.6M | $400,000-600,000 | $400,000-550,000 |

Data Privacy and Compliance Costs

Financial analysis alone doesn’t capture the complete decision framework. Data privacy and regulatory compliance impose requirements that override pure cost optimization.

Healthcare organizations subject to HIPAA regulations face strict data handling requirements. Sending patient information to external APIs creates compliance challenges that may be prohibitively complex or expensive to address. Self-hosting becomes mandatory regardless of cost inefficiency at lower volumes.

Financial services encounter similar constraints under regulations like GDPR, PCI-DSS, and sector-specific requirements. The cost of compliance violations—both financial penalties and reputational damage—dwarfs infrastructure expenses.

Quantifying Privacy Value

How much is data privacy worth financially? This calculation depends on business context. For consumer applications handling non-sensitive data, privacy premiums may be minimal. For enterprises managing proprietary information, intellectual property, or regulated data, privacy value becomes substantial.

Some organizations accept 2-3x higher costs for self-hosted infrastructure purely for data sovereignty. Others require air-gapped deployments with no external connectivity regardless of cost multiples involved.

The 44% of organizations citing data privacy as a top barrier to LLM adoption reflects this calculus. Cost efficiency matters, but not at the expense of core security and compliance requirements.

Long-Term Cost Trends

LLM economics continue evolving rapidly. Inference costs have declined substantially as algorithmic efficiency improves and hardware advances.

Research from MIT examining algorithmic efficiency and falling AI inference costs found that closed-weight model trends are slightly faster than open-weight model trends. This is particularly pronounced for closed-weight models in the 40%-60% group, where sudden price drops occur that are not mirrored in open-weight models, hinting at non-technical competitive effects.

Moore’s Law and AI Acceleration

Hardware performance continues improving. NVIDIA’s Blackwell architecture delivers significant performance gains over previous generations. Google’s TPU developments and specialized AI accelerators from startups create ongoing performance improvements.

These hardware advances reduce costs in two ways. First, newer hardware delivers more inference throughput per dollar of capital investment. Second, competition among cloud providers creates pricing pressure that benefits customers.

But wait. Hardware improvements also enable larger, more capable models. GPT-3 to GPT-4 brought substantial capability increases alongside higher inference costs. The trend toward larger models can offset infrastructure efficiency gains.

Open Source Model Ecosystem

Open-weight models from Meta, Mistral, Alibaba, and others create competitive pressure on proprietary model pricing. Organizations can deploy open models like Llama 4, DeepSeek, or Qwen without per-token API charges.

This dynamic accelerates cost reduction for organizations capable of self-hosting. The gap between proprietary API costs and self-hosted open model costs widens as open model quality improves.

Analysis emphasizes that viewing “open source LLMs” as free represents a misconception. The models themselves have no licensing fees, but operational costs remain substantial. The true savings come from eliminating per-token charges at sufficient scale—not from zero-cost operation.

Making the Build vs Buy Decision

The short answer? It depends on volume, capabilities, and constraints.

Cloud APIs make overwhelming sense for exploration, prototyping, and low-to-moderate production volumes. Zero upfront investment, no operational complexity, and instant access to state-of-the-art models provide unbeatable value for most use cases.

Self-hosting becomes economically viable when monthly token volumes exceed 500 million to 1 billion tokens consistently. At this scale, infrastructure costs amortize effectively, and total cost of ownership favors owned infrastructure over API charges.

Decision Framework

Consider these factors systematically:

- Volume and scale: Calculate current and projected token consumption over 12-36 months. Break-even analysis requires multi-year time horizons to amortize capital investments properly.

- Data sensitivity: Determine whether data privacy, regulatory compliance, or intellectual property concerns mandate self-hosting regardless of cost considerations.

- Latency requirements: Applications requiring sub-100ms response times may need local inference independent of cost efficiency.

- Model customization needs: Extensive fine-tuning, continued training, or model architecture modifications require self-hosted infrastructure with full model access.

- Technical capabilities: Self-hosting demands ML engineering, DevOps, and infrastructure expertise. Organizations lacking these capabilities face substantial hiring or consulting costs that impact TCO calculations.

- Capital availability: On-premise infrastructure requires significant upfront investment. Cloud-hosted self-deployment reduces capital requirements while maintaining some cost advantages over APIs at scale.

Frequently Asked Questions

How much does it cost to run an LLM server?

Cloud API costs range from $0.0015 to $6 per million tokens depending on the model. Self-hosting requires $50,000-$287,000 annually for cloud infrastructure or $350,000-$550,000 for on-premise deployment including hardware, power, and operational expenses. Costs scale with model size, throughput requirements, and usage volume.

When does self-hosting LLMs become cheaper than cloud APIs?

Break-even typically occurs around 500 million to 1 billion tokens monthly for enterprise deployments. Below this threshold, cloud APIs remain more cost-effective due to zero upfront costs and operational simplicity. Above this volume, self-hosted infrastructure delivers 30-50% savings over three-year time horizons.

What are the hidden costs of self-hosting LLMs?

Beyond hardware and cloud infrastructure costs, self-hosting incurs DevOps personnel expenses, power consumption ($2,000-$4,000 annually for large GPU systems), cooling requirements adding 30-50% to power costs, backup systems, network bandwidth, monitoring tools, and hardware depreciation with replacement cycles every 3-5 years.

Can I run LLMs cost-effectively at home?

Smaller models under 13B parameters run on consumer hardware with modest costs—primarily electricity at $50-200 monthly depending on usage and local rates. Larger models require professional GPU setups costing $3,000-15,000 in hardware plus ongoing power expenses. For personal use and experimentation, this can be cost-effective, but production deployments require enterprise infrastructure.

How do different LLM providers compare on pricing?

OpenAI charges $30-60 per million tokens for GPT-4 and $1.50-2.00 for GPT-3.5 Turbo. Amazon Bedrock and Google Vertex AI offer comparable pricing with variations based on specific models and consumption tiers. Batch processing APIs provide 30-50% discounts for non-time-sensitive workloads across most providers.

What factors most impact LLM inference costs?

Token volume represents the primary cost driver for cloud APIs. For self-hosted deployments, model size determines hardware requirements, while throughput needs dictate infrastructure scale. Quantization (4-bit vs 8-bit vs full precision) affects memory requirements and hardware costs. Prompt engineering and caching strategies can reduce token consumption 15-40%.

Is it worth self-hosting open source LLMs?

Open source models eliminate per-token API charges but still require infrastructure investments. At volumes below 100 million tokens monthly, cloud APIs remain cheaper. Above 500 million tokens monthly, self-hosted open models deliver substantial savings despite operational complexity. Data privacy requirements may justify self-hosting regardless of cost break-even points.

Conclusion

LLM server costs present a nuanced decision framework where no single answer fits all scenarios. Cloud APIs deliver unmatched convenience and cost efficiency for low-to-moderate volumes. Self-hosting requires substantial upfront investment but generates long-term savings at scale.

The break-even point typically occurs around 500 million tokens monthly, though privacy requirements, latency needs, and model customization demands can override pure financial optimization. Organizations must calculate total cost of ownership over multi-year horizons while accounting for hidden operational expenses beyond simple infrastructure costs.

Cost optimization strategies—prompt engineering, batch processing, model selection, quantization, and caching—apply regardless of deployment choice and can reduce expenses 30-70% when implemented systematically.

Looking ahead, inference costs continue declining as hardware improves and algorithmic efficiency advances. Open source models create competitive pressure that benefits organizations capable of self-hosting at scale. The decision framework remains consistent: start with cloud APIs, monitor token consumption growth, and evaluate self-hosting when volumes justify infrastructure investment.

Ready to optimize LLM costs for your specific use case? Calculate projected token volumes, assess data privacy requirements, and model total cost of ownership across deployment options. The right choice depends on your unique constraints—but armed with realistic cost data, that decision becomes considerably clearer.