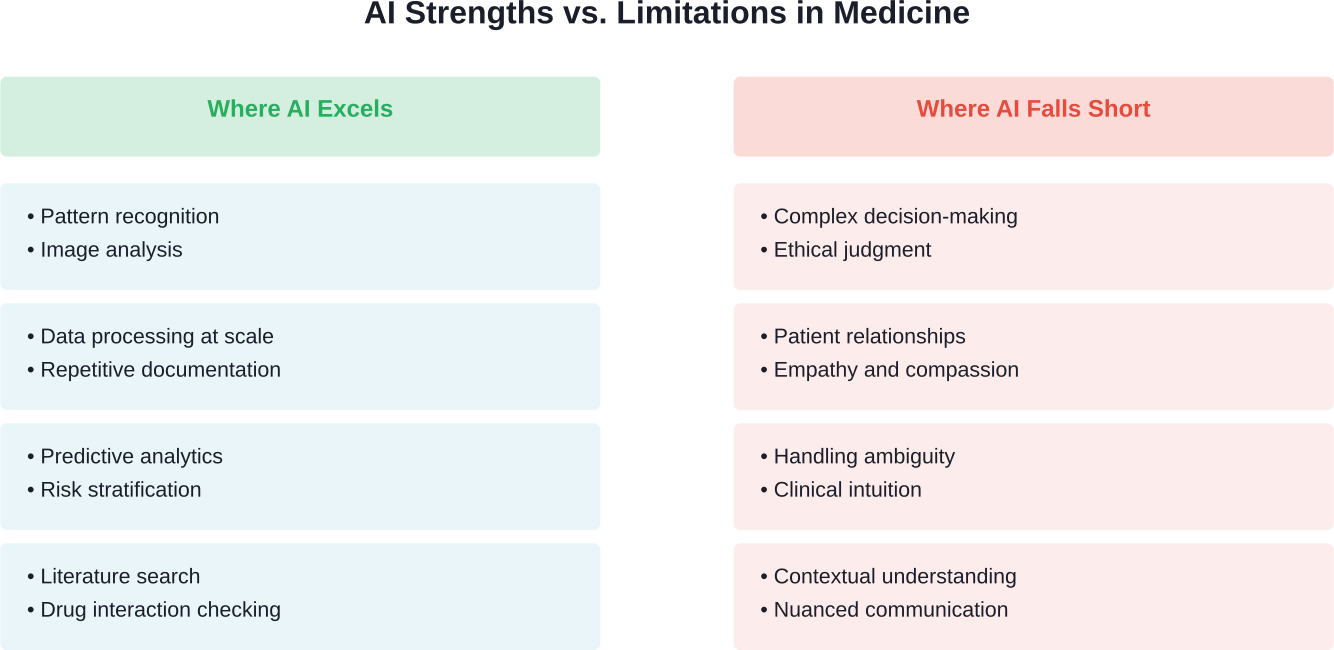

Quick Summary: AI will not replace physicians but will transform how they work. While AI excels at data analysis, pattern recognition, and administrative tasks, the complexity of medical decision-making, patient relationships, and ethical judgment requires human physicians. The future of medicine involves AI as a powerful tool that augments physician capabilities rather than replacing them.

The question of whether artificial intelligence will replace physicians has moved from science fiction to serious medical discourse. And honestly? The anxiety is understandable.

AI systems now read mammograms, predict patient deterioration, and draft clinical notes. According to research, more than half of the AI/ML-based medical devices approved or CE marked from 2015 to 2020 were intended for radiological use: 129 devices (58%) in the USA and 126 devices (53%) in Europe. That’s substantial.

But here’s the thing: the data tells a more nuanced story than the headlines suggest.

What Medical Research Actually Shows About AI Replacing Physicians

The World Health Organization emphasizes that AI advancements should contribute to global health in ways that are “safe, ethical and equitable.” Notice they don’t say AI should replace healthcare workers.

According to research from the Yonsei Medical Journal, a meta-analysis of empirical studies found that AI significantly reduces physician workload and diagnostic time by automating repetitive interpretation and documentation processes. The key phrase? “Freeing clinicians to focus solely on patients.”

That’s augmentation, not replacement.

A meta-analysis examining medical AI’s impact on physician productivity revealed that automated generative AI-based electronic medical record systems reduce documentation time by approximately 40%. Physicians spend less time on paperwork and more time on what actually matters: patient care.

The Algorithm Aversion Problem

Here’s where it gets interesting. Research indexed in PubMed on whether AI will replace human physicians found something surprising: people trust human expertise more than AI, even when the AI is accurate, especially for decisions traditionally made by humans.

Even when AI demonstrates higher accuracy, patients prefer human physicians for medical diagnosis. This supports what researchers call “algorithm aversion theory.”

Real talk: trust isn’t just a nice-to-have in medicine. It’s fundamental.

Where AI Excels in Healthcare Today

Let’s be clear about what AI actually does well in medical settings. The technology has shown remarkable progress in specific, well-defined tasks.

Diagnostic Imaging and Pattern Recognition

AI dominates in automated classification of medical images. A review of AI/ML-based medical devices approved from 2015-2020 found that more than half focused on diagnostic imaging applications.

Radiology, pathology, and dermatology have seen particularly strong AI adoption. These specialties involve pattern recognition at scale—exactly where machine learning thrives.

But reading images isn’t the same as practicing medicine.

Administrative and Documentation Tasks

According to research from the Korean Medical Association, AI systems excel at reducing administrative burden. Automated documentation, appointment scheduling, and data entry free physicians from tasks that don’t require medical expertise.

Healthcare providers face burnout rates between 25% and 75% in some clinical specialties, according to research indexed in PubMed. Reducing administrative load addresses a genuine crisis.

Precision Medicine and Drug Development

AI contributes to precision medicine by analyzing genetic data, identifying treatment patterns, and optimizing drug development. These applications support physician decision-making rather than replacing it.

Research shows AI helps with diagnosis, treatment planning, and improved patient outcomes. The emphasis? Helping.

Don’t Assume AI Replaces Physicians – See Where It Fits

In medicine, AI is showing up around the edges, helping handle large volumes of data, highlighting patterns, and speeding up routine steps. It doesn’t take over clinical responsibility, but it can take pressure off the process. AI Superior works with healthcare organizations to move from ideas to working solutions.

They start by breaking down real processes, identifying where AI can make sense, and then building custom systems that connect to existing infrastructure instead of replacing it. Their work is focused on making AI usable in everyday operations, not just testing concepts. If you are looking at AI in clinical settings, it is more useful to evaluate it on real workflows rather than general claims.

Contact AI Superior to explore what can be improved without disrupting how care is delivered.

Why AI Won’t Replace Physicians: The Fundamental Barriers

A philosophical analysis published in the Journal of Medical Ethics and History of Medicine argues that the idea of AI completely replacing physicians is a “pseudo-problem.” Here’s why.

The Embodiment Problem

Medicine isn’t just pattern recognition. Physical examination requires tactile feedback, observation of subtle patient movements, and integration of sensory information that AI systems lack.

A urologist at Keck Medicine of USC explained: “I know the patient’s history, the previous surgeries they’ve had and obstacles I might encounter. AI can’t really do that yet.”

That word “yet” is important, but so is the recognition of current limitations.

The Complexity of Clinical Decision-Making

Medical decisions involve weighing incomplete information, managing uncertainty, and considering patient values. These aren’t computational problems with optimal solutions.

According to research from the University of Messina published in Healthcare, there’s no universally accepted definition of AI in medicine despite its growing use. The ambiguity reflects the complexity of medical practice itself.

Clinical intuition develops from thousands of patient interactions. AI can process more data, but pattern matching isn’t the same as clinical reasoning.

The Trust and Relationship Factor

The doctor-patient relationship involves empathy, communication, and trust-building that extend beyond information exchange. Research published in Healthcare emphasizes how AI shapes rather than replaces this relationship.

Even for highly stigmatized diseases, patients prefer human physicians for diagnosis and treatment decisions. The human element isn’t a legacy feature to be optimized away.

| Clinical Capability | AI Performance | Human Physician Performance | Current Best Approach |
|---|---|---|---|
| Image classification accuracy | High (90-95%+ in specific domains) | Variable (85-95% depending on specialty) | AI-assisted human review |
| Documentation speed | Excellent (real-time generation) | Slow (significant time burden) | AI automation with physician oversight |
| Complex differential diagnosis | Limited (struggles with rare conditions) | Strong (especially with experience) | Physician-led with AI support |
| Patient communication | Poor (lacks empathy and nuance) | Essential (core clinical skill) | Human physician primary |
| Ethical decision-making | Not applicable (requires values) | Required (central to practice) | Human physician exclusively |
| Literature review | Excellent (rapid comprehensive search) | Time-consuming but thorough | AI-assisted research |

Regulatory and Liability Challenges

Who’s responsible when AI makes an error? The World Health Organization released guidance on AI regulation emphasizing the importance of establishing safety and effectiveness before widespread adoption.

Medical practice involves legal accountability. Current frameworks assign responsibility to human practitioners, not algorithms.

Until liability frameworks evolve—and that’s a massive undertaking—AI will function as a tool used by physicians, not an independent practitioner.

How AI Actually Changes Medical Practice

The real story isn’t replacement. It’s transformation.

The Complementary Model

Research indexed in PubMed emphasizes “complementing, not replacing” doctors and healthcare providers. The use of AI in clinical practice contributes to improved diagnostic accuracy and optimized treatment planning.

Keck Medicine of USC frames it clearly: “What we’re looking for is not necessarily the perfect AI model that’s going to be 100% accurate but more AI technology that can help a physician make better decisions.”

That’s the practical approach emerging in healthcare systems.

Redefining Physician Productivity

The Korean Medical Association research found that AI revolutionizes physician productivity by handling repetitive tasks. Physicians shift time from data entry to patient interaction.

This changes what “being a doctor” means day-to-day, but it doesn’t eliminate the role.

Addressing Healthcare Workforce Challenges

Healthcare faces skilled workforce shortages globally. The World Health Organization notes that AI holds potential to address these challenges by extending the reach of available providers.

In resource-limited settings, AI-assisted diagnosis can help less specialized practitioners make decisions that previously required specialists. That’s not replacement—it’s capability extension.

What Physicians Think About AI

A cross-sectional quantitative study of 105 physicians in Saudi Arabia examined attitudes toward AI at a conceptual level and found no significant differences by sex, work category, or years of experience. The findings highlighted significant concerns alongside recognition of potential benefits.

Generally speaking, physicians acknowledge AI’s capabilities while expressing reservations about over-reliance on technology. The concerns aren’t Luddite resistance—they reflect legitimate questions about patient safety and care quality.

The Knowledge and Training Gap

Research from Zagazig University examined the impact of AI enhancement programs on healthcare providers’ knowledge and attitudes. The integration of AI influences workplace flourishing when accompanied by proper training.

But wait. If physicians need specialized training to use AI effectively, doesn’t that reinforce their central role rather than threaten it?

Exactly.

The Ethical and Governance Framework

The World Health Organization released ethics and governance guidance for large multi-modal models in healthcare. The guidance emphasizes ensuring AI works “to the public benefit of all countries.”

WHO Director-General Tedros Adhanom Ghebreyesus stated: “The future of healthcare is digital, and we must do what we can to promote universal access to these innovations and prevent them from becoming another driver for inequity.”

Notice the framing: AI as innovation requiring human governance, not autonomous replacement.

Six Principles for AI in Health

The WHO guidance identifies ethical challenges and establishes six consensus principles to ensure AI serves public benefit. These principles acknowledge AI as a tool requiring ethical oversight—something algorithms can’t provide for themselves.

Medical practice involves value judgments that reflect societal consensus, cultural context, and individual patient preferences. These aren’t computational problems.

Specific Medical Specialties and AI Impact

Not all specialties face the same level of AI disruption.

Radiology and Pathology

These image-intensive specialties see the most significant AI adoption. But radiologists aren’t disappearing—they’re evolving into specialists who interpret AI findings in clinical context.

The radiologist of 2026 spends less time on routine screening and more time on complex cases requiring expert judgment.

Primary Care

Primary care involves relationship continuity, preventive counseling, and care coordination. AI assists with clinical decision support and documentation, but the relationship-centered nature of primary care resists automation.

Surgery

As the Keck Medicine urologist noted, surgical practice involves tactile knowledge, patient-specific anatomical understanding, and real-time decision-making during procedures.

Robotic surgical systems enhance precision, but they’re operated by surgeons. AI provides intraoperative guidance, but doesn’t replace surgical expertise.

| Medical Specialty | AI Disruption Level | Primary AI Applications | Expected Future Role |
|---|---|---|---|
| Radiology | High | Image analysis, automated reporting | Expert interpretation and complex cases |
| Pathology | High | Digital pathology analysis, pattern detection | Diagnostic confirmation and rare diseases |
| Primary Care | Moderate | Clinical decision support, triage | Relationship-centered comprehensive care |
| Emergency Medicine | Moderate | Risk stratification, predictive analytics | Acute care and critical decision-making |
| Surgery | Low-Moderate | Surgical planning, robotic assistance | Complex procedures and patient-specific care |
| Psychiatry | Low | Symptom tracking, therapy support | Therapeutic relationship and treatment |

What Patients Want: The Human Element

Research on patient preferences shows patients favor human physicians for medical diagnosis, even when acknowledging AI’s potential accuracy advantages.

Research on algorithm aversion shows people discount algorithmic advice more than human advice, particularly in high-stakes medical decisions.

Sound familiar? Patients want expertise, but they also want someone who understands their fears, explains options in accessible language, and makes them feel heard.

AI doesn’t do that. Not yet. Maybe not ever.

The Self-Diagnosis Trend

According to peer-reviewed literature, patients increasingly use AI tools for preliminary self-diagnosis support.

But the research explicitly states: “Patient-facing AI tools remain a means of assistance and do not replace consultation with a physician.”

Even the tools designed for patient use acknowledge physician consultation as essential.

The Next Decade: Realistic Predictions

So what does the future actually look like?

Expanded AI Capabilities

AI will continue improving in diagnostic accuracy, predictive analytics, and personalized treatment recommendations. Large language models will enhance clinical documentation and patient communication support.

These advancements make physicians more effective, not obsolete.

Hybrid Practice Models

The emerging model combines AI efficiency with human expertise. Physicians become interpreters and integrators of AI-generated insights rather than the sole generators of those insights.

That’s a role transformation, not elimination.

Specialized Human Skills

As AI handles routine tasks, physician training may emphasize communication, ethical reasoning, and complex decision-making. These distinctly human capabilities become more valuable, not less.

Preparing for the AI-Augmented Future

Healthcare systems need strategies to integrate AI effectively while maintaining the physician’s central role.

Medical Education Evolution

Research on AI enhancement programs shows that proper training impacts healthcare providers’ knowledge, attitudes, and workplace flourishing. Medical education must incorporate AI literacy alongside traditional clinical skills.

Future physicians need to understand AI capabilities and limitations to use these tools effectively.

Regulatory Frameworks

The World Health Organization emphasizes rapid availability of appropriate systems alongside safety assurance. Regulation must balance innovation with patient protection.

Establishing clear guidelines for AI development, validation, and deployment protects patients while enabling beneficial technology adoption.

Equitable Access

AI risks becoming another driver of health inequity if access concentrates in well-resourced settings. WHO’s vision emphasizes universal access to digital health innovations.

Preventing AI from exacerbating health disparities requires intentional policy and investment.

Frequently Asked Questions

Will AI completely replace doctors in the future?

No. Medical research and expert consensus indicate AI will augment rather than replace physicians. The complexity of medical decision-making, patient relationships, and ethical judgment requires human physicians. AI excels at specific tasks like image analysis and documentation but lacks the contextual understanding and empathy essential to medical practice.

Which medical specialties are most threatened by AI?

Radiology and pathology face the highest level of AI disruption due to their focus on pattern recognition in images. However, even in these specialties, physicians are evolving rather than disappearing—they focus on complex cases and clinical interpretation while AI handles routine analysis. No specialty faces complete replacement.

Do patients trust AI for medical diagnosis?

Research shows patients prefer human physicians for medical diagnosis even when AI demonstrates higher accuracy. This “algorithm aversion” is particularly strong for high-stakes medical decisions and stigmatized diseases. Patients value the human element in healthcare beyond pure diagnostic accuracy.

How does AI reduce physician burnout?

According to research from the Korean Medical Association, AI significantly reduces physician workload by automating repetitive documentation and data entry tasks. Automated electronic medical record systems free physicians to focus on direct patient care rather than administrative burden, addressing a major contributor to burnout.

What are the main ethical concerns about AI in medicine?

Key ethical concerns include accountability for AI errors, patient privacy and data security, potential bias in AI algorithms, equitable access to AI-enhanced care, and maintaining the human element in patient relationships. The World Health Organization has released ethics and governance guidance addressing these challenges.

Is AI accurate enough to make medical decisions independently?

AI achieves high accuracy in specific, well-defined tasks like image classification. However, medical decisions involve weighing incomplete information, managing uncertainty, and considering patient values—complexities beyond current AI capabilities. AI provides decision support but requires physician oversight for clinical application.

How should physicians prepare for working with AI?

Research on AI enhancement programs shows that training significantly impacts healthcare providers’ knowledge and attitudes. Physicians should develop AI literacy, understand both capabilities and limitations of AI tools, maintain strong clinical reasoning skills, and emphasize distinctly human skills like communication and empathy that become more valuable as AI handles routine tasks.

Conclusion: Transformation, Not Replacement

The evidence from medical research, healthcare organizations, and clinical practice points to a clear conclusion: AI will transform medical practice without replacing physicians.

AI excels at data processing, pattern recognition, and administrative tasks. These capabilities reduce physician workload and enhance diagnostic accuracy. But medicine involves human judgment, empathy, and ethical reasoning that extend beyond computational capabilities.

The World Health Organization, medical research institutions, and practicing physicians consistently frame AI as a complementary tool rather than a replacement. The future involves a physician-AI partnership that optimizes patient care.

Real talk: the physicians most at risk aren’t those who might be replaced by AI. They’re those who refuse to adapt to AI-augmented practice.

The question isn’t whether AI will replace physicians. It’s how physicians will use AI to provide better care.

And that’s a fundamentally different—and far more interesting—question.

Ready to explore how AI is reshaping specific aspects of healthcare? Dive deeper into the technologies transforming medicine while keeping human expertise at the center of patient care.