Quick Summary: AI will not replace professors entirely, but it will fundamentally transform their roles. While AI can automate grading, content delivery, and administrative tasks, the human elements of teaching (mentorship, critical thinking development, emotional connection, and ethical guidance) remain irreplaceable. Professors who adopt AI as a tool will become more effective, while those who resist may struggle.

The question hanging over university campuses isn’t subtle anymore. Will artificial intelligence replace professors? It’s a fair concern. AI tutors can answer questions at 3 AM. ChatGPT writes essays in seconds. Automated grading systems process assignments faster than any human.

But here’s the thing—this isn’t really about replacement. It’s about transformation.

According to a survey by the American Association of Colleges & Universities and Elon University’s Imagining the Digital Future Center (published January 2025), 95% of higher education leaders expressed concern about generative AI’s impact on teaching and learning. That’s not panic. That’s recognition that something fundamental is shifting.

The real story? AI is redefining what professors do, not eliminating the profession. Some tasks will disappear. Others will become more important. And the professors who thrive will be those who understand the difference.

What AI Can Actually Replace in Academic Work

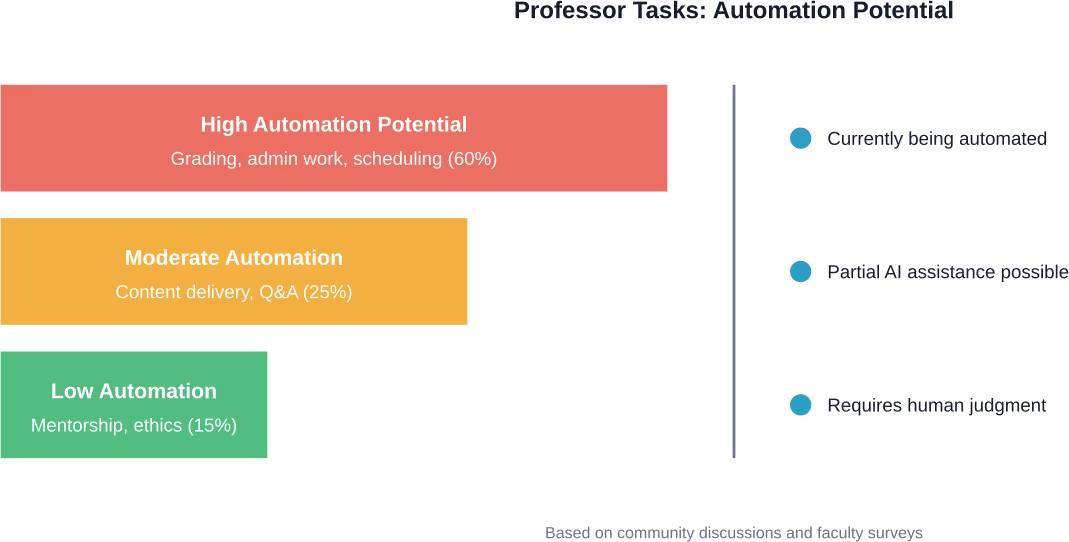

Let’s be honest about what’s vulnerable. By rough estimates, 40-60% of typical professorial tasks could be automated with current technology, depending on the institution and discipline. Some of that automation is already happening on campuses worldwide.

Administrative work tops the list. Syllabus generation, email responses to common questions, scheduling, and committee documentation can all be handled by AI systems. One professor described these tasks as “the bureaucratic paper shuffle” that consumes hours without directly benefiting students.

Grading represents another major category. Multiple-choice tests? Already automated. Short-answer questions? AI can evaluate those with reasonable accuracy. Even essay grading is becoming feasible for basic assignments, with AI systems checking structure, argument coherence, and citation accuracy.

Content delivery is evolving too. AI-powered platforms can present lectures, adapt to individual learning speeds, and provide instant feedback on practice problems. Pavel Pevzner at UC San Diego has explored Massive Adaptive Interactive Texts (MAITs)—AI technology designed to replace one-size-fits-all lectures with responsive, individualized instruction systems.

The Automation Reality Check

But wait. Just because something can be automated doesn’t mean the automation works well.

AI grading misses nuance. It can’t appreciate creative approaches that break conventional patterns. It struggles with context-dependent answers. And it definitely can’t gauge whether a student truly understands material or just learned to game the algorithm.

As one philosophy professor at Utah Valley University put it: “I cannot live my life that way, and I refuse to do so.” Nearly 40% of students at that institution are first-generation college attendees. For them, the human connection with professors often determines whether they persist or drop out.

The technology exists. The question is whether using it serves students better than human judgment.

What Makes Professors Irreplaceable

Here’s what AI can’t do: make students feel seen.

Student testimonials consistently highlight the same themes. “You pushed me out of my comfort zone.” “You made me see a better version of myself.” “Your passion for teaching is contagious.” These aren’t responses to information delivery. They’re responses to human connection.

Critical thinking development requires more than presenting information. Professors create cognitive dissonance—they challenge assumptions, ask uncomfortable questions, and push students beyond memorization into synthesis. An AI can present a Socratic dialogue. It can’t read the room and know when to press harder or when a student needs encouragement instead.

Mentorship extends beyond coursework. Professors write recommendation letters that actually know the student. They provide career guidance based on years of watching how industries evolve. They offer emotional support during academic struggles. They model how to think like a historian, scientist, or philosopher—not just what to think.

The Unscripted Moment Problem

Teaching’s most powerful moments are unscripted. A tangential question leads to a breakthrough discussion. A failed group project becomes a lesson in resilience and collaboration. A student’s personal experience connects course material to real-world implications no textbook anticipated.

AI operates on patterns. It can’t improvise meaningfully because it doesn’t understand context the way humans do. It can’t recognize when breaking from the lesson plan will serve learning better than following it.

Community discussions among professors emphasize this reality repeatedly. The consensus? AI won’t replace professors who care, innovate, challenge, and push. But it probably will replace professors who already act like robots—delivering rote lectures without engagement, grading mechanically without feedback, treating students as numbers rather than individuals.

How AI Is Transforming The Professor’s Role

Transformation looks different than replacement. Rather than eliminating professors, AI is shifting what they spend time on.

According to a Medium article on AI and professors, the U.S. National Science Foundation, along with Capital One and Intel, is investing $100 million in National AI Research Institutes. The focus? Not replacing educators, but enhancing their capabilities.

Professors are becoming designers rather than just deliverers. They’re creating AI-augmented learning experiences where technology handles repetitive tasks while humans focus on higher-order thinking. They’re developing assignments that incorporate AI tools, teaching students to use technology critically rather than dependently.

Research roles are expanding too. Faculty now track how AI use influences student performance, document what works and what fails, and publish findings that shape educational AI development. They’re not passive recipients of technology—they’re actively shaping how it’s built and deployed.

The Efficiency Amplification Effect

AI makes good professors better. That’s the practical reality emerging from early adoption.

Tasks like creating course materials, drafting personalized feedback, and answering routine questions can be AI-assisted, freeing hours for actual teaching. One industry observer with decades of educational technology experience noted that when property management systems automated hotel night audits—previously an eight-hour manual process—it didn’t eliminate jobs. It shifted workers toward higher cognitive responsibilities.

The same pattern appears in higher education. AI handles routine work. Professors gain bandwidth for the work machines can’t do: facilitating difficult discussions, mentoring struggling students, developing innovative pedagogy, conducting research.

In March 2026, the U.S. National Science Foundation announced the TechAccess: AI-Ready America initiative, a coordinated effort to enable Americans to understand, apply, and create with artificial intelligence. The program includes Coordination Hubs receiving $1 million annually for three years, with 10 hubs selected in the first round, 20 in the second, and the remainder in round three.

That’s investment in integration, not elimination.

| Traditional Professor Role | AI-Augmented Professor Role | Key Change |
|---|---|---|
| Lecture content delivery | Discussion facilitation and Socratic questioning | From information source to thinking guide |
| Manual grading of all assignments | AI-assisted grading with human oversight for complexity | Time freed for personalized feedback |
| Standard assignments for all students | Adaptive assignments that incorporate AI tools | Teaching AI literacy alongside content |
| Office hours for basic questions | Office hours for complex mentorship | AI chatbots handle routine queries |
| Creating all materials from scratch | Curating and customizing AI-generated materials | Designer role rather than pure creator |

Apply AI In Education Without Losing What Matters

AI can support teaching tasks, but meaningful learning still depends on how those tools are applied and guided by people.

AI Superior works on practical AI implementation, including in environments where accuracy, structure, and human oversight matter. They help organizations design and build custom AI solutions, integrate machine learning into existing systems, and set up data workflows that support real use cases. In education-related contexts, that can mean supporting content systems, research processes, or internal tools — without trying to replace the human role behind them.

If you’re looking at AI as a support layer rather than a shortcut in education or research, contact AI Superior to see how it can fit into your setup.

The Student Experience Problem No One Talks About

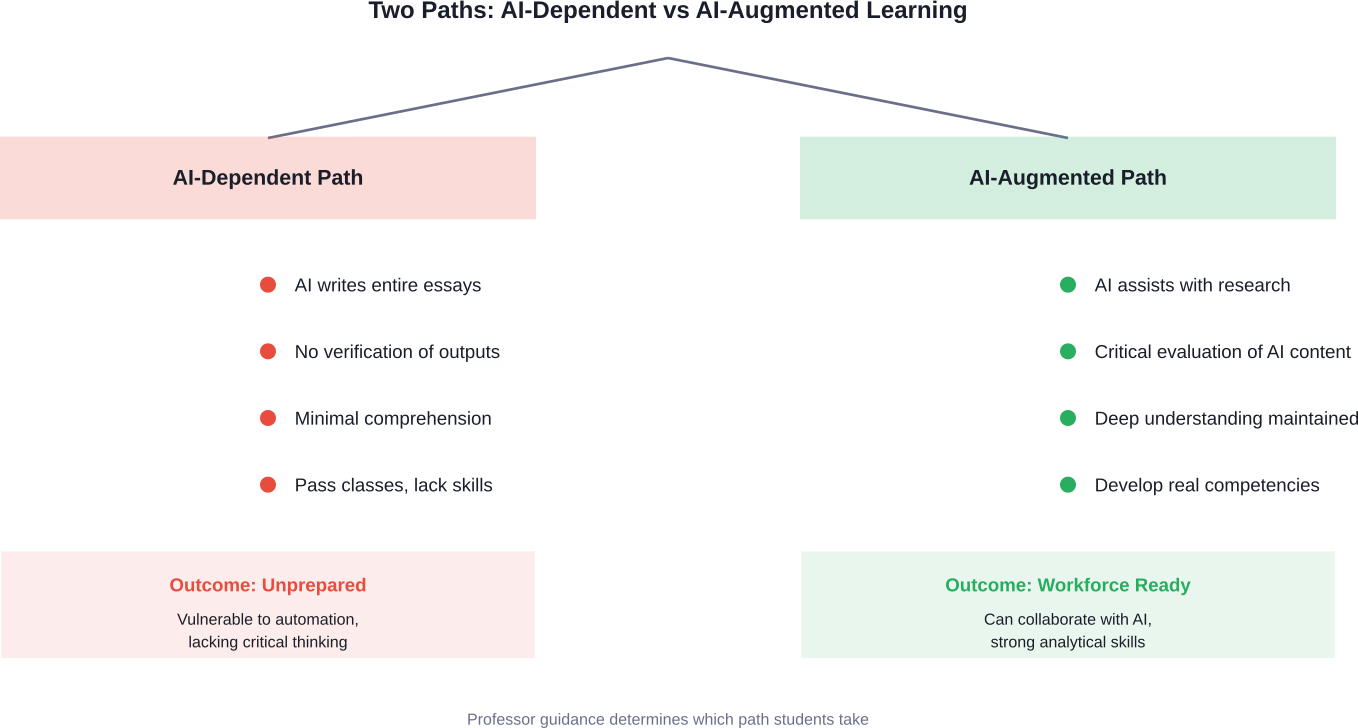

Students using AI without learning creates a quiet crisis. They’re getting degrees without developing competencies.

Across campuses, professors report the same phenomenon: essays that sound sophisticated but lack coherent argument structure. Problem sets completed correctly by students who can’t explain the reasoning. Research papers with perfect citations but no original synthesis.

The technology makes it easy to fake learning. And some students are taking that path.

But here’s where professor irreplaceability becomes critical. Human educators can spot the difference between AI-assisted learning and AI-dependent shortcutting. They can ask follow-up questions. They can require verbal explanations. They can create assessments that test understanding rather than just output.

Research from the University of Hawaii Economic Research Organization citing Pew Research Center analysis finds that occupations most exposed to AI aren’t low-wage or low-skill jobs, but higher-paying, knowledge-intensive roles. Workers in highly AI-exposed jobs earn an average of $33 an hour, compared with $20 among those with less AI exposure.

The implication? Students who use AI as a crutch rather than a tool are preparing for jobs that won’t exist or won’t pay well. Professors serve as guides helping students use technology without becoming dependent on it.

Teaching AI Literacy as Core Competency

Forward-thinking faculty aren’t banning AI. They’re teaching responsible use.

Assignments now include expectations about AI tool usage. Students learn to evaluate AI outputs, verify information, and integrate machine assistance into human reasoning rather than substituting it. This mirrors how professionals in every field increasingly work.

Research from the Brookings Institution and MIT examining the future of work suggests that AI works best as augmentation—technologies that make human skills and expertise more valuable—rather than pure automation. Professors teaching this skill provide irreplaceable value.

The NSF’s February 2026 CyberAI SFS (CyberAICorps Scholarship for Service) program integrates AI education with cybersecurity operations and workforce development, with two tracks supporting up to 25 projects per fiscal year.

The Economic Reality of Higher Education

Universities face financial pressure. AI offers apparent cost savings. That’s the uncomfortable truth driving some institutional interest in automation.

If AI can “teach” 500 students as easily as 50, the business case for reducing faculty becomes tempting. Especially at institutions struggling with enrollment declines or budget cuts.

But this calculation misses critical factors. Student retention suffers without human connection. Graduation rates drop when students feel like numbers. Alumni donation rates correlate with meaningful faculty relationships during college years.

The Brookings Institution and MIT research on augmentation versus pure automation applies here too. Organizations that eliminated human roles entirely often found themselves rebuilding them after discovering technology couldn’t handle complexity.

The Prestige Paradox

Elite universities won’t eliminate professors. Their value proposition depends on access to distinguished faculty. Parents pay premium tuition specifically for small classes with renowned scholars.

The risk falls on less-selective institutions, community colleges, and programs already using contingent faculty. These serve the most vulnerable student populations—first-generation college students, working adults, underrepresented minorities. For these students, professor relationships often determine success or failure.

Replacing professors with AI at institutions serving disadvantaged populations would essentially create a two-tier system: wealthy students get human mentorship, everyone else gets algorithms. That’s not just unfair—it’s counterproductive for workforce development.

Materials from March 2026 NSF initiatives emphasize making AI accessible to all Americans, not creating AI-based inequality. The goal involves enabling communities, workers, and students to understand and work with AI, which requires human educators who understand both the technology and the people using it.

What Professors Must Do to Stay Relevant

Adaptation isn’t optional anymore. Professors who treat AI as irrelevant or purely threatening will struggle. Those who embrace it strategically will thrive:

- First: Learn the tools. Professors don’t need to become programmers, but they do need working knowledge of major AI platforms, their capabilities, and their limitations. Using AI daily builds intuition about what it can and can’t do.

- Second: Redesign assessments. Traditional essays and problem sets are increasingly AI-vulnerable. Effective alternatives include oral examinations, iterative projects with required check-ins, collaborative work with peer evaluation, and assignments requiring personal reflection or experience integration.

- Third: Teach explicitly about AI. Make AI literacy part of every course. Discuss when AI use is appropriate, how to verify AI-generated information, and how AI fits into professional practice in the field.

The Co-Creation Model

Some of the most innovative faculty are involving students in AI experimentation. Rather than dictating rules, they’re exploring together how AI changes their discipline.

Students bring fresh perspectives. They’re often more comfortable with technology. Creating assignments collaboratively—where students help determine how AI should and shouldn’t be used—builds investment in ethical use.

This approach also models lifelong learning. Professors admitting uncertainty about AI’s full implications, then working with students to figure it out, demonstrates intellectual humility and adaptability. Those are skills every professional needs in a rapidly changing technological landscape.

NSF’s August 2025 announcements (specifically the Expanding K-12 Resources for AI Education Dear Colleague Letter) focused on expanding K-12 AI education, including resources for high school students and teacher professional development.

| Old Faculty Mindset | AI-Ready Faculty Mindset |
|---|---|
| “AI is cheating, ban it completely” | “AI is a tool, teach responsible use” |
| “My lectures are irreplaceable” | “My mentorship is irreplaceable” |
| “Technology threatens my job” | “Technology changes my job” |
| “Students must work like I did” | “Students must learn for their world, not mine” |
| “I’m the sole knowledge source” | “I’m the guide to knowledge evaluation” |

The Timeline Question: How Fast Is This Happening?

According to a professor’s LinkedIn post, Bill Gates recently said that in 10 years, most teachers will be replaced by AI. That statement sparked considerable debate among educators. Was it realistic? Alarmist? Or perhaps too conservative?

As of 2026, we’re not seeing wholesale replacement. What we’re seeing is accelerating integration.

This year’s senior class is the first to have spent nearly its entire college career in the age of generative AI. These students never knew higher education without ChatGPT. Their expectations and behaviors differ fundamentally from previous cohorts.

Faculty are adapting faster than many predicted. The 95% of higher education leaders who expressed concern about AI’s impact aren’t paralyzed. They’re actively experimenting, sharing strategies, and developing institutional policies.

Technology adoption in education typically follows a pattern: hype, disappointment, then gradual practical integration. AI appears to be moving through this cycle faster than previous technologies, but it’s still following the pattern.

What Five Years Might Bring

Based on current trajectories, the 2030 university landscape likely includes:

- AI teaching assistants that handle routine questions 24/7, managed by professors who focus on complex inquiries.

- Adaptive learning platforms that adjust pace and difficulty, with professors monitoring overall progress and intervening for struggling students.

- Automated grading for objective assignments, with human evaluation reserved for creative, analytical, or nuanced work.

- Virtual reality simulations for experiential learning, designed and facilitated by faculty.

But lectures won’t disappear. Office hours won’t become optional. Thesis advising won’t be automated. The core relationship between expert educator and developing scholar remains essential to higher education’s purpose.

Research on AI and workforce development emphasizes that AI’s impact on knowledge work is complex. The University of Hawaii Economic Research Organization finds that the occupations most exposed to AI are higher-paying, knowledge-intensive roles, which suggests these workers are more likely to collaborate with AI than to be replaced by it.

The Global Perspective on AI and Higher Education

This transformation isn’t uniquely American. Universities worldwide face similar questions, though approaches vary.

Some countries emphasize AI skill development as national priority, viewing professor-led AI integration as workforce preparation. Others express more concern about preserving traditional academic values amid technological disruption.

International collaboration on AI education research is expanding. What works in one context may not transfer directly to another, but principles are emerging: AI works best as augmentation, not replacement. Human connection remains central to learning. Critical thinking becomes more important as information access becomes easier.

NSF’s March 2026 initiative explicitly aims to coordinate AI readiness across American communities. Its Coordination Hubs, each funded at $1 million yearly for three years, bring together educational institutions, businesses, and community organizations.

That coordination model recognizes that preparing for an AI-integrated future requires human networks, not just technology deployment.

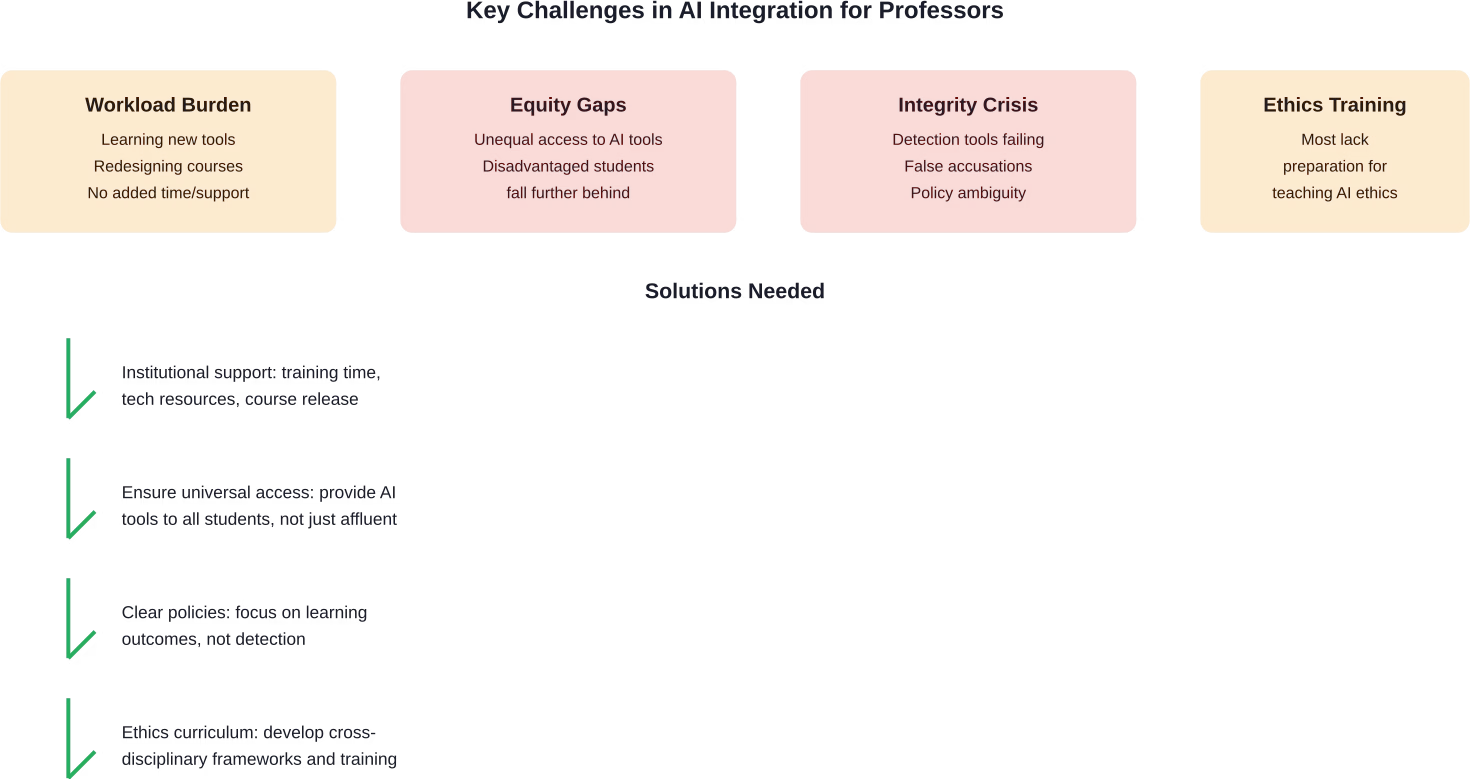

Challenges That Threaten the Transition

Not everything about this transformation is smooth. Real obstacles exist, and ignoring them doesn’t help.

Faculty workload is already unsustainable at many institutions. Adding “learn AI tools and redesign all your courses” to existing responsibilities without additional support creates burnout, not innovation. Universities need to provide training time, technical support, and course redesign resources.

Equity concerns are significant. Students from disadvantaged backgrounds may lack access to AI tools at home, creating achievement gaps. Institutions must ensure AI integration doesn’t privilege already-privileged students.

Academic integrity systems are struggling. Detection tools produce false positives. Students are learning to hide AI use. Professors face impossible investigation loads. Clear policies with reasonable expectations are essential, but they’re still evolving at most institutions.

The Ethics Teaching Gap

AI raises ethical questions that most professors aren’t trained to address. Bias in algorithms. Privacy implications. Environmental costs of computational resources. Intellectual property ambiguity. Labor displacement concerns.

These issues cross disciplinary boundaries. A biology professor using AI for genetics research confronts different ethical questions than a literature professor whose students use AI for textual analysis. Both need frameworks for addressing ethics in their context.

Faculty are essentially becoming ethics educators by necessity. That’s outside the training most received in graduate school. Professional development focused on ethical AI use is becoming essential, not optional.

According to materials from various academic institutions and research centers, professors increasingly see themselves as AI policymakers within their classrooms and departments. They’re not just implementing institutional rules—they’re creating norms, teaching values, and modeling responsible technology use.

Real Stories From The Transition

Theory is one thing. Practice is another. Professors across disciplines are navigating this transition now, with mixed results.

A philosophy professor at a large public institution serving many first-generation students explicitly refuses to accommodate AI-based shortcuts. The reasoning? These students need critical thinking skills more than anyone. Letting them outsource cognitive work to machines undermines their education’s entire purpose.

Meanwhile, other faculty embrace experimentation. They assign students to use AI, then critique the outputs. They ask students to improve on AI-generated work. They discuss why AI produces biased or incorrect results. The classroom becomes a space for learning both course content and technological literacy.

Some professors report frustration. They redesign an assignment to be AI-resistant, only to discover students found workarounds. Others express relief that administrative tasks finally have technical solutions, freeing them to focus on the teaching they actually enjoy.

The Discipline Difference

AI’s impact varies significantly by field. STEM disciplines often find AI integration more straightforward—tools exist for automated problem checking, simulations, and data analysis that genuinely enhance learning.

Humanities face different challenges. How do you teach literary analysis when AI can generate competent essays? How do you develop historical thinking when students can ask AI for interpretations? The answer involves moving beyond content recall into metacognition—teaching students to think about thinking, question assumptions, and develop original arguments AI can’t replicate.

Professional programs—business, education, nursing—must prepare students for AI-integrated workplaces. These programs increasingly treat AI as tool training, similar to teaching spreadsheet proficiency or presentation skills.

The NSF’s focus on STEM workforce development through AI-ready initiatives recognizes that different disciplines need different approaches. March 2026 announcements emphasized transforming how students learn STEM subjects, not just adding AI content to existing courses.

The Verdict: What’s Actually Happening

So will AI replace professors? The evidence says no—but with important caveats.

AI is replacing certain tasks that professors currently do. That’s undeniable. Grading, administrative work, basic content delivery, and routine student questions are increasingly automated. That’s roughly 40-60% of traditional professorial work, depending on the institution and discipline.

But AI isn’t replacing the professor role itself. What’s emerging is a transformed position where human educators focus on aspects of teaching that require judgment, empathy, creativity, and relationship-building. These capacities remain beyond current AI capabilities, and likely will for the foreseeable future.

The professors at risk aren’t those doing excellent teaching. They’re those who were already performing their jobs mechanically—delivering lectures without engagement, grading without feedback, treating education as information transfer rather than human development.

Real talk: if your entire job can be replicated by current AI, you weren’t really teaching. You were delivering information, which students could always get from textbooks, videos, or now, chatbots.

The Two-Tier Risk

The genuine concern isn’t about replacing all professors. It’s about creating educational inequality where wealthy students get human mentorship and disadvantaged students get algorithms.

Elite institutions won’t eliminate faculty. Public universities serving vulnerable populations might face pressure to cut costs through automation. That would worsen existing inequalities rather than improving education.

Preventing this outcome requires intentional policy choices. Federal initiatives like NSF’s AI-Ready America aim to ensure broad access rather than exclusive advantage. But implementation will determine whether AI democratizes education or concentrates privilege.

Research examining workforce impacts of AI consistently shows that technology’s effects depend on how it’s deployed. Organizations using AI to augment workers see productivity gains and improved outcomes. Those using AI purely for cost-cutting through job elimination often see quality decline and organizational dysfunction.

Higher education faces the same choice.

Frequently Asked Questions

Will AI completely replace university professors in the next 10 years?

No. While AI will automate certain teaching tasks like grading and content delivery, the core professorial functions (mentorship, critical thinking development, ethical guidance, and human connection) remain beyond AI capabilities. Professors who adapt will become more effective, not obsolete. Given the complexity of genuine education, current trajectories suggest wholesale replacement within a decade is unlikely.

What percentage of a professor’s job can AI actually do?

Current estimates suggest 40-60% of traditional professorial tasks could be automated, primarily administrative work, grading, and routine content delivery. However, the most valuable aspects of teaching—facilitating discussion, mentoring students, developing original research, and creating meaningful learning experiences—remain human-centered. The percentage varies significantly by discipline and institution type.

Should students be allowed to use AI tools for assignments?

Rather than blanket bans or unrestricted use, educators are moving toward teaching responsible AI use. Students should learn to leverage AI tools critically—using them for research assistance, draft improvement, and idea generation while maintaining original thinking and proper attribution. The goal is preparing students for professional environments where AI collaboration is standard, not eliminating technology from education.

How are professors detecting AI-written assignments?

Detection remains challenging and imperfect. AI detection tools produce false positives and students learn to evade them. Many professors are shifting away from trying to catch AI use toward designing assignments that require demonstrable understanding—oral presentations, iterative projects with check-ins, personal reflection integration, and in-class work. The focus moves from policing to pedagogy.

Which professor jobs are most at risk from AI automation?

Positions focused primarily on large-lecture content delivery and standardized grading face the most automation pressure. Contingent faculty at under-resourced institutions teaching introductory courses might be vulnerable if institutions prioritize cost-cutting over educational quality. Conversely, professors engaged in research, personalized mentorship, small seminars, and innovative pedagogy are least at risk.

What should professors do to prepare for AI integration in education?

Faculty should learn major AI tools through regular use, redesign assessments to emphasize understanding over outputs, teach AI literacy explicitly within their courses, and participate in policy development at their institutions. Viewing AI as a tool that changes rather than threatens the profession enables productive adaptation. Professional development focused on AI integration is becoming essential.

Will AI make education more or less expensive?

The impact on educational costs depends on institutional choices. If universities use AI primarily for cost-cutting through faculty reduction, short-term expenses might decrease while educational quality suffers. If institutions use AI to enhance professor effectiveness and student outcomes, costs might remain similar but value increases. Federal and institutional policies will shape whether AI democratizes access or creates two-tier educational systems.

The Path Forward

Higher education stands at a genuine inflection point. The decisions made over the next few years will shape teaching and learning for decades.

Institutions that use AI thoughtfully—as amplification rather than replacement—will produce better-prepared graduates with stronger critical thinking skills and technological literacy. Universities that treat AI purely as a cost-cutting opportunity will damage educational quality and student outcomes.

Professors have agency in this transition. Those who engage proactively with AI, experiment with integration, share what works and what fails, and advocate for student-centered policies will shape the transformation. Those who wait passively for institutions to dictate changes will have less influence over outcomes.

Students need guidance more than ever. Information has never been more abundant or easier to access. Wisdom—knowing what information matters, how to evaluate it, and how to apply it ethically—remains scarce. That’s what professors provide.

The question isn’t whether AI will replace professors. It’s whether educators will help students use AI wisely or watch them become dependent on tools they don’t understand. The answer to that question determines whether the next generation enters a workforce ready to work with AI or vulnerable to being replaced by it.

NSF initiatives launched through early 2026 aim to prepare all Americans, including workers, students, business owners, and communities, to be AI-ready. That requires human educators who understand both technology and people.

AI won’t replace professors who do that work. It will make them more essential than ever.

The future of higher education isn’t automated lectures and algorithm-graded essays. It’s professors liberated from busywork to focus on what they do best: challenging students to think deeply, pushing them beyond comfortable assumptions, and preparing them not just for jobs, but for thoughtful citizenship in a complex world.

That’s work machines can’t do. And it’s work our society desperately needs.