Quick Summary: Predictive analytics trends in 2026 center on real-time forecasting, AI-driven automation, causal machine learning for supply chains, and personalized customer experiences. The market is growing at 22-28% annually, with organizations leveraging event-driven architectures and advanced machine learning to turn historical data into actionable future insights across healthcare, retail, finance, and manufacturing sectors.

The predictive analytics market continues its rapid growth. Estimates put it between $17 billion and $22 billion in 2025, with projected annual growth of 22% to 28% over the next five years. That’s not just incremental progress; it’s a fundamental shift in how organizations make decisions.

But here’s the thing: predictive analytics isn’t what it was even two years ago. The techniques, tools, and applications have evolved dramatically. Real-time data processing, causal machine learning, and AI-powered automation are rewriting the playbook.

So what trends are actually shaping the field in 2026? Let’s break down the developments that matter.

What Predictive Analytics Actually Is in 2026

Predictive analytics uses historical data, statistical methods, and machine learning models to forecast future outcomes or trends. According to Stanford HAI, these techniques analyze patterns in historical data to estimate the likelihood of events such as customer behavior, equipment failures, or market changes.

The practice sits at the intersection of math, statistics, and computer science. It’s fundamentally different from descriptive analytics (what happened?) or diagnostic analytics (why did it happen?). Predictive analytics answers: what will happen?

| Analytics Type | Question Answered | Primary Use |
|---|---|---|
| Descriptive | What happened? | Historical reporting |
| Diagnostic | Why did it happen? | Root cause analysis |
| Predictive | What will happen? | Forecasting and probability |
| Prescriptive | What should we do? | Decision optimization |

The key difference between predictive analytics and machine learning lies in scope and application. Predictive analytics focuses on forecasting specific outcomes for business decisions. Machine learning enables systems to learn from data and improve performance without explicit programming.

That said, the line is blurring. Modern predictive analytics implementations heavily leverage machine learning techniques—particularly deep learning and neural networks.

Causal Machine Learning Transforms Supply Chain Management

One of the most significant trends is the shift from correlation-based models to causal approaches. According to NIST research published in January 2026, causal machine learning represents an empirical breakthrough in supply chain management.

Traditional predictive models identify patterns: “When X happens, Y tends to follow.” Causal models go deeper: “X causes Y through mechanism Z.” This distinction matters enormously when making intervention decisions.

For supply chains specifically, causal ML helps answer questions like:

- Will changing supplier A actually reduce delays, or is the correlation spurious?

- What’s the true impact of inventory policy changes on customer satisfaction?

- Which disruptions genuinely cascade through the network versus which are isolated?

The NIST framework demonstrates how causal inference techniques can be applied to real-world supply chain data, moving beyond simple prediction to understanding the underlying mechanisms driving outcomes.
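
To make the correlation-versus-causation distinction concrete, here is a minimal sketch. It is not the NIST framework itself: the shipment counts are made up, and it assumes route difficulty is the only confounder, which lets a simple stratified comparison (a basic form of backdoor adjustment) stand in for a full causal model.

```python
def build_shipments():
    """Expand made-up summary counts into one record per shipment."""
    # (supplier, route, shipments, delayed): illustrative numbers only
    summary = [
        ("A", "hard", 80, 40),
        ("B", "hard", 20, 10),
        ("A", "easy", 20, 2),
        ("B", "easy", 80, 8),
    ]
    rows = []
    for supplier, route, shipments, delayed in summary:
        rows += [(supplier, route, True)] * delayed
        rows += [(supplier, route, False)] * (shipments - delayed)
    return rows


def delay_rate(rows):
    return sum(delayed for _, _, delayed in rows) / len(rows)


def supplier_gap(rows):
    """Delay rate of supplier A minus delay rate of supplier B."""
    a = [r for r in rows if r[0] == "A"]
    b = [r for r in rows if r[0] == "B"]
    return delay_rate(a) - delay_rate(b)


def adjusted_gap(rows):
    """Compare suppliers within each route, then average by route share."""
    total = 0.0
    for route in sorted({r[1] for r in rows}):
        stratum = [r for r in rows if r[1] == route]
        total += len(stratum) / len(rows) * supplier_gap(stratum)
    return total


rows = build_shipments()
print(f"naive gap:    {supplier_gap(rows):+.2f}")
print(f"adjusted gap: {adjusted_gap(rows):+.2f}")
```

In this toy data, supplier A looks 24 percentage points worse overall, yet the gap vanishes once shipments are compared within the same route type. A simply handles more of the hard routes, so switching suppliers would not reduce delays.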

And this isn’t theoretical. Manufacturers are already implementing causal models to optimize procurement, reduce waste, and build resilience against disruptions.

Real-Time Data Architectures Drive Immediate Forecasting

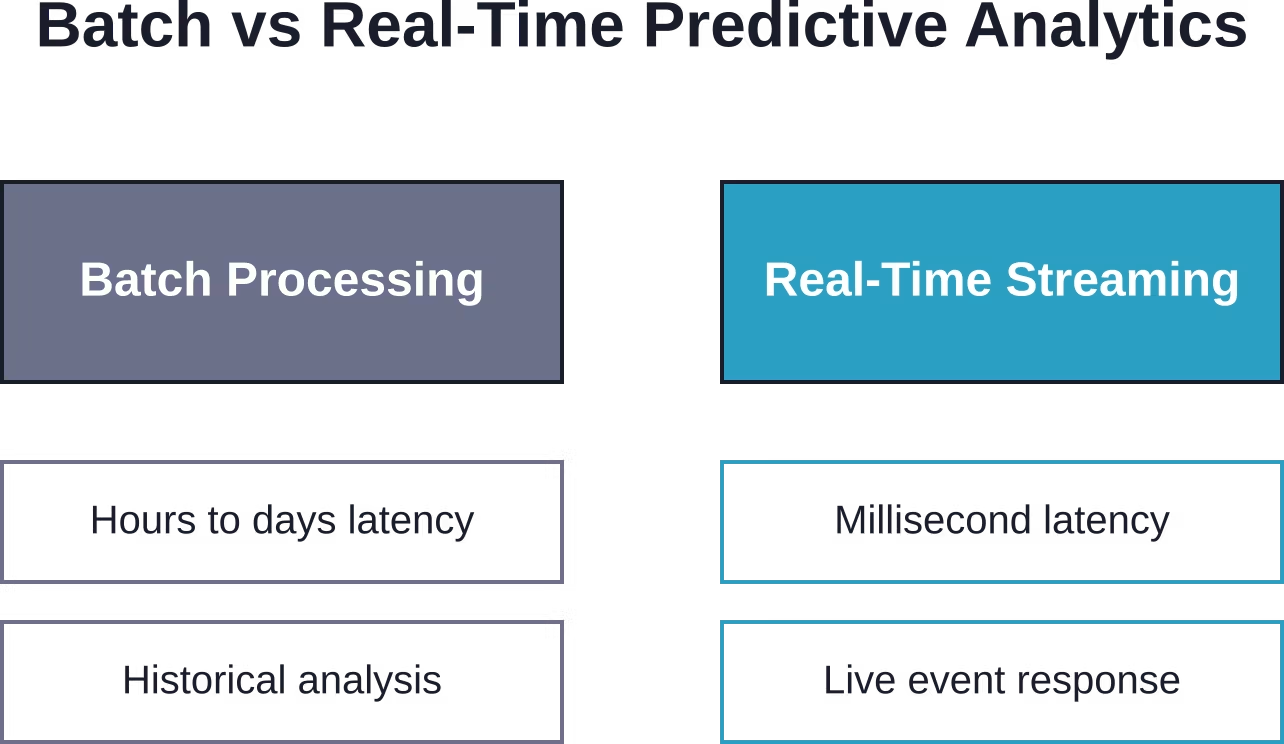

Batch processing is losing ground to real-time analytics. Event-driven architectures (EDAs) and data-in-motion platforms are becoming the foundation for predictive systems that need to respond immediately.

Here’s why this matters: traditional predictive models often work with data that’s hours or days old. For use cases like fraud detection, equipment monitoring, or dynamic pricing, that lag is unacceptable.

Real-time data technologies enable:

- Immediate anomaly detection in transaction streams

- Live equipment failure prediction based on sensor data

- Dynamic customer experience personalization during active sessions

- Instant supply chain risk alerts as events unfold

The shift requires different technical infrastructure—stream processing frameworks, event brokers, and models optimized for low-latency scoring. But the payoff is substantial: predictions that are actually actionable in the moment they’re needed.
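
As a rough illustration of low-latency scoring, the sketch below flags unusual values one event at a time using a running mean and variance. The class name, the z-score threshold of 4, and the warm-up length are assumptions chosen for the example; a production system would sit behind an event broker and use a properly validated model.

```python
import math


class StreamingAnomalyDetector:
    """Flags values far from the running mean, one event at a time."""

    def __init__(self, threshold=4.0, warmup=10):
        self.threshold = threshold  # z-score cutoff (assumed value)
        self.warmup = warmup        # events to observe before flagging
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0               # running sum of squared deviations

    def observe(self, value):
        """Score one event, then fold it into the baseline if it looks normal."""
        is_anomaly = False
        if self.n >= self.warmup:
            std = math.sqrt(self.m2 / (self.n - 1))
            if std > 0 and abs(value - self.mean) / std > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Welford's online update: constant time and memory per event.
            self.n += 1
            delta = value - self.mean
            self.mean += delta / self.n
            self.m2 += delta * (value - self.mean)
        return is_anomaly


detector = StreamingAnomalyDetector()
amounts = [100, 102] * 10 + [500, 101]  # hypothetical transaction amounts
flags = [detector.observe(amount) for amount in amounts]
print([a for a, flagged in zip(amounts, flags) if flagged])
```

Because each event is scored against statistics that are already in memory, the decision does not wait for a batch job. Leaving flagged values out of the baseline is a design choice made here so that one outlier does not mask the next.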

AI-Powered Personalization Reaches New Sophistication

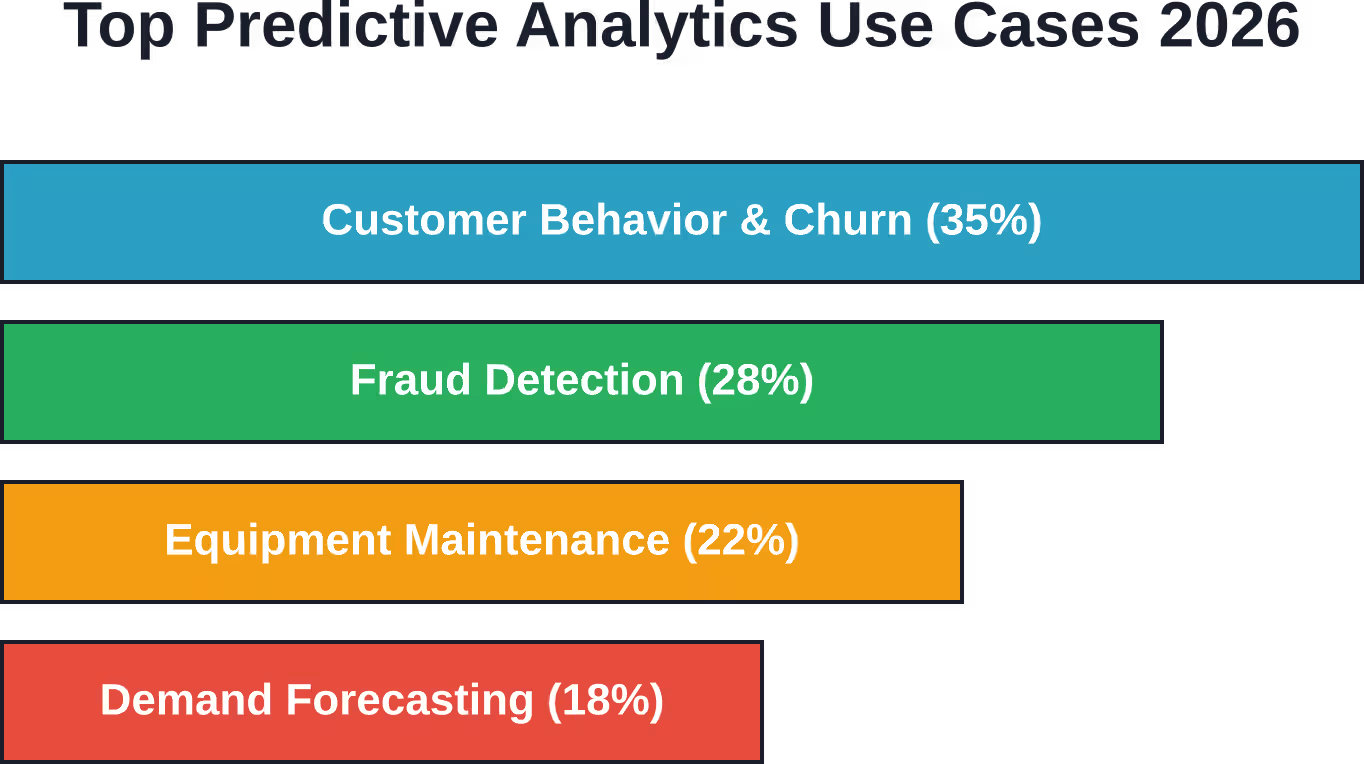

Customer analytics has always been a core predictive analytics use case. But the level of personalization possible in 2026 is remarkable.

Organizations using advanced predictive customer analytics and personalization techniques report revenue improvements, a finding that recurs across industry analyses and reflects the maturity of recommendation engines, dynamic content systems, and behavioral prediction models.

Major e-commerce companies report significant improvements in customer retention through predictive analytics. While the scale of a retailer like Amazon is unique, the underlying techniques (collaborative filtering, sequential pattern mining, propensity modeling) are increasingly accessible to smaller organizations.

Modern customer analytics platforms combine:

- Behavioral sequence analysis to predict next actions

- Sentiment analysis from text and voice interactions

- Lifetime value modeling for retention prioritization

- Churn prediction with intervention recommendations

The shift from segment-based to individual-level prediction is nearly complete. Machine learning models can now generate personalized forecasts for millions of customers simultaneously, something that wasn’t computationally feasible even five years ago.
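
Collaborative filtering, one of the techniques named above, can be sketched in a few lines. This is an item-based variant using cosine similarity on binary purchase data; the customers, products, and the `recommend` helper are hypothetical, and real recommendation engines add far more signal than this.

```python
import math
from collections import defaultdict


def recommend(user, purchases, top_n=3):
    """Rank items the user has not bought by similarity to items they own."""
    # Invert the data: item -> set of users who bought it.
    buyers = defaultdict(set)
    for person, items in purchases.items():
        for item in items:
            buyers[item].add(person)

    def cosine(item_a, item_b):
        # Cosine similarity between two binary purchase vectors.
        shared = len(buyers[item_a] & buyers[item_b])
        return shared / math.sqrt(len(buyers[item_a]) * len(buyers[item_b]))

    owned = purchases[user]
    scores = {
        candidate: sum(cosine(candidate, item) for item in owned)
        for candidate in buyers
        if candidate not in owned
    }
    # Highest score first; ties broken alphabetically for stable output.
    ranked = sorted(scores, key=lambda item: (-scores[item], item))
    return ranked[:top_n]


purchases = {
    "ana": {"tent", "stove"},
    "ben": {"tent", "stove", "lantern"},
    "cy": {"tent", "lantern"},
    "di": {"novel"},
}
print(recommend("ana", purchases, top_n=1))
```

For `ana`, the lantern ranks first because it is bought by the same customers who buy tents and stoves. The score is computed per individual rather than per segment, which is the shift this section describes.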

Smaller Manufacturers Access AI Through Practical Tools

Predictive analytics is no longer exclusively an enterprise capability. According to NIST research on smaller manufacturers, artificial intelligence has become a crucial aspect of Industry 4.0 adoption even for mid-size and small production facilities.

What changed? Primarily tool accessibility and cost. Cloud-based analytics platforms, pre-trained models, and low-code/no-code interfaces have dramatically lowered the barrier to entry.

Smaller manufacturers are leveraging predictive analytics for:

- Equipment maintenance scheduling based on sensor data

- Quality prediction to reduce defects and waste

- Demand forecasting for inventory optimization

- Energy consumption prediction for cost management
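
To illustrate the first item, maintenance scheduling from sensor data, here is a minimal sketch that fits a straight line to vibration readings and extrapolates to an alarm threshold. The readings, the threshold, and the assumption that wear grows linearly are all hypothetical; commercial tools use richer models.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


def hours_until_threshold(hours, readings, threshold):
    """Extrapolate the fitted trend to the alarm threshold.

    Returns None when the trend is flat or falling.
    """
    intercept, slope = fit_line(hours, readings)
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    # Time remaining is measured from the most recent reading.
    return max(0.0, crossing - hours[-1])


hours = [0, 1, 2, 3]
vibration = [2.0, 2.5, 3.0, 3.5]  # hypothetical sensor readings
remaining = hours_until_threshold(hours, vibration, threshold=5.0)
print(f"schedule maintenance within {remaining:.1f} hours")
```

With these numbers the trend rises 0.5 units per hour and reaches the threshold three hours after the last reading.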

The MEP (Manufacturing Extension Partnership) centers have played a role in disseminating these capabilities, helping manufacturers identify use cases and implement solutions without massive capital investment.

Real talk: predictive analytics for manufacturing doesn’t require a data science team anymore. Many platforms offer industry-specific templates and automated model training that production managers can configure themselves.

Healthcare Diagnosis and Resource Forecasting Expand

Healthcare remains one of the highest-impact domains for predictive analytics. The applications range from patient-level diagnosis support to system-wide resource planning.

Key healthcare use cases in 2026 include:

- Equipment failure prediction for critical medical devices

- Patient readmission risk modeling

- Disease progression forecasting for chronic conditions

- Hospital capacity and staffing demand prediction

- Treatment outcome probability for personalized care

The accuracy of these models continues to improve as electronic health record (EHR) systems mature and data quality increases. Machine learning techniques like Long Short-Term Memory (LSTM) networks excel at temporal health data—tracking how patient conditions evolve over time.

But there’s a challenge: healthcare predictive models must be explainable. Black-box neural networks that can’t justify their predictions face regulatory and ethical barriers. This has driven significant innovation in interpretable machine learning and causal inference techniques.
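
One way to see what “explainable” means in practice: in a logistic regression, each feature’s contribution to the prediction can be read off directly. The coefficients and feature names below are invented for illustration and carry no clinical meaning.

```python
import math

# Hypothetical coefficients for illustration only; a real model would be
# fitted to and validated on clinical data.
INTERCEPT = -3.0
COEFFICIENTS = {
    "prior_admissions": 0.6,
    "age_over_65": 0.8,
    "days_in_hospital": 0.1,
}


def explain_risk(patient):
    """Return the predicted probability and each feature's log-odds contribution."""
    contributions = {
        name: coef * patient[name] for name, coef in COEFFICIENTS.items()
    }
    log_odds = INTERCEPT + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions


probability, contributions = explain_risk(
    {"prior_admissions": 2, "age_over_65": 1, "days_in_hospital": 5}
)
for name, value in sorted(contributions.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {value:+.2f}")
print(f"readmission probability: {probability:.2f}")
```

A clinician can see which factor moved the score and by how much, which is the kind of justification a black-box network cannot offer without additional tooling.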

Financial Forecasting Gets More Granular

Financial services have used predictive analytics for decades—credit scoring is fundamentally a predictive model. What’s new is the granularity and breadth of applications.

Modern financial forecasting leverages predictive analytics for:

- Real-time fraud detection across transaction streams

- Market movement prediction for algorithmic trading

- Credit risk assessment with alternative data sources

- Cash flow forecasting for treasury management

- Customer lifetime value modeling for acquisition optimization

One notable trend: incorporating unstructured data like news sentiment, social media signals, and satellite imagery into financial models. These alternative data sources provide predictive signals that traditional structured data misses.

WHOOP, for example, improved its AI- and machine-learning-based financial forecasting while enhancing member experiences by centralizing data access on a modern data platform. That combination of better forecasting and better customer experience reflects how predictive analytics has become integrated across business functions rather than siloed in finance.

Key Predictive Analytics Models and Techniques

The technical foundation of predictive analytics continues to evolve. While classical statistical methods remain relevant, machine learning dominates modern implementations.

Regression Models

Linear and polynomial regression predict continuous values, while logistic regression predicts categorical outcomes such as yes/no events. These models are interpretable, fast to train, and effective when relationships are relatively linear. Financial forecasting and simple risk scoring often rely on regression.

Decision Trees and Ensemble Methods

Random forests, gradient boosting machines (like XGBoost and LightGBM), and other ensemble techniques combine multiple models for higher accuracy than a single tree typically achieves. They handle non-linear relationships and feature interactions well, and implementations such as XGBoost and LightGBM handle missing values natively. Customer churn prediction and credit scoring frequently use ensemble methods.

Neural Networks and Deep Learning

Deep learning excels at complex pattern recognition, especially in unstructured data like images and text and in long sequences such as time series. LSTM networks, convolutional neural networks (CNNs), and transformer architectures power advanced forecasting applications. Healthcare diagnosis and natural language processing rely heavily on deep learning.

Time Series Forecasting

ARIMA, Prophet, and specialized neural architectures handle temporal data with seasonality and trends. Demand forecasting, sales prediction, and resource planning depend on robust time series techniques.
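
Before reaching for ARIMA or Prophet, it helps to know what a seasonal pattern is worth. The sketch below is neither of those methods; it is a seasonal naive forecast (repeat the value from one season ago) compared against a flat average, using made-up daily sales with a weekend peak.

```python
def seasonal_naive(history, season_length, horizon):
    """Forecast each future step as the value one season earlier."""
    forecast = []
    for step in range(horizon):
        # Index into the last full season, wrapping if horizon > season_length.
        forecast.append(history[-season_length + (step % season_length)])
    return forecast


def mean_absolute_error(actual, predicted):
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


week = [10, 10, 10, 10, 10, 30, 30]       # hypothetical units sold, Mon..Sun
history = week * 3
actual_next_week = [11, 9, 10, 10, 12, 31, 29]

seasonal = seasonal_naive(history, season_length=7, horizon=7)
flat = [sum(history) / len(history)] * 7  # overall-mean baseline

seasonal_mae = mean_absolute_error(actual_next_week, seasonal)
flat_mae = mean_absolute_error(actual_next_week, flat)
print(f"seasonal naive MAE: {seasonal_mae:.2f}")
print(f"flat average MAE:   {flat_mae:.2f}")
```

Any more sophisticated time series model should at least beat the seasonal naive error on held-out data; if it cannot, the added complexity is not paying for itself.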

Clustering and Classification

K-means and hierarchical clustering segment data into groups, while support vector machines and Bayesian classifiers assign category predictions. Customer segmentation and fraud detection leverage these approaches.

The choice of technique depends on data characteristics, interpretability requirements, computational resources, and the specific prediction task. Many production systems use ensemble approaches—combining multiple model types to leverage their complementary strengths.

Food Production Optimization Through LSTM Networks

Food wastage remains one of the persistent challenges facing the food industry. Inaccurate demand forecasts lead to overproduction, spoilage, and resource misallocation.

Recent research on predictive analytics for food production demonstrated a machine learning approach using Long Short-Term Memory networks. The system forecasts food quantities and transactions using historical sales data combined with features like day, month, and item-specific attributes.

The results? 89.68% accuracy in demand prediction. That level of precision enables significant waste reduction, optimized inventory management, and better resource allocation—delivering both economic and environmental benefits toward sustainable food production.

LSTM networks are particularly suited to this application because they capture long-term dependencies in sequential data. Food demand exhibits complex patterns—weekly cycles, monthly trends, seasonal variations, holiday effects—that simpler models struggle to represent.
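
The study’s exact preprocessing isn’t described here, so the following is only an assumed sketch of how historical sales plus day and month features might be arranged into sequences for an LSTM or similar model. The function name, the seven-day window, and the sales figures are hypothetical.

```python
from datetime import date, timedelta


def make_windows(daily_sales, start_date, window=7):
    """Turn a daily sales series into (input sequence, target) pairs.

    Each time step carries [units_sold, day_of_week, month], so a sequence
    model sees both the recent history and the calendar context.
    """
    steps = []
    for offset, units in enumerate(daily_sales):
        day = start_date + timedelta(days=offset)
        steps.append([units, day.weekday(), day.month])

    samples = []
    for end in range(window, len(steps)):
        inputs = steps[end - window:end]  # the previous `window` days
        target = daily_sales[end]         # the day to predict
        samples.append((inputs, target))
    return samples


sales = [12, 15, 14, 20, 31, 35, 18, 13, 16, 15]  # hypothetical units sold
samples = make_windows(sales, start_date=date(2026, 1, 1))
print(len(samples), "training samples")
print("first target:", samples[0][1])
```

Each sample pairs a week of history with the following day’s demand. Item-specific attributes, which the study also mentions, would be appended to each time step in the same way.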

The approach demonstrates how specialized neural architectures can solve industry-specific forecasting challenges that were previously intractable.

Tools and Platforms Enabling Predictive Analytics

The predictive analytics ecosystem includes a range of tools spanning different use cases and technical skill levels.

Cloud platforms like Snowflake provide integrated data warehousing and analytics capabilities. They centralize data access, reduce infrastructure complexity, and enable teams to build predictive models without managing servers.

Specialized machine learning platforms offer automated model training, hyperparameter tuning, and deployment pipelines. These reduce the time from data to production model from months to days.

Open-source frameworks—scikit-learn, TensorFlow, PyTorch, XGBoost—give data scientists fine-grained control and customization. They’re the foundation for custom model development when off-the-shelf solutions don’t fit.

Business intelligence tools increasingly incorporate predictive capabilities directly. Non-technical users can generate forecasts through guided interfaces without writing code.

The trend is toward democratization: making predictive analytics accessible to more roles, not just dedicated data scientists. But that doesn’t mean dumbing down—it means better abstractions and interfaces on top of sophisticated techniques.

Challenges and Considerations

Predictive analytics isn’t a silver bullet. Several challenges persist:

Data Quality and Availability

Models are only as good as their training data. Incomplete, biased, or outdated data produces unreliable predictions. Data governance and pipeline quality matter as much as algorithm choice.

Model Interpretability

Complex models like deep neural networks often operate as black boxes. For regulated industries or high-stakes decisions, explainability is non-negotiable. This drives ongoing research in interpretable machine learning.

Overfitting and Generalization

Models can memorize training data rather than learning generalizable patterns. Rigorous validation, regularization, and testing on held-out data are essential to ensure models perform well on new inputs.
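
A small sketch shows why held-out testing matters. The nearest-neighbor model below memorizes its training points, so its training error is zero by construction; the data and the every-third-point split are made up for the example.

```python
def split_holdout(points, every=3):
    """Hold out every `every`-th point for testing; train on the rest."""
    train = [p for i, p in enumerate(points) if i % every != every - 1]
    test = [p for i, p in enumerate(points) if i % every == every - 1]
    return train, test


def nearest_neighbor_predict(train, x):
    """A model that memorizes: return the target of the closest training x."""
    _, y = min(train, key=lambda p: abs(p[0] - x))
    return y


def mean_absolute_error(train, points):
    """Average absolute error of the memorizing model on `points`."""
    errors = [abs(y - nearest_neighbor_predict(train, x)) for x, y in points]
    return sum(errors) / len(errors)


noise = [1, -1, 2, -2, 1, -1, 2, -2, 1, -1]          # fixed, made-up noise
points = [(x, 2 * x + noise[x]) for x in range(10)]  # y is roughly 2x
train, test = split_holdout(points)

train_error = mean_absolute_error(train, train)
test_error = mean_absolute_error(train, test)
print(f"training error: {train_error:.2f}")
print(f"held-out error: {test_error:.2f}")
```

A perfect score on training data alongside a clearly worse score on held-out data is the signature of memorization. Only the held-out number says anything about new inputs.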

Ethical and Bias Concerns

Predictive models can perpetuate or amplify biases present in historical data. Fair lending, hiring, and healthcare applications require careful bias auditing and mitigation strategies.
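
A basic bias audit can start with something as simple as comparing approval rates across groups. The sketch below computes a disparate impact ratio on invented decisions; the group labels and counts are hypothetical, and a real audit would look at several fairness metrics, not just this one.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest."""
    rates = selection_rates(decisions)
    return min(rates.values()) / max(rates.values())


# Hypothetical model decisions: 60 of 100 approved in group x, 30 of 100 in y.
decisions = (
    [("x", True)] * 60 + [("x", False)] * 40
    + [("y", True)] * 30 + [("y", False)] * 70
)
print(selection_rates(decisions))
print(f"disparate impact ratio: {disparate_impact_ratio(decisions):.2f}")
```

A ratio of 1.0 means equal approval rates. In US employment contexts, the four-fifths rule of thumb treats a ratio below 0.8 as a signal to investigate, though a low ratio alone does not prove the model is unfair.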

Integration and Operationalization

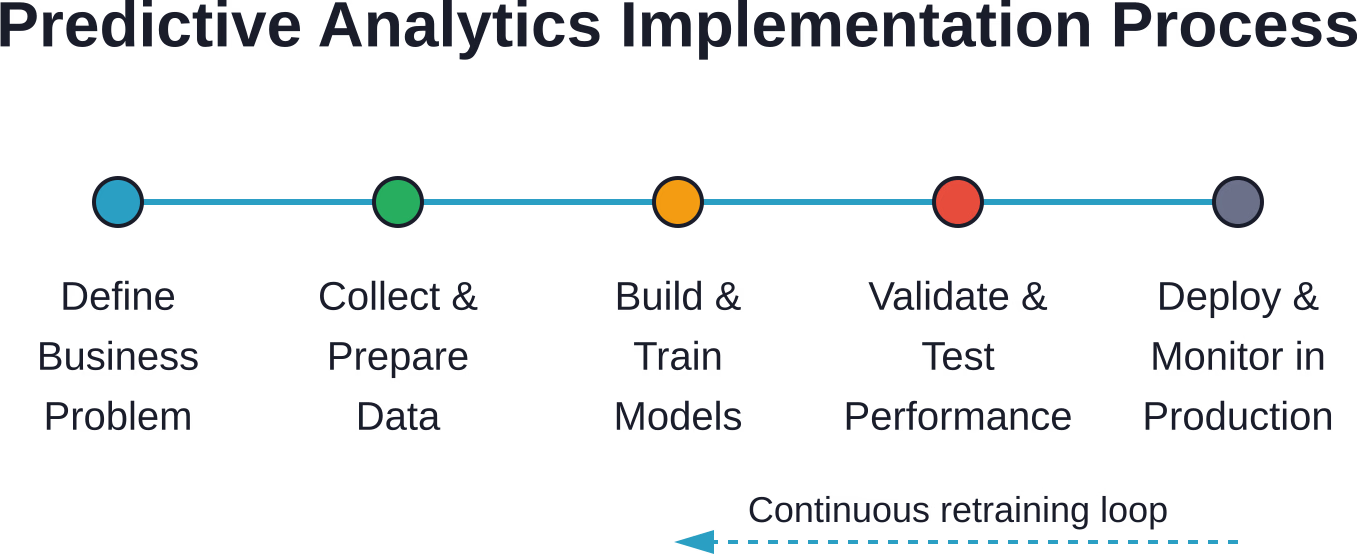

Building a model is one thing; deploying it into production systems where it delivers business value is another. MLOps practices—versioning, monitoring, retraining—are critical for sustainable predictive analytics.
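
The monitoring piece can be illustrated with a population stability index (PSI), a common drift check that compares the distribution of scores at training time with what the model sees in production. The bin edges and score samples below are hypothetical.

```python
import math


def bin_shares(values, edges):
    """Share of values in each bin; `edges` are the interior cut points."""
    counts = [0] * (len(edges) + 1)
    for value in values:
        index = sum(value >= edge for edge in edges)  # edges at or below value
        counts[index] += 1
    # A small floor avoids log(0) for empty bins.
    return [max(count / len(values), 1e-6) for count in counts]


def population_stability_index(expected, actual, edges):
    """Sum over bins of (actual - expected) * ln(actual / expected)."""
    e = bin_shares(expected, edges)
    a = bin_shares(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


edges = [0.33, 0.66]
training_scores = [0.1] * 50 + [0.5] * 30 + [0.9] * 20  # hypothetical
live_scores = [0.1] * 20 + [0.5] * 30 + [0.9] * 50      # hypothetical

psi = population_stability_index(training_scores, live_scores, edges)
print(f"PSI: {psi:.2f}")
```

A PSI of zero means the two distributions match. A commonly cited rule of thumb treats values above roughly 0.25 as a shift large enough to warrant investigation or retraining, but the right cutoff depends on the application.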

Organizations succeeding with predictive analytics treat these challenges seriously rather than viewing them as afterthoughts.

Getting Started With Predictive Analytics

Organizations new to predictive analytics should follow a pragmatic approach:

- Start with a clear business problem: Don’t build models in search of applications. Identify a specific decision that better forecasting would improve—customer churn, inventory levels, equipment failures.

- Assess data readiness: Do you have sufficient historical data? Is it clean and accessible? Data preparation typically consumes 60-80% of project time. Underestimating this is a common failure mode.

- Begin with simple baselines: Linear regression or decision trees often provide surprising value before jumping to complex deep learning. Simple models are faster to deploy, easier to interpret, and serve as performance benchmarks.

- Invest in infrastructure: Predictive analytics requires data pipelines, model training environments, and deployment platforms. Cloud-based solutions reduce upfront capital costs.

- Build cross-functional teams: Effective predictive analytics combines domain expertise, data engineering, and statistical modeling. No single person has all these skills.

- Measure business impact, not just model accuracy: A model with 95% accuracy that doesn’t change decisions is worthless. Track how predictions influence actions and outcomes.

The barrier to entry has never been lower. But success still requires disciplined execution and realistic expectations.

Frequently Asked Questions

What is predictive analytics and how does it work?

Predictive analytics uses historical data, statistical algorithms, and machine learning to forecast future outcomes. It works by identifying patterns in past data—such as customer purchases, equipment sensor readings, or market trends—and applying those patterns to make probability-based predictions about what will happen next. The process involves data collection, model training on historical examples, validation to ensure accuracy, and deployment to generate forecasts on new data.

What industries benefit most from predictive analytics?

Healthcare, finance, retail, manufacturing, and telecommunications see substantial value from predictive analytics. Healthcare uses it for patient diagnosis and resource planning. Financial services apply it to fraud detection and credit risk. Retailers leverage customer behavior prediction and demand forecasting. Manufacturers predict equipment failures and optimize production. Any industry with substantial historical data and decisions influenced by future uncertainty can benefit.

How is predictive analytics different from machine learning?

Predictive analytics is an application focused on forecasting specific business outcomes using data. Machine learning is a set of techniques that enable systems to learn patterns from data without explicit programming. Predictive analytics often uses machine learning methods as tools, but not all machine learning is predictive (some is descriptive or prescriptive). The key distinction: predictive analytics describes what you’re trying to accomplish (forecast the future), while machine learning describes how you accomplish it (algorithmic pattern learning).

What size organization needs predictive analytics capabilities?

Organizations of all sizes can benefit, though applications differ. Large enterprises use predictive analytics for complex multi-variable forecasting across global operations. Mid-size companies apply it to focused use cases like customer churn or inventory optimization. Even small manufacturers now access predictive maintenance through affordable cloud platforms and industry-specific tools. The question isn’t organization size but whether better forecasting would improve specific decisions enough to justify the investment.

What are the biggest challenges in implementing predictive analytics?

Data quality and availability top the list—models require substantial clean historical data. Integration with existing business processes is challenging; predictions must flow into decision-making workflows to deliver value. Skills gaps persist; many organizations lack data science expertise. Model interpretability matters for regulated industries that need to explain predictions. Finally, managing expectations is critical—predictive analytics improves decision-making probabilistically, it doesn’t eliminate uncertainty.

How accurate are predictive analytics models?

Accuracy varies dramatically based on the domain, data quality, and prediction horizon. Short-term forecasts with stable patterns (like next-week demand for established products) can exceed 90% accuracy. Long-term predictions in volatile domains (like stock market movements) remain much less reliable. Food production forecasting has achieved nearly 90% accuracy using LSTM networks. Customer churn models typically range from 70-85% accuracy. The key is comparing model performance against baseline methods (like naive forecasting or human judgment) rather than expecting perfection.

What’s the difference between predictive and prescriptive analytics?

Predictive analytics forecasts what will happen—it estimates probabilities and likely outcomes. Prescriptive analytics goes further to recommend what actions should be taken in response to those forecasts. Prediction might say “this customer has a 75% churn probability.” Prescriptive would add “offer a 15% discount to maximize retention value.” Prescriptive analytics typically combines predictive models with optimization algorithms and business rules to generate actionable recommendations, not just forecasts.
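
The churn example can be sketched as a tiny decision rule: take the predicted probability, estimate the value of each possible action, and pick the best. The customer value, discount cost, and the assumed 40% relative reduction in churn are hypothetical numbers; in practice they would come from experiments or a separate uplift model.

```python
def expected_value(churn_probability, customer_value, action):
    """Expected retained value after an action, net of its cost."""
    churn_after = churn_probability * (1.0 - action["churn_reduction"])
    return (1.0 - churn_after) * (customer_value - action["cost"])


def best_action(churn_probability, customer_value, actions):
    """Pick the action with the highest expected value."""
    return max(
        actions,
        key=lambda name: expected_value(
            churn_probability, customer_value, actions[name]
        ),
    )


customer_value = 400.0  # hypothetical annual value of the customer
actions = {
    "do_nothing": {"cost": 0.0, "churn_reduction": 0.0},
    # Assumed: a 15% discount costs 60 and cuts churn risk by 40% (relative).
    "discount_15": {"cost": 0.15 * customer_value, "churn_reduction": 0.4},
}

print(best_action(0.75, customer_value, actions))  # high-risk customer
print(best_action(0.05, customer_value, actions))  # low-risk customer
```

Under these assumptions the discount is worth offering to the customer with a 75% churn probability but not to the one at 5%, where it would only give away margin. The predictive model supplies the probability; the prescriptive layer turns it into a choice.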

The Future of Predictive Analytics

Looking ahead, several trends will shape predictive analytics beyond 2026.

Automated machine learning (AutoML) will continue reducing the technical barrier, enabling business analysts to build sophisticated models without coding. But human expertise will remain critical for problem framing, data interpretation, and bias detection.

Causal inference techniques will increasingly complement correlation-based prediction, helping organizations understand not just what will happen but why—and what interventions will actually change outcomes.

Edge computing will push predictive models closer to data sources. Manufacturing sensors, IoT devices, and mobile applications will run local prediction models rather than sending all data to centralized cloud systems.

Ethical AI and responsible forecasting will gain prominence. As predictive models influence more high-stakes decisions, frameworks for fairness, transparency, and accountability will become standard practice rather than afterthoughts.

The integration of unstructured data—text, images, video, audio—into forecasting models will deepen. Multimodal models that combine traditional structured data with natural language and visual inputs will unlock new prediction capabilities.

And real-time prediction will become table stakes. Batch forecasting won’t disappear, but applications demanding immediate responses will drive architectural innovation in streaming analytics and low-latency model serving.

The organizations that win with predictive analytics will be those that view it not as a technology project but as a decision-making capability—one that requires ongoing investment in data infrastructure, talent, and process integration.

Ready to implement predictive analytics in your organization? Start by identifying one high-value decision that better forecasting would improve. Assess your data readiness. Build a cross-functional team. And choose tools that match your technical capabilities and business needs. The technology has never been more accessible—the differentiator is disciplined execution.