Quick Summary: Predictive analytics in law uses historical data, statistical models, and machine learning to forecast legal outcomes, assess risks, and optimize decision-making across criminal justice, litigation, and law firm operations. From bail risk assessment tools to case outcome prediction platforms, these technologies are transforming how legal professionals strategize, allocate resources, and serve clients—though they raise important questions about bias, transparency, and constitutional rights.

The legal profession has entered an era where data drives strategy as much as precedent does. Predictive analytics—the practice of mining historical information to forecast future outcomes—now shapes decisions from the courtroom to the law firm boardroom.

Here’s the thing though: this isn’t just about efficiency. When algorithms help determine who gets bail and who stays in jail, or which cases settle and which go to trial, the stakes involve fundamental rights and justice itself.

According to research on big data, over 90% of the data in the world has been created in just the last two years. Law enforcement and legal practitioners are increasingly tapping into this exponential data growth, applying big data analytics across criminal justice, litigation strategy, and firm management.

What Is Predictive Analytics in Law?

Predictive analytics refers to techniques that analyze historical and current data to make informed predictions about future events. In legal contexts, this means using statistical models and machine learning algorithms to forecast case outcomes, identify patterns in judicial behavior, assess risk, and optimize resource allocation.

The applications span two broad domains: criminal justice and civil legal practice.

In criminal justice, predictive analytics powers risk assessment instruments (RAIs) that estimate the likelihood a defendant will reoffend or fail to appear in court. These tools inform bail, sentencing, and parole decisions. Separately, law enforcement agencies have adopted predictive policing systems that analyze crime data to identify likely hotspots and direct patrols accordingly.

In civil practice and law firms, predictive analytics helps answer strategic questions: What’s the probability this case will settle? How much is this claim worth? Which judge is most likely to rule favorably? What’s the optimal litigation budget?

Real talk: the technology isn’t magic. It’s pattern recognition at scale. Algorithms identify correlations in massive datasets—court records, police reports, case filings, judicial decisions—and apply statistical methods to extrapolate trends.

Use Predictive Analytics in Law with AI Superior

AI Superior works with structured and unstructured legal data to build models that support case analysis and decision-making.

The focus is on creating models that fit into existing workflows and handle document-heavy environments.

Looking to Apply Predictive Analytics in Legal Work?

AI Superior can help with:

- assessing legal data sources

- building predictive models

- integrating models into workflows

- refining outputs based on usage

👉 Contact AI Superior to discuss your project, data, and implementation approach

Predictive Analytics in Criminal Justice

Criminal justice has become one of the most visible—and controversial—domains for predictive analytics. Algorithmic tools are in widespread use across bail decisions, sentencing, predictive policing, and resource allocation.

Risk Assessment Instruments (RAIs)

Risk assessment instruments evaluate defendants to predict their likelihood of committing future crimes or failing to appear for trial. Judges use these scores when deciding whether to grant bail or detain someone pretrial.

According to Brookings Institution research, judges in one undisclosed jurisdiction had release rates ranging from roughly 50% to almost 90% across similar cases—a massive discrepancy that suggests inconsistent human judgment. Algorithmic tools aim to standardize these decisions.

The same research found that a simple checklist-style RAI considering only defendant age and prior failures to appear could reduce the detained population by 30% without increasing pretrial misconduct. Another study suggests that if bail decisions were made algorithmically, the population held in jail before trial could potentially be reduced by 40%.
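To make "checklist-style" concrete, here is a minimal sketch of a points-based tool that uses only the two inputs mentioned above. The age bands, point values, and review threshold are hypothetical, chosen purely for illustration; they are not taken from the Brookings research or from any deployed instrument.

```python
from dataclasses import dataclass


@dataclass
class Defendant:
    age: int
    prior_failures_to_appear: int


def checklist_score(d: Defendant) -> int:
    """Return a points total; higher means higher estimated risk.

    All point values and cutoffs below are hypothetical.
    """
    points = 0
    # Younger defendants receive more points (hypothetical age bands).
    if d.age < 23:
        points += 2
    elif d.age < 30:
        points += 1
    # One point per prior failure to appear, capped at 3.
    points += min(d.prior_failures_to_appear, 3)
    return points


def recommendation(d: Defendant, threshold: int = 3) -> str:
    """Map the score to a suggestion that a judge may accept or override."""
    if checklist_score(d) >= threshold:
        return "flag for review"
    return "recommend release"


for person in (Defendant(age=45, prior_failures_to_appear=0),
               Defendant(age=21, prior_failures_to_appear=2)):
    print(person, checklist_score(person), recommendation(person))
```

The appeal of a design like this is that every point can be read off the checklist, which makes the score easy to explain and to contest.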

But wait. The benefits come with serious concerns.

Proprietary algorithms like COMPAS (Correctional Offender Management Profiling for Alternative Sanctions) have faced criticism for racial bias. Research examining these tools found troubling patterns in how they assess defendants of different racial backgrounds, raising constitutional questions about equal protection and due process.

Predictive Policing

Law enforcement agencies deploy predictive analytics to forecast where crimes are likely to occur and allocate patrol resources accordingly. These initiatives have received federal support, signaling government backing for the approach.

Adoption has grown significantly, with various police departments implementing or planning to implement such systems.

These systems analyze historical crime data—locations, times, types of offenses—to identify patterns and generate maps of likely crime hotspots. Officers then concentrate patrols in these areas.

Critics argue this creates feedback loops. Increased police presence in algorithmically identified neighborhoods leads to more arrests in those areas, which feeds data back into the system, reinforcing the original prediction. Brookings researchers describe this as digital redlining: certain neighborhoods are flagged as perpetual hotspots, contributing to cycles of surveillance and harassment.
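The feedback loop is easier to see in a toy simulation. The sketch below assumes two areas with identical underlying offense rates, a rule that sends most patrols to whichever area has more recorded incidents, and recorded incidents that scale with patrol presence. Every number in it (the 80% patrol share, the detection factor, the starting counts) is a made-up assumption, not data from any real system.

```python
def simulate_feedback(rounds: int = 5, hotspot_share: float = 0.8):
    """Simulate hotspot patrol allocation across two areas.

    Both areas have the SAME true offense rate. Each round, the area with
    more recorded incidents is labeled the hotspot and receives
    `hotspot_share` of patrols. Recorded incidents are assumed to grow
    with patrol presence, not with true offending.
    """
    true_offenses_per_round = 100       # identical in both areas
    detection_factor = 0.5              # hypothetical share recorded at full patrol
    recorded = {"A": 11.0, "B": 10.0}   # tiny initial difference
    history = []
    for _ in range(rounds):
        hotspot = max(recorded, key=recorded.get)
        for area in recorded:
            share = hotspot_share if area == hotspot else 1 - hotspot_share
            recorded[area] += true_offenses_per_round * detection_factor * share
        history.append(dict(recorded))
    return history


for step, snapshot in enumerate(simulate_feedback(), start=1):
    print(step, snapshot)
```

Under these assumptions, area A keeps being labeled the hotspot, so a starting gap of one recorded incident grows every round even though the two areas are identical in true offending.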

The constitutional implications span Fourth Amendment protections against unreasonable search and seizure, Fourteenth Amendment equal protection guarantees, and administrative law questions about transparency and accountability in algorithmic decision-making.

Transparency and Bias Concerns

A core tension in criminal justice analytics involves transparency. Many widely used algorithms are proprietary, their internal workings hidden behind intellectual property protections. Defendants and their attorneys often can’t examine the models that influence sentencing or bail decisions.

NASA research on criminal sentencing algorithms argues that open-source development should be the standard in highly consequential contexts affecting people’s lives. Transparency enables collaboration, contributes to greater predictive accuracy, and offers cost-effectiveness compared to expensive proprietary systems.

When researchers replicated a major sentencing algorithm using real criminal profiles and tested three penalized regressions, they demonstrated an increase in predictive power using open-source, computationally inexpensive options.
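For readers unfamiliar with the term, a penalized regression adds a cost for large coefficients to the usual fitting objective. The source does not say which three penalties the researchers tested, so the sketch below simply illustrates one common choice, an L2 (ridge) penalty on logistic regression, fitted by plain gradient descent. The feature names and the six data rows are synthetic and hypothetical.

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_ridge_logistic(X, y, l2=0.1, lr=0.1, epochs=2000):
    """Fit logistic regression with an L2 penalty by batch gradient descent.

    Minimizes mean log-loss + (l2 / 2) * sum(w_j ** 2).
    The intercept b is not penalized.
    """
    n, p = len(X), len(X[0])
    w, b = [0.0] * p, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * p, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) - yi
            grad_b += err / n
            for j in range(p):
                grad_w[j] += err * xi[j] / n
        b -= lr * grad_b
        # The penalty contributes l2 * w_j to each coefficient's gradient.
        w = [wj - lr * (gj + l2 * wj) for wj, gj in zip(w, grad_w)]
    return w, b


def predict_proba(w, b, x):
    return sigmoid(b + sum(wj * xj for wj, xj in zip(w, x)))


# Synthetic rows: [prior_offenses_scaled, age_scaled] -> reoffended (1) or not (0)
X = [[0.0, 0.9], [0.1, 0.8], [0.2, 0.7], [0.7, 0.3], [0.8, 0.2], [1.0, 0.1]]
y = [0, 0, 0, 1, 1, 1]

w_weak, b_weak = fit_ridge_logistic(X, y, l2=0.01)
w_strong, b_strong = fit_ridge_logistic(X, y, l2=1.0)
print("weak penalty:", w_weak, "strong penalty:", w_strong)
```

A stronger penalty pulls the coefficients toward zero, trading some fit on the training data for a simpler, more stable model. In practice one would reach for an established statistics library rather than hand-written gradient descent.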

The bias question runs deeper than technical accuracy. According to RAND researchers, what may look like a 1% to 2% difference initially can compound into larger problems over time, with effects that disproportionately impact certain groups.

Predictive Analytics for Law Firms and Civil Practice

While criminal justice applications grab headlines, civil practice has quietly embraced predictive analytics to transform how firms operate, strategize, and compete.

Case Outcome Forecasting

Platforms like Lex Machina mine litigation data to identify patterns in case outcomes, judge behavior, and opposing counsel performance. These tools analyze thousands of cases to estimate the probability of success in similar matters.

According to the American Bar Association’s 2024 Legal Technology Survey Report, 46% of firms with 100+ attorneys used legal analytics tools—a significant jump reflecting the technology’s maturation and accessibility.

Industry discussions suggest that advanced models let attorneys advise clients with greater confidence. For instance, if a model shows an 85% probability of winning a case based on historical data from the same judge, jurisdiction, and claim type, attorneys can counsel clients more effectively about litigation versus settlement.

In employment or commercial litigation, historical outcome trends might reveal that certain claim types have higher settlement likelihoods or face judicial dismissal, allowing lawyers to advise clients on the risks and benefits of prolonged litigation versus early negotiation.
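One way a win probability feeds into litigation-versus-settlement advice is a simple expected-value comparison. The sketch below is a deliberately stripped-down version that ignores risk tolerance, appeal risk, and the time value of money; the function names and all dollar figures are hypothetical.

```python
def expected_litigation_value(p_win: float, award: float, cost: float) -> float:
    """Expected net recovery from going to trial: p_win * award - cost."""
    if not 0.0 <= p_win <= 1.0:
        raise ValueError("p_win must be between 0 and 1")
    return p_win * award - cost


def compare(p_win: float, award: float, cost: float, settlement_offer: float):
    """Return (expected trial value, 'litigate' or 'settle')."""
    ev = expected_litigation_value(p_win, award, cost)
    choice = "litigate" if ev > settlement_offer else "settle"
    return ev, choice


# Hypothetical matter: $500,000 expected award, $120,000 in litigation costs,
# and a $280,000 settlement offer on the table.
print(compare(0.85, 500_000, 120_000, 280_000))
print(compare(0.55, 500_000, 120_000, 280_000))
```

With these made-up figures, an 85% win probability favors litigating while a 55% probability favors taking the offer, which shows how sensitive the advice is to the model's estimate.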

Strategic Decision-Making

Predictive analytics helps answer five common legal questions that drive strategic choices:

- Should we litigate or settle? Algorithms assess settlement probability by analyzing comparable cases, judge tendencies, and claim characteristics. This data-driven approach replaces pure intuition with quantified risk assessment.

- Will our motion prevail? By examining how specific judges have ruled on similar motions historically, predictive tools estimate success likelihood, helping teams prioritize arguments and allocate preparation time.

- What’s the valuation of this claim? Models trained on damages awards in comparable cases can estimate expected compensation ranges, informing settlement negotiations and client expectations.

- How much should this matter cost? Analyzing historical billing data for similar cases helps firms provide more accurate fee estimates and budget more effectively, improving client relationships and firm profitability.

- Can we pursue this more efficiently? Analytics identify which tasks consume disproportionate resources relative to outcomes, enabling process optimization and staffing decisions.
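As a rough illustration of the "Will our motion prevail?" question, the sketch below estimates per-judge grant rates from past rulings. Because a judge with only one or two rulings on record can look misleadingly extreme, it shrinks each judge's rate toward the overall rate. The `prior_strength` parameter and the sample rulings are hypothetical, and commercial platforms may use quite different methods.

```python
from collections import defaultdict


def grant_rates(rulings, prior_strength: float = 5.0):
    """Estimate per-judge grant rates, shrunk toward the overall rate.

    rulings: iterable of (judge, granted) pairs, where granted is a bool.
    prior_strength: hypothetical number of "pseudo-rulings" at the overall
        rate added to each judge so that small samples are not over-read.
    """
    rulings = list(rulings)
    if not rulings:
        return {}
    overall = sum(1 for _, granted in rulings if granted) / len(rulings)
    counts = defaultdict(lambda: [0, 0])  # judge -> [grants, total]
    for judge, granted in rulings:
        counts[judge][0] += int(granted)
        counts[judge][1] += 1
    return {
        judge: (grants + prior_strength * overall) / (total + prior_strength)
        for judge, (grants, total) in counts.items()
    }


# Made-up history: Judge A granted 8 of 10 motions, Judge B granted 1 of 1.
sample = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)]
print(grant_rates(sample))
```

Judge B's raw rate would be 100% on a single ruling; the shrunken estimate stays below that and close to the overall rate, which is the more cautious reading.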

Client Intake and Matter Selection

Predictive analytics assists in client screening by forecasting the potential value or likely success of prospective matters. Firms can evaluate whether a case aligns with their expertise, resource capacity, and strategic goals before committing.

This triage function helps firms choose cases more strategically, declining low-probability matters that would consume resources without commensurate returns, while identifying high-value opportunities that match their strengths.

Operational Efficiency

Beyond case strategy, analytics optimizes internal operations. Firms analyze historical data on matter duration, staffing patterns, and task completion times to improve project management and resource allocation.

When patterns reveal that certain case types consistently exceed budget or timeline estimates, firms can adjust their processes, staffing models, or fee structures accordingly.
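Spotting those patterns can start with something as simple as grouping closed matters by case type and comparing actual spend to budget. The sketch below assumes each matter is a dictionary with hypothetical `case_type`, `budget`, and `actual` fields; the 10% tolerance and the sample figures are likewise invented.

```python
from collections import defaultdict
from statistics import mean


def overrun_by_case_type(matters):
    """Return {case_type: average of (actual / budget - 1)} for closed matters."""
    ratios = defaultdict(list)
    for m in matters:
        ratios[m["case_type"]].append(m["actual"] / m["budget"] - 1.0)
    return {case_type: mean(values) for case_type, values in ratios.items()}


def flag_overruns(matters, tolerance: float = 0.10):
    """Case types whose average overrun exceeds `tolerance` (hypothetical 10%)."""
    averages = overrun_by_case_type(matters)
    return sorted(ct for ct, overrun in averages.items() if overrun > tolerance)


closed_matters = [
    {"case_type": "employment", "budget": 100_000, "actual": 130_000},
    {"case_type": "employment", "budget": 200_000, "actual": 250_000},
    {"case_type": "contract", "budget": 100_000, "actual": 95_000},
    {"case_type": "contract", "budget": 100_000, "actual": 105_000},
]
print(overrun_by_case_type(closed_matters))
print(flag_overruns(closed_matters))
```

In this invented data set, employment matters run well over budget on average while contract matters roughly break even, so only the former would be flagged for a closer look at staffing or fee structure.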

Public Perception and Trust Issues

Technology adoption doesn’t happen in a vacuum. Public trust shapes whether algorithmic tools gain acceptance or face resistance.

Brookings research on facial recognition and law enforcement found that over 50% of people trust police use of facial recognition technology, and nearly 75% believe facial recognition accurately identifies people.

But demographics matter. The same research revealed a stark divide: approximately 60% of white respondents trust police facial recognition, compared to only 40% of Black respondents—a 20-percentage-point gap reflecting differing experiences with law enforcement and concerns about discriminatory application.

The accuracy assumption also deserves scrutiny. Studies have documented disparities in facial recognition accuracy across demographic groups, with higher error rates for some groups including Black women. Law enforcement facial recognition databases contain faces of millions of American adults, and these accuracy disparities have real consequences.

Meanwhile, only 36% of adults believe facial recognition is used responsibly by private companies, suggesting skepticism about corporate stewardship of sensitive biometric data.

Ethical and Constitutional Challenges

Predictive analytics in law raises fundamental questions about fairness, bias, and rights.

Algorithmic Bias

Algorithms learn from historical data. When that data reflects systemic bias—racially disparate arrest rates, discriminatory lending patterns, unequal access to legal representation—models trained on it perpetuate and potentially amplify those biases.

This isn’t hypothetical. Multiple studies examining criminal risk assessment tools have documented racial disparities in how they classify defendants, with Black individuals more likely to be incorrectly flagged as high-risk compared to white individuals with similar profiles.

The problem compounds over time. As RAND analysis notes, differences that seem small initially—1% to 2%—can cascade into larger disparities as algorithmic recommendations influence decisions that shape future data collection.

Due Process and Transparency

Constitutional due process guarantees the right to understand and challenge evidence used against you. When proprietary algorithms influence bail, sentencing, or parole decisions, but defendants can’t examine the model’s logic or underlying data, due process becomes questionable.

Courts have begun grappling with these issues. Defense attorneys argue that black-box algorithms violate confrontation rights when their recommendations can’t be cross-examined or challenged.

Some jurisdictions have responded by requiring transparency. The movement toward open-source algorithm development in criminal justice contexts reflects these concerns—transparency enables scrutiny, which protects rights and improves accuracy.

Privacy and Surveillance

According to NIH research, the legal implications of law enforcement’s use of big data span criminal, constitutional, administrative, and privacy law. The unprecedented rate at which digital information is now produced enables surveillance capabilities that previous generations never imagined.

Predictive policing systems that integrate data from license plate readers, social media, commercial databases, and public records create comprehensive profiles of individuals and communities. Fourth Amendment protections against unreasonable search and seizure weren’t written with algorithmic surveillance in mind.

Privacy law struggles to keep pace with technological capability.

Best Practices for Implementation

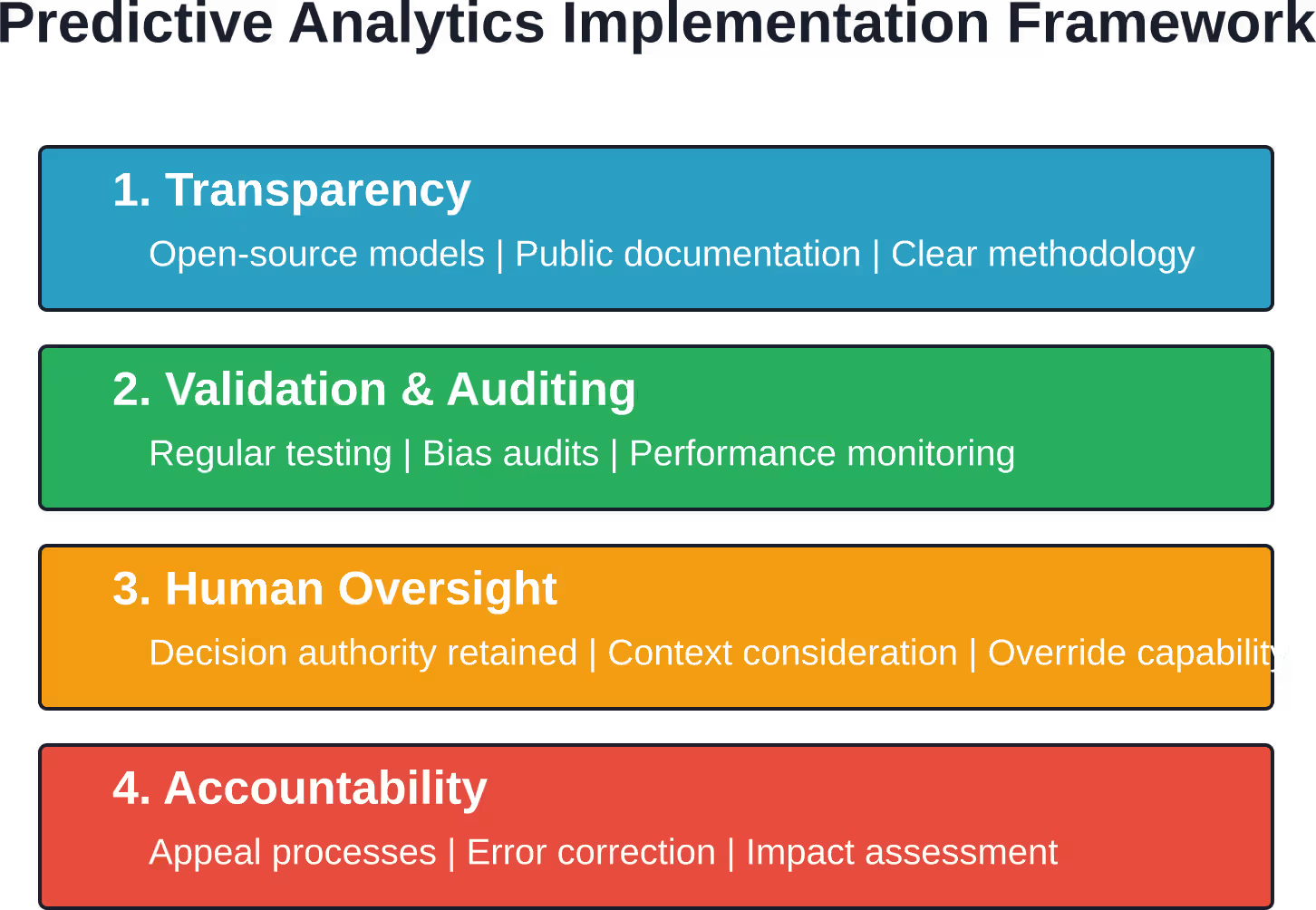

Organizations adopting predictive analytics in legal contexts should consider several principles to maximize benefits while minimizing harms.

Prioritize Transparency

Open-source models enable scrutiny by defendants, researchers, and the public. When proprietary interests conflict with transparency, highly consequential applications affecting fundamental rights should favor openness.

Validate and Audit Regularly

Algorithms require ongoing validation against new data and regular audits for bias. Static models become outdated as conditions change and can embed historical biases that no longer reflect current policy goals.

Human Oversight Remains Essential

Predictive analytics should inform human decision-making, not replace it. Judges, lawyers, and policymakers must retain authority to override algorithmic recommendations when context demands it.

Consider Impact Across Groups

Evaluate model performance disaggregated by race, gender, socioeconomic status, and other relevant characteristics. Overall accuracy can mask disparate impact on subgroups.
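A small example shows how overall accuracy can hide a subgroup problem. The sketch below computes the false positive rate (people flagged high-risk who did not go on to reoffend) separately for each group. The group labels "X" and "Y" and all records are synthetic, constructed so that both groups have the same accuracy but very different false positive rates.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """records: iterable of (group, predicted_high_risk, reoffended) tuples.

    FPR = share flagged high-risk among people who did NOT reoffend.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted_high, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if predicted_high:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in negatives.items()}


def accuracy(records):
    """Share of records where the prediction matched the outcome."""
    records = list(records)
    return sum(1 for _, pred, actual in records if pred == actual) / len(records)


# Synthetic data: each group has 5 people who did not reoffend and 5 who did.
records = (
    [("X", True, False)] * 2 + [("X", False, False)] * 3 + [("X", True, True)] * 5
    + [("Y", False, False)] * 5 + [("Y", True, True)] * 3 + [("Y", False, True)] * 2
)
print("overall accuracy:", accuracy(records))
print("false positive rate by group:", false_positive_rate_by_group(records))
```

Here overall accuracy is 0.8 and each group is also 80% accurate on its own, yet group X has a false positive rate of 0.4 while group Y's is 0.0. Only the disaggregated view reveals that the model's errors fall on different people in each group.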

Establish Accountability Mechanisms

Clear processes for challenging algorithmic decisions, appealing classifications, and correcting errors protect individual rights and system legitimacy.

Future Trends in Legal Predictive Analytics

The trajectory points toward greater integration and sophistication, with several developments likely to shape the next phase.

Multimodal Data Integration

Next-generation systems will integrate structured data (case filings, statutes, decisions) with unstructured sources (deposition transcripts, correspondence, discovery documents) to generate richer insights. Natural language processing advances enable extraction of meaning from text at scale.

Real-Time Analytics

Cloud computing and distributed processing enable analysis of streaming data, providing updated predictions as new information emerges during litigation or investigation rather than relying solely on historical snapshots.

Explainable AI

Pressure for transparency is driving development of explainable AI: models that can articulate the reasoning behind predictions in ways humans understand. The goal is to address due process concerns while giving up as little predictive power as possible.

Brookings research highlights the tension between explainability and accuracy. Sometimes the most accurate models are the least interpretable. Democratic governance requires balancing these competing values, particularly when algorithmic recommendations affect fundamental rights.
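At the interpretable end of that tradeoff sits the linear model, where an explanation can be as simple as listing how much each input pushed the score up or down. The sketch below does this for a logistic model; the weights, intercept, and feature names are hypothetical and not drawn from any real risk tool.

```python
import math


def explain_linear_score(weights, intercept, features):
    """Return (probability, ranked contributions) for a logistic model.

    Each contribution is weight * value, the feature's additive share of
    the log-odds, ranked by absolute size.
    """
    contributions = {name: weights[name] * value for name, value in features.items()}
    log_odds = intercept + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked


# Hypothetical model and one hypothetical individual.
weights = {"prior_failures_to_appear": 0.6, "age_decades": -0.3}
probability, ranked = explain_linear_score(
    weights, intercept=-0.5,
    features={"prior_failures_to_appear": 2, "age_decades": 2.5},
)
print(f"estimated probability: {probability:.3f}")
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f} log-odds")
```

More complex models do not decompose this cleanly, which is one reason explanations for them tend to be approximations rather than exact accounts.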

Regulatory Frameworks

Expect increasing regulation governing algorithmic decision-making in legal contexts. Legislatures and courts will establish standards for validation, transparency, bias testing, and accountability as the technology matures and impacts become clearer.

Frequently Asked Questions

What is predictive analytics in law?

Predictive analytics in law involves using statistical models, machine learning, and historical data analysis to forecast legal outcomes, assess risks, and optimize decision-making. Applications include case outcome prediction, risk assessment in criminal justice, litigation cost estimation, and strategic planning for law firms.

How accurate are legal predictive analytics tools?

Accuracy varies significantly by application and data quality. Models tend to perform best in specific contexts with rich historical data, such as matters sharing the same judge, jurisdiction, and claim type. A figure like an 85% win probability is an estimate drawn from comparable past cases, not a measured accuracy rate, and its reliability for any individual prediction depends on how closely the new case matches historical patterns. Criminal risk assessment tools have faced criticism for racial bias despite claims of overall accuracy.

Do predictive policing systems reduce crime?

The evidence is mixed. While some departments report crime reductions after implementing predictive policing, isolating the technology’s specific contribution from other factors proves difficult. Critics argue these systems create feedback loops that concentrate enforcement in certain neighborhoods without necessarily reducing overall crime, potentially violating constitutional rights through excessive surveillance.

Are algorithms biased in criminal justice applications?

Research has documented bias in several widely used criminal justice algorithms. When models learn from historical data reflecting systemic disparities in arrest rates, sentencing, and enforcement, they can perpetuate those biases. Studies show that Black defendants are disproportionately classified as high-risk compared to white defendants with similar profiles. Transparency, regular auditing, and careful validation across demographic groups help mitigate but don’t eliminate these concerns.

What percentage of law firms use predictive analytics?

According to the American Bar Association’s 2024 Legal Technology Survey Report, 46% of firms with 100 or more attorneys used legal analytics tools. Adoption continues growing as platforms become more accessible and attorneys recognize competitive advantages in data-driven decision-making for case strategy, client intake, and resource allocation.

Can defendants challenge algorithmic risk assessments?

Legal frameworks for challenging algorithmic assessments remain underdeveloped. When proprietary algorithms produce risk scores without transparent methodology, defendants face obstacles to meaningful challenge. Defense attorneys increasingly argue that black-box assessments violate due process and confrontation rights. Some jurisdictions now require greater transparency or limit reliance on proprietary tools in sentencing and bail decisions.

How does predictive analytics help with litigation strategy?

Predictive analytics informs litigation strategy by analyzing comparable cases to estimate success probability, likely damages ranges, settlement likelihood, and judge tendencies. Attorneys use these insights to advise clients on whether to settle or litigate, how to allocate preparation resources, which arguments to emphasize, and what settlement ranges to pursue. The technology helps replace intuition with data-driven risk assessment.

Conclusion: Balancing Innovation and Justice

Predictive analytics represents one of the most significant technological shifts in legal practice and criminal justice administration in decades. The potential benefits are substantial—more consistent bail decisions, better resource allocation, improved litigation strategy, and operational efficiency.

But the technology is not neutral. Algorithms reflect the data they’re trained on and the choices their designers make. When that data embeds historical bias or when models lack transparency, predictive analytics can perpetuate injustice under the guise of objectivity.

The path forward requires thoughtful implementation guided by principles of transparency, accountability, regular validation, and meaningful human oversight. Open-source development, particularly for high-stakes criminal justice applications, enables scrutiny that protects rights while improving accuracy.

Law enforcement agencies, courts, and law firms adopting these tools must commit to evaluating their impact across different populations on an ongoing basis, establishing clear processes for challenging algorithmic recommendations, and maintaining human decision-making authority where fundamental rights and justice are at stake.

The legal profession stands at a crossroads. Data-driven tools offer genuine advantages in an increasingly complex environment. Whether predictive analytics ultimately enhances justice or undermines it depends on the choices legal professionals, policymakers, and technologists make today about how to design, deploy, and govern these powerful systems.

Ready to explore how predictive analytics could transform your legal practice? Start by evaluating specific use cases relevant to your work, examining available platforms for transparency and validation standards, and considering how data-driven insights could complement—not replace—professional judgment honed through years of experience.