Quick Summary: AI will not replace doctors but instead will transform healthcare by serving as a powerful assistant. While AI excels at data analysis, diagnostic imaging, and administrative tasks, the human elements of medicine—empathy, clinical judgment, ethical decision-making, and patient relationships—remain irreplaceable. The future of healthcare involves AI-augmented physicians working more efficiently and accurately than ever before.

The question keeps healthcare professionals up at night: will artificial intelligence eventually replace doctors?

It’s not an irrational fear. AI has disrupted countless industries, automating jobs that once seemed immune to technological replacement. Machine learning algorithms now outperform humans in specific diagnostic tasks. Radiology AI can detect certain cancers with remarkable accuracy. Natural language processing handles medical documentation.

But here’s the thing—the reality of AI in healthcare is far more nuanced than the replacement narrative suggests.

According to research made available through the National Center for Biotechnology Information (NCBI), artificial intelligence in healthcare is “complementing, not replacing, doctors and healthcare providers.” The evidence points toward a future where AI augments physician capabilities rather than eliminating the profession entirely.

Let’s examine what the data actually shows.

How AI Is Already Transforming Medical Practice

AI isn’t some distant future technology in healthcare. It’s already here, working alongside physicians in meaningful ways.

Diagnostic imaging represents the most advanced application of medical AI today. According to the Future Healthcare Journal, a review of AI and machine learning-based medical devices approved in the USA and Europe from 2015 to 2020 found that more than half—58% of devices in the USA and 53% in Europe—focused on automated classification of medical images.

These systems assist radiologists by flagging potential abnormalities, prioritizing urgent cases, and reducing the time needed for image analysis.
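To make the triage step concrete, here is a minimal Python sketch of worklist prioritization. It assumes a hypothetical model that gives each imaging study a suspicion score between 0 and 1. The `Study` class, the scores, and the 0.8 flag threshold are invented for illustration and do not describe any particular product. The model only reorders the queue and flags studies. Nothing is removed, and every study still waits for a radiologist's read.

```python
from dataclasses import dataclass

# Hypothetical threshold above which a study is flagged as potentially urgent.
FLAG_THRESHOLD = 0.8


@dataclass
class Study:
    study_id: str
    ai_score: float                 # hypothetical model output in [0, 1]
    radiologist_read: bool = False  # only a human reviewer sets this to True


def prioritize(worklist: list[Study]) -> list[Study]:
    """Return every study, highest AI score first.

    Low-scoring studies are kept in the queue so a radiologist still reviews them.
    """
    return sorted(worklist, key=lambda s: s.ai_score, reverse=True)


def flagged(worklist: list[Study]) -> list[str]:
    """IDs of studies the model marks as potentially urgent."""
    return [s.study_id for s in worklist if s.ai_score >= FLAG_THRESHOLD]


if __name__ == "__main__":
    queue = [Study("A", 0.12), Study("B", 0.91), Study("C", 0.55), Study("D", 0.83)]
    print([s.study_id for s in prioritize(queue)])  # ['B', 'D', 'C', 'A']
    print(flagged(queue))                           # ['B', 'D']
```

The design choice worth noting is that the AI changes the order of work, not who does it: the `radiologist_read` field stays `False` until a physician signs off.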

Beyond radiology, AI is making inroads in several clinical areas:

- Natural language processing for medical documentation and electronic health records

- Predictive analytics for disease surveillance and outbreak response

- Drug development and pharmaceutical research acceleration

- Clinical decision support systems that analyze patient data

- Automated triage systems in emergency departments

One notable example is the World Health Organization’s Skin NTDs App, which uses AI-based algorithms to help healthcare workers diagnose skin-related neglected tropical diseases. Preliminary results from a study in Kenya showed an average sensitivity of approximately 80% for both algorithms evaluated, compared with diagnoses made by board-certified dermatologists.
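Sensitivity is the share of cases that the reference standard (here, the dermatologists) diagnosed as positive which the algorithm also called positive: true positives divided by (true positives + false negatives). The short Python sketch below computes it for made-up labels; the data are purely illustrative and are not taken from the Kenya study.

```python
def sensitivity(predicted: list[bool], reference: list[bool]) -> float:
    """Fraction of reference-positive cases that the algorithm also called positive.

    `reference` plays the role of the board-certified dermatologists' diagnoses.
    """
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    true_pos = sum(p and r for p, r in zip(predicted, reference))
    false_neg = sum((not p) and r for p, r in zip(predicted, reference))
    positives = true_pos + false_neg
    if positives == 0:
        raise ValueError("no reference-positive cases; sensitivity is undefined")
    return true_pos / positives


# Illustrative only: 10 cases, 5 of which the reference diagnosed as positive.
reference = [True, True, True, True, True, False, False, False, False, False]
predicted = [True, True, True, True, False, True, False, False, False, False]
print(sensitivity(predicted, reference))  # 0.8
```

Sensitivity ignores false positives (the sixth case above), which is why it is usually reported alongside specificity.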

Real talk: these applications represent genuine breakthroughs. But they’re tools that enhance medical practice, not replacements for the physicians using them.

Why AI Cannot Completely Replace Physicians

The philosophical and practical limitations of AI in medicine run deeper than most people realize.

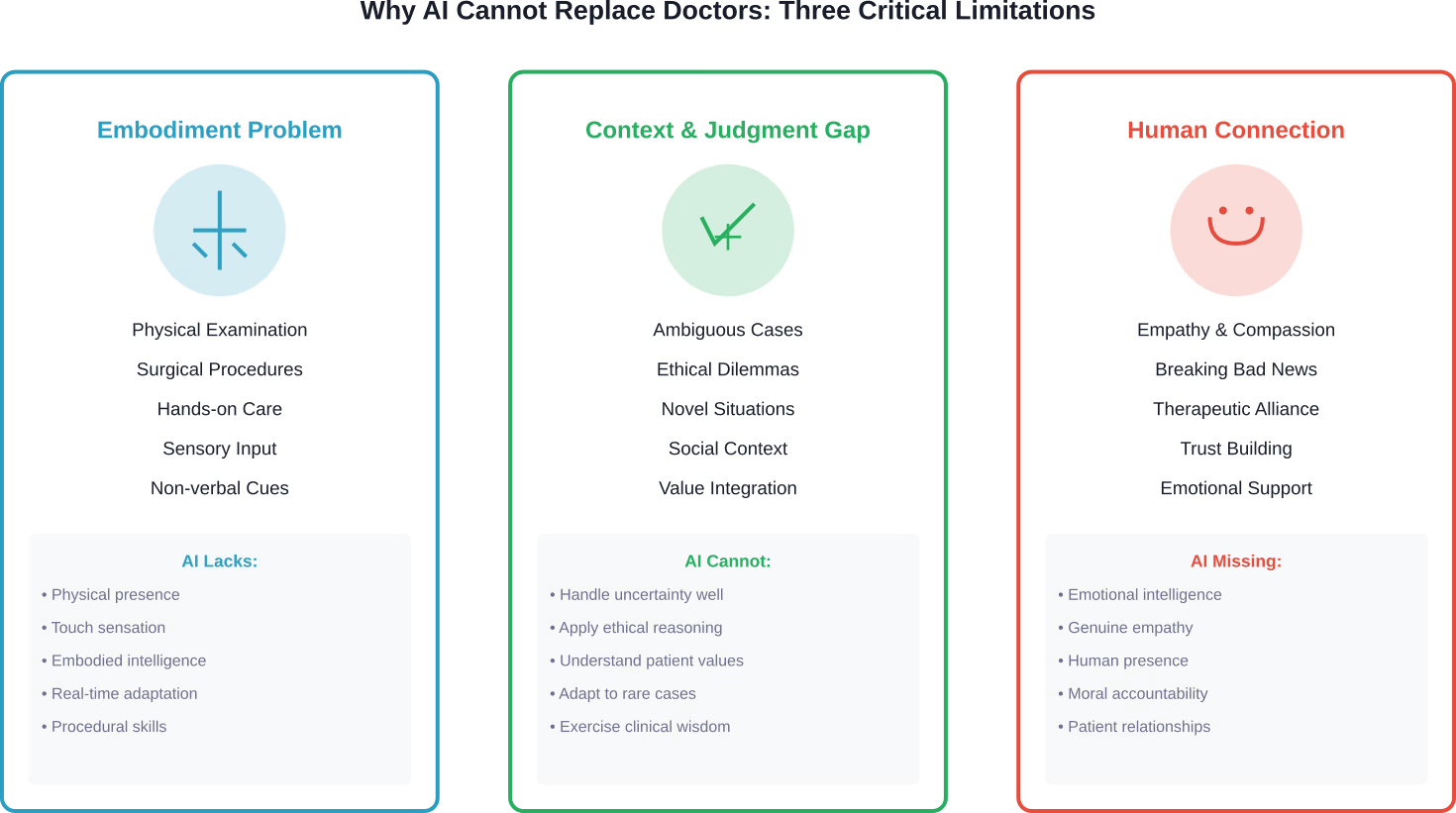

Research published in the Journal of Medical Ethics and History of Medicine examined why the idea of AI completely replacing physicians is fundamentally a “pseudo-problem.” The analysis identified three critical limitations that prevent full AI replacement of doctors.

The Embodiment Problem

Medicine isn’t purely intellectual work. Physicians perform physical examinations, surgical procedures, and hands-on interventions that require embodied intelligence.

AI systems lack physical presence and the sensory input that comes from examining patients. The subtle cues a doctor picks up from touching a patient’s abdomen, observing their gait, or noticing their non-verbal distress signals remain beyond AI’s current capabilities.

As one urologist from Keck Medicine of USC explained: “I know the patient’s history, the previous surgeries they’ve had and obstacles I might encounter. AI can’t really do that yet.”

The Context and Judgment Gap

Medical decision-making involves far more than pattern recognition in data sets.

Physicians integrate multiple sources of information—patient history, social context, personal values, family dynamics, and individual risk tolerance—into treatment recommendations. They navigate ambiguous situations where evidence is incomplete or conflicting.

AI models trained on historical data struggle with novel situations, rare presentations, and cases that fall outside their training parameters. They can’t adapt to unexpected complications the way experienced clinicians can.

The Human Relationship Factor

Healthcare fundamentally involves human connection.

Patients need empathy, reassurance, and someone who treats them as a whole person rather than a collection of symptoms. Breaking bad news, discussing end-of-life care, and supporting patients through difficult treatments require emotional intelligence that AI cannot replicate.

The World Health Organization emphasizes that while AI holds promise for improving healthcare delivery, “ethics and human rights must be put at the heart of its design, deployment, and use.” This includes protecting human autonomy and ensuring patients maintain control over their healthcare decisions.

Where AI Actually Excels in Healthcare

Don’t get it twisted—AI brings enormous value to medicine. Just not as a replacement for doctors.

The technology shines in specific, well-defined applications where its strengths align perfectly with clinical needs.

Diagnostic Imaging and Pattern Recognition

AI excels at analyzing visual data and identifying patterns across massive datasets.

In radiology, pathology, and dermatology, machine learning algorithms can process thousands of images and detect subtle abnormalities that might escape human notice. Because they do not tire, their performance stays consistent across repetitive tasks.

But they work best when augmenting radiologists, not replacing them. The AI flags potential issues; the physician provides context, confirms findings, and integrates results into the broader clinical picture.

Administrative Burden Reduction

Here’s where AI might have its biggest immediate impact on physician wellbeing.

According to research on physician productivity, a meta-analysis of empirical studies found that AI significantly reduces physician workload and diagnostic time by automating repetitive interpretation and documentation processes.

A Sermo survey of physicians found that 46% of respondents saw AI’s value as an administrative tool, such as a scribe, to reduce paperwork. Only 17% believed it could make meaningful clinical suggestions.

That tells you something important about where doctors see the real practical value.

Data Analysis and Prediction

AI can crunch numbers and identify trends far beyond human cognitive capacity.

For population health management, disease surveillance, and clinical research, AI processes vast amounts of data to predict outbreaks, identify at-risk patients, and accelerate drug development. These capabilities support better decision-making at the system level.

Head-to-head benchmarks also show the limits of current systems. One AI model achieved a 79.5% accuracy rate on mock examinations for the Fellowship of the Royal College of Radiologists (FRCR) in the U.K., compared with an average of 84.8% for 26 recently qualified human radiologists. The AI passed 2 of the 10 mock exams, while the average radiologist passed 4. Overall, the AI ranked 26th of 27 participants (second to last), but it was the top performer in one of the 10 mock examinations and correctly identified 50% of the cases that most radiologists got wrong.

The Real Future: AI-Augmented Medicine

The question shouldn’t be whether AI will replace doctors. It should be how AI will transform medical practice.

Research consistently points toward a collaborative model where artificial intelligence handles tasks it does well—data processing, pattern recognition, administrative work—while physicians focus on the uniquely human aspects of medicine.

As one general practitioner explained in community discussions: “AI can assist clinicians in taking a more comprehensive approach to disease management, better coordinate care plans, and help patients to better manage and comply with their long-term treatment programs.”

That’s the realistic vision. Not replacement, but enhancement.

Addressing Physician Burnout

The potential for AI to combat healthcare workforce burnout deserves attention.

According to research published in BMJ Health Care Informatics, burnout has become so widespread among physicians, nurses, and staff that it markedly impairs the healthcare workforce. It compromises the quality of patient care, precipitating medical errors and reducing physician productivity.

AI tools that reduce documentation burden, streamline workflows, and automate routine tasks could give physicians back the time and mental energy they need to connect with patients.

But there’s a flip side. Poorly implemented AI that creates additional work, generates alerts physicians must wade through, or introduces new technical problems could worsen burnout rather than alleviate it.

Expanding Access to Care

AI holds particular promise for underserved populations.

The World Health Organization notes that AI could enable resource-poor countries and rural communities—where patients have restricted access to healthcare workers—to bridge gaps in health services. Diagnostic AI, telemedicine platforms, and clinical decision support could extend specialist knowledge to areas with physician shortages.

However, WHO also cautions against overestimating AI benefits at the expense of investments needed for universal health coverage. Technology isn’t a substitute for adequate healthcare infrastructure and workforce.

Critical Challenges and Risks

The path toward AI-augmented medicine isn’t without obstacles.

WHO’s guidance on AI ethics identifies several risks that must be addressed through careful regulation and governance.

Bias and Equity Concerns

AI systems reflect the data they’re trained on.

Systems trained primarily on data from individuals in high-income countries may not perform well for patients in low- and middle-income settings. Algorithms can encode existing healthcare disparities, perpetuating or even amplifying inequities.

According to WHO, AI technologies must be “carefully designed to reflect the diversity of socio-economic and healthcare settings” to ensure they serve all populations equitably.
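One straightforward way to surface this kind of gap is to report a model's performance separately for each setting rather than as a single pooled figure. The Python sketch below assumes hypothetical records of (setting, prediction_was_correct) pairs; the setting labels and all numbers are invented for illustration.

```python
from collections import defaultdict


def accuracy_by_group(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Accuracy per group, where each record is (group, prediction_was_correct)."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        correct[group] += ok  # True counts as 1, False as 0
    return {g: correct[g] / totals[g] for g in totals}


# Invented data: the pooled accuracy hides a gap between settings.
records = (
    [("high-income", True)] * 90 + [("high-income", False)] * 10
    + [("low/middle-income", True)] * 14 + [("low/middle-income", False)] * 6
)
pooled = sum(ok for _, ok in records) / len(records)
print(round(pooled, 3))            # 0.867
print(accuracy_by_group(records))  # {'high-income': 0.9, 'low/middle-income': 0.7}
```

Because the high-income group dominates the invented dataset, the pooled figure looks respectable even though the model is noticeably worse in the smaller group.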

Privacy and Data Protection

Medical AI requires vast amounts of patient data.

The unethical collection and use of health data poses serious risks. WHO emphasizes that protecting human autonomy requires safeguarding privacy and confidentiality, and that patients must give valid informed consent through appropriate legal frameworks.

The subordination of patient rights to the commercial interests of technology companies is a real danger that requires regulatory oversight.

Safety and Accountability

When AI makes errors, who’s responsible?

While AI technologies perform specific tasks, WHO stresses that “it is the responsibility of stakeholders to ensure that they are used under appropriate conditions and by appropriately trained people.” Effective mechanisms must exist for questioning and providing redress when AI-based decisions adversely affect individuals.

The FDA has developed regulatory frameworks for AI-enabled medical devices to ensure safety and effectiveness, but the field continues evolving faster than regulation can keep pace.

What Physicians Think About AI

Community discussions among doctors reveal nuanced perspectives on AI’s role in medicine.

Most physicians don’t fear wholesale replacement. They recognize AI’s potential to improve specific aspects of their work while remaining skeptical of overblown claims.

Common themes emerge from physician forums and surveys:

- Enthusiasm for administrative AI that reduces documentation burden

- Cautious optimism about diagnostic assistance tools

- Concern about liability when AI makes recommendations

- Skepticism that AI can handle complex, ambiguous cases

- Recognition that patient relationships remain fundamentally human

Many doctors see AI as potentially freeing them to practice medicine the way they want—spending more time with patients, less time on paperwork.

But they also worry about poorly designed systems that create more problems than they solve.

Preparing for an AI-Augmented Future

The medical profession needs to adapt strategically to AI integration.

This doesn’t mean preparing for obsolescence. It means developing skills and systems that maximize the benefits of AI while preserving what makes physicians irreplaceable.

Training and Digital Literacy

Medical education must evolve to prepare future doctors for AI-augmented practice.

Physicians need digital literacy to understand how AI systems work, their limitations, and how to interpret their outputs critically. They need training in when to trust AI recommendations and when to override them based on clinical judgment.

WHO notes that millions of healthcare workers will require digital skills training or retraining as some functions become automated.

Regulatory Frameworks

Governments must develop appropriate governance structures.

WHO outlines six principles for AI regulation: protecting human autonomy, promoting human wellbeing and safety, ensuring transparency, fostering responsibility and accountability, ensuring inclusiveness and equity, and promoting responsiveness and sustainability.

These principles should guide laws and policies that balance innovation with patient protection.

Workflow Integration

Healthcare systems need thoughtful implementation strategies.

Poorly integrated AI creates workflow disruptions, alert fatigue, and physician frustration. Successful deployment requires involving clinicians in design decisions, providing adequate training, and continuously evaluating real-world performance.

The goal isn’t to maximize automation but to optimize the human-AI partnership.

| Application Area | AI Readiness | Current Impact | Primary Limitation |
|---|---|---|---|
| Diagnostic Imaging | High | Significant assistance in radiology, pathology, dermatology | Requires physician interpretation and context |
| Administrative Tasks | High | Reducing documentation burden, scheduling automation | Integration with existing systems |
| Clinical Decision Support | Moderate | Flagging drug interactions, suggesting diagnoses | Liability concerns, accuracy in edge cases |
| Physical Examination | Low | Minimal; some sensor-based monitoring | Requires embodied intelligence |
| Patient Communication | Low | Basic chatbots for triage | Lacks empathy and nuanced understanding |
| Surgical Procedures | Moderate | Robotic assistance in specific procedures | Requires human surgeon control and judgment |
| Ethical Decision-Making | Very Low | None; remains an entirely human domain | AI cannot apply moral reasoning |

Start With Real Medical Use Cases Before Rethinking Roles

AI is already being used in healthcare, but not as a replacement for doctors. It works in specific areas, processing medical data, identifying patterns, or supporting routine tasks. Diagnosis, responsibility, and final decisions still stay with medical professionals. AI Superior focuses on turning these capabilities into practical systems. Instead of broad assumptions, they help organizations define clear use cases and build solutions that fit into existing environments.

What they typically help with:

- Identifying realistic AI use cases before development

- Building custom AI models and data-driven tools

- Integrating AI into current systems and workflows

- Validating ideas through proof of concept before scaling

👉Contact AI Superior and see where AI can support your workflows without replacing the people who run them.

The Verdict on AI Replacing Doctors

So will AI replace doctors?

The evidence says no. Not completely, and probably not even mostly.

AI will transform medical practice dramatically. It’ll automate certain tasks, enhance diagnostic accuracy in specific domains, and free physicians from administrative drudgery. It might change what doctors spend their time on, shifting focus toward the uniquely human elements of care.

But the core of medicine—the judgment, empathy, ethical reasoning, physical skills, and human connection that define excellent patient care—remains beyond AI’s capabilities.

As research made available through the National Center for Biotechnology Information concludes, AI is “complementing, not replacing, doctors and healthcare providers.”

The future looks like physicians wielding powerful AI tools to practice better medicine, not algorithms practicing medicine alone.

What we’re building is augmented intelligence in healthcare—human doctors enhanced by computational power, not replaced by it.

Frequently Asked Questions

Will AI take away doctors’ jobs in the next 10 years?

AI is unlikely to eliminate physician jobs within the next decade. While AI will automate certain tasks like documentation and basic image analysis, the complex judgment, physical examination skills, and human relationships central to medicine cannot be replicated by current or near-future AI. According to WHO guidance and research indexed by NCBI, AI serves as a complement to physicians rather than a replacement. Doctors’ roles will evolve to focus more on tasks requiring human intelligence, but demand for physicians remains strong given ongoing healthcare workforce shortages.

Can AI diagnose diseases better than doctors?

AI can outperform doctors on specific, narrow diagnostic tasks with well-defined parameters, such as identifying certain patterns in medical images or screening for particular conditions. On broader tests it can still fall short: one AI model scored 79.5% on mock FRCR radiology examinations, below the 84.8% average of recently qualified radiologists. Diagnosis in real clinical practice also involves integrating multiple information sources, patient history, physical examination findings, and contextual factors that AI cannot fully process. AI works best as a diagnostic assistant that flags potential issues for physician review rather than as an independent diagnostic tool.

What medical specialties are most at risk from AI?

Radiology, pathology, and dermatology face the most AI disruption since they rely heavily on image interpretation, AI’s strongest capability. However, “at risk” doesn’t mean replacement. These specialists are learning to work with AI tools that handle routine screening while they focus on complex cases, procedural work, patient consultation, and treatment planning. More than half of the AI-based medical devices approved in the USA between 2015 and 2020 focused on medical image classification, but these tools augment rather than replace specialists. Specialties requiring extensive physical procedures, complex patient interaction, or ethical decision-making face minimal displacement risk.

Are patients comfortable with AI making medical decisions?

Patient attitudes vary, but most prefer AI-assisted rather than AI-driven care. People generally accept AI for administrative tasks, screening, and flagging potential issues, but want human doctors making final decisions about their treatment. WHO emphasizes that protecting human autonomy in healthcare means humans must remain in control of medical decisions. Trust remains a central issue—patients need transparent information about when and how AI is used in their care. The doctor-patient relationship built on empathy and trust cannot be replicated by algorithms alone.

How can doctors prepare for working with AI?

Physicians should develop digital literacy to understand AI capabilities and limitations. This includes learning how AI systems are trained, what biases they might contain, and when to trust versus question AI recommendations. Medical education is beginning to incorporate AI training, but practicing physicians can pursue continuing education in health informatics. According to research on physician productivity, those who embrace AI for administrative tasks report reduced burnout. Doctors should also advocate for thoughtful AI implementation in their institutions that truly supports clinical workflow rather than creating additional burdens.

What regulations govern medical AI?

In the United States, the FDA regulates AI-enabled medical devices through its existing device frameworks, with more than 1,400 AI-enabled devices now authorized. The FDA maintains a publicly available list of authorized AI medical devices and has developed assessment paradigms specifically for AI and machine learning applications. WHO has published global guidance emphasizing six principles: protecting autonomy, promoting safety, ensuring transparency, fostering accountability, ensuring equity, and promoting sustainability. However, AI technology evolves faster than regulation, creating ongoing challenges for governance frameworks worldwide.

Will AI make healthcare more accessible to underserved populations?

AI has the potential to extend healthcare access to resource-poor and rural areas where physician shortages exist. WHO notes that diagnostic AI and telemedicine could bridge gaps in specialist access for underserved communities. The WHO Skin NTDs App demonstrated this potential in Kenya, assisting healthcare workers in areas lacking dermatologists. However, WHO cautions against overestimating AI benefits at the expense of fundamental healthcare infrastructure investments. AI trained primarily on data from wealthy countries may perform poorly for diverse populations, risking increased inequity rather than reduced disparities. Careful design and validation across diverse settings are essential.

The Bottom Line

AI represents the most significant technological shift in medicine since the introduction of modern imaging.

It won’t replace doctors. But it will fundamentally change how medicine is practiced.

Physicians who learn to leverage AI tools effectively will deliver better, more efficient care than those who resist the technology. Healthcare systems that implement AI thoughtfully—with physician input, adequate training, and continuous evaluation—will improve outcomes while reducing clinician burnout.

The goal isn’t choosing between human doctors and artificial intelligence. It’s building a healthcare system where both work together, each contributing what they do best.

That’s the future worth building. Not one where algorithms practice medicine alone, but one where human physicians empowered by AI deliver the most effective, empathetic, and equitable care possible.