Quick Summary: Predictive analytics in clinical trials uses statistical modeling, machine learning, and historical data to forecast patient outcomes, optimize trial design, and improve recruitment efficiency. FDA guidance now supports AI-driven predictive models for regulatory decision-making, with validation frameworks ensuring model accuracy through metrics like calibration slope and Brier scores. Organizations implementing these tools report faster timelines, better patient stratification, and reduced development costs.

Clinical trials have long been the most expensive, time-consuming phase of drug development. Traditional approaches rely heavily on retrospective analysis and educated guesses about patient responses, protocol feasibility, and enrollment timelines.

But that’s changing fast.

Predictive analytics now applies statistical techniques and machine learning algorithms to current and historical trial data, enabling researchers to forecast outcomes before they happen. The FDA has recognized this shift, issuing formal guidance on artificial intelligence use throughout the drug development process and clinical trial design.

The pharmaceutical industry is increasingly turning to these data-driven approaches for everything from identifying new drug targets to forecasting clinical trial timelines. Real talk: the organizations that master predictive analytics are seeing measurable improvements in trial success rates, recruitment speed, and overall efficiency.

What Predictive Analytics Actually Means for Clinical Trials

Predictive analytics applies statistical and modeling techniques to current and historical data, making it possible to anticipate future events with quantifiable confidence levels. In the clinical trial context, this translates to forecasting patient enrollment rates, predicting dropout risks, identifying likely responders to treatment, and estimating protocol feasibility before committing millions to execution.

According to FDA guidance, artificial intelligence refers to machine-based systems that can make predictions, recommendations, or decisions influencing real or virtual environments. These systems perceive environments through various inputs, abstract perceptions into models through automated analysis, and use model inference to formulate actionable options.

The technology stack is now dominated by Large Medical Models (LMMs) and Multi-modal Foundation Models integrated with traditional ML for clinical evidence generation.

The Components That Make It Work

Clinical prediction models follow a structured development process. Research published in medical journals outlines seven critical steps: determining the prediction problem and defining predictors and outcomes, coding predictors appropriately, specifying a model architecture, estimating model parameters, evaluating model performance, validating against external datasets, and presenting the model in a clinically useful format.

Current 2026 validation frameworks, including the updated TRIPOD+AI statement, prioritize calibration intercept/slope and Decision Curve Analysis (DCA) over rigid R² differences, requiring shrinkage factors tailored to the specific clinical impact.

Use Predictive Analytics in Clinical Trials with AI Superior

AI Superior works with structured and unstructured data to build predictive models that support trial planning, monitoring, and analysis.

The focus is on models that fit into regulated workflows and can handle complex datasets used in clinical environments.

Looking to Apply Predictive Analytics in Clinical Trials?

AI Superior can help with:

- assessing clinical and research data

- building predictive models

- integrating models into existing systems

- refining outputs based on usage

👉 Contact AI Superior to discuss your project, data, and implementation approach

Where Predictive Analytics Delivers the Biggest Impact

The applications span the entire clinical trial lifecycle. Here’s where organizations are seeing the clearest returns.

Patient Recruitment and Site Selection

Finding the right patients remains one of the most stubborn bottlenecks in clinical research. Predictive models analyze electronic health records, claims data, and registry information to identify candidate populations that match enrollment criteria. More importantly, these models forecast which sites will enroll fastest based on historical performance, patient demographics, and local disease prevalence.

The difference between a well-targeted recruitment strategy and a poorly planned one can mean months of timeline variance and hundreds of thousands in wasted screening costs.

Protocol Optimization and Feasibility Assessment

Before locking a protocol, predictive analytics can simulate thousands of trial scenarios, testing different inclusion criteria, visit schedules, endpoint selections, and sample size requirements. This computational approach identifies design flaws that would otherwise surface months into execution.

Research on multiple sclerosis prognostic models has found that many published models include predictors unlikely to be measured in primary care settings, severely limiting their practical utility. Predictive feasibility assessment catches these implementation gaps early.

Interim Decision-Making and Adaptive Designs

Validation analyses based on completed trials and real-world data now support the selection of predictive models and interim analysis rules for future studies. The FDA has acknowledged this application, noting that AI and machine learning are gaining traction in clinical research and changing the trial landscape.

Adaptive trial designs use accumulating data to modify aspects like sample size, treatment arms, or patient populations while the study is ongoing. Predictive analytics powers these decisions, ensuring modifications improve efficiency without compromising statistical integrity.

Safety Monitoring and Adverse Event Prediction

Machine learning models trained on historical safety databases can flag patients at elevated risk for specific adverse events before they occur. This enables proactive monitoring protocols, more informed consent conversations, and earlier intervention when warning signs emerge.

The calibration of these safety models matters enormously. Poorly calibrated predictions lead to either alarm fatigue from false positives or missed signals from false negatives.

Validation Standards and Regulatory Considerations

The FDA’s 2024 guidance on using artificial intelligence to support regulatory decision-making for drug and biological products establishes clear expectations. Sponsors must demonstrate that predictive models are fit for their intended use, properly validated, and transparently documented.

Model performance isn’t just about accuracy. Calibration matters enormously in clinical contexts. A well-calibrated model’s predictions match observed outcomes across the probability spectrum. Poorly calibrated models might show high discrimination (separating events from non-events) while systematically over- or under-predicting absolute risk.

Calibration assessment typically involves fitting a calibration line on observations versus predictions, summarizing performance with two numbers: the intercept and slope. Alternatively, smooth calibration curves assess local calibration across different risk strata.

External Validation Requirements

Internal validation on the development dataset isn’t enough. Prediction models must demonstrate transportability to new populations, different healthcare settings, or future time periods. External validation reveals whether a model trained on academic medical center data maintains accuracy in community hospitals, or whether geographic variations in disease presentation affect performance.

Published cardiovascular risk prediction models demonstrate strong discrimination; well-validated models achieve c-index values in the 0.84–0.87 range across different modeling strategies. The consistency across approaches builds confidence, but the modest variation across subgroups highlights the importance of comprehensive validation.

Context matters too. A lung cancer prediction model validated in a thoracic surgery clinic population with high cancer prevalence may not perform similarly in a general outpatient setting with lower disease prevalence. Disease prevalence affects positive and negative predictive values even when sensitivity and specificity remain constant.

| Validation Metric | Target Threshold | Clinical Meaning |

|---|---|---|

| Global Shrinkage Factor | ≥0.9 | Minimal optimism in predictor effects |

| R² Difference (Continuous) | ≤0.05 | Stable explained variance |

| R² Difference (Binary) | ≤0.05 | Consistent classification performance |

| Brier Score (Binary) | 0–0.25 | 0=perfect, 0.25=non-informative |

| Residual SD Margin | ≤10% | Precise estimation of variability |

Implementation Challenges That Actually Matter

Theory is one thing. Deployment is another.

Data Quality and Integration Barriers

Predictive models are only as good as their training data. Clinical trial databases often suffer from missing values, inconsistent coding, selection biases, and limited diversity. Electronic health record data brings its own issues: documentation variability, billing code inaccuracies, and structural differences across EHR systems.

Integrating data from multiple sources requires extensive cleaning, standardization, and validation. That’s unglamorous work, but it determines whether predictions generalize or fail spectacularly when confronted with real-world messiness.

Model Interpretability Versus Performance Trade-offs

Complex machine learning architectures often outperform simpler statistical models on predictive accuracy. But that performance advantage comes at the cost of interpretability. Regulatory reviewers and institutional review boards want to understand why a model makes specific predictions, especially when those predictions influence patient safety decisions.

Linear models and decision trees offer transparency. Deep neural networks offer opacity. The optimal choice depends on the specific application, regulatory requirements, and available validation resources.

Equity and Bias Considerations

Predictive models can perpetuate or amplify existing healthcare disparities if training data under-represents certain populations or if predictor variables correlate with protected characteristics. Implementation efforts must incorporate equity considerations from inception through deployment, routinely auditing model performance across demographic subgroups.

The FDA guidance explicitly addresses this concern, recommending that sponsors evaluate whether AI systems perform consistently across relevant patient subpopulations and clinical contexts.

The Current State of Adoption

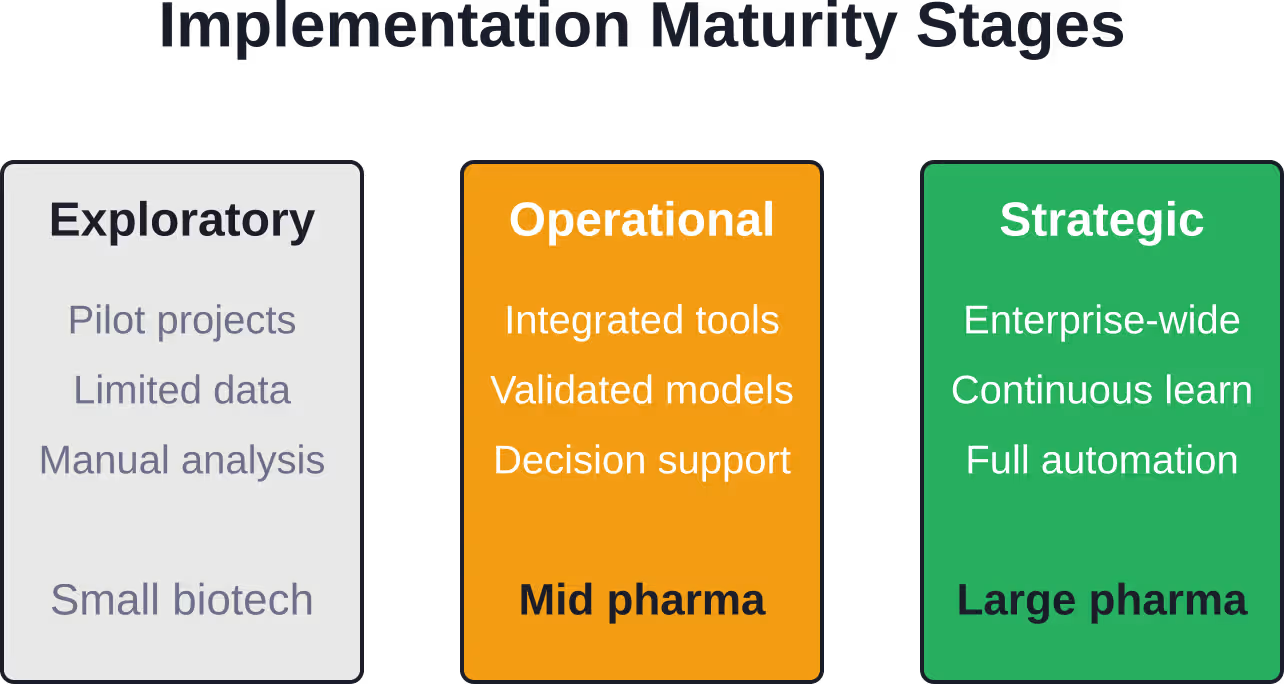

Pharmaceutical companies and contract research organizations have moved beyond pilot projects. Predictive analytics now influences real trial decisions, with organizations building dedicated data science teams and investing in analytics infrastructure.

That said, adoption remains uneven. Large pharmaceutical companies with extensive historical trial databases and data science capabilities lead the way. Smaller biotechs and academic research centers face steeper resource barriers.

The technology stack continues evolving rapidly. Cloud-based analytics platforms, federated learning approaches that preserve data privacy, and pre-trained foundation models adapted for clinical applications are all gaining traction.

Practical Steps for Getting Started

Organizations new to predictive analytics don’t need to build everything from scratch. Start with well-defined, high-impact use cases where data availability is strong and validation feasibility is clear.

- Patient recruitment optimization represents an accessible entry point. Historical enrollment data, site performance metrics, and screening failure rates provide rich training datasets for relatively straightforward predictive models.

- Protocol feasibility assessment comes next. Analyzing past protocols against actual enrollment timelines reveals patterns that inform future design decisions. This doesn’t require exotic algorithms—even basic regression models deliver value when applied systematically.

- Building internal capability matters more than buying software. Train clinical operations teams to interpret model outputs, question assumptions, and integrate predictions into decision workflows. The best predictive model is useless if stakeholders don’t trust it or understand how to act on its insights.

- Partnerships with academic medical centers, contract research organizations with analytics capabilities, or technology vendors can accelerate learning curves. But maintain ownership of core competencies. Predictive analytics will increasingly differentiate successful drug development organizations from struggling ones.

Looking Forward

The trajectory is clear. Regulatory acceptance of AI-driven decision support continues expanding. Data availability keeps growing. Computational capabilities keep improving. The organizations that build robust predictive analytics capabilities now will have compounding advantages over competitors still relying on intuition and spreadsheets.

But wait.

Technology alone won’t solve clinical trial inefficiencies. Predictive models need thoughtful implementation, continuous validation, and integration with human expertise. The goal isn’t replacing clinical judgment—it’s augmenting it with quantitative evidence that wasn’t previously accessible.

As the FDA noted in its guidance discussions, AI and machine learning are changing the clinical trial landscape. That transformation creates opportunities for organizations willing to invest in the infrastructure, talent, and cultural changes required to make data-driven decision-making routine rather than exceptional.

The question isn’t whether predictive analytics will reshape clinical trials. It’s whether specific organizations will lead that transformation or scramble to catch up later when competitive pressures leave no alternative.

Frequently Asked Questions

What types of data do predictive analytics models use in clinical trials?

Models typically integrate historical clinical trial databases, electronic health records, claims data, disease registries, genomic information, and real-world evidence. The specific data sources depend on the prediction task—recruitment models emphasize patient demographics and site performance, while safety models prioritize adverse event databases and laboratory values.

How accurate are predictive models for clinical trial outcomes?

Accuracy varies significantly by application and model quality. Well-validated cardiovascular risk models achieve c-index values in the 0.84–0.87 range, indicating strong discrimination between high and low-risk patients. However, poorly developed models may perform no better than chance. External validation on independent datasets is essential before trusting any model’s predictions.

Does the FDA require specific validation standards for AI in clinical trials?

The FDA’s 2024 guidance on artificial intelligence for drug development recommends that sponsors demonstrate models are fit for purpose, properly validated, and transparently documented. While specific numerical thresholds aren’t mandated, published validation frameworks suggest metrics like shrinkage factors ≥0.9 and R² differences ≤0.05 for continuous outcomes.

Can predictive analytics reduce clinical trial costs?

Industry reports suggest substantial potential savings through optimized site selection, reduced screen failure rates, better patient stratification, and earlier identification of futile trials. However, realizing these benefits requires upfront investment in data infrastructure, model development, and validation—and not all applications deliver positive returns.

What’s the difference between predictive analytics and machine learning in trials?

Predictive analytics is the broader discipline of using data to forecast future outcomes. Machine learning represents specific algorithmic approaches within predictive analytics that automatically learn patterns from data. All machine learning is predictive analytics, but not all predictive analytics uses machine learning—traditional statistical regression counts too.

How do organizations handle bias in predictive models?

Best practices include evaluating model performance across demographic subgroups, ensuring training data represents diverse populations, auditing predictor variables for correlations with protected characteristics, and establishing governance processes that incorporate equity considerations from model inception through deployment. The FDA guidance explicitly recommends assessing performance across relevant patient subpopulations.

What skills do teams need to implement predictive analytics?

Successful implementation requires data scientists with statistical and machine learning expertise, clinical domain experts who understand trial operations and medical context, data engineers who can integrate and clean disparate data sources, and change management specialists who can drive adoption among skeptical stakeholders. No single person needs all these skills, but the team collectively must cover them.

Conclusion

Predictive analytics has moved from experimental curiosity to operational necessity in clinical trials. The FDA’s formal guidance, published validation frameworks, and growing body of real-world implementations all point to a fundamental shift in how trials are designed and executed.

Organizations that build data infrastructure, develop validation expertise, and integrate predictive insights into decision workflows are already seeing measurable improvements in recruitment efficiency, protocol feasibility, and patient outcomes. Those that delay face mounting competitive disadvantages as peers gain compounding benefits from data-driven approaches.

The technology will keep improving. The real question is whether specific organizations will build the capabilities to harness it effectively. Start with well-scoped use cases, invest in proper validation, and focus on integration with existing workflows rather than wholesale replacement of current processes.

The future of clinical trials is quantitatively predictable. The organizations that embrace that reality systematically will lead the next generation of drug development.