Quick Summary: Private LLM evaluation services typically cost between $249 and $10,000+ monthly for platforms, while custom evaluation projects range from $125K to $820K annually depending on scale. Costs are driven by model size, infrastructure requirements, team expertise, and deployment complexity. Open-source evaluation tools exist, but operational expenses for hosting, talent, and maintenance often exceed platform subscription fees.

The rush to deploy private large language models has created a painful realization for many organizations: building the model is just the beginning. Evaluating whether it actually works? That’s where things get expensive.

Unlike public API-based models where evaluation can happen through simple benchmarking, private LLMs demand rigorous, ongoing testing that accounts for proprietary data, custom use cases, and enterprise security requirements. The evaluation infrastructure alone can match or exceed the hosting costs of the models themselves.

Here’s the uncomfortable truth: enterprises consistently underestimate evaluation costs by 40-60%. They budget for hardware and engineers but forget about continuous testing infrastructure, red teaming specialists, and the operational overhead of maintaining evaluation pipelines that run thousands of times per month.

This breakdown covers platform pricing, infrastructure expenses, talent costs, and the hidden operational bleed that turns “affordable” open-source evaluation into a six-figure annual commitment.

Understanding Private LLM Evaluation: What You’re Actually Paying For

Private LLM evaluation isn’t just running a model through a benchmark suite and calling it done. It’s a continuous process that spans multiple dimensions.

The evaluation process covers accuracy testing, security vulnerability scanning, performance optimization, bias detection, and regulatory compliance validation. Each dimension requires different tools, datasets, and expertise. Some organizations attempt to cobble together open-source solutions. Others purchase platforms. Most end up with a hybrid that costs more than either approach alone.

The Core Components Driving Costs

Evaluation infrastructure breaks down into several cost centers. Platform subscriptions or licensing fees form the visible baseline. Infrastructure costs for running evaluations at scale add another layer. Then there’s the talent expense—ML engineers, evaluation specialists, and domain experts who design tests and interpret results.

Don’t forget data costs. Custom evaluation datasets, whether licensed from vendors or created internally, represent a significant investment. According to NIST’s Center for AI Standards and Innovation (CAISI), building gold-standard AI systems requires gold-standard AI measurement science—and those don’t come cheap.

The final piece? Integration and maintenance overhead. Evaluation pipelines need to connect with existing MLOps workflows, version control systems, and monitoring platforms. That integration work rarely appears in initial cost estimates but consistently consumes 20-30% of evaluation budgets.

Platform-Based Evaluation Services: Pricing Benchmarks

Managed evaluation platforms offer the fastest path to comprehensive testing. But pricing varies wildly based on features, scale, and vendor positioning.

Based on available data from 2025-2026, here’s what the market looks like:

| Platform Tier | Monthly Cost | Key Features | Best For |

|---|---|---|---|

| Entry (e.g., Braintrust Pro) | $249 | Unlimited traces, 5GB processed data, 50K scores | Small teams, early-stage products |

| Mid-Tier | $1,500-$3,500 | Advanced analytics, custom benchmarks, team collaboration | Growing products with moderate traffic |

| Enterprise | $5,000-$10,000+ | On-prem deployment, dedicated support, unlimited scale | Large organizations, regulated industries |

| Custom/White-Label | $15,000+ | Full customization, dedicated infrastructure, SLA guarantees | Fortune 500, government agencies |

The data from Braintrust’s pricing structure shows that according to Braintrust, customers consistently report accuracy improvements of 30% or more within just weeks of adoption. That kind of performance gain justifies the platform cost—if the alternative is shipping broken AI features to production.

Giskard offers both open-source and enterprise options. The open-source library is free but requires self-hosting and technical expertise. Their enterprise platform provides continuous AI red teaming and RAG evaluation with managed infrastructure, though specific pricing isn’t publicly disclosed.

What Platform Fees Actually Cover

Platform subscriptions typically include the evaluation framework itself, pre-built benchmark suites, hosting for test execution, result analytics dashboards, and some level of support.

What they don’t cover? The compute costs for actually running your models during evaluation. Custom dataset creation. The engineering time to integrate the platform into your workflow. Training your team to use it effectively.

Many platforms charge based on processed data volume or evaluation runs. That $249/month entry tier sounds reasonable until you’re processing 100GB of evaluation data monthly and suddenly need the enterprise plan.

Infrastructure Costs for Self-Hosted Evaluation

Some teams choose to build evaluation infrastructure using open-source tools like Lighteval or Hugging Face’s evaluation libraries. The software is free. Everything else costs money.

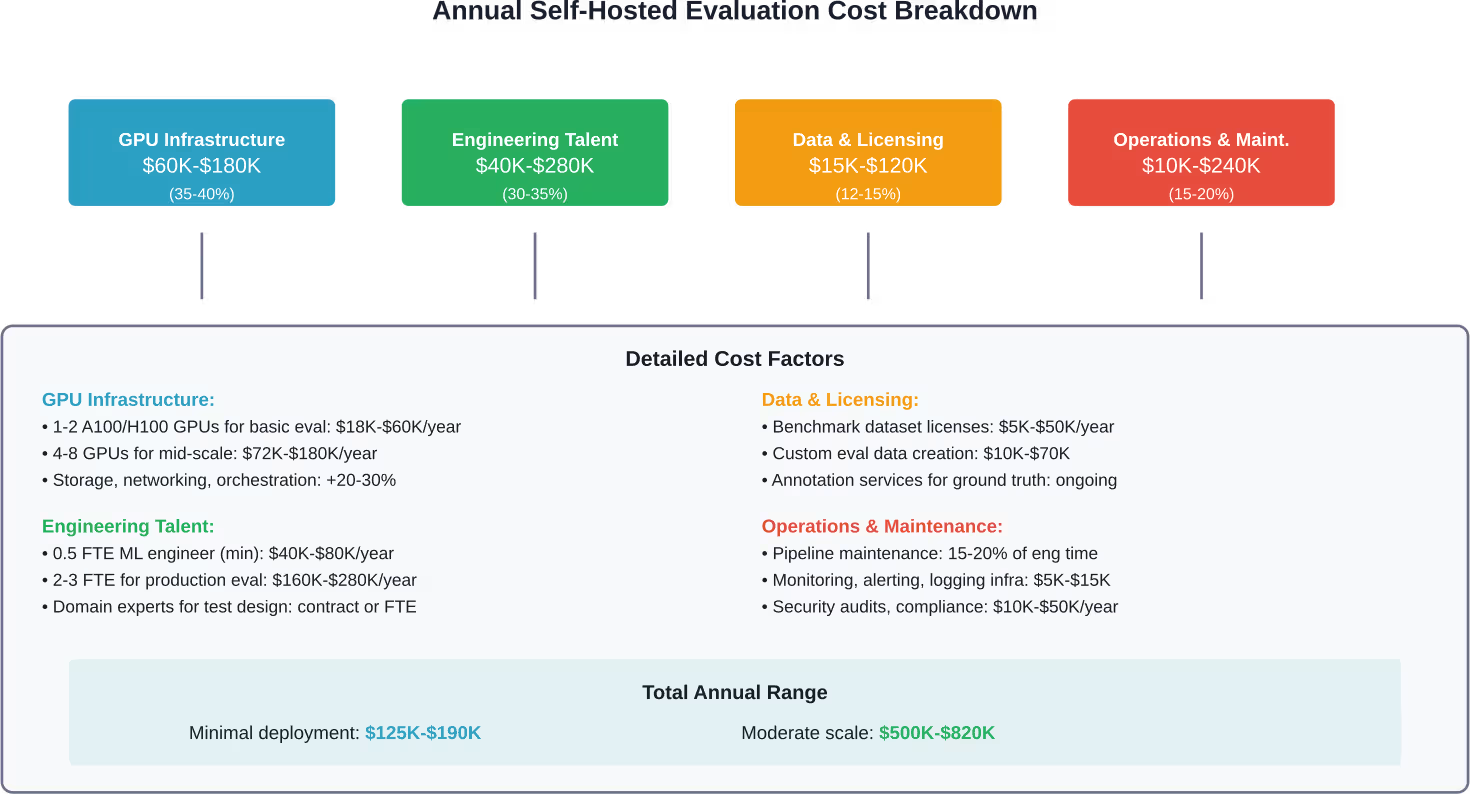

Even a minimal internal deployment can cost $125K-$190K annually. That’s for a small-scale setup serving internal use cases. Moderate-scale, customer-facing evaluation features? Expect $500K-$820K annually, conservatively.

Here’s what drives those numbers:

GPU and Compute Requirements

Running evaluations means repeatedly executing models against test datasets. For a 7B-13B parameter model, a single A100 or H100 GPU handles basic evaluation workloads. Monthly cloud GPU costs for that tier run approximately $1,500-$5,000.

Scale up to 30B-70B models? Now the requirement jumps to 4-8 GPUs, and monthly active costs hit $6,000-$15,000. The evaluation infrastructure can easily match the production hosting costs.

Based on 2025 competitive data, entry-level deployments with 7B-13B models on a single GPU cost approximately $1.5K-$5K monthly. Mid-tier deployments with larger models on 4-8 GPUs range from $6K-$15K monthly. Enterprise deployments with the largest models can exceed $30K monthly just for compute.

But here’s the catch: evaluation doesn’t run continuously like production serving. It runs in bursts. That creates inefficiency. Teams either overprovision and waste money on idle GPUs, or underprovision and create bottlenecks that slow development cycles.

The Talent Tax Nobody Mentions

Open-source tools don’t configure themselves. They require skilled engineers who understand both the evaluation frameworks and the specific domain being tested.

Even pre-trained models need expert handlers. Someone has to design evaluation protocols, select appropriate benchmarks, interpret results, and translate findings into actionable improvements. That requires ML expertise combined with domain knowledge—a combination that commands $150K-$250K in annual salary for experienced practitioners.

Small teams might allocate 0.5 FTE (full-time equivalent) to evaluation work initially. That’s $75K-$125K in loaded cost (salary plus benefits and overhead). Moderate-scale deployments require 2-3 dedicated engineers, pushing talent costs to $300K-$750K annually.

Community discussions highlight this gap repeatedly. Teams assume they’ll just “use the open-source eval library” without budgeting for the expertise needed to use it effectively. Six months later, they’re either hiring specialists or abandoning their evaluation efforts entirely.

Model Size and Complexity Impact on Evaluation Costs

The relationship between model size and evaluation cost isn’t linear. It’s exponential in the worst cases.

Small models (1-3B parameters) run quickly through evaluation suites. A comprehensive test might take minutes to hours. Large models (30B-70B parameters) can take days for the same evaluation depth. Mixture-of-Experts (MoE) architectures add another complexity layer.

According to research on MoE systems, these models possess large parameter counts—some reaching as high as 1,571 billion—but only activate 1-25% during token processing. That sparse activation creates evaluation challenges. Standard benchmarks might not adequately test all expert pathways, requiring custom evaluation protocols.

Parameter Count vs. Evaluation Complexity

Here’s how model scale translates to evaluation overhead:

| Model Size | Typical Parameters | VRAM (4-bit) | Eval Time per Test | Monthly Eval Cost |

|---|---|---|---|---|

| Small | 1-3B | ~2 GB | Minutes | $200-$800 |

| Medium | 7-13B | 6-8 GB | Hours | $800-$2,500 |

| Large | 30-70B | 20-40 GB | Hours to days | $3,000-$8,000 |

| Extra Large | 100B+ | 60+ GB | Days | $10,000+ |

These estimates assume regular evaluation cadence (weekly comprehensive tests plus daily smoke tests). Teams practicing continuous evaluation with every code change will see costs multiply.

Specialized Architectures Demand Specialized Testing

Standard transformer models have well-established evaluation protocols. Newer architectures like MoE models, state-space models, or hybrid systems require custom testing approaches.

That customization costs money. Either teams build the testing infrastructure themselves (engineering time) or purchase specialized evaluation services. Either way, the exotic architecture premium adds 30-50% to baseline evaluation costs.

Hidden Costs: Data, Integration, and Operational Overhead

The expenses don’t stop at platforms and infrastructure. Several cost categories hide in plain sight until invoices arrive.

Evaluation Dataset Costs

Public benchmarks like HumanEval (164 coding problems) or MBPP work for general capabilities testing. But private LLMs typically serve specific domains—legal analysis, medical diagnosis, financial modeling, customer service.

Generic benchmarks don’t cut it. Organizations need custom evaluation datasets that reflect their actual use cases, data distributions, and edge cases. Creating those datasets requires either internal effort or external services.

Internal dataset creation costs include subject matter expert time (often $150-$300/hour for specialized domains), annotation labor, quality assurance, and dataset maintenance as products evolve. A modest custom evaluation dataset (5,000-10,000 examples) typically costs $20K-$50K to create and $5K-$15K annually to maintain.

Licensing commercial benchmark datasets adds another expense. Specialized domain datasets (legal, medical, financial) can cost $10K-$100K+ depending on size, quality, and licensing terms.

Integration and Orchestration Expenses

Evaluation doesn’t exist in isolation. It needs to integrate with version control systems, CI/CD pipelines, model registries, experiment tracking platforms, and production monitoring.

Building those integrations consumes significant engineering time. A basic integration between an evaluation platform and existing MLOps infrastructure typically requires 80-200 hours of development and testing. At $150-$250/hour for ML engineering talent, that’s $12K-$50K per integration.

Multiply that across multiple tools in the stack. Then add ongoing maintenance as APIs change and requirements evolve. Integration overhead easily reaches 15-25% of total evaluation costs.

Compliance and Security Auditing

Private LLMs often process sensitive data. Healthcare providers handle PHI. Financial institutions process PII and transaction data. Government agencies manage classified information.

Evaluation infrastructure must meet the same security and compliance standards as production systems. That means security audits, penetration testing, compliance documentation, and potentially dedicated infrastructure with air-gapped deployment.

Security audits for AI systems range from $25K for basic assessments to $200K+ for comprehensive evaluations of complex deployments. Ongoing compliance monitoring adds $10K-$50K annually depending on regulatory requirements.

Platform vs. Self-Hosted: Total Cost of Ownership Comparison

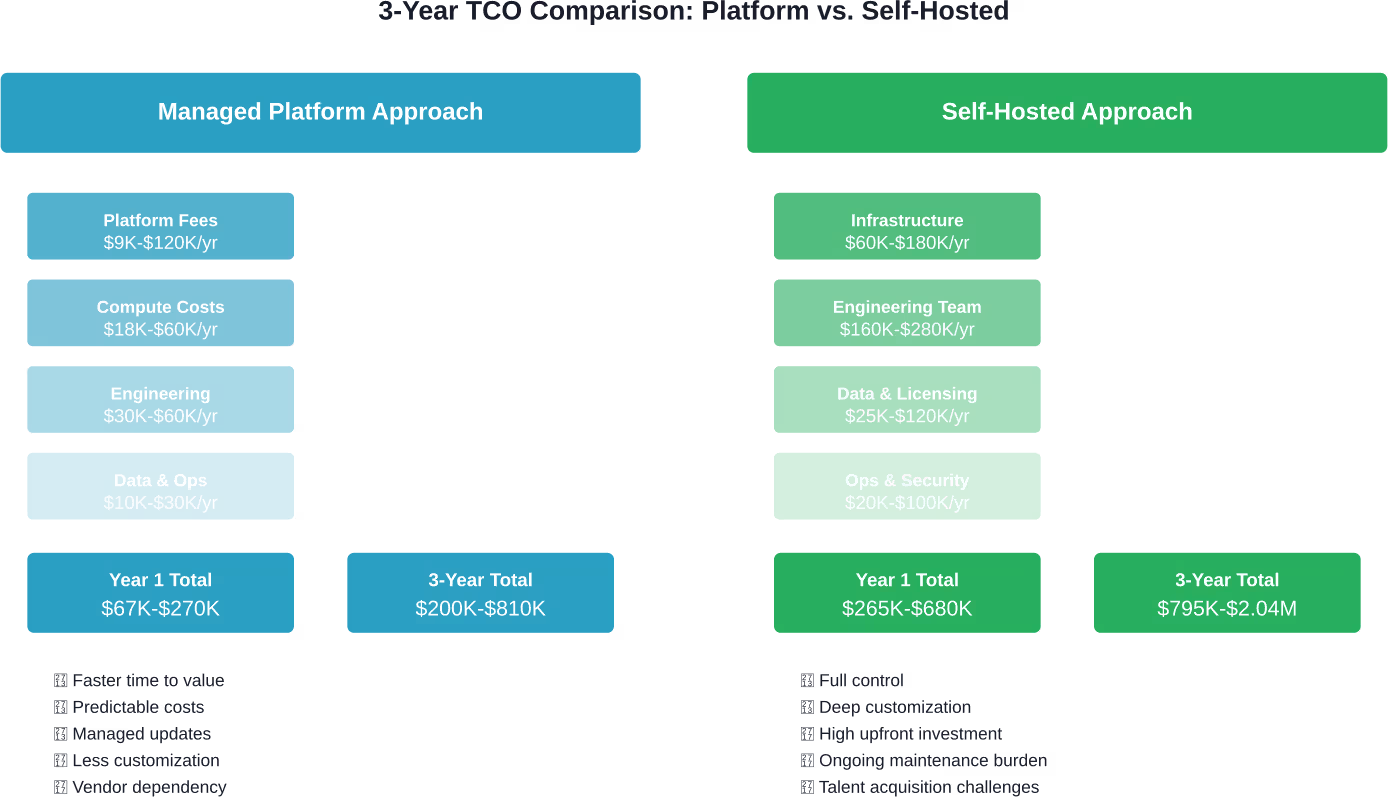

The build vs. buy decision for evaluation infrastructure involves more than comparing platform subscription fees to infrastructure costs.

Total cost of ownership (TCO) includes direct costs (platforms, compute, licenses), talent costs (engineering, operations, specialized expertise), opportunity costs (time to value, feature velocity), and risk costs (evaluation gaps leading to production failures).

The managed platform approach shows lower first-year costs ($67K-$270K vs. $265K-$680K) and significantly lower three-year TCO ($200K-$810K vs. $795K-$2.04M). The self-hosted approach requires 3-4x the investment for comparable functionality.

But those numbers tell only part of the story. Platform approaches deliver faster time to value—often weeks instead of months. Self-hosted solutions offer deeper customization for organizations with unique requirements that platforms can’t accommodate.

When Platform Subscriptions Make Sense

Managed platforms work best for teams that need comprehensive evaluation capabilities quickly, have limited ML infrastructure expertise in-house, want predictable operational costs, or operate at small-to-moderate scale where platform limits aren’t constraining.

The economic framework for evaluating language models suggests focusing on cost-of-pass metrics—how much it costs to get a correct result. Platforms excel here for most organizations because they reduce the engineering overhead needed to achieve reliable evaluation results.

When Self-Hosting Becomes Necessary

Self-hosted infrastructure makes sense when evaluation requirements exceed platform capabilities, data sensitivity prevents external service usage, evaluation volume would make platform fees prohibitively expensive, or deep customization is required for proprietary architectures or evaluation protocols.

Organizations in regulated industries (healthcare, finance, government) often have no choice. Data governance requirements mandate on-premises or private cloud deployment with full control over data flows and access patterns.

Cost Optimization Strategies for LLM Evaluation

Regardless of the platform vs. self-hosted decision, several strategies reduce evaluation costs without sacrificing quality.

Tiered Evaluation Approaches

Not every code change needs full evaluation. Implement a tiered testing strategy: fast smoke tests on every commit (minutes, minimal cost), medium-depth evaluation on pull requests (hours, moderate cost), and comprehensive evaluation on release candidates (days, full cost).

This approach reduces compute costs by 60-70% compared to running comprehensive evaluation on every change while catching most issues early when they’re cheaper to fix.

Efficient Benchmark Selection

A survey on Large Language Model Benchmarks identifies 283 representative benchmarks, indicating the field’s comprehensive approach to LLM evaluation. Instead of running all available benchmarks, identify the 8-10 that matter most for specific use cases. Validate the subset selection quarterly to ensure coverage remains adequate as models evolve.

Hybrid Evaluation Strategies

Combine platform services for standard capabilities testing with custom self-hosted evaluation for domain-specific requirements. Platforms handle the commodity evaluation workload efficiently. Internal infrastructure addresses the specialized 20% that platforms can’t cover.

This hybrid approach typically costs 30-40% less than pure self-hosting while maintaining necessary customization.

Compute Resource Optimization

Evaluation workloads spike and trough. Spot instances and preemptible VMs can reduce cloud GPU costs by 60-80% for evaluation workloads that tolerate interruption and restart.

For teams with consistent evaluation volume, reserved instances offer 40-50% discounts compared to on-demand pricing. The commitment risk decreases as evaluation becomes a permanent part of development workflows rather than an occasional activity.

Make LLM Evaluation Worth the Cost, Not Another Line Item

Private LLM evaluation can get expensive fast, especially when testing is disconnected from how the model is actually built and used. AI Superior approaches evaluation as part of the full model lifecycle – not a separate service layer. Their work includes building and fine-tuning models, setting up validation pipelines, and aligning evaluation with real use cases. This helps avoid over-testing, reduces redundant benchmarks, and keeps evaluation tied to performance that actually matters in production.

Most evaluation costs grow when testing is repeated without improving the system itself. When evaluation is built into development and deployment, you get fewer cycles and clearer results. If you want to turn evaluation into something that actually improves your model instead of just measuring it, contact AI Superior and take a closer look at how your current setup is structured.

Real-World Pricing Examples and Case Studies

Abstract cost ranges become clearer with concrete scenarios.

Small Team: Internal Chatbot

A 15-person startup builds an internal knowledge base chatbot using a fine-tuned 7B parameter model. Evaluation needs include accuracy testing on company-specific queries, safety checks, and performance monitoring.

Approach: Braintrust Pro platform ($249/month, confirmed pricing) plus custom evaluation dataset creation ($15K one-time estimate) plus 0.25 FTE engineering time ($40K/year estimate).

Total first-year cost: $58K. Ongoing annual cost: $43K.

Mid-Sized Company: Customer Service AI

A 200-person SaaS company deploys a 13B parameter model for customer service automation. Evaluation requirements include accuracy, tone appropriateness, hallucination detection, and A/B testing against baseline models.

Approach: Mid-tier platform ($2,500/month) plus moderate GPU resources for self-hosted specialized tests ($4K/month) plus custom domain dataset ($35K) plus 1.5 FTE specialists ($180K/year).

Total first-year cost: $293K. Ongoing annual cost: $258K.

Enterprise: Regulated Industry Deployment

A financial services firm with 5,000 employees builds a 30B parameter model for investment research assistance. Regulatory requirements mandate on-premises deployment, comprehensive audit trails, and third-party validation.

Approach: Self-hosted infrastructure on dedicated hardware ($180K/year GPU costs) plus 3 FTE team ($450K/year) plus commercial datasets and licenses ($80K/year) plus security audits ($50K/year) plus external validation services ($40K/year).

Total first-year cost: $800K. Ongoing annual cost: $800K (plus major infrastructure upgrades every 3 years).

These scenarios illustrate how costs scale with organization size, model complexity, and regulatory requirements. The enterprise example costs 14x more than the small team—but serves 333x more users in a heavily regulated environment.

The Hidden Economics of “Free” Open-Source Evaluation

Open-source LLM evaluation tools carry a seductive promise: zero software licensing costs. Reality proves more expensive.

The challenge isn’t the tools themselves. Lighteval, Hugging Face’s evaluation libraries, and similar frameworks work well. The challenge is everything around them: infrastructure to run them, expertise to use them effectively, maintenance to keep them current, and integration to make them useful.

Community discussions consistently highlight this gap. Teams assume open-source means free. They learn otherwise when they’re six months into a project with $150K invested in engineering time and still struggling to get reliable evaluation results.

Here’s the pattern: download open-source eval framework (free), spend 2 weeks figuring out documentation (engineering cost), spend 1 month building infrastructure (engineering + cloud cost), spend 2 months debugging integration issues (engineering cost), and spend ongoing effort maintaining as frameworks evolve (permanent engineering cost).

That “free” framework just cost $80K-$120K in the first year. For many organizations, paying $3K-$10K for a managed platform would have delivered better results faster at lower total cost.

When Open Source Actually Saves Money

Open-source evaluation tools do make economic sense in specific scenarios: when teams already have ML infrastructure expertise in-house, evaluation requirements are highly specialized and platforms can’t accommodate them, evaluation volume would make platform fees extremely expensive, or organizations have ideological or strategic commitments to open-source technology stacks.

But even in those scenarios, the operational economics matter. The cost structure shifts from platform fees to talent and infrastructure, but the total spend rarely drops as much as initial analysis suggests.

Pricing Trends and Future Cost Predictions

The LLM evaluation market remains immature and pricing volatile. Several trends shape future cost trajectories.

Increasing Competition Drives Platform Prices Down

More vendors enter the evaluation platform space monthly. Competition typically drives prices down and features up. The $249/month entry tier from 2025 might drop to $149/month by 2027 while including capabilities that required enterprise plans previously.

Research on cost-of-pass metrics demonstrates that frontier cost-of-pass has reduced over time with new model releases, with distinct economic insights showing lightweight models most cost-effective for basic tasks. Evaluation services will likely follow similar pricing dynamics.

Infrastructure Costs Remain Sticky

GPU costs haven’t declined meaningfully despite years of predictions. Cloud providers maintain high margins on GPU instances. The hyperscaler oligopoly prevents aggressive price competition.

Don’t expect significant infrastructure cost reductions for self-hosted evaluation in the near term. Efficiency gains from better software might offset 10-15% of compute costs, but hardware economics remain challenging.

Specialization Creates Premium Pricing Tiers

Generic evaluation platforms will commoditize and price competitively. Specialized services for regulated industries, domain-specific evaluation, or advanced capabilities like adversarial testing will maintain premium pricing.

Expect market segmentation: commodity platforms at $200-$500/month, professional platforms at $2K-$5K/month, and specialized services at $10K+/month or custom project pricing.

Frequently Asked Questions

What is the average cost of private LLM evaluation services?

Platform-based evaluation services typically range from $249/month for entry-level plans to $10,000+/month for enterprise deployments. Self-hosted evaluation infrastructure costs $125K-$190K annually for minimal deployments and $500K-$820K annually for moderate-scale production systems. Total costs depend on model size, evaluation frequency, team expertise, and infrastructure choices.

Are open-source LLM evaluation tools really free?

The software itself is free, but operational costs are substantial. Even minimal self-hosted deployments using open-source tools cost $125K+ annually when accounting for infrastructure, engineering talent, data licensing, and maintenance. Organizations must budget for GPU resources, ML engineering expertise, dataset creation, and ongoing operational overhead. The “free” software often costs more in total ownership than paid platforms.

How much does it cost to evaluate a 70B parameter model?

Evaluating large 70B parameter models typically requires 4-8 high-end GPUs and costs $3,000-$8,000 monthly for compute resources alone. Add platform fees ($2,500-$5,000/month) or engineering talent for self-hosted infrastructure (2-3 FTE at $300K-$450K annually), plus custom datasets ($35K-$70K) and ongoing maintenance. Total first-year costs for comprehensive 70B model evaluation range from $150K to $400K depending on evaluation depth and frequency.

What factors most significantly impact LLM evaluation costs?

Model size and architecture drive the largest cost variations. Larger models require more GPUs and longer evaluation times. Evaluation frequency and depth also matter substantially—continuous evaluation costs 5-10x more than weekly testing. Team expertise affects costs because experienced evaluators work more efficiently and make better tool choices. Infrastructure decisions (platform vs. self-hosted) create 3-4x cost differences for comparable capabilities.

Is it cheaper to use evaluation platforms or build custom infrastructure?

Platforms cost less for most organizations. Total three-year platform TCO ranges from $200K-$810K compared to $795K-$2.04M for self-hosted infrastructure with comparable capabilities. Platforms deliver faster time to value and require less specialized expertise. Self-hosted infrastructure makes economic sense only when evaluation volume exceeds platform limits, data governance prevents external services, or highly specialized evaluation requirements exist that platforms can’t accommodate.

How can organizations reduce LLM evaluation costs without sacrificing quality?

Implement tiered evaluation strategies with fast smoke tests on every change and comprehensive testing only on releases, reducing compute costs by 60-70%. Select efficient benchmark subsets rather than running exhaustive test suites. Use hybrid approaches combining platform services for standard testing with targeted self-hosted evaluation for specialized needs. Optimize compute resources through spot instances (60-80% savings) or reserved instances (40-50% savings) for consistent workloads. Focus engineering effort on high-value custom evaluation rather than rebuilding commodity capabilities.

Do evaluation costs scale linearly with model size?

No, evaluation costs scale super-linearly. A 70B model doesn’t cost twice as much to evaluate as a 35B model—it typically costs 3-5x more due to increased GPU requirements, longer evaluation times, and infrastructure complexity. Very large models (100B+ parameters) require specialized infrastructure and techniques that add premium costs. The relationship between parameters and cost accelerates rather than following a linear progression.

Making the Economic Decision

The cost of private LLM evaluation services varies by two orders of magnitude depending on approach, scale, and requirements. Small teams can start with platform solutions for under $5K annually. Large enterprises with specialized needs might spend $1M+ annually on comprehensive evaluation infrastructure.

The economic decision hinges on three factors: required evaluation depth and frequency, available internal expertise, and strategic importance of evaluation capabilities.

For most organizations, managed platforms offer the best economics. Lower upfront investment, faster time to value, and predictable costs outweigh the flexibility advantages of self-hosted infrastructure. The exception? Organizations with truly unique requirements, massive evaluation volume, or regulatory constraints that preclude external services.

But here’s the real insight: evaluation costs should be measured against failure costs. Shipping a broken AI feature to production can destroy customer trust, create regulatory exposure, or damage brand reputation. Those costs dwarf evaluation expenses.

The question isn’t whether to invest in evaluation. It’s how much is adequate for the risk profile. A customer service chatbot might justify $50K annually in evaluation. A medical diagnosis assistant might need $500K. An autonomous vehicle decision system might require $5M+.

Match evaluation investment to consequence severity. Skimping on evaluation to save money today often creates exponentially larger expenses tomorrow when production failures occur.

Ready to implement rigorous LLM evaluation? Start by assessing current evaluation maturity, identifying gaps between current and required capabilities, and calculating the true cost of evaluation failures in specific use cases. That analysis makes the platform vs. self-hosted decision clear—and justifies the necessary investment to stakeholders.