Quick Summary: Datadog LLM Observability provides end-to-end monitoring for AI applications with metrics on token usage, latency, and error rates, but pricing is complex and based on span ingestion. Teams can expect costs to scale with request volume and data retention needs, making it crucial to monitor usage through Datadog’s Cost Management features and set alerts to prevent overages.

Large language model deployments have exploded over the past two years. With that explosion comes a new operational challenge: how do teams monitor these AI workloads without breaking the bank?

Datadog entered the LLM observability space to address exactly this problem. Their platform promises comprehensive visibility into model performance, token usage, and application quality. But here’s the thing—understanding what this capability actually costs requires navigating Datadog’s complex pricing model.

This guide breaks down the cost structure of Datadog LLM Observability, explains the key pricing drivers, and provides practical strategies for controlling spend while maintaining the visibility modern AI applications demand.

Understanding Datadog LLM Observability Pricing Structure

Datadog doesn’t publish a standalone price for LLM Observability on their public pricing page. Instead, the cost model ties directly to their APM (Application Performance Monitoring) infrastructure, which charges based on ingested spans.

According to the official Datadog documentation, LLM Observability generates metrics calculated from 100% of application traffic. These metrics capture span counts, error counts, token usage, and latency measures. The ml_obs.span metric tracks the total number of spans with tags for environment, model name, model provider, service, and span kind.

Each LLM request typically generates multiple spans—one for the overall request, additional spans for preprocessing, model invocation, postprocessing, and any tool calls. The span volume directly impacts costs since Datadog’s APM pricing scales with ingested and indexed span data.

Core Pricing Components

Teams deploying LLM Observability face several cost drivers:

- Span ingestion volume based on request throughput

- Data retention periods (standard vs. extended retention)

- Custom metrics derived from trace data

- Infrastructure monitoring for the underlying compute resources

- Log ingestion if capturing LLM request/response payloads

The challenge? In containerized or microservices environments, costs can scale faster than expected. As one analysis noted, Datadog’s host-based pricing model “can feel outdated and punitive” in dynamic cloud environments where container counts fluctuate.

What Drives LLM Observability Costs

Understanding cost drivers helps teams budget accurately and identify optimization opportunities. Here’s what actually moves the needle on LLM monitoring spend.

Request Volume and Span Generation

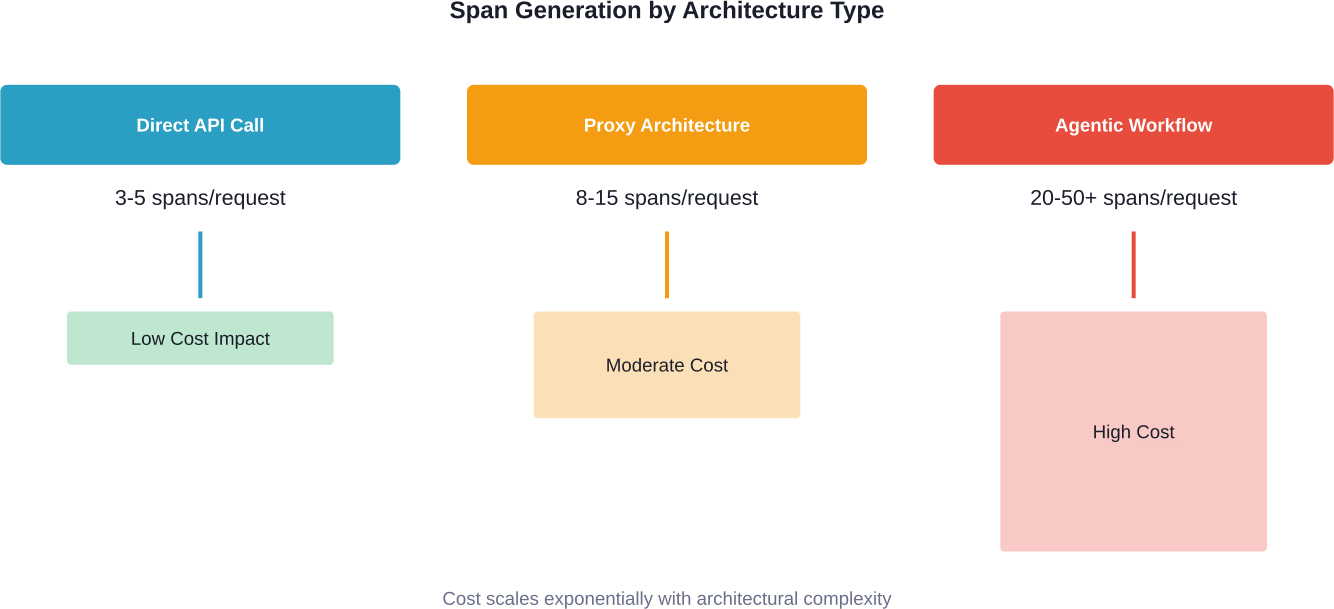

Every LLM API call generates traces. A simple completion request might create 3-5 spans. Complex agentic workflows with tool calls, retrieval steps, and reasoning chains? Those can easily generate 20-50 spans per request.

Consider a team running 1 million LLM requests per day. At a conservative 5 spans per request, that’s 5 million spans daily or 150 million monthly. Span ingestion costs accumulate quickly at this scale.

Proxy-based architectures add another layer. When teams route LLM traffic through gateways like LiteLLM or custom proxy solutions, each routing decision, retry, and fallback creates additional spans. According to Datadog’s guidance on monitoring AI proxies, teams should instrument proxy requests to track “model selection, latency, error rates, and token usage.”

Token Usage Tracking Overhead

Datadog captures token counts as span metadata. For teams processing billions of tokens monthly, storing this telemetry data adds up. The platform tracks both input and output tokens, plus metadata about the model, provider, and request parameters.

Token data becomes particularly valuable when optimizing costs. Teams can identify expensive queries, detect inefficient prompts, or spot unexpected usage patterns. But this visibility comes at the cost of storing high-cardinality data across potentially millions of requests.

Custom Metrics and Dashboards

Beyond standard metrics, teams often create custom dashboards aggregating LLM performance data. Each custom metric query, especially those with high cardinality tags, adds to monthly costs.

Common custom metrics include cost per user session, average tokens per query type, error rates by model version, and latency percentiles by geographic region. These provide business-critical insights but require careful management to avoid runaway metric costs.

Datadog Cost Management for LLM Workloads

Datadog offers tools specifically designed to help teams monitor and control their observability spend. For LLM workloads, these features become essential.

The Datadog Costs feature within Cloud Cost Management provides visibility into observability spending itself. According to the official documentation, teams need the billing_read and usage_read permissions to access cost breakdowns. Only Cloud Cost Management shows actual usage-based costs, while the Plan & Usage page shows prorated monthly estimates.

Setting Token Usage Alerts

One practical cost control strategy involves configuring token usage alerts. As Datadog’s proxy monitoring guidance explains, teams can “set a ‘soft’ quota that triggers a notification at 80% of the limit, and a ‘hard’ quota to prevent any overage.”

This two-tier alert system prevents surprise bills. The soft alert gives teams time to investigate usage spikes, while the hard limit provides a hard stop before costs spiral.

Trace Sampling Strategies

Not every trace requires retention. Teams can implement intelligent sampling to reduce costs while maintaining statistical significance for performance analysis.

Head-based sampling makes decisions at trace initiation—sample 10% of all requests, for example. Tail-based sampling is smarter: keep all error traces and slow requests, but sample only a percentage of successful fast requests. This approach preserves the most valuable debugging data while cutting storage costs.

Datadog supports both approaches through ingestion controls and retention filters. The key is configuring rules that match the team’s debugging needs without paying for comprehensive retention of routine, successful requests.

| Sampling Strategy | Data Retained | Cost Impact | Best For |

|---|---|---|---|

| 100% Retention | All traces | Highest cost | Critical production apps, compliance requirements |

| Head Sampling (10%) | Random subset | 90% reduction | High-volume, stable applications |

| Tail Sampling | Errors + slow requests + sample of normal | 60-80% reduction | Most production LLM applications |

| Error-only | Failed requests only | 95% reduction | Cost-sensitive dev/staging environments |

Comparing Datadog LLM Observability Costs to Alternatives

Datadog isn’t the only player in LLM observability. Understanding the competitive landscape helps teams evaluate whether Datadog’s pricing makes sense for their use case.

Open Source Alternatives

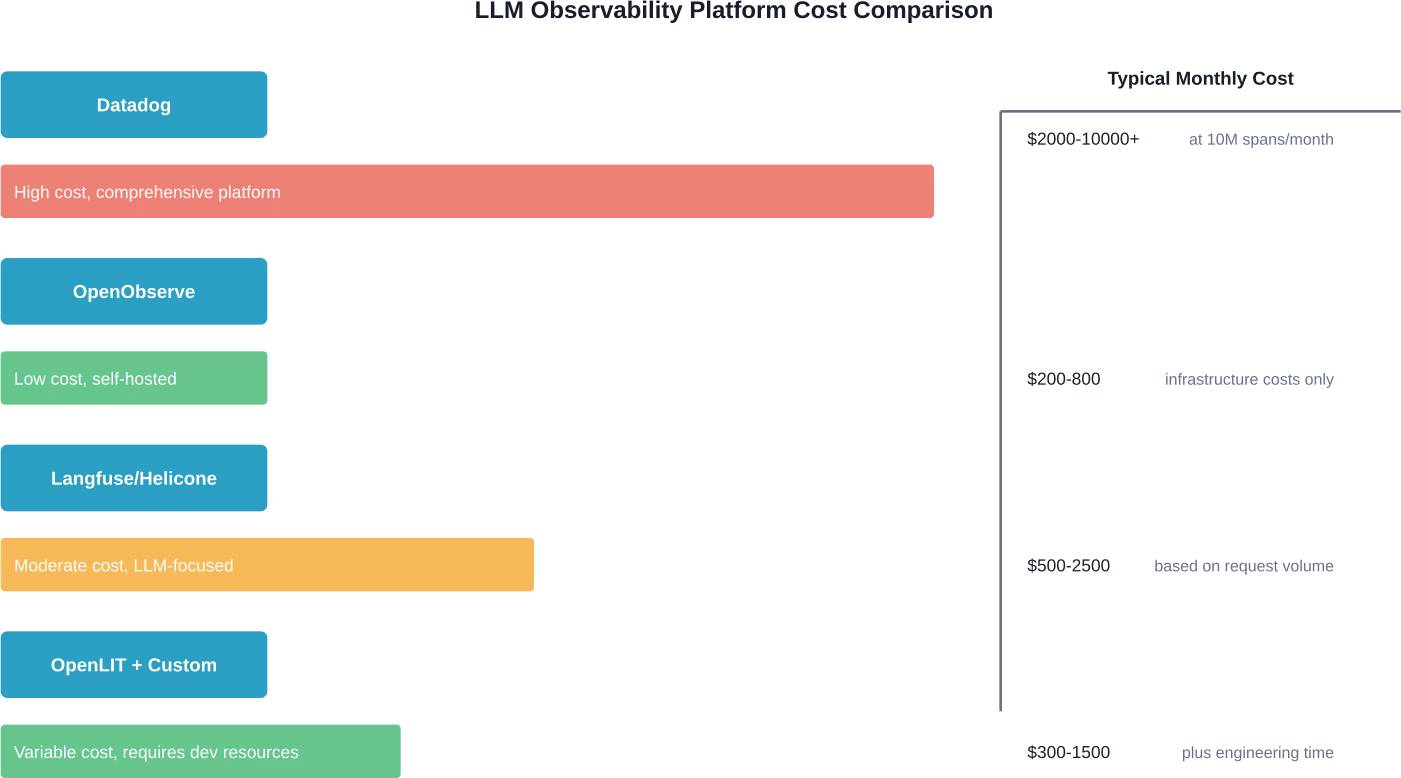

OpenObserve is described as “a cost-effective alternative to Datadog, Splunk, and Elasticsearch with 140x lower storage cost.” The platform uses S3-backed storage with stateless architecture, dramatically reducing infrastructure costs compared to Datadog’s managed service model.

Other open source options include OpenLIT, which provides OpenTelemetry-based monitoring specifically designed for LLM workloads. For teams with engineering resources to manage infrastructure, these alternatives can deliver substantial savings—but at the cost of operational overhead.

Specialized LLM Platforms

Platforms like Langfuse, Helicone, and Arize offer LLM-specific observability with simpler pricing models. Many charge based on tracked requests rather than underlying infrastructure metrics.

The trade-off? These platforms excel at LLM monitoring but lack Datadog’s comprehensive infrastructure observability. Teams already using Datadog for traditional APM often find value in consolidating LLM monitoring within the same platform, despite potentially higher costs.

Middleware and Proxy Solutions

Projects like claude_telemetry demonstrate a hybrid approach: lightweight OpenTelemetry wrappers that log tool calls, token usage, and costs to various backends including Datadog. The claude_telemetry project documentation indicates telemetry can be sent to various backends including Datadog.

This architecture decouples instrumentation from the backend, giving teams flexibility to switch providers if Datadog costs become prohibitive. The instrumentation cost is minimal—just the wrapper overhead—while backend costs scale with the chosen provider’s pricing model.

Practical Cost Optimization Strategies

Managing Datadog LLM Observability costs requires ongoing attention and smart configuration choices. Here are strategies that actually work in production environments.

Optimize Proxy Routing Rules

When using LLM proxies, routing decisions directly impact costs. A query routed to GPT-4 costs significantly more than one handled by GPT-3.5 or a smaller open model.

Datadog’s proxy monitoring guidance recommends tracking model selection performance. If a routing rule sends traffic to an expensive model but quality metrics don’t improve, “revert the routing rule to a faster, cheaper model.” This trace-level visibility pays for itself by preventing unnecessary usage of premium models.

Implement Request Throttling

Runaway request loops or inefficient retry logic can spike both LLM provider costs and observability costs. Datadog traces reveal these patterns through span analysis.

Teams should configure request throttling at the proxy level, with generous limits for legitimate traffic but hard caps to prevent abuse or bugs from generating millions of unnecessary requests. The observability data helps calibrate these limits based on actual usage patterns.

Tag Management Discipline

High-cardinality tags dramatically increase metric storage costs. Tags like user_id, session_id, or request_id on every span create massive data volumes.

Best practice: use high-cardinality identifiers for traces (searchable in span data) but not as metric tags. Reserve metric tags for bounded attributes like model_name, environment, service, and error_type. This maintains debugging capability while controlling metric explosion.

Selective Payload Capture

Capturing full LLM request and response payloads provides incredible debugging value but consumes substantial log storage. A single conversation thread might generate hundreds of kilobytes of logged data.

Strategic approach: capture payloads for errors and high-latency requests automatically, but sample successful requests at 1-5%. Teams can always increase sampling temporarily when investigating specific issues.

Reduce Observability Costs Before They Scale Out of Control

LLM observability tools like Datadog are useful, but they don’t fix underlying inefficiencies. Most costs come from how the model is built, tuned, and deployed, not just how it’s monitored. AI Superior works on that earlier layer: model selection, data preparation, fine-tuning, and deployment design, so you’re not pushing unnecessary load into observability pipelines later. Their work typically covers the full lifecycle, from data handling to optimization and production setup.

If you’re already thinking about observability costs, it’s the right time to step back and fix the architecture behind it. Talk to AI Superior, define what actually needs to be tracked, and build a system that stays predictable instead of getting more expensive with every query.

Real-World Cost Scenarios

What do teams actually spend on Datadog LLM Observability? While specific numbers vary widely based on scale and configuration, some patterns emerge.

Small-Scale Production Application

A startup running 500k LLM requests monthly with average complexity (7 spans per request) generates roughly 3.5 million spans. At typical APM pricing, this might cost $300-600 monthly for span ingestion and retention.

Add infrastructure monitoring for 10-20 containers running the LLM service, and the monthly bill reaches $800-1200. This assumes standard retention and moderate custom metric usage.

Enterprise AI Platform

A large organization processing 50 million LLM requests monthly with complex agentic workflows (averaging 25 spans per request) generates 1.25 billion spans. This volume pushes into Datadog’s enterprise tier pricing.

With negotiated rates and optimized sampling (retaining 20% of traces), costs might range from $8,000-15,000 monthly just for LLM observability. Total Datadog spend including infrastructure monitoring could exceed $30,000 monthly.

Development and Staging

Teams often over-instrument non-production environments. A dev environment generating 5 million requests monthly at full observability might cost $400-800—money better spent on production visibility.

Recommended approach: use aggressive sampling (5-10% retention) in dev/staging, focusing on error capture rather than comprehensive tracing. This cuts costs by 80-90% while maintaining debugging capability.

| Environment Type | Monthly Requests | Sampling Strategy | Est. Monthly Cost |

|---|---|---|---|

| Dev/Test | 5M | 10% + errors | $100-200 |

| Staging | 10M | 20% + errors | $300-500 |

| Production (small) | 500K-2M | 50-100% | $800-1500 |

| Production (medium) | 10M-25M | 30-50% | $3000-6000 |

| Production (enterprise) | 50M+ | 20-30% optimized | $8000-15000+ |

When Datadog Makes Sense Despite Higher Costs

Datadog’s premium pricing isn’t always a dealbreaker. Several scenarios justify the investment.

Organizations already standardized on Datadog for infrastructure and APM monitoring gain significant value from adding LLM Observability. The unified platform eliminates context switching and correlates LLM performance with underlying infrastructure metrics. When a model response slows down, teams can immediately check GPU utilization, network latency, and database performance—all in one interface.

Enterprises with complex compliance requirements benefit from Datadog’s audit capabilities, access controls, and data retention features. Open source alternatives often lack the governance tooling required in regulated industries.

Teams without dedicated platform engineering resources find value in Datadog’s managed service. The alternative—deploying and maintaining open source observability infrastructure—requires ongoing engineering investment that can exceed Datadog subscription costs.

Frequently Asked Questions

How much does Datadog LLM Observability actually cost per month?

Datadog doesn’t publish standalone LLM Observability pricing. Costs depend on span ingestion volume, which varies based on request throughput and application complexity. Small applications might spend $300-800 monthly, while enterprise deployments commonly exceed $8,000-15,000. The pricing scales with APM span ingestion rates and infrastructure monitoring needs.

Can I use Datadog LLM Observability without paying for full APM?

No. LLM Observability builds on Datadog’s APM infrastructure and requires an active APM subscription. The span-based pricing model means LLM traces count toward overall APM span ingestion. Teams need both APM and the infrastructure monitoring components that support the application.

What’s the cheapest way to monitor LLM costs in production?

For basic cost tracking, lightweight solutions like token counters in application code or simple request logging to S3 cost nearly nothing. For comprehensive observability, open source platforms like OpenLIT or OpenObserve offer the lowest infrastructure costs but require engineering time to deploy and maintain. Managed alternatives like Langfuse provide middle-ground pricing focused specifically on LLM workloads.

Does Datadog charge separately for token usage data?

Token counts are stored as span metadata and don’t incur separate charges beyond the underlying span ingestion costs. However, creating custom metrics based on token usage (like aggregate token counts by user or query type) does generate additional custom metric costs. Teams should monitor custom metric usage to avoid unexpected charges.

How can I estimate Datadog LLM Observability costs before deploying?

Calculate expected monthly request volume, estimate spans per request (3-5 for simple calls, 20-50 for complex agents), and multiply to get total spans. Compare this to Datadog’s APM pricing tiers. Add infrastructure monitoring costs for the compute resources running the LLM application. Build in 20-30% buffer for growth and unexpected usage patterns.

Are there cost differences between monitoring different LLM providers?

Datadog’s pricing doesn’t vary based on the LLM provider being monitored (OpenAI, Anthropic, etc.). Costs depend purely on observability data volume—spans, metrics, and logs generated by the monitoring infrastructure. However, different providers may have different response characteristics that affect trace complexity and storage needs.

What happens if I exceed my Datadog budget mid-month?

Datadog typically doesn’t cut off service mid-month but will bill for overages. Teams should configure usage alerts through the Cost Management features and set token quota alerts to prevent runaway spend. The soft/hard quota pattern recommended by Datadog provides warning before hitting limits and can block requests that would exceed budgets.

Making the Right Choice for Your Team

Datadog LLM Observability delivers powerful visibility into AI application performance, token economics, and quality metrics. For teams already invested in the Datadog ecosystem, adding LLM monitoring creates a unified observability strategy.

But the cost model requires careful management. Span volumes scale quickly with request throughput and architectural complexity. Without disciplined sampling strategies, tag management, and usage monitoring, bills can grow faster than value delivered.

The decision ultimately comes down to three factors: existing Datadog investment, engineering resources available for alternatives, and the criticality of unified observability across infrastructure and AI workloads.

For enterprise teams with complex deployments, Datadog’s premium pricing often proves worthwhile. For smaller teams or those with strong platform engineering capabilities, open source alternatives deliver comparable visibility at a fraction of the cost.

Whichever path makes sense, the key is treating observability costs as a first-class concern—monitored, optimized, and justified by the operational insights they enable.

Ready to get started with LLM monitoring? Check the official Datadog documentation for current feature availability and contact their sales team for pricing that matches your specific deployment scale and requirements.