Quick Summary: LLM cost optimization in AI deployment requires a multi-layered approach combining smart model selection, infrastructure tuning, and token management. Organizations can reduce costs by 60-85% through techniques like model routing, semantic caching, and KV cache optimization—without sacrificing accuracy. The key is treating LLM costs like manufacturing unit economics rather than traditional software expenses.

A customer support chatbot handling 500,000 monthly requests at 1,500 tokens per request racks up roughly $18,000 per month—just for a single feature. Scale that to 10,000 daily conversations and costs balloon past $1,500 daily for input tokens alone.

This isn’t traditional cloud cost management. LLM-native products inherit properties from both physical goods and software: they scale instantly like code but carry meaningful variable costs per use. As organizations increasingly deploy large-scale models, cost management has become a competitive differentiator rather than just an operational concern.

The pricing delta between providers is substantial. GPT-5.4 charges $2.50 per million input tokens, while Claude 4.5 Sonnet charges $3 per million input tokens. But provider selection is just the start—production cost optimization demands infrastructure-level thinking.

Why LLM Costs Behave Differently

Traditional software operates on a simple economic model: high upfront development costs, then marginal costs approaching zero for each additional user. Host the application once, serve millions.

AI-native applications break this model completely.

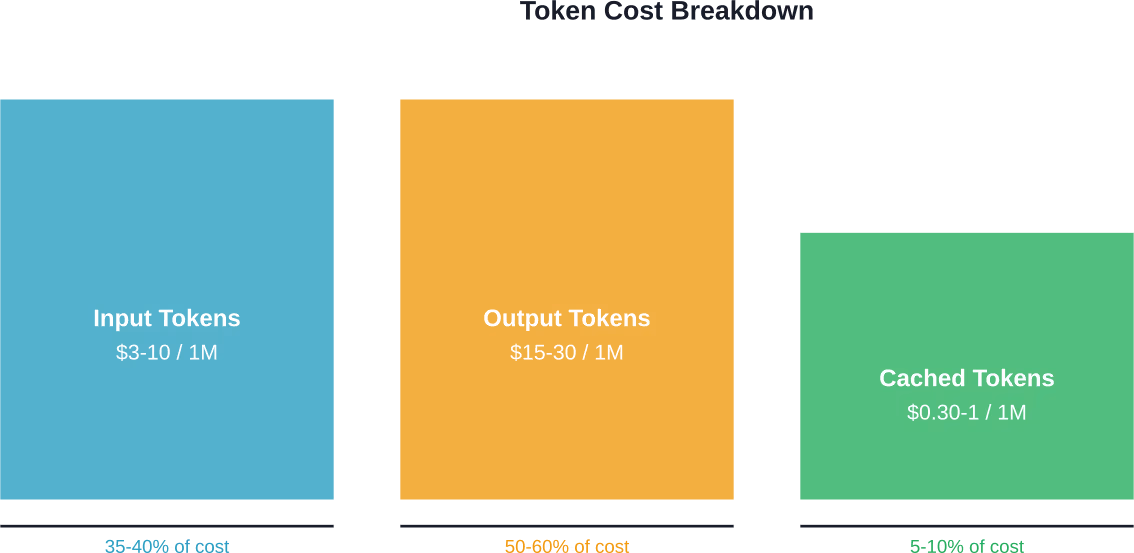

Every inference carries actual compute cost. Input tokens, output tokens, and cached tokens each have different pricing structures. The pricing depends on several interrelated variables that change dynamically based on workload characteristics.

Context length matters more than most teams expect. A model with a context length of 2,048 tokens can attend to up to 2,048 tokens at once. But processing longer contexts increases memory requirements exponentially—not linearly. The key-value cache, which eliminates redundant recomputation of past token representations during autoregressive generation, grows proportionally with sequence length.

Production systems face bottlenecks that don’t exist in development. Memory bandwidth becomes the primary constraint during the decode phase. The multi-head attention mechanism performs multiple attention calculations in parallel, but hardware limitations determine actual throughput.

The Unit Economics Problem

AI startups face unique challenges in three areas: unit economics (cost per inference), capacity planning (GPU supply), and yield optimization (model output quality per token).

Unlike traditional software where the marginal cost of one new user is effectively zero, LLM-native products have significant variable cost components. This forces teams to think like manufacturers—tracking production efficiency, optimizing for throughput, and managing supply constraints.

Real talk: most teams can’t explain their LLM costs with any precision. The complexity of AI cost structures, including compute, memory bandwidth, storage, and networking, creates accountability gaps. Engineering teams lack visibility into which use cases drive expenses or which optimizations would deliver the highest ROI.

Model Selection and Routing Strategies

Recent progress in language models has created an expanding ecosystem. Organizations now choose from dozens of open-source and commercial options, each with different performance-cost tradeoffs.

But treating every query as equally complex wastes money.

| Strategy | How It Works | Typical Savings |

|---|---|---|

| Static Routing | Route queries to predetermined models based on use case | 30-40% |

| Dynamic Routing | Analyze query complexity in real-time, select optimal model | 45-60% |

| Cascading | Try cheaper models first, escalate only when needed | 50-70% |

| LLM Shepherding | Use expensive models for hints, cheaper models for execution | 60-75% |

Research from arXiv demonstrates that Small Language Models (SLMs) with targeted hints from Large Language Models achieve accuracy gains with minimal LLM resource usage. The data shows that SLM (Llama-3.2-3B-Instruct) accuracy as a function of LLM (Llama-3.3-70B-Versatile) hint size improves substantially with small hints representing just 10-30% of the full LLM response, with diminishing returns beyond 60%.

This motivates a shepherding approach: requesting hints rather than full LLM responses. The strategy treats the expensive model as a consultant rather than an executor—pay for guidance, not complete answers.

Infrastructure-Level Optimization Techniques

Model selection is just one lever. Infrastructure optimization addresses the hardware-imposed bottlenecks that limit performance and inflate costs.

KV Cache Management

The key-value cache is a foundational optimization in Transformer-based models. But it’s also a major memory consumer.

During autoregressive generation, the model computes attention over all previous tokens at each step. Without caching, this requires recomputing representations for the entire sequence repeatedly. The KV cache stores these computations, trading memory for speed.

Here’s the problem: cache size grows linearly with sequence length and batch size. For long-context applications, cache memory can exceed the model weights themselves. Strategies to manage this include:

- Quantizing cached values to lower precision (8-bit or 4-bit)

- Implementing eviction policies that discard less-relevant tokens

- Using sliding window attention for bounded memory growth

- Compressing cache entries through learned compression tokens

Research on sentence-anchored gist compression demonstrates that pre-trained LLMs can be fine-tuned to compress context using learned tokens, reducing memory and computational demands for long sequences. Parameter-efficient fine-tuning methods allow compact models to handle reasoning tasks without full KV cache expansion.

Batching and Throughput Optimization

Inference serving systems must balance latency against throughput. Larger batch sizes improve hardware utilization but increase wait times for individual requests.

The compute phase during prefill (processing input tokens) benefits enormously from batching—GPU utilization increases linearly with batch size up to hardware limits. But the decode phase is bandwidth-bound. Adding more requests to a batch doesn’t proportionally increase throughput because memory bandwidth becomes the bottleneck.

Effective strategies separate prefill and decode into different batches, allowing independent optimization of each phase. Continuous batching techniques add new requests to in-flight batches dynamically rather than waiting for the entire batch to complete.

Model Quantization

Quantization reduces model precision from 32-bit or 16-bit floating point to 8-bit or 4-bit integers. This cuts memory requirements and bandwidth consumption proportionally.

GPTQ quantization is mathematically equivalent to Babai’s nearest plane algorithm according to research from IST Austria. This geometric interpretation provides error bounds for large language model quantization, enabling 4-bit precision with carefully calibrated parameters to minimize accuracy degradation.

DistilBERT demonstrates the power of model distillation combined with quantization. Created by the Hugging Face team, it’s 40% smaller and faster than BERT base—approximately 66 million parameters versus 110 million—while retaining 97% of the performance on downstream tasks.

| Technique | Memory Reduction | Speed Improvement | Accuracy Impact |

|---|---|---|---|

| 8-bit Quantization | 50% | 1.5-2x | <1% loss |

| 4-bit Quantization | 75% | 2-3x | 1-3% loss |

| Model Distillation | 40-60% | 2-3x | 2-5% loss |

| KV Cache Quantization | 30-50% (cache only) | 1.3-1.8x | <1% loss |

Semantic Caching for Cost Reduction

Caching seems obvious—store results, reuse them. But LLM applications present unique challenges.

Exact string matching fails because users phrase identical questions differently. “What’s the capital of France?” and “Tell me the capital city of France” should hit the same cache entry.

Semantic caching solves this by embedding queries into vector space and matching based on similarity rather than exact strings. When a new query arrives, the system computes its embedding and searches for nearby cached entries. If a match exists above a threshold, return the cached response. Otherwise, call the model and cache the result.

For high-volume applications, semantic caching typically achieves 40-60% hit rates after the first week of operation. At GPT-5 pricing, that represents substantial monthly savings for a single feature.

Implementation requires careful tuning of the similarity threshold. Set it too high and cache hits drop dramatically. Too low and the system returns stale or irrelevant responses, degrading user experience.

Prompt Engineering and Token Management

Input tokens cost money. Output tokens cost more—often 3-5x the input rate.

Prompt optimization focuses on achieving the same results with fewer tokens. Techniques include:

- Removing unnecessary context or examples

- Using more concise instruction phrasing

- Leveraging system messages efficiently

- Implementing few-shot learning with minimal examples

- Constraining output length through instructions

The challenge is balancing brevity against clarity. Overly terse prompts often produce lower-quality outputs, requiring retries that cost more than the original savings.

Testing shows that systematic prompt compression—removing redundant tokens while preserving semantic meaning—can reduce input costs by 20-40% without accuracy loss. But this requires evaluation infrastructure to validate that compressed prompts maintain output quality.

Building a Cost Monitoring System

Can’t optimize what isn’t measured.

Production LLM systems need instrumentation that tracks costs at multiple granularities: per user, per feature, per model, per request type. This visibility enables data-driven optimization decisions.

Most teams start with aggregate monthly bills from providers. That’s insufficient. The instrumentation should capture:

- Token counts (input, output, cached) per request

- Model used and routing decisions

- Latency and throughput metrics

- Cache hit rates and effectiveness

- Error rates and retry costs

- Cost attribution to features or users

Hierarchical budget controls allow teams to set spending limits at various levels—organization-wide, per team, per feature, or per user. When a budget threshold is approached, the system can automatically route to cheaper models or implement rate limiting.

According to MIT research on AI scaling laws, it’s critical to decide on a compute budget and target model accuracy upfront. The research found that 4% average relative error (ARE) is approximately the best achievable accuracy due to random seed noise, but up to 20% ARE remains useful for decision-making.

The Provider Economics Problem

Managed LLM services like Azure OpenAI introduce cost management challenges that differ fundamentally from traditional cloud models. The pricing structure depends on input tokens, output tokens, cached tokens, provisioned throughput units (PTUs), and deployment configurations.

Azure OpenAI specifically obscures true cost drivers through its architecture. Organizations provision capacity in PTUs without clear visibility into actual token consumption or model utilization. This creates accountability gaps—engineering teams can’t determine which features drive costs or whether optimizations actually work.

Cloud cost management platforms built for traditional infrastructure don’t handle AI workloads effectively. They track VM hours and storage bytes but miss the token-level granularity needed for LLM optimization.

FinOps for AI requires use case economics. Teams must track unit costs—cost per conversation, per document summarized, per code completion—rather than just aggregate spending. This shifts the mindset from infrastructure cost management to manufacturing efficiency.

Real-World Implementation Framework

Optimization isn’t a one-time project. It’s an ongoing practice that evolves with usage patterns and model availability.

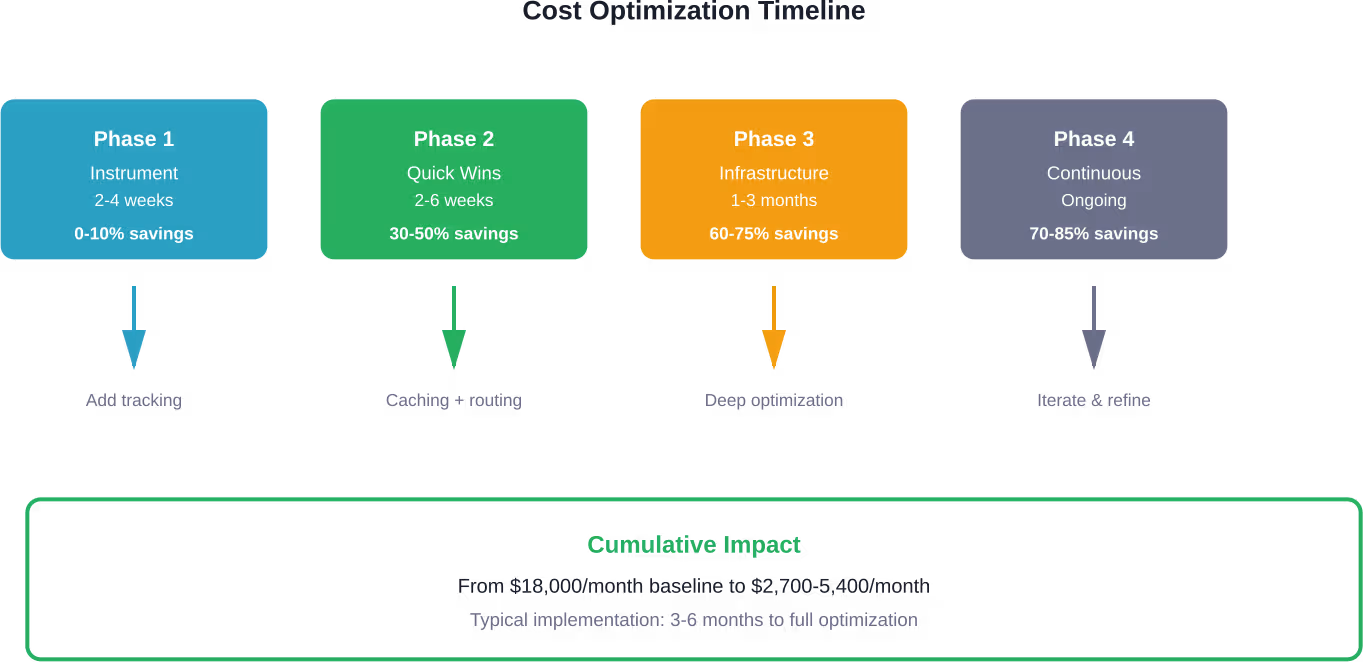

Phase 1: Baseline and Instrument

Start with comprehensive instrumentation. Deploy tracking that captures token usage, model selection, latency, and costs at request granularity. Establish baseline metrics: current costs, distribution across use cases, and performance benchmarks.

This phase typically takes 2-4 weeks and requires minimal code changes—mostly adding logging and metrics collection.

Phase 2: Quick Wins

Implement low-hanging optimizations:

- Deploy semantic caching for high-frequency queries

- Route simple queries to cheaper models

- Compress prompts by removing redundant context

- Set maximum output token limits

These changes often deliver 30-50% cost reductions within weeks without accuracy loss.

Phase 3: Infrastructure Optimization

Now tackle deeper optimizations:

- Implement dynamic routing with complexity analysis

- Deploy quantized models for latency-tolerant workloads

- Optimize KV cache management

- Implement continuous batching for throughput improvement

This phase requires more engineering effort—typically 1-3 months—but unlocks an additional 20-40% cost reduction.

Phase 4: Continuous Improvement

Establish feedback loops. Monitor which queries get routed where, which cache entries get hit frequently, and where latency or quality issues arise. Use this data to refine routing logic, update cache policies, and retune quantization parameters.

Testing new models becomes routine. When providers release improved options, the instrumentation allows rapid A/B testing to validate cost-quality tradeoffs before full rollout.

Common Pitfalls to Avoid

Cost optimization can backfire when teams optimize the wrong metrics or sacrifice critical capabilities:

- Latency degradation: Aggressive caching or routing to slower models can increase response times beyond user tolerance. For interactive applications, latency matters as much as cost. Users abandon experiences with 3-5 second delays regardless of accuracy.

- Quality erosion: Routing too aggressively to small models degrades output quality. Testing might show acceptable accuracy on benchmarks, but production edge cases expose weaknesses. Implement quality monitoring alongside cost tracking.

- Over-engineering caching: Semantic caching adds infrastructure complexity. For low-traffic features, the engineering cost of implementing and maintaining caching exceeds the savings. Focus caching efforts on high-volume endpoints first.

- Ignoring cold start costs: Model loading and initialization can impact performance and cost efficiency. Scale-to-zero policies require careful consideration of startup latency against idle costs. Balance idle costs against startup latency.

- Vendor lock-in: Optimizing deeply for one provider’s specific APIs or pricing structure creates migration barriers. When possible, abstract provider-specific details behind interfaces that allow switching.

Reduce LLM Deployment Costs Where They Actually Start

Most LLM deployment costs are not driven by the model alone – they come from how the system is designed, integrated, and scaled. AI Superior works across the full deployment lifecycle, from model selection and fine-tuning to infrastructure setup and optimization. Their approach focuses on building AI systems that match the actual workload, whether that means using custom models, optimizing existing ones, or balancing API usage with in-house deployment. This reduces unnecessary inference, avoids overprovisioned infrastructure, and keeps performance predictable as usage grows.

Cost problems in deployment usually come from decisions made before launch – model size, data pipelines, and how often systems are called. Adjusting those has a bigger impact than switching tools later. If you want your LLM deployment to stay efficient as it scales, contact AI Superior and align your setup with how it will actually be used in production.

Looking Forward: Cost Trajectories

Some believe LLM costs will fall toward zero, making optimization unnecessary. History suggests otherwise.

Compute costs have declined consistently for decades, but demand scales faster. More capable models enable new use cases that consume additional compute. Context windows expand from 2,048 to 128,000+ tokens, multiplying memory requirements. Multimodal models process images and video alongside text.

The organizations that treat LLM costs as strategic—building optimization capabilities early—create competitive advantages that compound over time. Cost efficiency enables sustainable scaling, allowing broader deployment and experimentation without budget constraints limiting product development.

Infrastructure optimization, model selection, and token management aren’t one-time projects. They’re core competencies for AI-native companies. The teams building these capabilities now will operate with structural cost advantages that competitors struggle to match.

Frequently Asked Questions

What’s the fastest way to reduce LLM costs by 30% or more?

Implement semantic caching for high-frequency queries and route simple requests to cheaper models. These two changes typically deliver 30-50% cost reduction within 4-6 weeks with minimal engineering effort. Start by instrumenting to identify which endpoints have high request volume and low query diversity—these are ideal caching candidates.

Should I use GPT-4 or Claude for cost optimization?

Neither exclusively. GPT-5.4 charges $2.50 per million input tokens, while Claude 4.5 Sonnet charges $3 per million input tokens. But cost per token isn’t the only factor—output quality, latency, and context length requirements matter. Implement routing that uses each model for workloads where it provides the best cost-quality-latency tradeoff. Testing different models on production data is the only way to determine optimal allocation.

Does quantization hurt model accuracy significantly?

Not when done properly. Research shows that 8-bit quantization typically causes less than 1% accuracy loss while cutting memory requirements by 50%. Even 4-bit quantization with careful calibration (like GPTQ) loses only 1-3% accuracy while reducing memory by 75%. The key is testing quantized models on representative evaluation datasets before production deployment to validate acceptable performance.

How much can caching actually save in production?

Semantic caching hit rates typically reach 40-60% after the first week of operation for most applications. For a support chatbot processing 500,000 monthly requests at GPT-4 pricing, that translates to $7,200-10,800 monthly savings. But effectiveness varies by use case—FAQ-style applications see higher hit rates while creative or highly personalized applications benefit less from caching.

What’s the ROI on building custom optimization infrastructure?

For applications spending more than $5,000 monthly on LLM costs, custom optimization infrastructure typically pays for itself within 3-6 months. The engineering investment ranges from 2-4 developer-months for comprehensive implementation including instrumentation, caching, and routing. Organizations spending less should focus on simpler optimizations like prompt compression and provider selection before building custom infrastructure.

How do I balance cost optimization with response latency?

Measure both metrics together and define acceptable tradeoffs. Some optimizations like caching reduce both cost and latency. Others like routing to smaller models may increase latency slightly while cutting costs. Define latency SLAs for each use case—interactive chat might require sub-second responses while batch document processing tolerates minutes. Optimize within constraints rather than treating cost or latency in isolation.

Can I run LLMs on-premises to reduce costs?

Maybe. On-premises deployment eliminates API costs but requires GPU infrastructure, engineering expertise for serving optimization, and operational overhead. This becomes cost-effective at scale—roughly 500,000+ daily requests—where the fixed infrastructure costs are amortized across high volume. Below that threshold, managed APIs are typically cheaper when factoring in total cost of ownership including engineering time.

Conclusion

LLM cost optimization isn’t optional for AI-native products. The economics are fundamentally different from traditional software—variable costs scale with usage, creating manufacturing-like unit economics that demand continuous attention.

But the opportunity is substantial. Organizations implementing comprehensive optimization—combining smart model selection, infrastructure tuning, semantic caching, and token management—achieve 60-85% cost reductions without sacrificing quality or user experience.

Start with instrumentation. Teams can’t optimize what they don’t measure. Build visibility into token usage, model selection, and cost attribution at request granularity.

Then implement quick wins: caching high-frequency queries and routing simple requests to efficient models. These deliver immediate impact while building organizational capability for deeper optimization.

The competitive advantage goes to teams that treat cost optimization as an ongoing discipline rather than a one-time project. Build the infrastructure, establish the practices, and iterate continuously as usage patterns evolve and new models emerge.

The future of AI deployment belongs to organizations that solve both the technical and economic challenges. Start optimizing today.