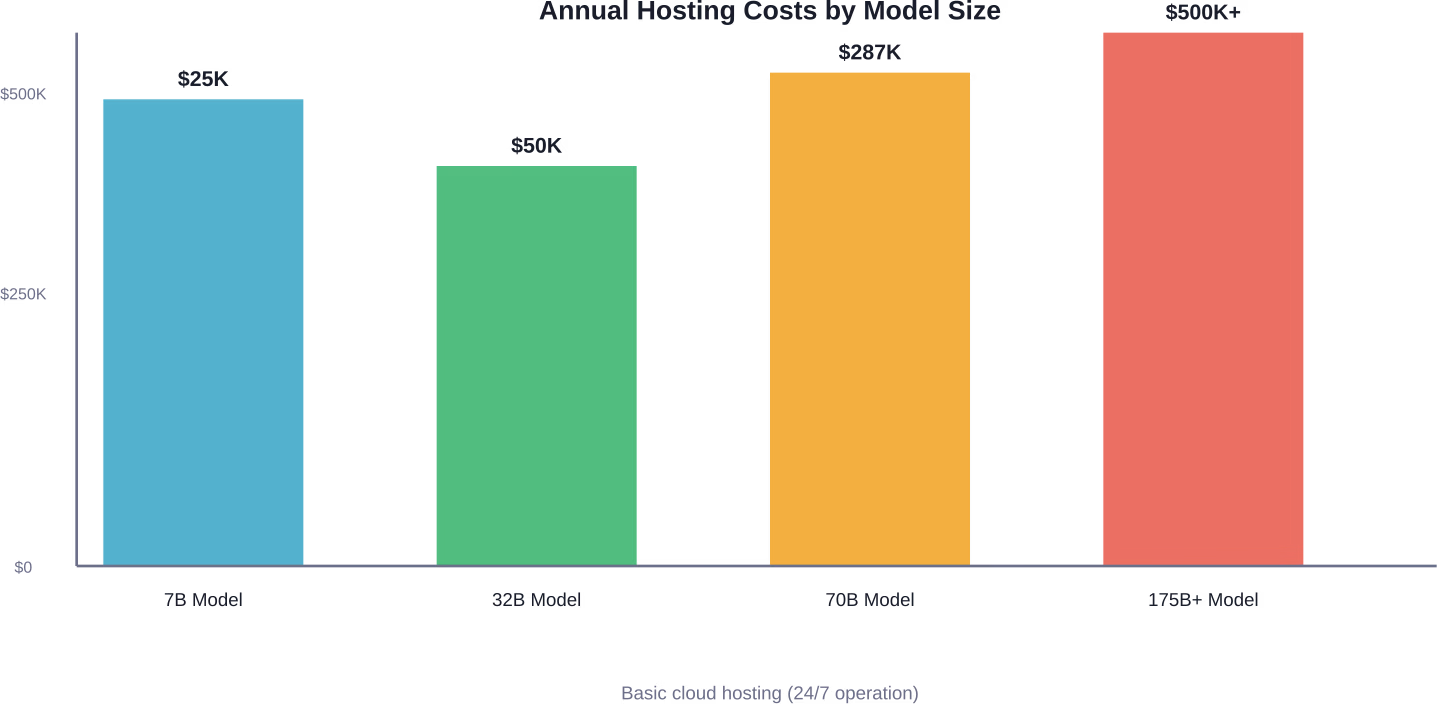

Quick Summary: Building a custom LLM costs between $125K–$12M annually depending on model size, infrastructure choices, and deployment scale. Smaller models (32B parameters) on cloud instances run around $50K/year, while enterprise deployments of 70B+ models can exceed $287K annually just for hosting. Training from scratch adds millions in GPU costs, data preparation, and engineering resources—making API services often more economical for most use cases.

The phrase “open-source LLMs are free” ranks among the most dangerous misconceptions in tech right now. Free to download? Sure. Free to run? Not even close.

Organizations evaluating custom language models face a complex cost structure that extends far beyond licensing fees. The expenses show up in infrastructure, engineering time, maintenance overhead, and strategic opportunity costs that aren’t immediately obvious.

This breakdown examines actual deployment costs based on real-world infrastructure requirements, cloud pricing data, and enterprise implementations. The numbers come from production deployments, not theoretical calculations.

The Infrastructure Reality: What Hosting Actually Costs

Hardware represents the most visible expense when deploying custom LLMs. The costs scale dramatically with model size, and the math gets uncomfortable quickly.

According to community discussions analyzing real deployment scenarios, a Qwen-2.5 32B or QwQ 32B model requires an AWS g5.12xlarge instance equipped with 4x A10G GPUs. Running this configuration 24/7 costs approximately $50,000 per year. That’s for a moderately-sized model handling basic production workloads.

Step up to Llama-3 70B, and the infrastructure requirements jump to a p4d.24xlarge instance with 8x A100 GPUs. The annual cost? Around $287,000 for continuous operation.

But here’s the thing—these figures assume perfect utilization. Real-world deployments need redundancy, load balancing, and failover capacity. A production-grade deployment with proper redundancy and monitoring typically consumes four to five times the base instance cost. That $15,000 monthly estimate balloons before any fine-tuning or scaling happens.

Breaking Down GPU Economics

Research from arXiv analyzing on-premise LLM deployment economics reveals baseline GPU costs that inform these calculations. An A800 80G card, under common assumptions, carries a baseline hourly cost of approximately $0.79 per hour. This generally falls within a $0.51–$0.99 per hour range depending on procurement and infrastructure specifics.

Cloud platforms add markup on top of raw compute costs. The convenience of not managing physical hardware comes with a premium that compounds over time.

Memory and Storage Requirements

LLMs demand substantial memory beyond GPU VRAM. A 70B parameter model typically requires approximately 140GB just to load the weights in FP16 precision. Add the KV cache for context windows, activation memory during inference, and overhead for the serving framework—suddenly that theoretical requirement balloons to 200GB+ of system memory.

Storage costs accumulate more quietly. Model checkpoints, training data, logs, and versioning artifacts add up. A comprehensive training run might generate terabytes of artifacts that need retention for reproducibility and compliance.

Training Costs: The Million-Dollar Question

Hosting a pre-trained model is expensive. Training one from scratch? That’s where costs enter entirely different territory.

Research published on arXiv examining pre-training LLMs on a budget used two cluster nodes, each equipped with substantial GPU resources, for their training experiments. Even these “budget” approaches required coordinated multi-GPU setups that most organizations can’t assemble casually.

The computational intensity of pre-training creates a cost structure dominated by GPU-hours. A full training run for a competitive model can consume thousands of GPU-hours on high-end accelerators.

What Pre-Training Actually Involves

Pre-training an LLM from scratch means processing massive text corpora—often hundreds of billions to trillions of tokens. The model learns language patterns, factual associations, and reasoning capabilities through repeated exposure to this data.

This process requires:

- Data acquisition and cleaning (often underestimated in complexity)

- Distributed training infrastructure with high-speed interconnects

- Hyperparameter tuning across multiple trial runs

- Continuous monitoring and intervention when training destabilizes

- Checkpoint management and evaluation pipelines

Each of these components carries both direct costs and engineering time requirements.

The Economics of Compute

According to arXiv research on inference economics, the marginal cost structure of LLM operations follows a compute-driven production model. Inference operates as an “intelligent production activity” where computational resources directly translate to output capacity.

Training amplifies this relationship. Where inference costs scale with usage, training costs are front-loaded and largely fixed. Whether the model succeeds or fails, the GPU-hours are spent.

Cloud providers offer various GPU options with different price-performance characteristics. Generally speaking, the newest generation accelerators offer better performance per dollar, but availability constraints and premium pricing can negate theoretical advantages.

The Hidden Costs Nobody Warns You About

Infrastructure and training represent obvious line items. The costs that blindside organizations tend to be softer but equally impactful.

Engineering and Talent Expenses

Deploying and maintaining custom LLMs requires specialized expertise. Machine learning engineers with LLM experience command premium salaries—often $150K–$300K+ annually for senior talent.

A minimal internal deployment typically needs:

- At least one ML engineer for model operations and fine-tuning

- DevOps support for infrastructure and monitoring

- Backend engineers for integration work

- Product/domain experts for evaluation and guidance

According to an analysis published on LinkedIn examining open-source LLM costs, even minimal internal deployments cost $125K–$190K per year when accounting for engineering resources. Moderate-scale customer-facing features jump to $500K–$820K annually. Core product engines at enterprise scale can exceed several million dollars.

These figures assume the team already has relevant expertise. Building that capability from scratch adds recruiting, onboarding, and learning curve costs.

Maintenance and Operations

Models don’t maintain themselves. Production deployments require:

- Monitoring for performance degradation and drift

- Security patching and dependency updates

- Incident response when things break at 3 AM

- Capacity planning and scaling adjustments

- Cost optimization as usage patterns evolve

These operational demands persist indefinitely. The monthly cloud bill might stabilize, but the human attention required doesn’t.

Data Preparation and Quality

Quality training data doesn’t materialize spontaneously. Organizations typically need to:

- License or acquire appropriate datasets

- Clean and filter content for quality and appropriateness

- Handle data privacy and compliance requirements

- Create evaluation datasets for measuring performance

- Continuously update data as domains evolve

Data work is labor-intensive and often requires domain expertise. The costs scale with data volume and quality requirements.

Deployment Scale Determines Total Costs

The difference between running a model for internal tools versus powering customer-facing features creates order-of-magnitude cost variations.

Internal Use Cases

Deploying an LLM for internal productivity—document analysis, code assistance, internal search—represents the lower end of the cost spectrum. These workloads typically:

- Serve limited concurrent users (10-100)

- Tolerate higher latency

- Accept occasional downtime or degradation

- Need less rigorous monitoring and support

Even here, costs run $125K–$190K annually when factoring infrastructure, engineering, and maintenance overhead.

Customer-Facing Features

Once an LLM powers features customers directly interact with, requirements tighten considerably:

- Latency expectations drop to subsecond response times

- Availability must approach 99.9% or higher

- Load varies unpredictably requiring headroom and scaling

- Failures directly impact revenue and reputation

These constraints push costs toward the $500K–$820K range for moderate implementations. High-traffic applications easily exceed seven figures.

Core Product Engines

When a custom LLM becomes the central differentiation for a product, organizations essentially commit to maintaining AI infrastructure as a core competency. This means:

- Dedicated ML/AI teams

- Continuous model improvement and retraining

- Sophisticated monitoring and experimentation frameworks

- Multi-region deployments for performance and reliability

- Significant executive attention and strategic investment

According to LinkedIn analysis, these implementations run $6M–$12M annually at enterprise scale. And that’s before accounting for the opportunity cost of engineering resources not working on other priorities.

| Deployment Tier | Typical Use Case | Annual Cost Range | Key Constraints |

|---|---|---|---|

| Internal Tools | Document search, code assist, analysis | $125K–$190K | Limited users, flexible latency |

| Customer-Facing | Chatbots, recommendations, content generation | $500K–$820K | High availability, low latency |

| Core Product | Primary product differentiation | $6M–$12M | Continuous improvement, multi-region |

Fine-Tuning: A More Accessible Middle Ground

Most organizations don’t need to pre-train models from scratch. Fine-tuning existing open-source models offers a pragmatic alternative that dramatically reduces costs while still enabling customization.

What Fine-Tuning Costs

Research on efficient LLM improvement strategies published on arXiv documented fine-tuning experiments using techniques like LoRA (Low-Rank Adaptation) on modest hardware. The base model quantized at 8 bits with LoRA training took approximately 7 hours on a single NVIDIA T4 GPU with 16GB VRAM. This was executed on Google Colab with 12GB RAM.

A T4 GPU on cloud providers typically costs $0.35–$0.50 per hour. A 7-hour fine-tuning run therefore costs roughly $2.50–$3.50 in compute. Even accounting for multiple training runs, hyperparameter search, and evaluation, fine-tuning costs generally stay under $500–$1000 for smaller models.

The engineering time represents the larger investment. Setting up training pipelines, preparing datasets, and evaluating results requires expertise, but at a fraction of the effort needed for pre-training.

When Fine-Tuning Makes Sense

Fine-tuning works well when:

- Domain-specific terminology or style matters more than general capability

- Proprietary data can improve performance on specific tasks

- Customization provides competitive advantage

- Smaller models with fine-tuning can match larger general models

According to a Hugging Face blog post (published March 20, 2026) on building domain-specific embedding models, organizations using synthetic training datasets and established recipes saw over 10% improvement in recall and ranking metrics. These gains came from targeted fine-tuning, not massive pre-training investments.

Parameter-Efficient Techniques

Modern fine-tuning approaches like LoRA, QLoRA, and adapter methods reduce resource requirements by updating only a small fraction of model parameters. This means:

- Less memory needed during training

- Faster iteration cycles

- Ability to maintain multiple task-specific adaptations

- Lower storage costs for model variants

These techniques make customization accessible to organizations without massive ML budgets.

Commercial API Services: The Alternative

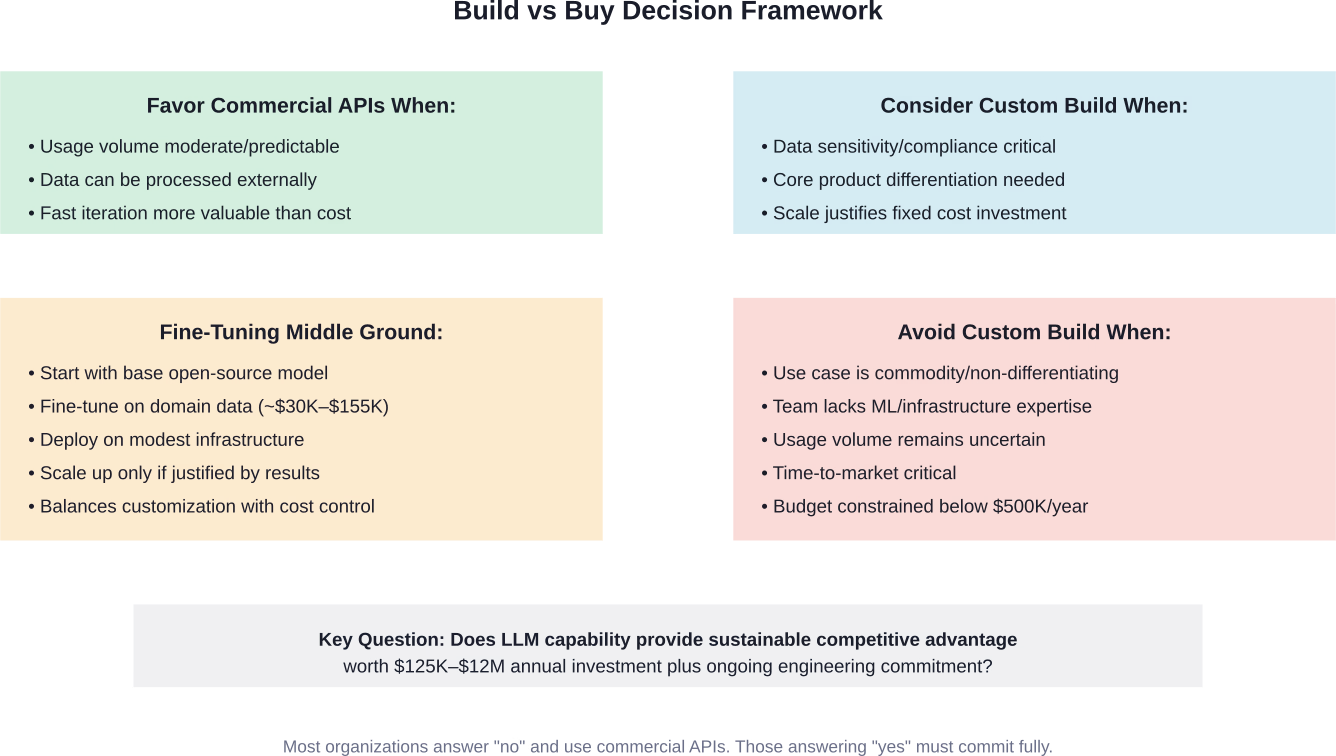

Before committing to custom infrastructure, organizations should seriously evaluate commercial API services. The economics often favor APIs for all but the most specific use cases.

How API Pricing Works

Commercial LLM providers typically charge per token processed. Rates vary by model capability:

- Smaller, faster models: $0.10–$0.50 per million tokens

- Mid-tier models: $1–$5 per million tokens

- Advanced reasoning models: $10–$60 per million tokens

Context and output tokens may be priced differently, with output generation typically costing more than input processing.

When APIs Make More Sense

Commercial APIs typically win on cost when:

- Usage is moderate and predictable

- Latency requirements allow for network calls

- Data sensitivity permits external processing

- Rapid iteration and experimentation matter

- Engineering resources are constrained

Research from arXiv on the cost-benefit analysis of on-premise LLM deployment examines the build-versus-buy decision organizations face. Cloud services offer convenience and avoid upfront capital expenditure, but ongoing subscription costs compound over time.

The breakeven point depends on usage volume and organizational priorities. For many enterprises, APIs remain more economical even at substantial scale.

Hybrid Approaches

Some organizations deploy hybrid architectures:

- Use APIs for peak traffic and overflow capacity

- Run custom models for high-volume, latency-sensitive operations

- Keep sensitive data on-premise while using APIs for general tasks

- Prototype with APIs before committing to custom infrastructure

This approach balances cost, flexibility, and capability while providing fallback options.

Real-World Case Studies and Reported Costs

Understanding theoretical costs helps, but actual deployment experiences reveal where estimates meet reality.

Moderate Scale Deployment

According to community discussions, one team’s experience deploying private LLMs showed initial costs seemed manageable but escalated quickly once production requirements entered the picture.

The team discovered their production-grade deployment required redundancy, caching, load balancing, and comprehensive monitoring. What started as a few thousand dollars monthly rapidly approached $15,000—and that was before any fine-tuning or significant scaling.

Enterprise Implementation

According to OpenAI’s December 17, 2025 report on enterprise AI adoption, organizations deploying AI at scale saw dramatic usage increases. According to OpenAI’s December 2025 enterprise AI report, ChatGPT message volume grew 8x year-over-year, while API reasoning token consumption per organization increased 320x.

These usage patterns indicate substantial ongoing costs whether using custom infrastructure or commercial services. The organizations experiencing “measurable productivity and business impact” clearly found the investment worthwhile—but the expense remains significant.

Academic and Research Context

Research institutions face similar cost pressures with additional constraints. A Carnegie Mellon team published cost-benefit analysis in 2026 examining on-premise deployment economics. Their findings emphasized that performance parity with commercial models requires careful model selection, typically targeting benchmark scores within 20% of leading commercial offerings.

This performance threshold reflects enterprise practice where modest performance gaps are acceptable if other factors—data privacy, cost predictability, customization—provide offsetting benefits.

Optimization Strategies to Control Costs

Organizations committed to custom LLM deployment can employ several strategies to manage expenses.

Right-Sizing Model Selection

The largest model isn’t always necessary. Careful analysis of task requirements often reveals that smaller models with fine-tuning match or exceed larger general models on specific workloads.

Testing multiple model sizes against actual use cases helps identify the smallest effective model. This directly impacts infrastructure requirements and ongoing costs.

Quantization and Compression

Model quantization reduces precision from 16-bit or 32-bit floats to 8-bit or even 4-bit integers. This dramatically reduces memory requirements and increases inference throughput with minimal accuracy loss for many tasks.

Research documented on arXiv showed LoRA training applied to models pre-quantized at 4 bits achieved results comparable to higher precision with substantially lower resource requirements.

Efficient Infrastructure Management

According to arXiv research on LLM training efficiency, optimizer choice and hyperparameter tuning significantly impact pre-training times and final model performance. Studies comparing AdamW, Lion, and other optimizers found meaningful differences in convergence speed and compute efficiency.

Similarly, ensuring GPUs stay actively utilized rather than sitting idle prevents paying for unused capacity. Batch processing requests, implementing request queuing, and auto-scaling infrastructure based on demand all improve cost efficiency.

Caching and Request Optimization

Many LLM queries repeat or overlap substantially. Implementing semantic caching can serve identical or similar requests from cache rather than recomputing responses. This reduces inference costs proportionally to cache hit rates.

Request batching also improves GPU utilization by processing multiple requests simultaneously, amortizing overhead across batch members.

Build a Custom LLM Without Letting Costs Run Uncontrolled

Custom LLM projects rarely become expensive overnight – costs build up through decisions around data scope, training approach, and how the model is expected to perform in real use. AI Superior supports custom LLM development from the ground up, including dataset preparation, model training, fine-tuning, and deployment. Instead of defaulting to larger models or longer training cycles, the focus is on defining a setup that fits the task and can be maintained over time. That often means narrowing the scope, structuring data more carefully, and choosing training methods that do not overuse compute.

Projects tend to go over budget when the model is built without clear limits or when requirements keep expanding during development. Keeping the system aligned with actual use cases makes both the build and future operation more predictable. If you want a custom LLM that is practical to build and run, contact AI Superior and align the project before costs scale.

The Strategic Calculation: When Custom Makes Sense

Given these costs, when does building custom LLM infrastructure actually make strategic sense?

Data Sensitivity and Compliance

Organizations handling sensitive data—healthcare, finance, government—may face regulatory requirements or risk tolerance that precludes external API usage. On-premise deployment becomes mandatory rather than optional.

Research published on arXiv provided a decision framework specifically for government LLM adoption. The framework emphasized that strategic and economic value requires sufficient usage volume. According to the Menlo Ventures 2025 State of Generative AI report cited in the research, market leaders Anthropic, OpenAI, and Google collectively saw massive adoption—but that doesn’t mean every organization needs custom infrastructure.

Differentiation and Competitive Advantage

If LLM capabilities provide core product differentiation, custom models might justify the investment. This applies when:

- Proprietary data creates an unmatched training corpus

- Specialized domain knowledge isn’t available in general models

- Model behavior and output style define brand identity

- Competitive pressure requires capability others can’t easily replicate

Commodity use cases rarely justify custom deployment. Differentiation matters.

Scale and Usage Patterns

Extremely high usage volumes can shift economics toward custom infrastructure despite high fixed costs. The calculation depends on comparing cumulative API costs against the all-in cost of ownership.

But be realistic about usage projections. Overestimating adoption and underestimating API efficiency leads to costly infrastructure sitting underutilized.

Long-Term Strategic Investment

Building LLM capabilities represents a long-term strategic investment in AI as a core competency. This goes beyond immediate cost calculations to questions of organizational capabilities and strategic positioning.

Organizations choosing this path commit to continuous investment in talent, infrastructure, and improvement. The costs extend indefinitely, but so does the strategic optionality.

Emerging Cost Trends and Future Outlook

The economics of custom LLMs continue evolving rapidly. Several trends affect future cost calculations.

Hardware Efficiency Improvements

New GPU architectures consistently improve performance per dollar. According to RISC-V market analysis published in 2025, the global AI processor market was valued at $261.4 billion in 2025 and is expected to grow at an 8.1% CAGR to $385.4 billion by 2030.

This growth brings competition and architectural innovation. RISC-V’s emergence as an AI-native architecture could disrupt current GPU dominance, potentially lowering costs through increased competition and specialization.

Algorithm and Architecture Advances

Research continues producing more efficient model architectures and training techniques. Improvements in attention mechanisms, mixture-of-experts approaches, and sparse models reduce computational requirements for equivalent performance.

These advances benefit both training and inference costs, though they require expertise to implement effectively.

Regulatory and Compliance Pressures

Increasing regulatory attention on AI—particularly around data privacy, bias, and transparency—may shift economics toward on-premise deployments for regulated industries. Compliance costs could make custom infrastructure relatively more attractive despite higher absolute costs.

Market Consolidation

According to OpenAI’s December 2025 enterprise AI report, ChatGPT message volume grew 8x year-over-year with API usage increasing 320x per organization. This concentration suggests potential market consolidation around a few providers.

Dependence on consolidated providers creates strategic risk that could justify custom infrastructure as a hedge against vendor lock-in or pricing pressure.

Frequently Asked Questions

How much does it cost to train an LLM from scratch?

Training an LLM from scratch typically costs between $500,000 to several million dollars depending on model size and desired performance. This includes GPU compute ($500K–$5M+), engineering resources ($300K–$1M+), and data preparation ($100K–$500K). Smaller research models might be trained for less using budget techniques, but competitive performance at scale requires substantial investment. Fine-tuning existing models reduces this to $30K–$155K for most use cases.

What’s cheaper: hosting a custom LLM or using API services?

API services are typically cheaper for most organizations unless usage volume is extremely high and sustained. A 32B parameter model hosted 24/7 costs around $50K annually just for infrastructure, while a 70B model runs approximately $287K per year. API pricing at $1–$5 per million tokens means reaching breakeven requires processing billions of tokens monthly. Additionally, custom deployment requires engineering resources ($125K–$190K minimum) that API services eliminate.

Can small companies afford to build custom LLMs?

Small companies can fine-tune existing open-source models for $30K–$155K, which is feasible for well-funded startups. However, pre-training from scratch or operating large-scale production deployments ($500K–$12M annually) typically exceeds small company budgets. Most small organizations achieve better ROI using commercial APIs or fine-tuned smaller models deployed on modest infrastructure. The engineering expertise required also challenges smaller teams.

What are the hidden costs of running private LLMs?

Hidden costs include engineering salaries ($150K–$300K+ per specialized role), maintenance and operations overhead, monitoring infrastructure, data preparation and cleaning, security and compliance work, and opportunity cost of resources not working on core business problems. Production deployments also require redundancy and load balancing that multiply baseline infrastructure costs by 4-5x. These soft costs often exceed the visible cloud bills.

How much does fine-tuning an existing model cost?

Fine-tuning costs $500–$5,000 in compute for most projects, with engineering time adding $20K–$100K depending on complexity. Research shows a 7-hour fine-tuning run on a single T4 GPU costs roughly $2.50–$3.50 in cloud compute. Parameter-efficient techniques like LoRA reduce requirements further. Total project costs including data preparation typically range from $30K–$155K, representing approximately 95% cost reduction compared to pre-training from scratch.

When does building a custom LLM make business sense?

Building custom LLMs makes sense when data sensitivity requires on-premise deployment, when LLM capabilities provide core product differentiation worth protecting, when usage scale exceeds API cost breakeven points, or when developing AI as a long-term strategic competency. Organizations handling sensitive regulated data, processing billions of tokens monthly, or building LLM-centric products are the most likely candidates. Commodity use cases rarely justify the investment.

What model size should organizations choose for custom deployment?

Organizations should choose the smallest model that meets performance requirements after fine-tuning. Generally speaking, 7B–13B parameter models handle many production workloads effectively with modest infrastructure. 32B models offer stronger capability but require substantial GPU resources. 70B+ models need enterprise-grade infrastructure and should only be deployed when smaller models demonstrably fail to meet requirements. Testing multiple sizes against actual use cases identifies the right balance of capability and cost.

Making the Decision: A Practical Framework

The choice between building custom LLM infrastructure and using commercial services ultimately depends on specific organizational circumstances. Here’s how to approach the decision systematically.

Start by honestly assessing usage volume. Calculate expected token throughput across all use cases. Compare the cumulative API costs against the all-in cost of custom infrastructure including engineering, maintenance, and opportunity costs. Be conservative with usage projections—overestimation leads to expensive underutilized infrastructure.

Evaluate data sensitivity requirements. If regulatory compliance or business risk truly prevents external processing, custom infrastructure becomes necessary regardless of cost comparisons. But verify this constraint is real rather than assumed.

Consider strategic differentiation. Does LLM capability provide sustainable competitive advantage or is it commodity functionality? Commodity use cases favor APIs. True differentiation might justify custom investment.

Assess organizational capability realistically. Building and operating LLM infrastructure requires specialized expertise. Organizations lacking ML/AI talent face steep learning curves and higher costs.

Start small regardless of direction. Use commercial APIs or fine-tuned models on modest infrastructure before committing to large-scale custom deployment. Prove value and usage patterns with minimal investment, then scale when justified.

Most organizations discover that commercial APIs or fine-tuned smaller models meet their needs at lower cost and risk than custom large-scale deployments. The exceptional cases—highly regulated industries, massive scale, core differentiation—justify custom infrastructure, but they’re the minority.

The costs are real and substantial. Organizations committing to custom LLM infrastructure must approach it as a long-term strategic investment with ongoing attention and resources. Half-measures lead to expensive failures.

Ready to explore LLM deployment for specific use cases? Evaluate options systematically, validate assumptions with small-scale tests, and scale investments as usage and value become clear. The technology is powerful, but success requires matching deployment approaches to actual organizational needs and capabilities.