Quick Summary: Google LLM API costs vary significantly across Vertex AI models. As of March 2026, Gemini 3.1 Flash-Lite starts at $0.25 per 1M input tokens (for ≤200K tokens) and $0.25 per 1M for >200K tokens, while Gemini 3.1 Pro ranges from $2 to $12 per 1M tokens depending on context size. Pricing depends on model type, token volume, caching, and grounding features, with batch processing offering 50% discounts.

Pricing for Google’s LLM APIs has become a critical factor for developers and businesses building AI applications. With the expansion of Vertex AI’s Gemini model family through early 2026, understanding the cost structure is no longer optional.

The challenge? Google’s pricing model operates on multiple variables—token count, context window size, caching status, and whether requests use batch or real-time processing. A single API call can range from fractions of a cent to several dollars depending on configuration.

Here’s what the actual costs look like right now.

Understanding Google LLM API Pricing Structure

Google charges for LLM API usage through Vertex AI on a per-token basis. But that’s where simplicity ends.

According to the official Vertex AI pricing page, costs are split into input tokens (what developers send to the model) and output tokens (what the model generates). This dual-pricing approach means a 1,000-word prompt with a 500-word response gets billed twice—once for reading, once for writing.

The token itself is a text fragment, typically 3-4 characters in English. The phrase “artificial intelligence” breaks into roughly 4 tokens. So a typical 500-word business document converts to approximately 650-750 tokens.

Real talk: most developers underestimate token consumption by 30-40% when planning budgets. That gap widens dramatically when dealing with multimodal inputs like images or video.

What Counts as a Billable Request

Google charges for all processed tokens in successful requests (200 OK). However, some 4xx errors (like 429 Too Many Requests) do not incur costs, while others related to content filtering during generation may still result in charges for input tokens.

This matters more than it sounds. During testing phases when error rates can hit 15-20%, that protection represents significant savings.

Gemini 3.1 Model Pricing Breakdown

The Gemini 3.1 family spans multiple models with drastically different price points. Here’s the current structure as of March 2026.

| Model | Input ≤200K tokens | Output ≤200K tokens | Input >200K tokens | Output >200K tokens |

|---|---|---|---|---|

| Gemini 3.1 Pro Preview | $2 per 1M | $12 per 1M | $4 per 1M | $18 per 1M |

| Gemini 3.1 Flash Image Preview | $0.50 input, $3 output per 1M | Image: $60 per 1M | N/A | N/A |

| Gemini 3 Standard | $3 per 1M | $15 per 1M | Higher rates apply | Higher rates apply |

The pricing tier jumps when input context exceeds 200,000 tokens. At that threshold, Google charges all tokens—both input and output—at the long-context rate. For Gemini 3.1 Pro, that’s a 100% input cost increase (from $2 to $4) and 50% output increase (from $12 to $18).

Flash models target cost-conscious applications. At half the price of Pro models, they sacrifice some reasoning depth for speed and economy. For straightforward classification, summarization, or extraction tasks, Flash delivers 90% of Pro’s quality at 25% of the cost.

Cached Input Pricing Advantage

Caching is where smart developers cut costs dramatically. When the same context appears across multiple requests—think a product catalog, documentation set, or knowledge base—caching that content reduces repeated input costs by 90%.

For Gemini 3.1 Pro, cached input tokens cost $0.20 per million instead of $2 (for ≤200K tokens) or $0.40 per million (for >200K tokens).

The math works out fast. If a 50,000-token knowledge base gets queried 100 times daily, caching saves approximately $9 per day compared to sending the full context each time. That’s $270 monthly from one optimization.

Batch Processing vs Real-Time Costs

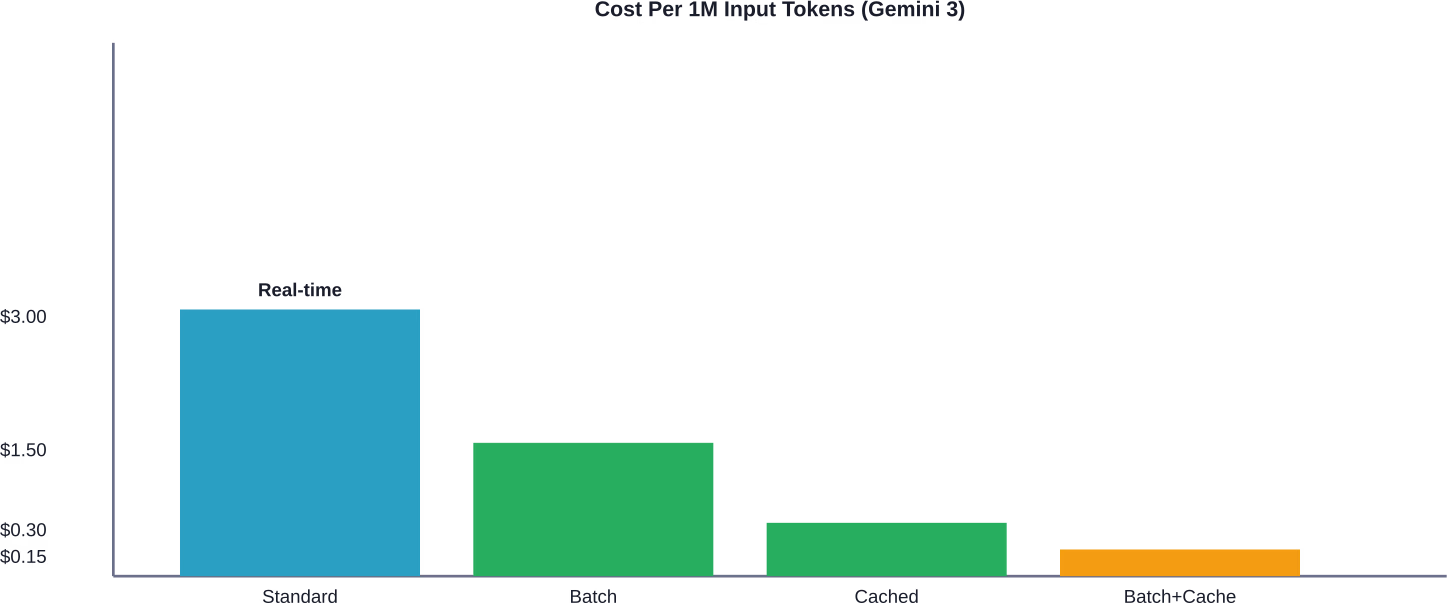

Batch requests cut costs in half. According to official Vertex AI documentation, batch input for Gemini 3 Standard costs $1.50 per million tokens versus $3 for real-time (non-batch). Batch output is $7.50 per million versus $15 for real-time.

The tradeoff? Latency. Batch jobs process asynchronously with completion times ranging from minutes to hours. For overnight data processing, document analysis, or bulk content generation, that delay is irrelevant. For chatbots or interactive tools, it’s a dealbreaker.

Batch cache operations carry similar discounts. Cache writes drop to $1.875 per million tokens, and cache hits to $0.15. For high-volume workloads where immediate responses aren’t required, batch processing with caching represents the absolute lowest cost structure available.

Grounding and Tool Pricing

Gemini 2.5 Pro includes 10,000 grounded prompts per day at no additional charge. Beyond that limit, Google bills $35 per 1,000 grounded prompts.

A grounded prompt means the model queries Google Search during generation. For factual accuracy in news summaries, research assistance, or real-time data lookup, grounding proves invaluable. But the cost adds up.

At $35 per 1,000 grounded requests, heavy usage scenarios rack up charges quickly. An application making 50,000 grounded requests monthly pays $1,750 just for grounding—before token costs. The free daily allocation covers 300,000 monthly requests for qualifying accounts, which handles most small-to-medium deployments.

Web Grounding for enterprise carries a higher rate: $45 per 1,000 grounded prompts. This premium tier offers enhanced search capabilities and enterprise data sources. Organizations needing this feature should contact Google Cloud’s account team for potential volume discounts.

Comparing Google LLM Costs to Competitors

How do Google’s rates stack up against OpenAI and Anthropic?

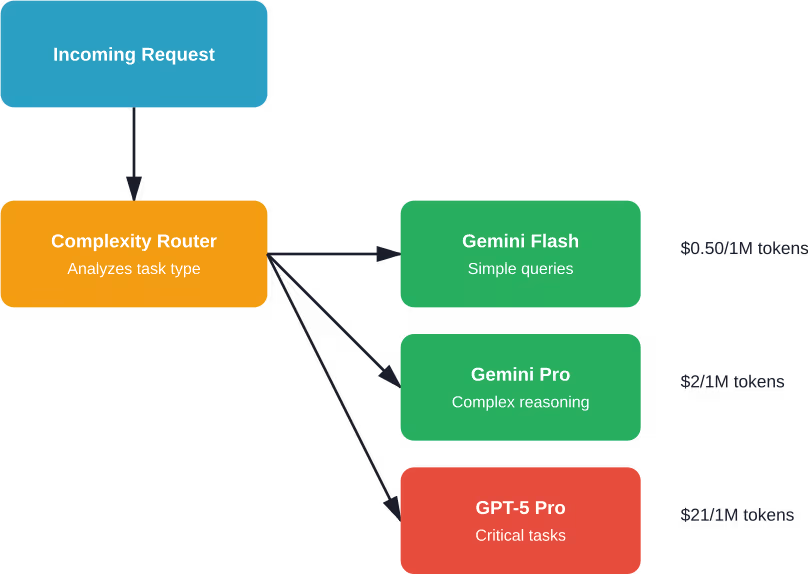

As of March 2026, OpenAI’s GPT-5.2 Pro costs $21 per million input tokens and $168 per million output tokens—roughly 10x Google’s Gemini 3.1 Pro. Anthropic’s Claude Sonnet 4.5 runs $3 per million input and $15 per million output, nearly identical to Gemini 3 Standard.

But here’s where it gets interesting. DeepSeek’s V3.2 undercuts everyone at $0.28 per million input tokens. For budget-conscious applications, Chinese providers have created a new cost floor that Western providers struggle to match.

| Provider | Model | Input (per 1M tokens) | Output (per 1M tokens) |

|---|---|---|---|

| Gemini 3.1 Pro | $2.00 | $12.00 | |

| Gemini 3.1 Flash-Lite | $0.25 | Standard rates | |

| OpenAI | GPT-5.2 Pro | $21.00 | $168.00 |

| Anthropic | Claude Sonnet 4.5 | $3.00 | $15.00 |

| DeepSeek | V3.2-Exp | $0.28 | $0.40 |

Performance matters as much as price. Some community discussions indicate that DeepSeek’s ultra-low pricing may involve quality tradeoffs for certain complex reasoning tasks. Google’s Gemini 3.1 Pro and Anthropic’s Claude models deliver superior performance on benchmarks like MMLU and HellaSwag.

The value calculation depends entirely on the use case. For high-stakes legal document analysis, paying 10x for GPT-5.2 Pro’s accuracy makes sense. For customer support ticket classification, Gemini Flash or DeepSeek provides sufficient quality at a fraction of the cost.

Hidden Costs and Infrastructure Charges

Token pricing tells only part of the story. Vertex AI infrastructure adds additional costs that many developers overlook during initial planning.

Data storage for RAG applications using Vertex AI RAG Engine incurs separate charges. Vertex AI Search pricing operates on a configurable model with monthly subscriptions for query capacity (QPM) and storage. For websites, storage is calculated as 500 kilobytes times the number of pages—a 1,000-page website costs $2.38 monthly for data indexing alone.

Vector databases, whether using Vertex AI’s managed offerings or third-party solutions like Pinecot or Weaviate, add per-GB storage and query costs. A typical enterprise RAG deployment with 50GB of embeddings might incur $50-150 monthly in vector storage charges independent of LLM costs.

Data Transfer and Egress Fees

Cloud Storage, Google Drive, and other data sources accessed from Vertex AI don’t charge for access, but data egress costs apply. Moving data out of Google Cloud regions incurs bandwidth fees ranging from $0.08 to $0.23 per GB depending on destination.

For applications processing large multimedia files or extensive document collections, egress can add 10-20% to total costs. A video processing pipeline handling 1TB monthly pays $80-230 just for bandwidth.

Cost Optimization Strategies That Work

The gap between naive implementation and optimized deployment can reach 70% in total spend. Here’s what actually moves the needle.

Implement Aggressive Context Caching

Beyond basic caching, implementing a multi-tier cache strategy cuts costs further. Store frequently accessed contexts in Vertex AI’s native cache. For less common but still repeated contexts, maintain a Redis or Memcached layer that reconstructs prompts from templates.

A cost reduction example shows that implementing a two-tier caching system for a customer service bot referencing a 30,000-token product catalog can reduce costs from approximately $2,400 to $720 monthly.

Compress Prompts Without Sacrificing Quality

Prompt engineering isn’t just about quality—it’s about efficiency. Removing filler words, using abbreviations where context allows, and restructuring prompts can reduce token counts by 15-25% with no quality loss.

Instead of “Please analyze the following customer feedback and provide a detailed summary of the main themes, sentiment, and actionable insights,” use “Analyze this feedback. List: main themes, sentiment, actionable insights.” Same instruction, 40% fewer tokens.

Route Requests to Appropriate Models

Not every request needs Gemini Pro. Implementing a routing layer that directs simple queries to Flash and complex reasoning to Pro optimizes cost-to-quality ratios.

Classification tasks, basic Q&A, and template filling work fine on Flash. Multi-step reasoning, nuanced analysis, and creative generation benefit from Pro’s additional capabilities. Smart routing can reduce average per-request costs by 40-50% across mixed workloads.

Batch Everything Possible

Real-time requirements are often overstated. Content moderation, document summarization, data enrichment, and many other workflows tolerate 5-30 minute delays without user impact.

Migrating these workloads to batch processing immediately cuts costs 50%. For organizations processing millions of requests monthly, that’s five-figure savings with minimal engineering effort.

Monitor and Set Budget Alerts

Runaway costs happen. A misconfigured retry loop, an unexpected traffic spike, or a prompt injection attack can drain budgets in hours.

Google Cloud’s billing alerts trigger notifications when spending crosses thresholds. Setting alerts at 50%, 75%, and 90% of monthly budgets provides early warning. Coupling alerts with automatic quota limits prevents catastrophic overages.

Avoid Overpaying for LLM APIs, Validate Your Setup First

Using Google LLM APIs looks straightforward at first, but costs quickly grow once usage scales – especially when prompts, data flow, and model behavior are not optimized. AI Superior works across the full lifecycle, from data preparation and model selection to fine-tuning and deployment, which helps reduce unnecessary API usage and avoid inefficient setups.

Instead of relying only on external APIs, the approach often includes evaluating when custom models, fine-tuning, or hybrid setups make more sense financially. This is particularly relevant for companies moving from testing to production, where API costs can compound over time. If you are planning to rely on LLM APIs or already seeing costs rise, it is worth reviewing your architecture early. Reach out to AI Superior to assess your setup before costs scale further.

Real-World Cost Examples

Theory matters less than practice. What do actual deployments cost?

Customer Support Chatbot

A mid-sized e-commerce company runs a support bot handling 50,000 conversations monthly. Each conversation averages 8 messages with 200 input tokens and 150 output tokens per message.

Total monthly volume: 50,000 conversations × 8 messages × (200 input + 150 output) = 140 million tokens (80M input, 60M output).

Using Gemini 3.1 Flash ($0.50 input for text/image, $3 output for text): approximately $40 input + $30 output = $70 monthly.

Using Gemini 3.1 Pro ($2 input, $12 output): $160 input + $720 output = $880 monthly.

Flash handles this use case effectively, saving $810 monthly—97% cost reduction.

Document Processing Pipeline

A legal tech startup processes 10,000 contracts monthly, each averaging 5,000 tokens. Extraction and analysis generates 1,000 output tokens per document.

Total volume: 10,000 docs × (5,000 input + 1,000 output) = 60 million tokens (50M input, 10M output).

For batch processing with Gemini 3 Standard: 50M × $1.50/1M (batch input) + 10M × $7.50/1M (batch output) = $75 + $75 = $150 monthly.

Real-time processing: 50M × $3/1M + 10M × $15/1M = $150 + $150 = $300 monthly.

Batch processing cuts costs in half with zero quality impact for overnight processing workflows.

When to Choose Google Over Competitors

Google’s LLM APIs excel in specific scenarios but aren’t universally optimal.

Choose Google Vertex AI when:

- Already operating within Google Cloud infrastructure: Data transfer and integration costs drop significantly

- Requiring multimodal capabilities: Gemini handles text, images, audio, and video in unified prompts

- Building RAG applications: Vertex AI’s integrated vector search and grounding tools reduce architectural complexity

- Needing ultra-long context windows: Gemini 1.5 Pro supports up to 2 million tokens, far exceeding most competitors

- Prioritizing cost efficiency for moderate-complexity tasks: Flash models deliver strong value

Look elsewhere when:

Maximum reasoning capability matters more than cost—GPT-5.2 Pro outperforms Gemini on complex logical tasks. Specialized domains like advanced mathematics or competitive programming—OpenAI’s models currently lead these benchmarks. Zero-tolerance compliance requirements—some industries mandate specific certifications that favor established providers.

Frequently Asked Questions

How much does Google’s cheapest LLM API cost?

Gemini 3.1 Flash-Lite costs $0.25 per million input tokens (for ≤200K context) as of March 2026, making it one of Google’s most economical options. With batch processing and caching, effective costs can drop to $0.15 per million tokens for batch cache hits, though initial batch cache writes cost $1.875 per million.

What’s the difference between Gemini Pro and Flash pricing?

Gemini 3.1 Pro costs $2 per million input tokens compared to Flash’s $0.50—a 4x difference. Output tokens show a similar gap: Pro charges $12 per million while Flash uses standard rates significantly lower. Pro delivers superior reasoning and nuance; Flash optimizes for speed and cost on simpler tasks.

Does Google charge for failed API requests?

Google charges for all processed tokens in successful requests (200 OK). However, some 4xx errors (like 429 Too Many Requests) do not incur costs, while others related to content filtering during generation may still result in charges for input tokens.

How does context caching reduce Google LLM costs?

Caching repeated context reduces token costs by approximately 90%. For Gemini 3.1 Pro, cached input tokens cost $0.20 per million versus $2 for uncached.

What are grounding costs for Gemini models?

Gemini 2.5 Pro includes 10,000 free grounded prompts per day. Beyond that limit, standard grounding costs $35 per 1,000 grounded prompts. Enterprise web grounding runs $45 per 1,000 grounded prompts. These charges apply in addition to standard input and output token costs.

Can I use Google LLM APIs for free?

Google doesn’t offer a permanent free tier for Vertex AI LLM usage like some competitors. However, new Google Cloud accounts receive credits (typically $300) for initial testing. Pricing is pay-as-you-go with no minimum usage requirements, allowing small-scale testing at minimal cost.

How does batch processing pricing work?

Batch processing cuts token costs by 50% across Google’s Gemini models. For example, Gemini 3 Standard drops from $3 to $1.50 per million input tokens and $15 to $7.50 per million output tokens. Batch requests process asynchronously with completion times ranging from minutes to hours depending on queue depth.

Making the Cost Decision

Google’s LLM API pricing positions Vertex AI competitively in the 2026 market, particularly for applications already operating within Google Cloud’s ecosystem.

The cost structure rewards optimization. Developers who implement caching, batch processing, and intelligent model routing can achieve effective costs 70-80% below list prices. Those who deploy models naively will overpay significantly.

Token-based pricing remains the dominant model across all major providers, but the effective cost per AI-generated response varies wildly based on implementation choices. A well-architected deployment on Gemini Flash can deliver AI capabilities at one-tenth the cost of an unoptimized GPT-5 Pro deployment.

The key question isn’t which provider has the lowest list price—it’s which combination of model capability, pricing structure, and infrastructure integration delivers the best value for specific workload characteristics.

Start with clear benchmarking. Test representative workloads across Google, OpenAI, and Anthropic models. Measure not just quality but actual token consumption, latency, and error rates. Calculate total cost of ownership including infrastructure, data transfer, and engineering time.

Then optimize ruthlessly. Every 10% reduction in average tokens per request, every percentage point improvement in cache hit rates, every workload migrated to batch processing translates directly to bottom-line savings.

The LLM cost landscape continues evolving rapidly. Pricing that’s competitive today may become obsolete within months as providers jockey for market share. Budget flexibility and architectural adaptability matter as much as current pricing when building long-term AI infrastructure.