Quick Summary: Predictive analytics tools combine statistical modeling, machine learning, and data mining to forecast future outcomes from historical data. The technology stack spans programming environments (Python, R), statistical platforms (IBM SPSS, SAS), business intelligence tools (Tableau, Power BI), AutoML platforms (DataRobot, H2O.ai), and cloud-based solutions (AWS SageMaker, Azure ML) tailored to different technical skill levels and use cases.

Predictive analytics determines the likelihood of future outcomes using techniques like data mining, statistics, data modeling, artificial intelligence, and machine learning. Organizations across industries leverage these tools to interpret historical data patterns and make informed decisions about risks, opportunities, and customer behaviors.

The predictive analytics market in 2026 offers everything from code-free solutions for business analysts to enterprise machine learning ecosystems built for data science teams. Choosing the right tool depends on your organization’s maturity, use cases, and existing tech stack.

Not every predictive analytics tool delivers equal results, though. The right platform changes how teams allocate time, shifting effort from data preparation to the predictions that drive revenue. Marketing analysts, for instance, typically spend 40% of their time preparing data for analysis, which leaves little room for the forecasts that actually matter.

What Qualifies as a Predictive Analytics Tool

Predictive analytics tools use statistical modeling, data mining techniques, and machine learning to analyze current and historical business data and generate forecasts. These platforms help companies estimate the probability of future or otherwise unknown events.

The distinction matters because not every analytics platform qualifies as predictive. Descriptive analytics tells you what happened. Diagnostic analytics explains why it happened. Predictive analytics, though, forecasts what will happen next based on patterns in your data.

Real predictive analytics platforms combine several core capabilities:

- Data integration from multiple sources (databases, spreadsheets, cloud services)

- Statistical modeling and algorithm libraries (regression, classification, time series)

- Machine learning model training and validation

- Automated feature engineering and variable selection

- Model deployment and monitoring infrastructure

- Visualization tools for interpreting predictions

Generic business intelligence tools often include basic forecasting features, but they lack the depth required for sophisticated predictive modeling. True predictive platforms offer advanced techniques like ensemble methods, neural networks, and gradient boosting, which often improve accuracy on complex, non-linear problems.

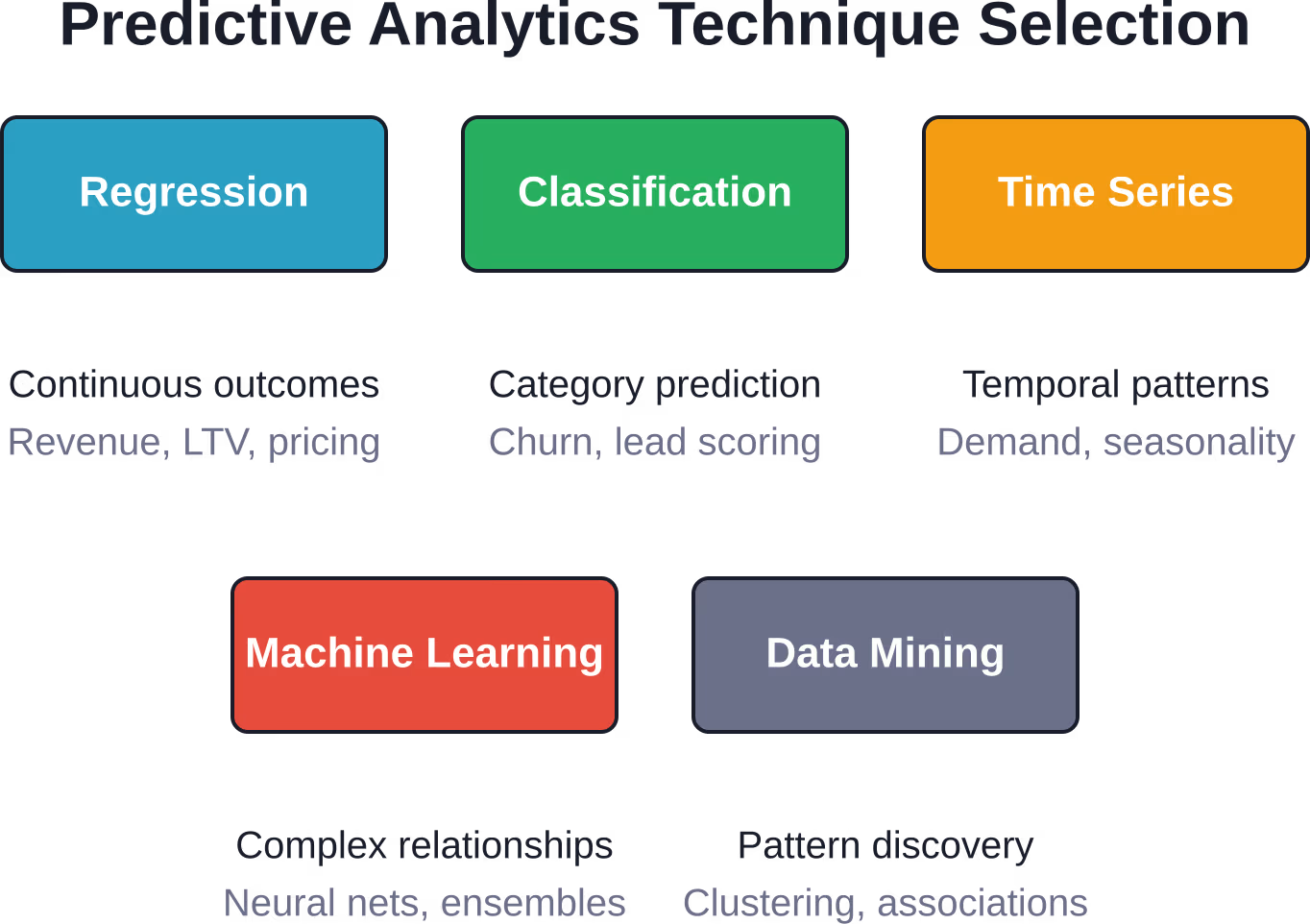

Core Predictive Analytics Techniques

Understanding the techniques behind predictive analytics helps clarify which tools you actually need. Different methods suit different prediction types, and not every platform supports every technique.

Regression Analysis

Regression models predict continuous numerical outcomes. Linear regression works for straightforward relationships, such as predicting sales revenue from advertising spend. Variants extend it in different directions: polynomial regression captures non-linear patterns, while ridge regression adds a regularization penalty that reduces overfitting.

The technique requires clean historical data with identified relationships between variables. Marketing teams use regression to forecast customer lifetime value, while finance departments predict quarterly revenue based on seasonal trends and market signals.
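
As a rough illustration of the advertising example, the sketch below fits an ordinary least-squares line in plain Python. The spend and revenue figures are invented for the example, and `fit_line` is a hypothetical helper name rather than part of any platform discussed here.

```python
def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance and variance terms (unnormalized; the 1/n factors cancel)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Hypothetical monthly figures, in thousands of dollars
ad_spend = [10, 20, 30, 40, 50]
revenue = [65, 95, 130, 155, 190]

slope, intercept = fit_line(ad_spend, revenue)
forecast = slope * 60 + intercept  # predicted revenue at a spend of 60
print(f"revenue = {slope:.2f} * spend + {intercept:.2f}")
print(f"forecast at spend=60: {forecast:.1f}")
```

A production model would usually add more predictors, hold out data for validation, and consider regularization, which a library such as scikit-learn handles.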

Classification Models

Classification predicts categorical outcomes—yes/no decisions, risk categories, customer segments. Logistic regression, decision trees, and support vector machines fall into this category.

Lead scoring systems rely heavily on classification. The model analyzes hundreds of attributes (job title, company size, website behavior) to classify prospects as high-probability or low-probability conversions. Healthcare organizations use classification to predict patient readmission risk or disease diagnosis likelihood.
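
To make the lead-scoring idea concrete, here is a minimal logistic regression trained by gradient descent in plain Python. It is a sketch under simplifying assumptions: two made-up features instead of hundreds, an invented dataset, and hypothetical function names. Real systems would typically rely on a tested library implementation.

```python
import math

def predict_proba(weights, row):
    """Probability of conversion; weights[0] is the bias term."""
    z = weights[0] + sum(w * v for w, v in zip(weights[1:], row))
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(rows, labels, learning_rate=0.1, epochs=2000):
    """Fit logistic regression weights with batch gradient descent on log loss."""
    weights = [0.0] * (len(rows[0]) + 1)
    for _ in range(epochs):
        gradients = [0.0] * len(weights)
        for row, label in zip(rows, labels):
            error = predict_proba(weights, row) - label
            gradients[0] += error
            for j, value in enumerate(row):
                gradients[j + 1] += error * value
        weights = [w - learning_rate * g / len(rows)
                   for w, g in zip(weights, gradients)]
    return weights

# Hypothetical leads: [pricing page visits, 1 if company size is in the target range]
leads = [[0, 0], [1, 0], [0, 1], [1, 1], [2, 0], [3, 1], [4, 0], [5, 1]]
converted = [0, 0, 0, 0, 0, 1, 1, 1]

weights = train_logistic(leads, converted)
hot = predict_proba(weights, [5, 1])
cold = predict_proba(weights, [0, 0])
print(f"hot lead: {hot:.2f}, cold lead: {cold:.2f}")
```

The output is a probability, so the high-probability versus low-probability split comes from whatever cutoff the team chooses, not from the model itself.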

Time Series Forecasting

Time series techniques analyze data points collected at consistent intervals to predict future values. ARIMA models, exponential smoothing, and Prophet (Meta’s open-source forecasting tool) excel at capturing seasonal patterns, trends, and cyclical behaviors.

Retail operations use time series forecasting for inventory planning. E-commerce platforms predict demand spikes around holidays. Financial institutions forecast stock prices and currency fluctuations using sophisticated time series models that incorporate multiple variables.
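
Of the techniques named above, simple exponential smoothing is the easiest to sketch. The version below tracks only a level, so it does not capture trend or seasonality the way Holt-Winters, ARIMA, or Prophet can. The weekly unit counts and the `alpha` value are illustrative assumptions.

```python
def exponential_smoothing(series, alpha):
    """Return the smoothed level after each observation."""
    level = series[0]
    levels = [level]
    for value in series[1:]:
        # Blend the newest observation with the previous level
        level = alpha * value + (1 - alpha) * level
        levels.append(level)
    return levels

# Hypothetical weekly unit sales
weekly_units = [120, 132, 128, 141, 150, 147]
levels = exponential_smoothing(weekly_units, alpha=0.5)

# With no trend or seasonal term, the forecast for next week is the last level
next_week_forecast = levels[-1]
print(next_week_forecast)
```

A higher `alpha` reacts faster to recent changes, while a lower one smooths out noise. In practice the value is usually chosen by minimizing forecast error on held-out history.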

Machine Learning Algorithms

Machine learning enhances predictive analytics by automatically improving predictions as more data becomes available. Random forests, gradient boosting machines (GBM), neural networks, and deep learning architectures handle complex, non-linear relationships that traditional statistical methods miss.

According to William & Mary's online business analytics program, machine learning techniques have improved predictive analytics for businesses across a broad range of industries. Machine learning lets systems process millions of data points, something that would be impractical with manual statistical modeling.

For example, a machine learning algorithm managing a pay-per-click advertisement campaign might set an upper bound of $0.25 for keyword bidding. By incorporating thousands of data points, the algorithm could determine that $0.14 represents the optimal bid for maximum ROI—a level of precision difficult to achieve through manual analysis.

Data Mining and Pattern Recognition

Data mining extracts previously unknown patterns from large datasets. Clustering algorithms group similar customers without predefined categories. Association rule learning discovers which products customers frequently purchase together. Anomaly detection identifies unusual patterns that might indicate fraud or system failures.

Machine learning and predictive analysis are essential tools for uncovering and understanding patterns in very large collections of data. The techniques complement each other: data mining discovers the patterns, while predictive modeling uses those patterns to forecast future outcomes.
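
Association rule learning, mentioned above, scores a rule such as "laptop buyers also buy a bag" with support, confidence, and lift. The sketch below computes the three metrics for one rule. The baskets are invented, and `rule_metrics` is a hypothetical helper, not a library function.

```python
def rule_metrics(baskets, antecedent, consequent):
    """Return (support, confidence, lift) for the rule antecedent -> consequent."""
    n = len(baskets)
    has_antecedent = sum(1 for b in baskets if antecedent in b)
    has_consequent = sum(1 for b in baskets if consequent in b)
    has_both = sum(1 for b in baskets if antecedent in b and consequent in b)
    support = has_both / n                    # share of baskets containing both items
    confidence = has_both / has_antecedent    # P(consequent | antecedent)
    lift = confidence / (has_consequent / n)  # above 1 suggests a positive association
    return support, confidence, lift

# Hypothetical purchase baskets
baskets = [
    {"laptop", "mouse"},
    {"laptop", "mouse", "bag"},
    {"laptop", "bag"},
    {"mouse"},
    {"monitor", "mouse"},
]

support, confidence, lift = rule_metrics(baskets, "laptop", "bag")
print(f"support={support:.2f} confidence={confidence:.2f} lift={lift:.2f}")
```

Full algorithms such as Apriori search over many candidate rules and prune those below a minimum support. This example only scores a single rule.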

Categories of Predictive Analytics Tools

The market segments into distinct categories based on technical requirements, use case specificity, and deployment complexity.

Statistical Software Platforms

Traditional statistical tools like IBM SPSS, SAS, and Stata dominated predictive analytics for decades. These platforms offer comprehensive statistical modeling capabilities, extensive documentation, and proven reliability for academic and enterprise research.

IBM SPSS provides point-and-click interfaces for regression analysis, factor analysis, and other classical techniques. SAS delivers industrial-strength analytics with particular dominance in regulated industries like pharmaceuticals and finance. These tools require statistical knowledge but don’t demand programming expertise for basic analyses.

The limitation? They weren’t built for modern machine learning workflows or big data infrastructure. Data scientists increasingly prefer more flexible alternatives.

Programming Languages and Libraries

Python and R represent the most popular environments for predictive analytics among data science teams. Both languages offer extensive machine learning libraries, active communities, and flexibility for custom model development.

Python’s scikit-learn library provides implementations of dozens of algorithms. TensorFlow and PyTorch power deep learning models. Pandas handles data manipulation. The ecosystem supports the entire predictive analytics workflow from data cleaning through model deployment.

R specializes in statistical computing with packages like caret, randomForest, and glmnet. The language excels at exploratory data analysis and statistical visualization through ggplot2. Research statisticians favor R for its comprehensive coverage of advanced statistical techniques.

These tools require programming skills. But they offer maximum flexibility and customization for teams with technical expertise.

Business Intelligence Tools with Predictive Features

Platforms like Tableau, Microsoft Power BI, and Qlik embedded predictive capabilities into their BI offerings. These tools focus on accessibility—business users can generate forecasts without writing code or understanding algorithms.

Tableau integrates with R and Python for custom models while offering built-in forecasting for time series data. Power BI includes AutoML features through Azure integration. These platforms connect to 100+ data sources including databases, spreadsheets, and cloud services.

The trade-off involves sophistication. Built-in forecasting works well for standard scenarios but lacks the depth required for complex modeling. Finance teams that use these tools to forecast revenue from seasonal trends and market signals can achieve reliable results, but specialized use cases still require dedicated predictive platforms.

AutoML and No-Code Platforms

Automated machine learning platforms democratize predictive analytics by handling algorithm selection, hyperparameter tuning, and feature engineering automatically. DataRobot, H2O.ai, and Google AutoML represent this category.

These tools ingest training data and automatically test hundreds of model configurations to identify the best-performing approach. Business analysts without data science backgrounds can build production-grade models. The platforms handle deployment, monitoring, and retraining workflows.

DataRobot particularly shines for enterprise deployments with governance requirements. H2O.ai offers both open-source and commercial versions; its commercial product, Driverless AI, automates the complete machine learning pipeline while maintaining model explainability, which is critical for regulated industries.

Cloud-Based Machine Learning Services

Amazon Web Services, Google Cloud Platform, and Microsoft Azure provide managed machine learning environments. AWS SageMaker, Google Vertex AI, and Azure Machine Learning combine infrastructure, algorithm libraries, and deployment tools in cloud-native platforms.

These services integrate naturally with other cloud resources. Data stored in S3 or BigQuery flows directly into model training. Deployed models scale automatically based on prediction volume. Built-in monitoring tracks model performance and data drift.

Cloud platforms suit organizations already invested in cloud infrastructure. They eliminate infrastructure management overhead while providing enterprise-grade security and compliance features. Organizations using cloud machine learning services have achieved increases in customer lifetime value through predictive customer segmentation.

Industry-Specific Solutions

Specialized predictive analytics tools target specific industries or use cases. Marketing clouds (Salesforce Einstein, Adobe Sensei) focus on customer journey prediction and personalization. Healthcare platforms address patient risk stratification and readmission prediction. Financial services tools specialize in fraud detection and credit scoring.

These solutions come pre-configured with industry-relevant models and data schemas. Implementation time drops significantly compared to building custom models from scratch. Healthcare organizations using predictive analytics have reported significant reductions in hospitalizations and emergency room visits through risk stratification approaches.

The specificity cuts both ways. Industry tools excel at their designed purpose but lack flexibility for novel use cases outside their scope.

Essential Features of Predictive Analytics Platforms

Not every platform includes every capability. Understanding which features matter for specific use cases prevents expensive mismatches between tool capabilities and organizational needs.

Data Connectivity and Integration

Predictive models only work when they can access relevant data. The best platforms offer extensive connector libraries for databases (PostgreSQL, MySQL, Oracle), cloud data warehouses (Snowflake, Redshift, BigQuery), CRM systems (Salesforce, HubSpot), and marketing platforms.

Data integration extends beyond simple imports. Production predictive systems need automated data pipelines that refresh training data, retrain models on schedules, and push predictions back to operational systems. Real-time prediction APIs require low-latency connections to transactional databases.

Can the tool deploy in the cloud and on-premises? Does it support data residency requirements for international operations? These integration questions determine whether a platform fits enterprise architecture constraints.

Algorithm Libraries and Model Types

Comprehensive platforms support multiple modeling approaches. Regression models for continuous outcomes. Classification algorithms for categorical predictions. Time series methods for temporal forecasting. Clustering for segmentation. Ensemble methods that combine multiple models for improved accuracy.

The depth matters too. Does the platform offer just linear regression, or does it include regularization techniques like LASSO and ridge regression? Can it handle gradient boosting machines, random forests, and neural networks? Does it support deep learning for unstructured data like images and text?

Leading platforms provide 10-50 different algorithm implementations. They also explain when to use each approach, guiding users toward appropriate techniques for their data characteristics.

AutoML and Automated Feature Engineering

Feature engineering—creating predictive variables from raw data—traditionally consumed massive amounts of data science time. Modern platforms automate this process, testing thousands of feature combinations to identify the most predictive variables.

AutoML extends automation to algorithm selection and hyperparameter tuning. The system trains dozens of candidate models, compares performance using cross-validation, and recommends the best configuration. This capability accelerates model development from weeks to hours.
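
The compare-by-cross-validation step can be sketched in a few lines. The example below assumes only two candidate models (a mean baseline and a least-squares line), contiguous folds without shuffling, and invented data. Commercial AutoML products search far larger spaces, and all names here are hypothetical.

```python
def k_fold_indices(n, k):
    """Split range(n) into k contiguous folds of near-equal size."""
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        folds.append(list(range(start, start + size)))
        start += size
    return folds

def fit_mean(xs, ys):
    """Baseline candidate: always predict the training mean."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y

def fit_linear(xs, ys):
    """Second candidate: least-squares line."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return lambda x: my + slope * (x - mx)

def cross_val_mse(fit, xs, ys, k=3):
    """Mean squared error on held-out points, averaged over k folds."""
    errors = []
    for fold in k_fold_indices(len(xs), k):
        held_out = set(fold)
        train_x = [x for i, x in enumerate(xs) if i not in held_out]
        train_y = [y for i, y in enumerate(ys) if i not in held_out]
        model = fit(train_x, train_y)
        errors.extend((model(xs[i]) - ys[i]) ** 2 for i in fold)
    return sum(errors) / len(errors)

# Hypothetical data with a roughly linear relationship
xs = [1, 2, 3, 4, 5, 6, 7, 8, 9]
ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1, 18.0]

candidates = {"mean baseline": fit_mean, "linear": fit_linear}
scores = {name: cross_val_mse(fit, xs, ys) for name, fit in candidates.items()}
best = min(scores, key=scores.get)
print(best, scores)
```

Scoring every candidate only on data it did not see during fitting is what keeps the comparison honest. The same loop extends to more candidates and to hyperparameter grids.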

But automation has limits. Fully automated systems sometimes miss domain-specific insights that expert analysts would incorporate. The best platforms balance automation with expert override capabilities.

Model Explainability and Interpretability

Complex machine learning models often function as black boxes—they generate accurate predictions but don’t explain reasoning. Regulated industries require model interpretability to satisfy compliance requirements. Business stakeholders need explanations to trust recommendations.

Modern platforms include explainability tools. SHAP (SHapley Additive exPlanations) values quantify each variable’s contribution to individual predictions. Partial dependence plots show how changing one variable affects outcomes. Feature importance rankings identify which data points matter most.
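
The idea behind Shapley-based explanations can be shown on a toy model. The sketch below computes exact Shapley values by averaging each feature's marginal contribution over every feature ordering, with absent features set to a single baseline. This is the textbook definition, not the approximation algorithms used by the SHAP library, and the scoring function, feature names, and weights are all invented for illustration.

```python
from itertools import permutations

def shapley_values(predict, instance, baseline):
    """Exact Shapley values: average marginal contribution over all feature orderings."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)      # start with every feature at its baseline value
        previous = predict(current)
        for name in order:
            current[name] = instance[name]   # reveal one feature at a time
            score = predict(current)
            totals[name] += score - previous
            previous = score
    return {name: total / len(orderings) for name, total in totals.items()}

def lead_score(features):
    """Hypothetical scoring model with one interaction term."""
    return (0.10 * features["pricing_visits"]
            + 0.20 * features["target_size"]
            + 0.05 * features["pricing_visits"] * features["target_size"])

instance = {"pricing_visits": 3, "target_size": 1}
baseline = {"pricing_visits": 0, "target_size": 0}

contributions = shapley_values(lead_score, instance, baseline)
print(contributions)
```

The contributions always sum to the difference between the instance's score and the baseline score, which is what makes them usable as a per-prediction explanation. Enumerating all orderings is only feasible for a handful of features, so real tools approximate.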

These interpretability features bridge the gap between statistical accuracy and business adoption. When marketing teams understand why a model flags certain leads as high-priority, they trust the system enough to act on recommendations.

Deployment and Monitoring Infrastructure

Building accurate models represents half the challenge. Deploying them into production systems where they generate business value completes the picture. Enterprise platforms include deployment infrastructure—REST APIs, batch scoring engines, and embedded model serving.

Post-deployment monitoring tracks model performance over time. Prediction accuracy often degrades as real-world conditions change. Monitoring dashboards alert teams when model performance drops below thresholds, triggering retraining workflows.
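
A threshold alert of the kind described above might look like the following sketch. It assumes binary predictions whose true outcomes eventually become known, fixed non-overlapping windows, and an arbitrary 0.8 accuracy threshold. The function name and the data are hypothetical.

```python
def windows_below_threshold(predictions, actuals, window, threshold):
    """Return (window_start_index, accuracy) for each full window under the threshold."""
    alerts = []
    for start in range(0, len(predictions) - window + 1, window):
        pairs = zip(predictions[start:start + window], actuals[start:start + window])
        accuracy = sum(p == a for p, a in pairs) / window
        if accuracy < threshold:
            alerts.append((start, accuracy))
    return alerts

# Hypothetical production log: model outputs and the outcomes observed later
predictions = [1, 0, 1, 1, 0,  1, 1, 0, 0, 1,  1, 1, 1, 0, 0]
actuals     = [1, 0, 1, 1, 0,  1, 0, 0, 0, 1,  0, 0, 1, 1, 0]

alerts = windows_below_threshold(predictions, actuals, window=5, threshold=0.8)
for start, accuracy in alerts:
    print(f"window starting at {start}: accuracy {accuracy:.2f}, consider retraining")
```

Accuracy can only be tracked once ground truth arrives, which is why production monitoring often also watches input distributions for drift as an earlier warning sign.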

Version control for models matters too. Production systems need rollback capabilities when new model versions underperform. The best platforms treat models as versioned artifacts with complete lineage tracking.

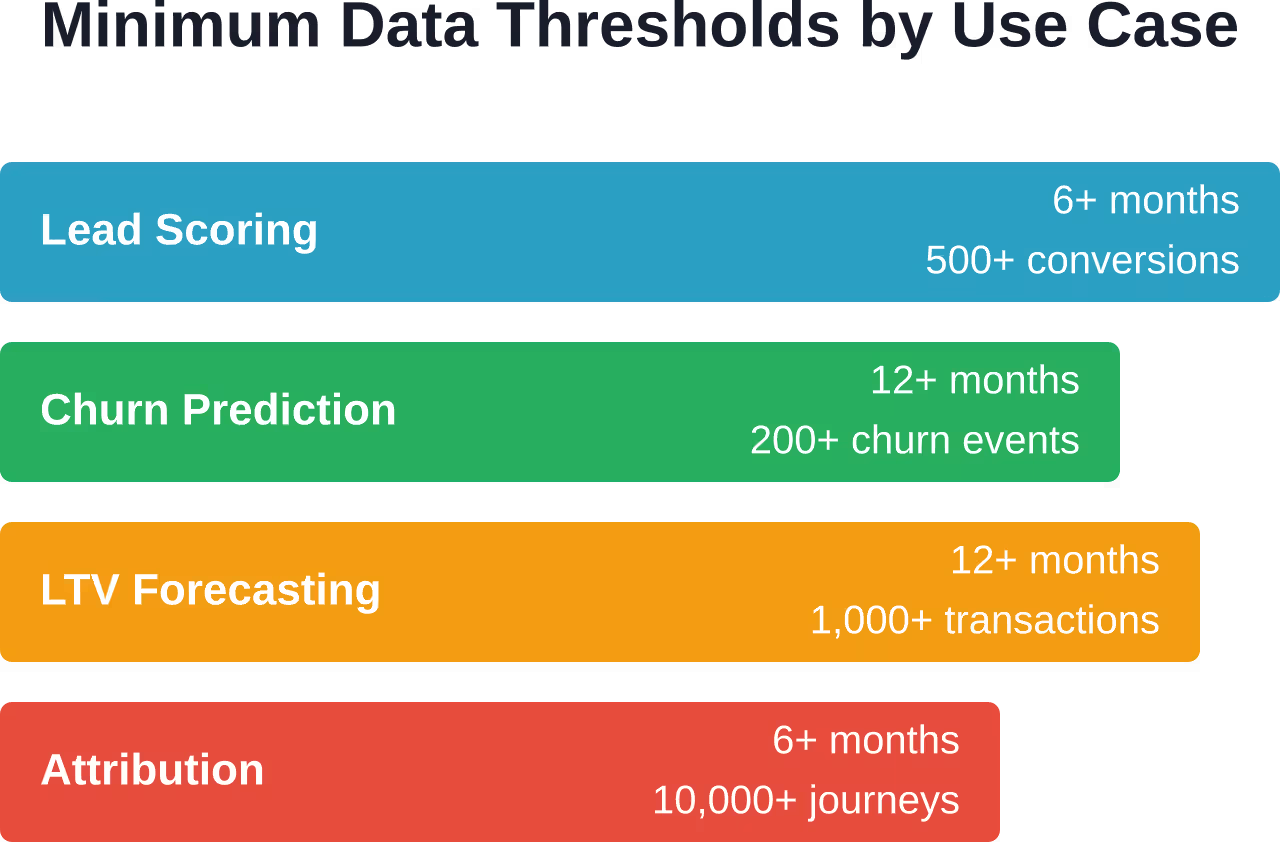

Minimum Data Requirements for Predictive Analytics

Here’s where implementations often fail: insufficient data volume or quality prevents models from learning meaningful patterns. Different prediction types demand different data thresholds.

If predictions involve conversion probability, the model needs to see hundreds—ideally thousands—of past conversions across different contexts. Minimum thresholds by prediction type:

- Lead scoring: 6+ months of lead history, 500+ conversions

- Churn prediction: 12+ months of customer lifecycle data, 200+ churn events

- LTV forecasting: 12+ months of revenue data, 1,000+ transactions

- Attribution modeling: 6+ months of multi-channel data, 10,000+ user journeys

These represent baseline minimums. More data generally improves model accuracy, though diminishing returns set in beyond certain volumes. Data quality matters as much as quantity—missing values, inconsistent formats, and incorrect labels undermine model performance regardless of volume.

Organizations below these thresholds should start with simpler analytical approaches (descriptive analytics, basic segmentation) while building data infrastructure for future predictive capabilities.
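
The thresholds listed above translate directly into a readiness check. In the sketch below, the numbers are copied from that list, while the dictionary keys and the `readiness_gaps` function are hypothetical names chosen for the example.

```python
# Thresholds from the list above: (minimum months of history, minimum event count)
MINIMUMS = {
    "lead_scoring": (6, 500),             # conversions
    "churn_prediction": (12, 200),        # churn events
    "ltv_forecasting": (12, 1000),        # transactions
    "attribution_modeling": (6, 10000),   # user journeys
}

def readiness_gaps(use_case, months_of_history, event_count):
    """Return a list of shortfalls; an empty list means the baseline minimums are met."""
    min_months, min_events = MINIMUMS[use_case]
    gaps = []
    if months_of_history < min_months:
        gaps.append(f"need {min_months - months_of_history} more months of history")
    if event_count < min_events:
        gaps.append(f"need {min_events - event_count} more events")
    return gaps

print(readiness_gaps("churn_prediction", months_of_history=14, event_count=50))
print(readiness_gaps("lead_scoring", months_of_history=8, event_count=700))
```

Passing this check says nothing about data quality, so missing values and label errors still need a separate review.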

Top Predictive Analytics Platforms for Different Use Cases

Selecting the right tool depends on technical capabilities, use case requirements, and organizational maturity. These platforms represent current leaders across different categories.

For Marketing Teams: Improvado

Improvado combines unified marketing data integration with AI-powered predictive analytics. The platform connects to major advertising platforms, CRM systems, and analytics tools, centralizing data that’s typically scattered across dozens of sources.

The AI Agent enables natural language predictions—marketing analysts can query “which campaigns will drive the most conversions next quarter” without writing SQL or Python. Setup typically requires two weeks, positioning it as a turnkey solution for marketing departments without dedicated data science teams.

Improvado suits organizations prioritizing marketing-specific predictions: campaign performance forecasting, customer lifetime value modeling, and attribution optimization. It won’t replace general-purpose data science platforms but excels within its marketing analytics scope.

For Visual Analytics: Tableau

Tableau’s strength lies in combining predictive capabilities with best-in-class data visualization. Business users can generate forecasts through drag-and-drop interfaces while data scientists integrate custom R and Python models.

The platform supports complex calculations and rich time-series analysis to explore seasonality and trends. Data, visualizations, and dashboards can be embedded into third-party tools, extending predictive insights across the organization.

Tableau fits teams that need to communicate predictions to non-technical stakeholders. The visualization layer makes forecasts accessible and actionable for executives who won’t interpret raw model outputs.

For Enterprise AutoML: DataRobot

DataRobot automates the complete machine learning pipeline—from feature engineering through model deployment and monitoring. The platform tests hundreds of algorithm configurations, ranks them by performance, and explains model behavior through built-in interpretability tools.

Enterprise governance features include audit trails, role-based access controls, and bias detection. Models deploy via REST APIs or batch scoring engines. Automated monitoring tracks performance degradation and triggers retraining workflows.

DataRobot suits large enterprises with diverse predictive use cases but limited data science staffing. Financial services, healthcare, and manufacturing organizations use it for risk modeling, fraud detection, and predictive maintenance.

For Cloud-Native Workflows: AWS SageMaker

Amazon SageMaker provides managed infrastructure for building, training, and deploying machine learning models at scale. The service integrates with AWS data lakes, handles distributed training across GPU clusters, and deploys models with automatic scaling.

Built-in algorithms cover common use cases while custom model support accommodates specialized requirements. SageMaker Studio notebooks enable collaborative development. Model Monitor tracks data drift and prediction quality in production.

Organizations already invested in AWS infrastructure gain seamless integration. Data stored in S3 flows directly into training pipelines. Deployed models call other AWS services without complex networking configuration.

For Open-Source Flexibility: H2O.ai

H2O.ai offers both open-source and commercial predictive analytics platforms. The open-source H2O framework runs on laptops or distributed clusters, supporting popular algorithms through R and Python interfaces.

Driverless AI, the commercial product, automates feature engineering, model selection, and hyperparameter tuning while maintaining interpretability through automatic documentation. The platform generates explanations suitable for regulatory review in banking and healthcare.

H2O.ai fits organizations that value open-source flexibility while needing enterprise support for production deployments. The hybrid approach allows experimentation with free tools before committing to commercial licenses.

For Statistical Analysis: IBM SPSS

IBM SPSS remains dominant in academic research, healthcare, and government sectors where classical statistical techniques and regulatory compliance matter most. The point-and-click interface enables researchers without programming backgrounds to conduct sophisticated analyses.

The platform covers regression modeling, survival analysis, factor analysis, and experimental design. Documentation and validation meet FDA requirements for pharmaceutical trials. Integration with IBM’s broader analytics suite supports enterprise deployments.

SPSS suits organizations where statistical rigor and documentation outweigh cutting-edge machine learning capabilities. It’s less flexible than Python or R but more accessible to non-programmers.

| Platform | Best For | Key Strength | Typical Users |
|---|---|---|---|
| Improvado | Marketing analytics | Unified data + AI Agent | Marketing analysts |
| Tableau | Visual communication | Predictive + visualization | Business analysts |
| DataRobot | Enterprise AutoML | Full automation + governance | Analysts and data scientists |
| AWS SageMaker | Cloud-native ML | AWS integration + scale | Data engineers and scientists |
| H2O.ai | Open-source + commercial | Flexibility + explainability | Data science teams |
| IBM SPSS | Statistical rigor | Regulatory compliance | Researchers and analysts |

Real-World Predictive Analytics Applications

Understanding how organizations apply these tools clarifies their practical value beyond theoretical capabilities.

Healthcare: Patient Risk Stratification

Healthcare organizations use predictive analytics to identify patients at high risk for hospital readmission or emergency room visits. Those applying risk stratification have reported significant reductions in both hospitalizations and emergency room visits.

The models incorporate electronic health records, medication adherence data, social determinants of health, and historical utilization patterns. Clinicians receive risk scores that inform care planning decisions—allocating home health visits, coordinating specialist follow-ups, or adjusting medication regimens before acute episodes occur.

E-Commerce: Customer Lifetime Value Prediction

E-commerce logistics platforms using AWS analytics tools have achieved increases in customer lifetime value through predictive customer segmentation. Marketing teams use these predictions to optimize acquisition spending. High-predicted-LTV customers receive more aggressive retention offers and personalized experiences. The approach shifts budget from broad campaigns to targeted interventions where ROI proves highest.

Media: Content Recommendation and Audience Growth

Media companies have reported significant improvements in customer acquisition through predictive audience modeling. Content recommendation engines use similar techniques—Netflix and Spotify predict which movies or songs individual users will enjoy based on collaborative filtering and content attributes. These predictions directly impact user retention and engagement metrics.

Financial Services: Fraud Detection

Credit card companies deploy real-time predictive models that score each transaction for fraud probability. The systems analyze transaction amount, merchant category, geographic location, time of day, and historical patterns to flag suspicious activity within milliseconds.

These models balance accuracy with false positive rates. Blocking legitimate transactions frustrates customers, while missing fraud attempts costs money. Ensemble methods combining multiple algorithms achieve the precision required for production deployment.
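
The trade-off between catching fraud and blocking legitimate transactions shows up as soon as the flagging threshold moves. The sketch below computes precision, recall, and false positive rate at two thresholds. The scores and labels are invented, and `flag_rates` is a hypothetical helper.

```python
def flag_rates(scores, labels, threshold):
    """Return (precision, recall, false_positive_rate) when flagging scores >= threshold."""
    tp = fp = fn = tn = 0
    for score, is_fraud in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_fraud:
            tp += 1
        elif flagged:
            fp += 1   # legitimate transaction blocked
        elif is_fraud:
            fn += 1   # fraud missed
        else:
            tn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    false_positive_rate = fp / (fp + tn) if fp + tn else 0.0
    return precision, recall, false_positive_rate

# Hypothetical fraud scores and true labels (1 = fraud)
scores = [0.95, 0.80, 0.70, 0.40, 0.35, 0.20, 0.10, 0.05]
labels = [1, 1, 0, 1, 0, 0, 0, 0]

strict = flag_rates(scores, labels, threshold=0.5)
lenient = flag_rates(scores, labels, threshold=0.3)
print("threshold 0.5:", strict)
print("threshold 0.3:", lenient)
```

Lowering the threshold here catches every fraud case but doubles the share of legitimate transactions that get flagged, which is the balance a fraud team has to price explicitly.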

Data breaches carry substantial costs for organizations, making predictive fraud detection systems valuable investments.

Manufacturing: Predictive Maintenance

Industrial operations use sensor data and machine learning to predict equipment failures before they occur. IEEE research demonstrates explainable AI frameworks for predictive tool condition monitoring in high-speed machining, combining prediction accuracy with interpretability for maintenance technicians.

These systems analyze vibration patterns, temperature readings, acoustic signatures, and usage logs to forecast when components will fail. Maintenance schedules shift from fixed intervals to condition-based timing, reducing downtime while extending equipment life.
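
A very simple form of condition monitoring flags sensor readings that sit far from a healthy baseline. The sketch below uses a z-score rule on invented vibration values. It is a stand-in for illustration only, not the method from the IEEE work mentioned above, and the threshold of 3 standard deviations is an assumption.

```python
from statistics import mean, stdev

def flag_anomalies(baseline, readings, z_threshold=3.0):
    """Return (index, reading) pairs whose z-score against the baseline exceeds the threshold."""
    mu, sigma = mean(baseline), stdev(baseline)
    return [(i, r) for i, r in enumerate(readings)
            if abs(r - mu) / sigma > z_threshold]

# Hypothetical vibration amplitudes recorded while the machine was known to be healthy
baseline_vibration = [0.50, 0.52, 0.49, 0.51, 0.50, 0.48, 0.51, 0.49]
# New readings to check against that baseline
new_readings = [0.50, 0.53, 0.61, 0.49]

flagged = flag_anomalies(baseline_vibration, new_readings)
print(flagged)
```

Real predictive maintenance models usually combine several sensors and estimate time to failure rather than flagging single points, but the baseline-versus-current comparison is the common starting point.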

Selecting the Right Predictive Analytics Tool

The predictive analytics market offers both generic solutions applicable across industries and industry-specific tools tailored to particular use cases. Choosing incorrectly wastes time and budget while delaying value realization.

Assess Your Data Maturity

Organizations fall into distinct data maturity stages. Early-stage companies lack sufficient historical data for sophisticated modeling. Mid-stage operations have data but need accessibility improvements. Advanced organizations optimize model performance and deployment infrastructure.

Match tool sophistication to current maturity. Teams without data science expertise shouldn’t start with AWS SageMaker—the learning curve delays results. Business analysts working with established datasets achieve faster wins using AutoML platforms or BI tools with predictive features.

Define Specific Use Cases

Generic “we need predictive analytics” initiatives fail more often than targeted projects. Define concrete use cases: reduce customer churn by 15%, improve lead scoring accuracy by 20%, or optimize inventory levels to reduce holding costs by $500K annually.

Specific objectives clarify tool requirements. Churn prediction needs classification algorithms and customer lifecycle data integration. Inventory optimization requires time series forecasting and supply chain system connectivity. Different use cases favor different platforms.

Evaluate Technical Requirements

Does the platform support your data sources? Can it deploy in your preferred environment (cloud, on-premises, hybrid)? Does it integrate with existing BI dashboards and operational systems?

Technical compatibility determines implementation complexity. A powerful platform that requires extensive custom integration work might deliver less value than a slightly less sophisticated tool with turnkey connectors for your specific tech stack.

Consider Team Capabilities

AutoML platforms enable business analysts to build models without programming. Statistical tools like SPSS suit researchers comfortable with traditional techniques. Python and R require data science expertise but offer maximum flexibility.

Honest assessment of team skills prevents tool-capability mismatches. Alternatively, use tool selection to guide hiring decisions—if business strategy demands advanced modeling, invest in data science talent alongside infrastructure.

Account for Total Cost of Ownership

Subscription pricing represents only part of total cost. Implementation services, training, data engineering, and ongoing maintenance add substantial expenses beyond listed software fees.

Hidden costs emerge during scaling. Some platforms charge per prediction, creating usage-based expenses that balloon with adoption. Others require expensive infrastructure for on-premises deployment. Cloud services accumulate compute and storage charges. Calculate realistic three-year total ownership costs before committing.
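
A back-of-the-envelope calculation makes the gap between list price and total cost visible. Every figure below is a made-up placeholder, and the cost categories simply mirror the ones named above. Substitute real quotes before drawing conclusions.

```python
def three_year_tco(annual_subscription, one_time_implementation, annual_training,
                   annual_maintenance, monthly_predictions, price_per_prediction,
                   yearly_usage_growth=0.0):
    """Sum fixed and usage-based costs over three years."""
    total = one_time_implementation
    for year in range(3):
        # Usage grows each year as adoption spreads
        yearly_predictions = monthly_predictions * 12 * (1 + yearly_usage_growth) ** year
        total += annual_subscription + annual_training + annual_maintenance
        total += yearly_predictions * price_per_prediction
    return total

# Hypothetical inputs, in dollars
total = three_year_tco(
    annual_subscription=60_000,
    one_time_implementation=40_000,
    annual_training=10_000,
    annual_maintenance=15_000,
    monthly_predictions=100_000,
    price_per_prediction=0.01,
    yearly_usage_growth=0.5,
)
subscription_only = 3 * 60_000
print(f"three-year TCO: ${total:,.0f} vs. subscription alone: ${subscription_only:,.0f}")
```

In this invented scenario the subscription is roughly half of the three-year total, and the per-prediction charges more than double between the first and third year.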

Implementation Best Practices

Purchasing a predictive analytics platform represents the beginning, not the end, of the journey. Successful implementations follow consistent patterns.

Start with a Pilot Project

Enterprise-wide rollouts often stall when complexity overwhelms teams. Instead, identify a single high-value use case with clear success metrics and manageable scope.

A three-month pilot proves the technology, builds organizational confidence, and surfaces integration challenges before full deployment. Choose use cases where prediction accuracy directly impacts measurable business outcomes—customer churn, lead conversion rates, or inventory turns.

Establish Data Governance

Predictive models inherit the quality problems in their training data. Establish data governance policies before model development begins. Define data ownership, quality standards, retention policies, and access controls.

Data catalogs document available datasets, their schemas, update frequencies, and known quality issues. This documentation accelerates model development by helping data scientists quickly locate relevant training data.

Build Cross-Functional Teams

Effective predictive analytics requires collaboration between domain experts, data scientists, and IT operations. Domain experts understand business context and interpret model outputs. Data scientists build and validate models. IT teams handle deployment and monitoring infrastructure.

Siloed implementations fail because models don’t reflect business reality or can’t integrate with operational systems. Cross-functional teams prevent these disconnects.

Plan for Model Lifecycle Management

Models degrade over time as real-world conditions change. Customer behaviors shift. Product mixes evolve. Competitors adjust strategies. Last year’s high-performing churn model might underperform today.

Establish processes for monitoring model performance, retraining on fresh data, and deploying updated versions. Automation tools handle routine retraining, but human review prevents automated systems from learning and amplifying anomalous patterns.

Prioritize Model Explainability

Stakeholders won’t act on predictions they don’t understand. Even if a black-box model achieves 95% accuracy, sales teams ignore lead scores without explanations. Executives reject recommendations lacking clear reasoning.

Invest in explainability tools that translate model internals into business language. “This lead scored high because they visited pricing pages three times, work at a target company size, and match our best customer segment” drives action better than “the model predicts 0.87 conversion probability.”

Common Pitfalls and How to Avoid Them

Organizations waste resources on predictive analytics initiatives that deliver minimal value. The patterns below repeat across failed implementations.

Insufficient Training Data

The most sophisticated algorithms can’t extract patterns from inadequate data. Teams sometimes force predictive projects before accumulating sufficient historical records.

Be honest about data readiness. If churn prediction requires 200+ historical churn events but only 50 exist, delay the project while building data infrastructure. Use the interim period for descriptive analytics that documents current patterns.
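A readiness check can be as simple as comparing what exists against stated minimums before any modeling starts. The sketch below uses the rough minimums cited in this article (12+ months and 200+ churn events for churn prediction, 6+ months and 500+ conversions for lead scoring). The function and field names are hypothetical, and the thresholds are rules of thumb to adjust per project.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Requirement:
    min_months: int   # months of history needed
    min_events: int   # positive examples needed (churn events, conversions)


# Rule-of-thumb minimums taken from this article; tune them for your own case.
REQUIREMENTS: Dict[str, Requirement] = {
    "churn": Requirement(min_months=12, min_events=200),
    "lead_scoring": Requirement(min_months=6, min_events=500),
}


def readiness_gaps(use_case: str, months_of_history: int, events: int) -> List[str]:
    """Return a list of unmet requirements; an empty list means ready."""
    req = REQUIREMENTS[use_case]
    gaps = []
    if months_of_history < req.min_months:
        gaps.append(f"need {req.min_months}+ months, have {months_of_history}")
    if events < req.min_events:
        gaps.append(f"need {req.min_events}+ events, have {events}")
    return gaps


if __name__ == "__main__":
    gaps = readiness_gaps("churn", months_of_history=18, events=50)
    print("Ready" if not gaps else "Not ready: " + "; ".join(gaps))
```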

Overemphasis on Accuracy

Pursuing marginally better model accuracy often provides less business value than deploying a good-enough model quickly. The difference between 82% and 85% accuracy rarely justifies six additional months of development.

Define acceptable accuracy thresholds based on business impact. Deploy models that meet thresholds, then iterate based on production performance. Real-world usage often reveals improvements invisible during offline testing.
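One way to tie "good enough" to business impact is to score a holdout set in money terms rather than accuracy alone. The following sketch assumes a binary model, a fixed gain for each correctly flagged case, and a fixed cost for each false alarm. The function names and the example figures are hypothetical.

```python
from typing import Sequence


def net_value(
    labels: Sequence[int],
    scores: Sequence[float],
    threshold: float,
    gain_per_hit: float,
    cost_per_false_alarm: float,
) -> float:
    """Business value of acting on every case scored at or above the threshold."""
    hits = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 1)
    false_alarms = sum(1 for y, s in zip(labels, scores) if s >= threshold and y == 0)
    return hits * gain_per_hit - false_alarms * cost_per_false_alarm


def best_threshold(
    labels: Sequence[int],
    scores: Sequence[float],
    candidates: Sequence[float],
    gain_per_hit: float,
    cost_per_false_alarm: float,
) -> float:
    """Candidate threshold with the highest net value on the holdout set."""
    return max(
        candidates,
        key=lambda t: net_value(labels, scores, t, gain_per_hit, cost_per_false_alarm),
    )


if __name__ == "__main__":
    holdout_labels = [1, 0, 1, 0, 0, 1]
    holdout_scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.2]
    for t in (0.1, 0.5, 0.85):
        value = net_value(holdout_labels, holdout_scores, t, 100.0, 80.0)
        print(f"threshold {t}: net value {value}")
```

Framed this way, the deployment question becomes whether the current model clears a minimum net value, not whether a few more accuracy points are available.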

Neglecting the Last Mile

Building accurate models represents half the challenge. Integrating predictions into operational workflows where they influence decisions completes the value chain.

If lead scores don’t flow into the CRM where salespeople work, those scores won’t change behavior. If churn predictions don’t trigger retention campaigns, they don’t reduce churn. Plan deployment and integration from project inception, not as an afterthought.

Ignoring Change Management

Technical implementation succeeds more often than organizational adoption. Sales teams accustomed to gut-feel decisions resist algorithmic lead scoring. Marketing managers question attribution models that contradict their intuition.

Involve stakeholders early. Demonstrate quick wins that build confidence. Provide explanations that help users understand and trust predictions. Change management determines whether predictive analytics delivers paper value or actual business impact.

The Future of Predictive Analytics Tools

The predictive analytics landscape continues to evolve. Several trends shape where tools are heading.

Increased Automation and Accessibility

AutoML capabilities expand each year, lowering technical barriers to predictive modeling. Natural language interfaces let business analysts ask questions in plain English rather than writing code or SQL.

This democratization extends predictive capabilities beyond specialized data science teams. Domain experts build their own models, accelerating insight generation while freeing data scientists for complex challenges requiring custom approaches.

Real-Time and Streaming Predictions

Batch predictions give way to real-time scoring as infrastructure improves. Fraud detection systems already score transactions in milliseconds. Personalization engines deliver individualized content recommendations in real time as users browse websites.

Streaming data platforms and low-latency model serving infrastructure enable continuous prediction refreshes. Customer risk scores update as new behavioral signals arrive rather than recalculating nightly.
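The difference from nightly batch scoring can be sketched in a few lines: instead of recomputing every score on a schedule, the scorer keeps a small amount of state per customer and updates it when an event arrives. This is a toy example with hypothetical event types, weights, and baseline. A production system would sit behind a streaming platform and a model-serving layer, neither of which is shown.

```python
import math
from collections import defaultdict
from typing import Dict

# Hypothetical per-event adjustments to a customer's risk (log-odds scale).
EVENT_WEIGHTS: Dict[str, float] = {
    "failed_payment": 1.2,
    "support_ticket": 0.8,
    "login": -0.3,
}
BASELINE_LOG_ODDS = -1.5  # assumed starting risk for a customer with no events


class StreamingRiskScorer:
    """Keeps a running log-odds value per customer and rescoring on each event."""

    def __init__(self) -> None:
        self._log_odds: Dict[str, float] = defaultdict(lambda: BASELINE_LOG_ODDS)

    def update(self, customer_id: str, event_type: str) -> float:
        """Apply one event and return the customer's refreshed risk score."""
        self._log_odds[customer_id] += EVENT_WEIGHTS.get(event_type, 0.0)
        return 1.0 / (1.0 + math.exp(-self._log_odds[customer_id]))


if __name__ == "__main__":
    scorer = StreamingRiskScorer()
    for event in ("failed_payment", "support_ticket", "login"):
        print(event, round(scorer.update("customer-42", event), 3))
```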

Explainable AI Emphasis

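Regulatory pressure and business requirements drive demand for interpretable models. The EU’s GDPR restricts decisions based solely on automated processing and entitles individuals to meaningful information about the logic involved, which is often described as a right to explanation. Model risk management in banking requires documented model logic.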

Explainability techniques advance alongside model sophistication. Researchers develop methods that maintain prediction accuracy while enabling transparency about decision logic. This balance becomes a competitive differentiator as organizations face scrutiny over algorithmic decisions.

Integration of Multiple Data Types

Early predictive models used structured data—customer demographics, transaction records, behavioral logs. Modern platforms increasingly incorporate unstructured data like text, images, and video.

Natural language processing extracts signals from customer service transcripts and social media posts. Computer vision analyzes product images and manufacturing defects. Multi-modal models combine structured and unstructured inputs for richer predictions.

Frequently Asked Questions

What’s the difference between predictive analytics tools and business intelligence platforms?

Business intelligence platforms focus on descriptive and diagnostic analytics: reporting what happened and why through dashboards, visualizations, and historical analysis. Predictive analytics tools forecast what will happen next using statistical models and machine learning algorithms. Many modern BI platforms now include basic predictive features, but dedicated predictive platforms offer more sophisticated modeling techniques and automated workflows.

Do I need data scientists to use predictive analytics tools?

It depends on the platform and use case. AutoML tools like DataRobot and industry-specific platforms enable business analysts to build models without programming expertise. These platforms automate algorithm selection and feature engineering. For custom models, advanced techniques, or novel use cases, data science skills remain valuable. Organizations often start with accessible tools and add technical expertise as requirements grow more sophisticated.

How much data do I need before starting predictive analytics?

Minimum requirements vary by use case. Lead scoring typically needs 6+ months of history and 500+ conversions. Churn prediction requires 12+ months of customer lifecycle data and 200+ churn events. Simpler forecasting might work with less data, while complex models need more. Quality matters as much as quantity—clean, consistent data with relevant variables delivers better results than massive volumes of poor-quality records.

What industries benefit most from predictive analytics?

Nearly every industry applies predictive analytics, though use cases vary. Financial services use it for fraud detection, credit scoring, and risk management. Healthcare organizations predict patient outcomes and readmission risk. Retailers forecast demand and optimize pricing. Manufacturing applies it to predictive maintenance. Marketing teams across industries use it for customer lifetime value prediction and campaign optimization. The common thread involves decisions where forecasting future outcomes creates competitive advantage.

How accurate are predictive analytics models?

Accuracy varies widely based on data quality, problem complexity, and modeling approach. Simple forecasting might achieve 70-80% accuracy, while sophisticated ensemble methods on clean data can reach 90%+. However, perfect accuracy is neither achievable nor necessary: models that improve decision-making compared to intuition alone deliver value. Real-world performance often differs from testing accuracy, so continuous monitoring is needed to confirm that models stay effective as conditions change.
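A headline accuracy figure is also worth reading alongside precision and recall, because accuracy alone can look strong on rare-event problems. The sketch below uses made-up data (10 fraud cases in 1,000 transactions) and a hypothetical helper function to show a model that never flags fraud.

```python
from typing import Dict, Optional, Sequence


def classification_summary(
    labels: Sequence[int], predictions: Sequence[int]
) -> Dict[str, Optional[float]]:
    """Accuracy, precision, and recall for binary labels (1 = event, 0 = no event)."""
    tp = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    tn = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 0)
    fp = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(labels),
        # Precision and recall are undefined when their denominators are zero.
        "precision": tp / (tp + fp) if (tp + fp) else None,
        "recall": tp / (tp + fn) if (tp + fn) else None,
    }


if __name__ == "__main__":
    # Illustrative data: 10 fraud cases among 1,000 transactions.
    labels = [1] * 10 + [0] * 990
    never_flags_fraud = [0] * 1000
    print(classification_summary(labels, never_flags_fraud))
```

In this example the model is right on 99% of transactions while catching none of the fraud, which is why the metric should match the decision the model supports.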

Can predictive analytics work with small datasets?

Small datasets limit modeling options and accuracy. Techniques like regularization reduce overfitting when training data is scarce. Transfer learning applies patterns learned from large datasets to smaller domain-specific problems. That said, reliable estimates require a minimum sample size: predicting rare events from 20 historical examples won’t produce reliable forecasts. Organizations with limited data should start with simpler analytical approaches while building data infrastructure for future predictive capabilities.
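For readers who want to see what regularization does mechanically, here is a minimal sketch of ridge (L2) regression with a single feature and no intercept, which assumes the data has already been centered. The penalty term pulls the fitted slope toward zero, so a handful of noisy points has less influence on the model. The function name and the toy numbers are illustrative only.

```python
from typing import Sequence


def ridge_slope(x: Sequence[float], y: Sequence[float], penalty: float) -> float:
    """Slope minimizing sum((y - b*x)^2) + penalty * b^2 for one feature."""
    sum_xy = sum(xi * yi for xi, yi in zip(x, y))
    sum_xx = sum(xi * xi for xi in x)
    return sum_xy / (sum_xx + penalty)


if __name__ == "__main__":
    x = [1.0, 2.0, 3.0]
    y = [2.0, 4.0, 6.0]
    for penalty in (0.0, 1.0, 14.0):
        print(f"penalty={penalty}: slope={ridge_slope(x, y, penalty):.3f}")
```

With a penalty of zero this reduces to ordinary least squares. Larger penalties trade a little bias for lower variance, which is usually the right trade when examples are scarce.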

What’s the typical ROI timeline for predictive analytics implementations?

Pilot projects often demonstrate value within 3-6 months when use cases have clear metrics and sufficient data. Enterprise-wide implementations take 12-18 months as organizations build infrastructure, establish governance, and integrate predictions into operational workflows. ROI depends on use case—churn reduction and fraud prevention deliver measurable financial impact quickly, while strategic forecasting provides longer-term benefits. Organizations that start with focused pilots and expand incrementally achieve faster returns than those attempting comprehensive transformations immediately.

Conclusion

Predictive analytics tools transform how organizations make decisions by replacing intuition with data-driven forecasts. The technology stack ranges from accessible AutoML platforms that democratize modeling to sophisticated programming environments that give data scientists maximum flexibility.

Successful implementations match tool capabilities to organizational maturity, team skills, and specific use cases. Early-stage companies benefit from turnkey solutions with minimal technical requirements. Advanced data teams leverage custom modeling environments for specialized applications.

The key lies in starting focused—identify a single high-value use case, ensure data readiness, select appropriate tools, and demonstrate value before expanding. Organizations that follow this approach achieve measurable business outcomes: reduced churn, improved conversion rates, optimized inventory levels, and prevented fraud losses.

Real-world results validate the investment. Healthcare providers reduced hospitalizations significantly through predictive analytics. E-commerce platforms increased customer lifetime value through predictive approaches. Media companies grew acquisition substantially using predictive audience modeling. These outcomes become achievable when the right tools meet sufficient data and clear business objectives.

The predictive analytics landscape will continue to evolve. Automation expands access. Real-time capabilities enable instantaneous decisions. Explainability techniques build trust in algorithmic recommendations. Organizations that invest now in predictive capabilities build competitive advantages that compound as data accumulates and models improve.

Ready to implement predictive analytics? Start by auditing existing data, defining measurable objectives, and selecting one pilot use case where forecasting delivers clear business value. The tools exist—successful outcomes depend on thoughtful implementation aligned with organizational reality.