Quick Summary: AI will not replace radiologists, but it will fundamentally transform their workflow. Radiologists who integrate AI into their practice will have significant advantages over those who resist adoption. The technology excels at specific detection tasks but lacks the clinical judgment, patient interaction skills, and contextual reasoning that define radiology expertise.

The debate started in earnest back in 2016 when Geoffrey Hinton, a pioneer in deep learning, made a bold prediction: medical schools should stop training radiologists because AI would surpass them within five years. That deadline has come and gone.

Yet here’s what actually happened. Radiology residency programs remain competitive. The American College of Radiology continues investing in education and workforce development. And radiologists are busier than ever.

So what gives? Was Hinton wrong, or is the disruption just delayed?

The truth is more nuanced than either the doomsayers or the skeptics suggest. AI has made remarkable progress in medical imaging, but it hasn’t replaced a single radiologist position through pure automation. Instead, something more interesting is happening.

Why the Replacement Debate Persists

Understanding why this question won’t die requires looking at what AI can genuinely accomplish in radiology today versus what the technology still struggles with fundamentally.

Pattern recognition in medical images is exactly the kind of task where deep learning excels. Feed a neural network thousands of chest X-rays labeled for pneumonia, and it learns to spot the subtle opacities that indicate infection. Train it on mammograms, and it identifies suspicious masses with impressive accuracy.

But here’s the thing. Radiology isn’t just pattern recognition.

A radiologist integrates imaging findings with patient history, lab results, prior studies, and clinical context. They communicate with referring physicians to clarify questions. They perform image-guided procedures that require real-time decision-making and manual dexterity. They catch the unexpected finding that wasn’t part of the clinical question.

According to research published in RSNA journals, when AI advice was incorrect, physicians still relied heavily on that advice, with diagnostic accuracy dropping significantly. A November 2024 study in Radiology found that when AI pointed to specific areas of interest in X-rays, radiologists sometimes over-relied on those suggestions even when they were wrong. This reveals something crucial: AI is a powerful tool, but it’s not an autonomous replacement.

The U.S. Department of Health and Human Services released a strategic plan for AI in healthcare in January 2025 that echoes recommendations from the American College of Radiology. The plan prioritizes AI innovation alongside trustworthiness, democratization of access, and workforce development. Notice what’s absent? Any suggestion that healthcare workers will become obsolete.

What AI Can Reliably Do in Radiology Today

Let’s get specific about where AI actually delivers value in clinical practice right now, not in speculative futures.

Detection of Specific Abnormalities

AI excels at flagging specific findings on imaging studies. FDA-cleared tools can identify fractures on skeletal X-rays, pneumothorax on chest radiographs, intracranial hemorrhage on CT scans, and pulmonary nodules on chest CT.

According to clinical validation data, some AI tools like AZtrauma achieve up to 83% reduction in turnaround time at healthcare centers for detecting fractures, dislocations, and joint effusions on X-rays. That’s a genuine workflow improvement.

AZchest, another CE- and FDA-cleared product, helps detect abnormalities on chest radiographs. These aren’t experimental technologies—they’re actively deployed in clinical settings.

Research published in late 2025 shows that currently, 70% of MRI workflow steps and 64% of CT steps have available AI solutions. Compare that to interventional radiology, where only 55% of workflow steps have AI support. Diagnostic imaging has matured faster than interventional procedures, which makes sense given the different complexity levels.

Worklist Prioritization and Triage

One of AI’s most practical applications is triaging studies for radiologist review. An algorithm can scan incoming studies and flag those with critical findings—a massive pulmonary embolism, acute stroke, or traumatic brain injury—moving them to the front of the reading queue.

This doesn’t replace radiologist interpretation. It ensures that time-sensitive cases get urgent attention, potentially saving lives while reducing the stress of managing overwhelming worklists.
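To make the triage idea concrete, here is a minimal sketch of a reading queue that moves AI-flagged studies forward. It is an illustration only: the urgency labels, the `Worklist` class, and the accession numbers are hypothetical, not taken from any real PACS or vendor product. Note that the AI flag only changes the order. Every study still reaches a radiologist.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count

# Hypothetical urgency ranks for illustration; a lower rank is read sooner.
URGENCY = {"critical": 0, "urgent": 1, "routine": 2}


@dataclass(order=True)
class Study:
    rank: int                               # urgency rank derived from the AI flag
    arrival: int                            # tie-breaker: earlier arrivals first
    accession: str = field(compare=False)   # made-up study identifier
    ai_flag: str = field(compare=False)


class Worklist:
    """Priority queue of studies awaiting radiologist interpretation."""

    def __init__(self):
        self._heap = []
        self._arrivals = count()

    def add(self, accession, ai_flag="routine"):
        # Unknown flags fall back to routine rather than jumping the queue.
        rank = URGENCY.get(ai_flag, URGENCY["routine"])
        heapq.heappush(
            self._heap, Study(rank, next(self._arrivals), accession, ai_flag)
        )

    def next_study(self):
        # Returns the most urgent, earliest-arrived study, or None if empty.
        return heapq.heappop(self._heap) if self._heap else None


worklist = Worklist()
worklist.add("CXR-001")                          # no AI flag, stays routine
worklist.add("CT-HEAD-002", ai_flag="critical")  # e.g. suspected hemorrhage
worklist.add("CTPA-003", ai_flag="critical")     # e.g. suspected embolism
worklist.add("MSK-004", ai_flag="urgent")

reading_order = []
while (study := worklist.next_study()) is not None:
    reading_order.append(study.accession)

print(reading_order)
# ['CT-HEAD-002', 'CTPA-003', 'MSK-004', 'CXR-001']
```

The arrival counter keeps studies of equal urgency in first-come order, so a flagged case never pushes aside an equally urgent case that has waited longer.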

Real talk: radiologists face crushing workloads. The American College of Radiology’s 2025 report on workforce burden emphasizes that easing this pressure requires acknowledging trends and finding overlooked solutions. AI triage represents one such solution.

Quantitative Analysis and Measurements

AI can perform repetitive quantitative tasks faster and more consistently than humans. Measuring tumor dimensions for oncology response criteria, calculating ejection fraction on cardiac imaging, or volumetric analysis of brain structures—these are perfect AI applications.

The technology doesn’t get fatigued. It doesn’t have day-to-day measurement variability. For longitudinal studies tracking disease progression, AI-generated measurements can be more reproducible than manual techniques.
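The arithmetic behind two of these measurements is simple, which is part of why it automates well. The sketch below uses the standard ejection fraction formula and a plain percent change from baseline. The function names and example values are made up for illustration, and a real tool would derive the inputs from segmented images rather than typed-in numbers.

```python
def ejection_fraction(edv_ml, esv_ml):
    """Ejection fraction in percent: (EDV - ESV) / EDV * 100.

    edv_ml: end-diastolic volume in mL
    esv_ml: end-systolic volume in mL
    """
    if edv_ml <= 0 or not 0 <= esv_ml <= edv_ml:
        raise ValueError("expected 0 <= ESV <= EDV and EDV > 0")
    return (edv_ml - esv_ml) / edv_ml * 100.0


def percent_change(baseline_mm, followup_mm):
    """Percent change in a lesion diameter relative to baseline."""
    if baseline_mm <= 0:
        raise ValueError("baseline must be positive")
    return (followup_mm - baseline_mm) / baseline_mm * 100.0


# Illustrative values only, not patient data.
ef = ejection_fraction(edv_ml=120.0, esv_ml=50.0)
change = percent_change(baseline_mm=40.0, followup_mm=28.0)

print(f"Ejection fraction: {ef:.1f}%")       # Ejection fraction: 58.3%
print(f"Diameter change:   {change:+.1f}%")  # Diameter change:   -30.0%
```

The formulas are deterministic, so the reproducibility advantage comes from consistent inputs: the same segmentation method applied the same way at every time point.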

Report Generation Assistance

Multimodal generative AI models can now draft radiology reports directly from imaging data. Research from December 2025 shows these models are becoming increasingly capable, though they require careful validation.

The key word? Draft. These AI-generated reports need radiologist review and editing. Clinical precision matters enormously—a missed qualifier or inappropriate certainty level can mislead referring physicians and harm patients.

But wait. If AI can generate a competent first draft that a radiologist then refines, that’s a productivity multiplier, not a replacement.

What AI Cannot Do in Radiology

Now for the limitations that prevent AI from functioning as an autonomous radiologist, regardless of how sophisticated the algorithms become.

Clinical Context Integration

Imaging never exists in isolation. A small lung nodule means something completely different in a 25-year-old nonsmoker versus a 65-year-old with a smoking history. The same imaging finding might be critical in one clinical scenario and incidental in another.

AI models trained on images struggle with this contextual reasoning. They don’t naturally integrate patient age, symptoms, laboratory values, medication lists, surgical history, and family history the way a radiologist does automatically.

According to research on AI generalizability published in late 2025, AI models often fail when deployed in different clinical settings than where they were trained. After reviewing studies addressing diverse diagnostic tasks, researchers found only six met inclusion criteria for robust external validation. Models that performed brilliantly at one institution stumbled when applied at hospitals with different patient populations, imaging protocols, or scanner equipment.

That’s a generalizability problem that human radiologists don’t have. Training at one institution doesn’t prevent a radiologist from practicing competently at another.

Unexpected Findings and Comprehensive Analysis

Here’s a scenario AI handles poorly: a CT scan ordered to evaluate abdominal pain that incidentally shows an early lung cancer at the lung bases, or subtle bone lesions suggesting metastatic disease, or an abdominal aortic aneurysm.

Task-specific AI looks for what it was trained to find. A model optimized for detecting kidney stones won’t flag that concerning pancreatic mass. Radiologists perform comprehensive examinations, reviewing every structure visible in the study regardless of the clinical indication.

Community discussions among radiologists consistently emphasize this point. AI might outperform humans on narrow detection tasks, but radiology requires broad vigilance across dozens of potential findings simultaneously.

Interventional Procedures

Image-guided biopsies, drain placements, tumor ablations, and vascular interventions require manual skills, real-time decision-making, and patient interaction. Some research shows AI systems can localize moving catheters and provide guidance, but the gulf between “assistance” and “autonomous performance” remains vast.

The 2025 systematic review noted that only 55% of interventional workflow steps currently have AI solutions, compared to 70% for MRI. The gap makes sense—interventional radiology combines imaging interpretation with procedural expertise that’s nowhere close to automation.

Communication and Collaboration

Radiologists regularly field calls from emergency department physicians seeking guidance on imaging protocols, surgeons discussing operative planning, oncologists reviewing tumor response, and primary care doctors clarifying report findings.

These consultations require nuanced medical knowledge, communication skills, and collaborative judgment. They’re fundamentally human interactions that AI cannot replicate.

Look, this matters more than it might seem. Radiology isn’t a back-room image-reading service. It’s a clinical specialty deeply embedded in multidisciplinary patient care.

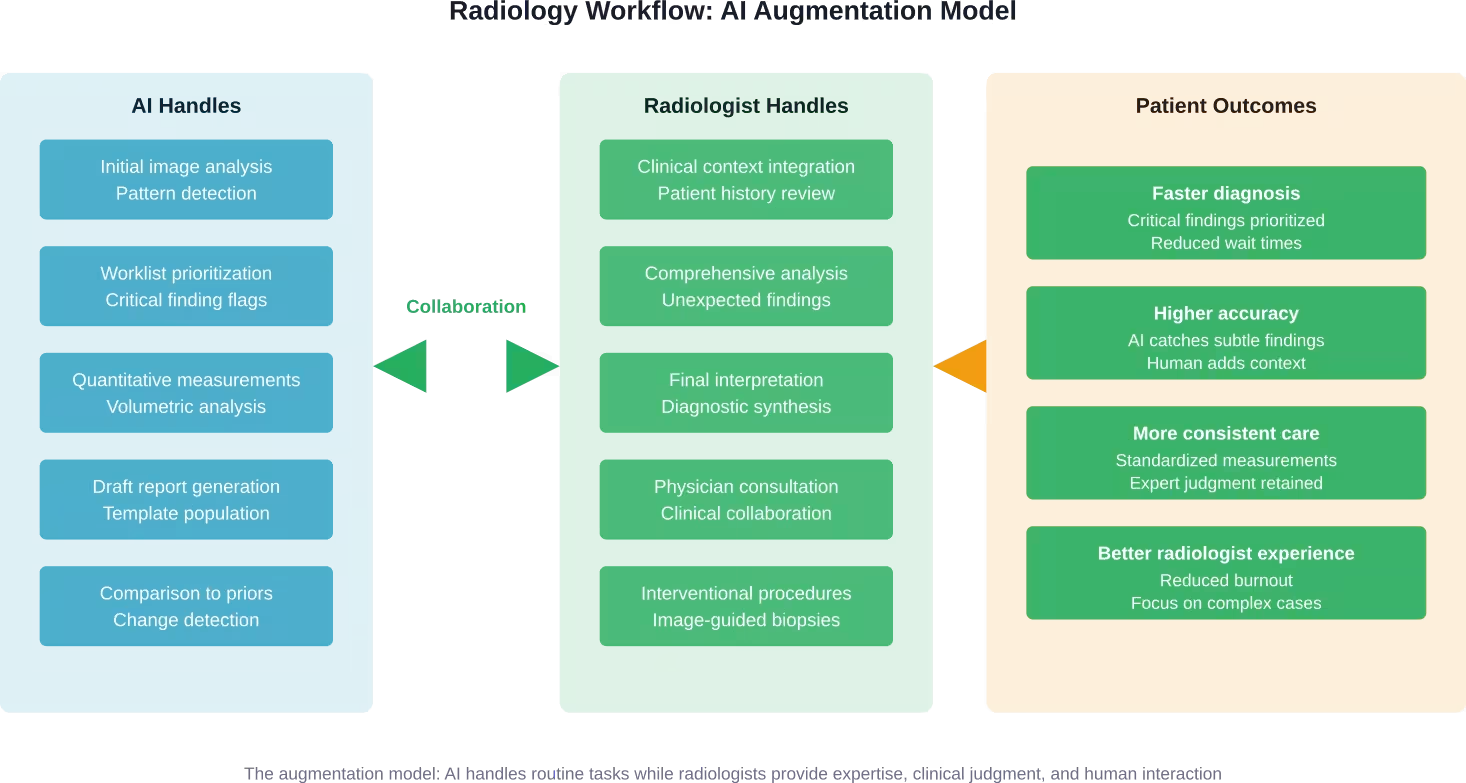

The Real Transformation: Augmentation Not Replacement

So what’s actually happening in radiology departments deploying AI? The pattern emerging is collaboration, not competition.

AI handles the routine detection and measurement tasks. Radiologists focus cognitive energy on complex cases, integrating findings with clinical context, and communicating with care teams. The workload becomes more manageable without eliminating the expertise radiologists provide.

According to a Brookings Institution analysis from October 2025, labor market data shows no AI jobs apocalypse, at least for now. An October 2025 analysis citing Anthropic data found that about half of Claude chatbot usage was for augmenting human work, while 77% of business API deployments aimed at automation. That’s worth monitoring, but current employment data remains stable.

Translation? Companies are experimenting with automation, but actual job displacement hasn’t materialized yet in most sectors, including healthcare.

The American College of Radiology has positioned itself at the forefront of AI integration, not AI resistance. The organization’s 2024 Impact Report emphasizes implementing new technologies successfully while supporting the workforce through education and advocacy.

That’s the pragmatic response. Radiologists who embrace AI tools will have competitive advantages. Radiologists who ignore these technologies risk becoming less efficient than peers who adopt them.

But that’s different from saying AI will replace radiologists entirely.

Clinical Validation: The Critical Differentiator

Not all AI tools are created equal. The radiology AI market has exploded, with the FDA’s AI-Enabled Medical Device List containing numerous authorized products. But authorization doesn’t guarantee clinical utility.

Clinical validation means the AI tool has been tested in real-world clinical settings with diverse patient populations and proven to deliver the claimed benefits. This goes beyond technical performance metrics measured on curated datasets.

Research published in late 2025 examining AI generalizability found that most AI models struggle when deployed outside their training environments. After analyzing studies from PubMed and Embase, only six met rigorous inclusion criteria for external validation. The gap between laboratory performance and real-world effectiveness is substantial.

What should healthcare systems look for when evaluating AI radiology tools?

| Validation Criterion | What to Look For | Why It Matters |
|---|---|---|
| External validation | Testing at multiple institutions beyond where the model was developed | Proves the model generalizes to different patient populations and imaging protocols |
| Prospective studies | Real-time deployment data, not just retrospective analysis | Demonstrates actual workflow integration and clinical impact |
| Regulatory clearance | FDA clearance or CE marking for intended clinical use | Confirms safety and effectiveness review by regulatory authorities |
| Peer-reviewed publications | Independent research published in reputable medical journals | Provides transparent methodology and results scrutiny |
| Clinical outcomes data | Evidence of improved patient outcomes, not just detection metrics | Shows the tool actually benefits patients, not just radiologists |
| Implementation support | Training, workflow integration assistance, ongoing technical support | Determines whether adoption succeeds or fails in practice |

The American College of Radiology Data Science Institute has established programs to evaluate AI tools and provide guidance to the radiology community. These resources help cut through marketing hype and identify genuinely validated solutions.

The Workforce Reality

Despite a decade of predictions about AI replacing radiologists, workforce data tells a different story. Radiology residency positions remain competitive. Radiologist salaries remain strong. Demand for imaging services continues growing faster than the radiologist workforce can expand.

The American College of Radiology’s 2025 report on workforce burden notes that easing the pressure requires acknowledging trends and finding overlooked solutions—AI augmentation being one of them.

Here’s the thing about workload. Imaging volume has increased dramatically over decades as technology improved and clinical applications expanded. CT scans that took hours in the 1980s now take minutes. MRI capabilities have exploded. Nuclear medicine and molecular imaging have advanced. Each advance creates more studies for radiologists to interpret.

AI doesn’t eliminate radiologist positions. It helps radiologists manage volume that would otherwise be overwhelming.

Community discussions among radiologists reveal a pragmatic attitude. Many acknowledge AI will transform workflows significantly. Few expect it to eliminate their profession. Most are focused on learning to use AI tools effectively rather than resisting them.

That’s probably the smartest approach.

Radiology Education Adapts

According to American College of Radiology resources, radiology education must adapt to a changing landscape. Medical schools are rethinking curricula to prepare aspiring radiologists for a future where AI is ubiquitous.

This doesn’t mean training fewer radiologists. It means training differently.

Future radiologists need to understand AI capabilities and limitations. They need skills in AI implementation, validation, and oversight. They need stronger communication and consultation abilities as routine detection becomes automated and their role shifts toward complex interpretation and clinical collaboration.

The Radiological Society of North America and American College of Radiology now offer extensive educational resources on AI. Residency programs are incorporating AI literacy into training. The specialty is evolving, not disappearing.

What the Next Five Years Likely Holds

Predicting technology is risky. But based on current trends and technical realities, some projections seem reasonable for the 2026-2031 timeframe.

AI tools will become more sophisticated and more widely deployed. Adoption will accelerate as clinical validation accumulates and integration improves. Radiologists will increasingly work with AI assistance as standard practice rather than experimental novelty.

Generative AI report drafting will mature, potentially handling more of the routine dictation burden. But radiologist review and editing will remain necessary for the foreseeable future given the stakes involved in diagnostic accuracy.

Interventional radiology will see AI-assisted guidance systems improve, but the manual procedure components will remain firmly in human hands. Robotics might eventually change this equation, but that’s likely a 10+ year timeline.

The generalizability problem will gradually improve as models train on more diverse datasets and techniques like federated learning enable training across institutions without data sharing. But this remains a fundamental challenge that won’t disappear quickly.

Regulatory frameworks will continue evolving. The FDA has proposed approaches for regulating adaptive AI that continues learning after deployment. The U.S. Department of Health and Human Services’ January 2025 strategic plan signals continued policy development around AI trustworthiness, democratization of access, and workforce implications.

And workforce demand? Imaging volume growth will likely continue outpacing radiologist supply growth. AI augmentation will help close the gap, but radiologist positions aren’t disappearing.

The radiologists most likely to thrive are those who embrace AI tools, develop expertise in their application, and focus on the uniquely human aspects of their specialty that algorithms can’t replicate.

Choosing AI Tools: A Practical Framework

For radiology departments considering AI adoption, clinical validation should drive decision-making over marketing promises.

- Start with specific pain points: Is the emergency department overwhelmed with head CT volume? Look for validated intracranial hemorrhage detection tools. Is mammography screening creating backlogs? Investigate breast imaging AI with proven track records.

- Evaluate tools based on the validation criteria discussed earlier: Demand evidence of external validation, prospective studies, and clinical outcomes data. Request references from other institutions that have implemented the technology.

- Consider workflow integration carefully: Even excellent AI that disrupts radiologist workflow won’t deliver benefits if nobody uses it. Implementation support matters enormously.

- Monitor performance after deployment: AI tools should improve over time with updates, but they also need ongoing oversight to catch potential degradation or bias.

- Maintain appropriate skepticism about grandiose claims: If a vendor promises their AI will “replace radiologists” or “make human interpretation obsolete,” that’s a red flag indicating they don’t understand the clinical reality.
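For the post-deployment monitoring step, one simple approach is to compare a tool's recent sensitivity and specificity against the figures from its validation period, using radiologist-confirmed reads as the reference. The sketch below assumes that setup. The counts, the `degraded` helper, and the 5-percentage-point alert threshold are all hypothetical choices for illustration, not a recommended standard.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """AI output compared against the radiologist-confirmed final read."""
    tp: int  # AI flagged, finding confirmed
    fp: int  # AI flagged, no finding
    tn: int  # AI silent, no finding
    fn: int  # AI silent, finding confirmed (a miss)

    @property
    def sensitivity(self):
        positives = self.tp + self.fn
        return self.tp / positives if positives else float("nan")

    @property
    def specificity(self):
        negatives = self.tn + self.fp
        return self.tn / negatives if negatives else float("nan")


def degraded(baseline, current, max_drop=0.05):
    """Names of metrics that fell more than max_drop below baseline.

    max_drop is an assumed threshold; a department would set its own.
    """
    return [
        name
        for name in ("sensitivity", "specificity")
        if getattr(baseline, name) - getattr(current, name) > max_drop
    ]


# Made-up counts: validation period versus the most recent quarter.
baseline = ConfusionCounts(tp=90, fp=20, tn=880, fn=10)
current = ConfusionCounts(tp=78, fp=25, tn=875, fn=22)

alerts = degraded(baseline, current)
print(f"sensitivity {baseline.sensitivity:.2f} -> {current.sensitivity:.2f}")
print(f"specificity {baseline.specificity:.3f} -> {current.specificity:.3f}")
print("needs review:", alerts)
# needs review: ['sensitivity']
```

A fixed threshold like this ignores sample size. With small monthly volumes, confidence intervals or a control chart would be a safer way to separate real degradation from noise.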

Start With Real Radiology Tasks Before Assuming Replacement

AI in radiology is often framed as a full replacement, but the reality is more limited. It works best on clearly defined tasks – reviewing imaging data, flagging anomalies, or helping prioritize cases – while interpretation and clinical decisions remain with specialists.

AI Superior takes a practical approach to this. Instead of treating AI as a standalone tool, they work with organizations to map specific workflows, identify where automation makes sense, and build custom solutions that integrate into existing systems. The focus is on making AI usable in day-to-day operations, not just proving that it can work in isolation.

If you are evaluating AI in radiology, it is more useful to test it on real processes rather than rely on general claims. Reach out to AI Superior and explore what parts of your workflow can be improved without changing how specialists actually work.

The Role of Professional Organizations

The American College of Radiology and Radiological Society of North America have positioned themselves as guides through the AI transformation rather than obstacles to it.

The ACR’s AI policy recommendations to the federal government over the past decade have emphasized responsible development, appropriate validation, and radiologist involvement in oversight. The HHS strategic plan released in January 2025 echoes many of these recommendations.

These organizations provide educational resources, establish standards for AI validation, and advocate for policies that support both innovation and patient safety. They represent a pragmatic middle ground between techno-optimism and Luddite resistance.

For radiologists navigating this transition, engagement with professional organizations provides valuable guidance and community support.

Addressing Concerns and Misconceptions

Several persistent misconceptions about AI in radiology deserve a direct response.

- Misconception: AI is already better than radiologists at reading images.

- Reality: AI outperforms radiologists on specific narrow detection tasks in controlled studies. Comprehensive image interpretation integrating clinical context remains firmly in human territory. According to the November 2024 RSNA study, when AI advice was incorrect, diagnostic accuracy dropped significantly because physicians over-relied on the suggestions. That’s not superior performance—it’s a tool that needs expert supervision.

- Misconception: Once AI reaches human-level performance on detection, radiologists become obsolete.

- Reality: Detection is one component of radiology work. Integration, communication, procedures, unexpected findings, and clinical reasoning remain beyond AI capabilities for the foreseeable future.

- Misconception: Hospitals are already replacing radiologist positions with AI.

- Reality: According to Brookings Institution labor market analysis from October 2025, employment data shows stability, not disruption, from AI. No healthcare systems are eliminating radiologist positions and replacing them with AI systems.

- Misconception: Radiologists who resist AI will be fine because the technology is overhyped.

- Reality: AI capabilities are real and improving. Radiologists who develop AI literacy and integration skills will have advantages over those who don’t. The technology is neither replacement nor hype—it’s a powerful tool that changes workflows.

Frequently Asked Questions

Will AI replace radiologists in the next 10 years?

No. AI will transform radiology workflows significantly, but the specialty requires clinical judgment, contextual reasoning, patient interaction, and procedural skills that AI cannot replicate. Radiologists who integrate AI tools effectively will replace those who don’t, but AI won’t eliminate the profession.

What percentage of radiology tasks can AI currently handle?

According to 2025 research, approximately 70% of MRI workflow steps and 64% of CT workflow steps have available AI solutions, compared to 55% for interventional radiology. However, “available AI solutions” doesn’t mean full automation—most applications provide assistance rather than autonomous performance.

Are radiology residency positions becoming less competitive due to AI concerns?

No. Radiology residency programs remain competitive despite a decade of AI predictions. The workforce faces growing imaging volumes that exceed radiologist supply, creating continued strong demand even as AI augmentation improves efficiency.

How accurate are AI radiology tools compared to human radiologists?

Accuracy varies by specific task and tool. For narrow detection tasks like identifying fractures or pulmonary nodules, validated AI tools can match or exceed human performance. However, comprehensive image interpretation, clinical context integration, and identification of unexpected findings remain areas where human radiologists significantly outperform AI. A 2024 study found 92.8% diagnostic accuracy when AI advice was correct, but significantly lower accuracy when AI suggestions were wrong, demonstrating the need for expert oversight.

What should radiologists learn to stay relevant as AI advances?

Radiologists should develop AI literacy, understanding how models work and their limitations. Skills in AI validation, implementation, and oversight will become increasingly valuable. Strengthening communication, consultation, and clinical reasoning abilities will differentiate radiologists as routine detection becomes more automated. Procedural skills in interventional radiology remain firmly human territory.

Can AI radiology tools work across different hospitals and patient populations?

Generalizability remains a significant challenge. Research from 2025 found that most AI models struggle when deployed outside their training environments. Only six studies met rigorous criteria for external validation across different clinical settings. Models trained at one institution may perform poorly at others with different patient demographics, imaging protocols, or equipment. This is a fundamental limitation that human radiologists don’t face.

How do I know if an AI radiology tool is clinically validated?

Look for external validation at multiple institutions, prospective deployment studies, FDA clearance or CE marking, peer-reviewed publications in reputable journals, clinical outcomes data showing patient benefit, and references from institutions using the tool. The American College of Radiology Data Science Institute provides resources for evaluating AI tools. Marketing claims should be verified through independent evidence.

Conclusion: Partnership, Not Replacement

The question “will AI replace radiologists” assumes a competition that doesn’t match reality. AI is a tool, not a competitor. It handles specific tasks exceptionally well while struggling with others that humans find natural.

The transformation happening in radiology mirrors what occurred when PACS replaced film, or when CT and MRI emerged. Technology changes workflows and requires new skills, but it doesn’t eliminate the need for expert physicians.

Radiologists provide clinical judgment that integrates imaging findings with patient context. They perform procedures requiring manual skill and real-time decision-making. They communicate with care teams to guide diagnosis and treatment. They catch unexpected findings that weren’t part of the clinical question. They bring human judgment to situations where algorithmic certainty is inappropriate.

AI excels at pattern recognition, quantitative analysis, and tireless consistency. It can triage worklists, flag critical findings, measure structures precisely, and draft reports. It makes radiologists more efficient without making them obsolete.

The radiologists who thrive will be those who embrace these tools while developing the uniquely human skills that AI cannot replicate. Clinical reasoning, communication, procedural expertise, and comprehensive analysis will become even more valuable as routine detection becomes automated.

Organizations like the American College of Radiology and Radiological Society of North America are guiding the profession through this transition with education, standards, and advocacy. The HHS strategic plan for AI in healthcare emphasizes innovation alongside trustworthiness and workforce development.

So no, AI won’t replace radiologists. But it will absolutely transform the specialty. And radiologists who master AI-augmented practice will have significant advantages over those who resist the change.

The future of radiology is partnership between human expertise and algorithmic power. That future is already arriving.