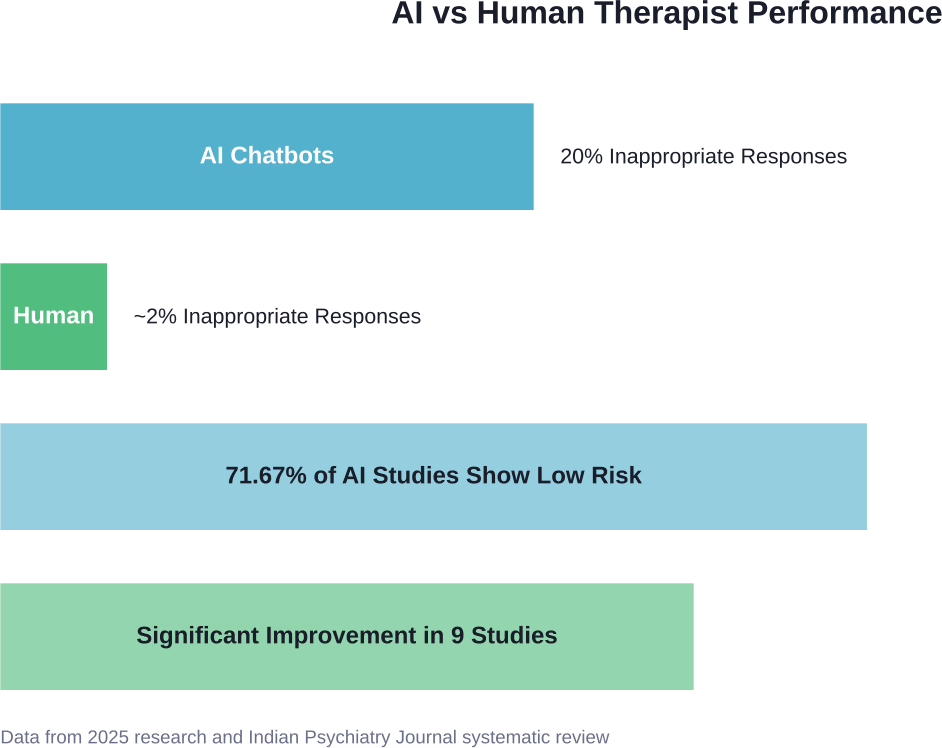

Quick Summary: AI will not replace therapists entirely, but will serve as a complementary tool for mental health care. Research shows AI chatbots respond inappropriately 20% of the time and cannot replicate the essential human connection and therapeutic relationship that drives meaningful psychological change.

The mental health field stands at a crossroads. AI-powered therapy chatbots are multiplying, promising 24/7 access to mental health support without waitlists or high costs. For therapists watching this unfold, the question isn’t just academic—it’s personal.

Will machines replace the work that takes years of training and emotional intelligence to master?

Here’s the thing though—the answer isn’t as simple as yes or no. Recent research from leading institutions reveals a more nuanced picture, one where AI shows both surprising promise and critical limitations that can’t be ignored.

What Research Actually Shows About AI Therapy Tools

When Stanford researchers tested AI chatbots designed for mental health support, the chatbots responded inappropriately 20% of the time, or one in five responses. Human therapists, by contrast, rarely produced inappropriate responses in the same scenarios.

But wait. That’s not the whole story.

A systematic review published in the Indian Psychiatry Journal in 2025 examined AI-enabled psychological interventions for depression and anxiety. The results? Most of the included studies (71.67%) were rated low risk of bias, which suggests the evidence is reasonably reliable for specific, bounded tasks. Nine studies involving 1,082 college student participants demonstrated statistically significant improvements in anxiety and depression scores when using AI chatbots.

So which is it—helpful or harmful?

The answer depends entirely on how these tools are deployed. AI therapy tools work well for specific applications: symptom tracking, cognitive behavioral therapy exercises, crisis text line support, and psychoeducation. They struggle—sometimes dangerously—with nuanced situations requiring clinical judgment, safety assessments, and complex trauma work.

The Numbers Don’t Lie

According to a study published in BMC Psychiatry (and available via PubMed), misdiagnosis rates for severe psychiatric disorders reached 39.16% in Ethiopia, with higher rates among non-specialists. This underscores a critical point: even human practitioners struggle with diagnostic accuracy without proper training.

AI systems face similar challenges, but without the ability to course-correct through empathy and clinical intuition.

Where AI Actually Helps Mental Health Care

Despite the limitations, AI tools aren’t useless. Far from it.

Research published in JMIR Mental Health in 2025 examined cognitive behavioral therapy-based chatbots for depression and anxiety. The findings highlighted several areas where AI tools demonstrate genuine value:

- Accessibility and scalability: AI chatbots provide immediate access to mental health support without appointment scheduling, geographic constraints, or insurance barriers. For underserved populations facing stigma or limited provider availability, this matters enormously.

- Consistent availability: Mental health crises don’t respect business hours. AI tools offer 24/7 support when human therapists simply can’t be available.

- Lower cost barriers: While pricing varies across platforms, AI therapy tools typically cost far less than traditional therapy sessions, making mental health support accessible to individuals who can’t afford standard rates.

- Reduced administrative burden: Generative AI shows promise in handling paperwork, note-taking, and treatment plan documentation, freeing clinicians to focus on direct patient care.

Real-World Applications That Work

CBT-based chatbots appear particularly effective for structured interventions. These systems guide individuals through evidence-based exercises: thought records, behavioral activation, exposure hierarchies, and relaxation techniques.

For college students—a population experiencing surging mental health challenges—AI chatbots targeting DSM-5-defined conditions showed measurable improvements. Studies documented reductions in GAD-7 anxiety scores and depression symptom measures.

That said, variability in study designs and heterogeneity of outcome reporting pose challenges for broad generalizability. The evidence base remains promising but incomplete.
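For readers unfamiliar with the GAD-7 measure mentioned above, here is a minimal sketch of how a symptom-tracking tool might score it. The questionnaire has seven items, each answered 0 to 3, for a total of 0 to 21, with conventional severity bands starting at 5, 10, and 15. The function names and the sample responses are illustrative assumptions, not taken from any of the cited studies or from a specific product.

```python
from typing import Sequence

# Standard GAD-7 structure: 7 items, each answered 0-3, total 0-21.
ITEM_COUNT = 7
MAX_ITEM_SCORE = 3

# Conventional severity bands as (lower bound, label), highest first.
SEVERITY_BANDS = ((15, "severe"), (10, "moderate"), (5, "mild"), (0, "minimal"))


def gad7_total(responses: Sequence[int]) -> int:
    """Validate one completed questionnaire and return its total score."""
    if len(responses) != ITEM_COUNT:
        raise ValueError(f"expected {ITEM_COUNT} responses, got {len(responses)}")
    if any(not 0 <= r <= MAX_ITEM_SCORE for r in responses):
        raise ValueError("each response must be between 0 and 3")
    return sum(responses)


def gad7_severity(total: int) -> str:
    """Map a total score to its conventional severity band."""
    if not 0 <= total <= ITEM_COUNT * MAX_ITEM_SCORE:
        raise ValueError("total must be between 0 and 21")
    for lower_bound, label in SEVERITY_BANDS:
        if total >= lower_bound:
            return label
    raise AssertionError("unreachable: the bands cover 0-21")


# Hypothetical responses at intake and at a later check-in (made-up data).
baseline = [2, 2, 1, 2, 1, 2, 2]
follow_up = [1, 1, 0, 1, 1, 1, 1]

before = gad7_total(baseline)
after = gad7_total(follow_up)
print(f"baseline:  {before} ({gad7_severity(before)})")
print(f"follow-up: {after} ({gad7_severity(after)})")
print(f"change:    {after - before}")
```

A score like this only tracks self-reported symptoms over time. Interpreting the change is still a clinical judgment.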

Find Where AI Fits In Therapy Before Expecting Big Changes

AI is sometimes discussed as a replacement for therapists, but in reality it plays a supporting role. It can help structure information, surface patterns in data, or assist with routine tasks, while the core of therapy – trust, context, and human connection – stays unchanged.

AI Superior focuses on making AI usable inside real workflows. They work with organizations to define clear use cases, then develop and integrate custom solutions that align with how services are already delivered, instead of forcing a completely new setup.

If you are considering AI in therapy or mental health services, it makes more sense to start small and test it in practice. Contact AI Superior to explore what can be improved without disrupting how your team works today.

The Irreplaceable Human Elements

Now, this is where it gets interesting.

Therapy isn’t just about delivering correct information or following treatment protocols. The therapeutic relationship itself—the connection between therapist and client—drives much of the healing process.

Research consistently demonstrates that therapeutic alliance predicts treatment outcomes more reliably than specific intervention techniques. Clients who feel genuinely understood, validated, and supported show better progress regardless of the therapy modality used.

Can AI replicate that?

Not yet. Probably not ever, in the fullest sense.

According to research published in Frontiers in Psychology, the necessity of human interaction in mental healthcare remains paramount. AI systems lack several critical capacities:

| Human Therapist Capability | AI Current Status | Why It Matters |
|---|---|---|
| Genuine empathy and emotional attunement | Simulated responses only | Clients detect authenticity; trust depends on it |
| Complex safety assessments | Limited by training data | Suicide risk evaluation requires nuanced judgment |
| Cultural competency and context | Often lacks cultural specificity | Effective therapy must honor cultural backgrounds |
| Ethical decision-making in gray areas | Struggles with ambiguous situations | Mental health rarely presents clear-cut scenarios |
| Adaptive flexibility | Constrained by programming | Humans pivot based on moment-to-moment feedback |

Real talk: therapy is hard. It’s supposed to be. Growth happens in discomfort, and skilled therapists know how to push clients productively while maintaining safety.

AI chatbots can’t sense when someone’s body language contradicts their words. They can’t pick up on the pregnant pause before answering a question. They don’t feel the weight of someone’s pain or celebrate the breakthrough moment when insight clicks.

What Mental Health Experts Actually Say

The American Psychological Association has published ethical guidance for AI in professional practice. Their position acknowledges both potential benefits and serious concerns about unregulated AI mental health tools.

Field experts consistently emphasize a crucial distinction: AI as augmentation versus AI as replacement.

Augmentation means using AI to enhance human clinical work—automated screening tools, session note transcription, symptom tracking between appointments, psychoeducational content delivery. This approach harnesses technology’s strengths while preserving human oversight.

Replacement means substituting AI for human therapists entirely. Most experts view this as inappropriate for comprehensive mental health care, particularly for individuals with serious mental illness, complex trauma, or active safety concerns.

Practitioners' views on AI tools range from cautious optimism to deep concern. Many see them as potential allies for expanding access but worry about quality control, liability issues, and the devaluation of clinical expertise.

The Stigma Problem

Here’s something the Stanford research highlighted: unregulated AI chatbots can actually contribute to mental health stigma and provide dangerous advice.

Without proper oversight, AI systems may reinforce harmful stereotypes, offer overly simplistic solutions to complex problems, or fail to recognize when professional intervention is urgently needed.

One chatbot response analyzed in the study essentially told someone experiencing significant distress that everyone feels that way sometimes—a response that minimizes genuine suffering and could discourage help-seeking.

The Social Connection Crisis

Look, there’s a broader context worth examining.

Social isolation in the United States has reached alarming levels. According to Brookings, only 13% of adults now have 10 or more close friends—down from 33% in 1990. Young people face particularly severe isolation despite living in the most technologically connected moment in history.

What happens when AI chatbots replace real human connection in therapeutic contexts?

Some researchers worry this could accelerate social isolation rather than address it. If people increasingly turn to AI for emotional support instead of building human relationships—therapeutic or otherwise—the long-term mental health consequences could be significant.

Humans are wired for connection. Therapy works partly because it provides a corrective emotional experience: being truly seen and accepted by another person. An AI system, no matter how sophisticated, can’t fulfill that fundamental human need.

Regulation and Oversight Concerns

Here’s where things get messy.

The mental health AI field remains largely unregulated. Companies can launch therapy chatbots without clinical oversight, evidence-based protocols, or safety testing comparable to what pharmaceutical or medical device companies face.

This creates serious risks. AI systems trained on biased data may provide different quality care to different populations. Chatbots without proper safeguards might miss warning signs of serious conditions or provide advice that worsens symptoms.

The American Psychological Association and other professional organizations have called for ethical guidelines and regulatory frameworks, but implementation lags far behind technological development.

Practitioners and patients alike need protection: clear standards for what AI mental health tools should and shouldn’t do, transparency about limitations, and accountability when systems cause harm.

The Economic Reality

Research from Brookings on AI labor displacement reveals concerning trends. More than 30% of all workers could see at least 50% of their occupation’s tasks disrupted by generative AI technology. Clerical roles and middle- to higher-paid occupations face particular exposure.

But does this apply to therapists?

Partially. Entry-level therapeutic support roles—crisis line volunteers, peer counselors, intake coordinators—could face displacement by AI tools. Highly specialized clinical work requiring advanced training seems safer, at least for now.

The broader workforce pattern shows worker retraining programs often struggle to help displaced employees successfully transition. Training program participation rates vary significantly across states, ranging from 14% to 96% for classroom training.

For mental health professionals, staying relevant likely means embracing AI as a tool while deepening uniquely human competencies: complex case conceptualization, cultural humility, ethical reasoning, and relationship-building.

What the Future Actually Looks Like

So where does this leave us?

The most realistic scenario isn’t total replacement, but transformation. AI tools will increasingly handle routine tasks: symptom screening, appointment scheduling, session notes, homework assignments, psychoeducation modules, and between-session support.

Human therapists will focus on what machines can’t do: building therapeutic alliances, navigating complex clinical presentations, addressing trauma and attachment wounds, making nuanced safety decisions, and providing the authentic human presence that facilitates healing.

This hybrid model could actually expand mental health access. AI tools serve as a first line of support, filtering to human clinicians when complexity or risk increases. More people get some level of support; those who need human intervention still receive it.
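The escalation logic in this hybrid model can be sketched in a few lines. This is only an illustration under stated assumptions: the `ScreeningResult` fields, the `Route` options, and the 0.8 confidence threshold are hypothetical names and values chosen for the example, and real triage criteria would have to be set and supervised by clinicians. The one design choice worth noting is that anything uncertain or unrecognized goes to a human by default.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    SELF_GUIDED_AI = "self_guided_ai"
    CLINICIAN_REVIEW = "clinician_review"
    URGENT_HUMAN_CONTACT = "urgent_human_contact"


@dataclass(frozen=True)
class ScreeningResult:
    """Hypothetical output of an upstream screening step."""
    safety_concern: bool    # any flagged safety concern
    symptom_severity: str   # "minimal", "mild", "moderate", or "severe"
    ai_confidence: float    # 0.0-1.0, how sure the tool is of its own reading


# Severity levels the sketch treats as suitable for AI-only support.
LOW_SEVERITY = {"minimal", "mild"}


def route(result: ScreeningResult, min_confidence: float = 0.8) -> Route:
    """Pick a care pathway, defaulting to human review whenever in doubt."""
    # Safety concerns always go straight to a person.
    if result.safety_concern:
        return Route.URGENT_HUMAN_CONTACT
    # Moderate, severe, or unrecognized severity is escalated.
    if result.symptom_severity not in LOW_SEVERITY:
        return Route.CLINICIAN_REVIEW
    # Low confidence in the tool's own reading is also escalated.
    if result.ai_confidence < min_confidence:
        return Route.CLINICIAN_REVIEW
    return Route.SELF_GUIDED_AI


print(route(ScreeningResult(False, "mild", 0.93)))
print(route(ScreeningResult(False, "moderate", 0.97)))
print(route(ScreeningResult(True, "minimal", 0.99)))
```

Ordering the checks from highest to lowest risk means a safety flag can never be overridden by a mild severity label or a high confidence value.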

Research on generative AI-enabled therapy support tools shows promising results when AI augments human-led group therapy. The combination improves both efficacy and adherence to mental health care.

That’s the sweet spot: AI handling what it does well, humans providing what only humans can offer.

Frequently Asked Questions

Can AI therapy chatbots really help with depression and anxiety?

Research shows AI chatbots can provide measurable improvements for mild to moderate depression and anxiety, particularly when delivering structured cognitive behavioral therapy exercises. Nine studies involving 1,082 college student participants demonstrated statistically significant improvements in anxiety and depression scores. However, these tools work best as supplements to professional care, not replacements, especially for severe conditions.

Are AI therapy apps safe to use?

Safety varies dramatically. Research found AI chatbots responded inappropriately 20% of the time, and unregulated systems can contribute to stigma or provide dangerous advice. For basic psychoeducation and symptom tracking, many AI tools are reasonably safe. For crisis intervention, complex mental health conditions, or trauma work, human professional support remains essential. Always verify whether an app has clinical oversight.

Will therapists lose their jobs to AI?

Complete replacement is unlikely. AI will likely transform therapeutic work rather than eliminate it. Entry-level support roles face higher displacement risk, while specialized clinical positions requiring complex judgment, cultural competency, and relationship-building skills remain difficult for AI to replicate. Therapists who integrate AI tools while deepening uniquely human competencies will likely thrive.

What can AI do better than human therapists?

AI excels at 24/7 availability, immediate access without appointment delays, lower cost barriers, consistent delivery of structured interventions, symptom tracking over time, administrative task automation, and scalability to underserved populations. These strengths make AI valuable for expanding mental health access, particularly for initial support and ongoing skill practice between therapy sessions.

What can’t AI replace in therapy?

AI cannot provide genuine empathy, authentic human connection, complex safety assessments for suicide or harm risk, nuanced cultural competency, ethical decision-making in ambiguous situations, trauma processing requiring attunement, or the therapeutic relationship itself—which research shows predicts treatment outcomes more than specific techniques. The human capacity to sense unspoken emotions and adapt flexibly remains beyond current AI capabilities.

How accurate are AI mental health diagnoses?

AI diagnostic accuracy varies widely depending on the condition and training data quality. Research shows even human clinicians face high misdiagnosis rates (39.16% for severe psychiatric disorders in one study), particularly among non-specialists. AI systems face similar challenges without the ability to course-correct through clinical intuition. AI diagnostic tools should support, not replace, comprehensive professional evaluation.

Should I use an AI chatbot instead of seeing a therapist?

For immediate support, psychoeducation, or practicing coping skills when professional help isn’t accessible, AI chatbots can provide value. However, they shouldn’t replace professional therapy for moderate to severe mental health conditions, trauma, active safety concerns, or situations requiring diagnostic assessment. Think of AI tools as supplemental support rather than substitutes for comprehensive care.

The Bottom Line

Will AI replace therapists? No—but it will change how therapy works.

The evidence points toward a collaborative future where technology expands access while human expertise remains central to effective mental health care. AI handles routine tasks and provides immediate support; humans navigate complexity and build healing relationships.

This isn’t about choosing between human therapists and AI tools. It’s about thoughtfully integrating both to serve more people more effectively.

For therapists worried about obsolescence: deepen your relationship skills, cultural competency, and ability to handle complex cases. These uniquely human capacities become more valuable as AI handles routine work.

For individuals seeking mental health support: use AI tools as stepping stones or supplements, but don’t avoid human connection when facing serious challenges. The therapeutic relationship itself has healing power that algorithms can’t replicate.

The mental health field stands at a crossroads, yes—but the path forward likely runs through collaboration rather than replacement. Technology and humanity working together may finally make quality mental health care accessible to everyone who needs it.