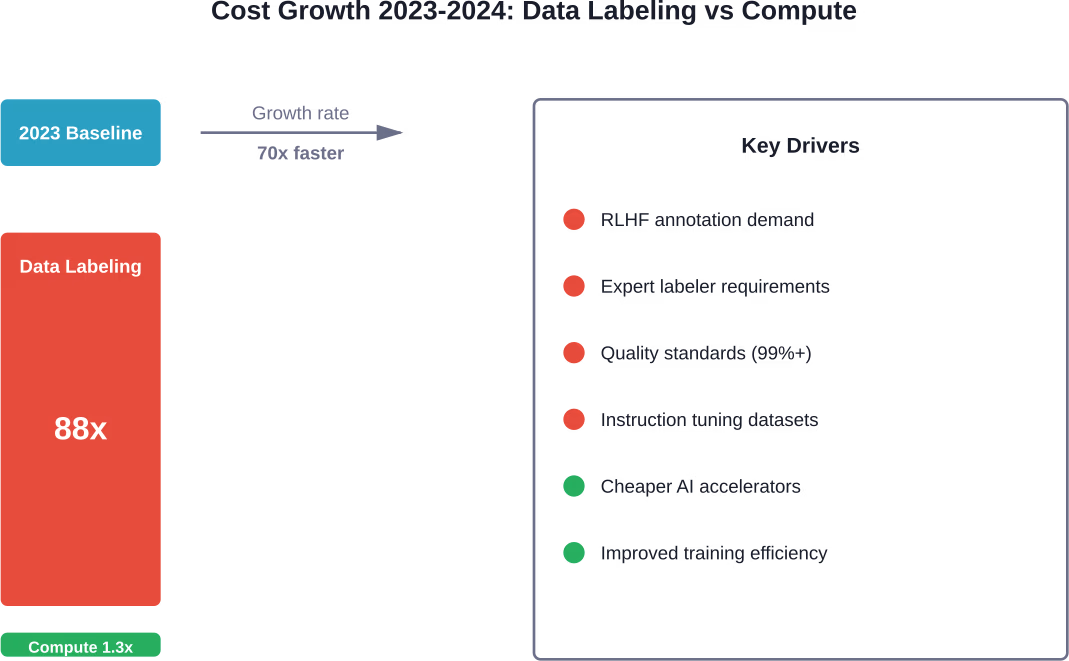

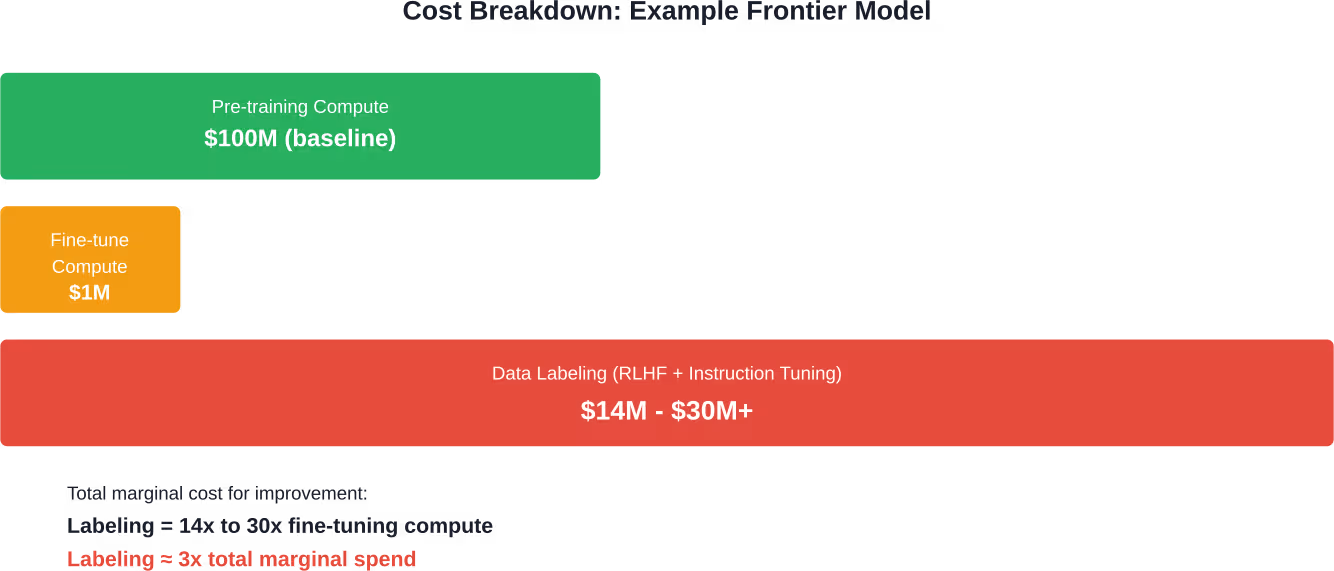

Quick Summary: LLM data labeling costs have surged dramatically, with industry revenue growing 88x from 2023 to 2024 while compute costs rose only 1.3x. Human annotation for post-training (RLHF, instruction tuning) now costs roughly 3x more than marginal compute expenses for frontier models. Expert labeling for a single project can range from $60,000 to $14 million, making data labeling the emerging bottleneck in AI development.

The conventional wisdom about AI costs is wrong.

For years, compute dominated conversations about LLM training budgets. GPUs, cloud infrastructure, electricity—these were the usual suspects when discussing what makes AI expensive. According to sources cited in competitor content, GPT-4’s training cost an estimated $78-100+ million, with Gemini Ultra 1.0 reaching $192 million.

But here’s what’s changed: data labeling has quietly overtaken compute as the primary marginal cost driver for frontier models.

Recent analysis shows that the revenue of major data-labeling companies jumped 88x between 2023 and 2024, while training compute costs rose only 1.3x. When researchers calculated annual revenue across Scale, Surge, Mercor, Labelbox, and similar firms, then compared it to marginal compute spending for models like GPT-4o, Claude Sonnet-3.5, Mistral-Large, Grok-2, and Llama-3-405B, the numbers told a clear story: labeling costs now run approximately 3x higher than marginal compute costs.

This shift reflects how modern LLMs achieve their capabilities. Post-training techniques like supervised fine-tuning (SFT) and reinforcement learning from human feedback (RLHF) have become essential for producing models that actually work in production. Unlike pre-training on raw internet data, these methods require carefully curated datasets created by humans—often domain experts.

And expert time doesn’t come cheap.

The Real Numbers Behind LLM Data Labeling Costs

Case studies reveal just how expensive human annotation has become.

Take MiniMax-M1, which needed less than $1 million in compute to reach Claude-Opus-4 quality. Or consider SkyRL-SQL, which matched GPT-4o performance on text-to-SQL tasks using just $360 of training compute.

These aren’t outliers. They represent the new economics of LLM development.

According to Scale AI’s authoritative guide on data labeling, achieving extremely high quality (99%+) on a large dataset requires a large workforce (1,000+ data labelers on any given project). With highly trained resource pools and sophisticated automated workflows, specialized companies deliver high-quality labels, but minimal cost is relative when human expertise drives the process.

What Drives LLM Data Labeling Expenses?

Several factors combine to push annotation costs higher.

Post-Training Dependency

Modern LLMs don’t work straight out of pre-training. They need refinement through supervised fine-tuning and reinforcement learning techniques. These processes absolutely require human-labeled data—preferably from experts who understand nuanced evaluation criteria.

A research paper on cost-aware LLM-based online dataset annotation (arXiv:2505.15101) highlights how recent advances in large language models have enabled automated labeling, but human oversight remains critical for quality assurance. The tension between automation potential and quality requirements keeps costs elevated.

Expert Labeler Requirements

Not just anyone can label LLM training data effectively. Different tasks demand different expertise levels:

- Basic classification tasks might work with general crowdsourced labor

- Code evaluation requires experienced software developers

- Medical query responses need domain specialists with relevant credentials

- Legal reasoning tasks demand actual legal professionals

- Mathematical problem verification needs subject matter experts

Expert hourly rates reflect their specialized knowledge. Domain specialists commanding $50-200+ per hour dramatically change project economics compared to basic labeling at $10-15 per hour.

Quality Standards and Multi-Stage Review

Achieving 99%+ annotation accuracy requires layered quality control. Industry-standard workflows often include:

- Initial labeling by trained annotators

- Secondary review by senior labelers

- Spot-checking by domain experts

- Consensus mechanisms for ambiguous cases

- Continuous quality monitoring and feedback loops

Each layer adds cost but proves necessary for production-grade datasets.

Dataset Scale Requirements

Effective post-training demands substantial data volumes. RLHF implementations might need tens of thousands of comparison judgments. Instruction tuning datasets often contain hundreds of thousands of examples across diverse task categories.

Scale matters for generalization. Larger, more diverse datasets help models handle edge cases and unusual query patterns—but they multiply annotation costs proportionally.

How Leading Companies Price Data Labeling Services

The data labeling industry has matured into a multi-billion dollar sector with specialized players.

According to industry analysis, major firms like Scale, Surge, Mercor, and Labelbox have seen explosive revenue growth. Leading AI companies like OpenAI, Google, Meta, and Anthropic are each spending on the order of $1 billion per year on human-provided training data and feedback to achieve competitive model capabilities.

Pricing models vary by provider and project complexity:

| Pricing Model | Best For | Typical Range |

|---|---|---|

| Per-item pricing | Simple classification tasks | $0.01 – $2.00 per label |

| Per-hour rates | Complex annotation requiring expertise | $15 – $200+ per hour |

| Project-based quotes | Large-scale initiatives with defined scope | $50,000 – $10M+ |

| Managed service contracts | Ongoing labeling needs with quality SLAs | Custom enterprise pricing |

Real talk: published rates rarely tell the full story. Enterprise contracts involve volume discounts, quality guarantees, turnaround time commitments, and specialized tooling access—all of which affect final costs.

Comparing Data Labeling vs Compute Costs in Practice

The cost structure of LLM development has fundamentally shifted.

Pre-training still consumes significant compute resources. Training frontier models on trillions of tokens requires massive GPU clusters running for weeks or months. But here’s the thing—compute costs have become more predictable and, relatively speaking, more manageable.

Cloud providers offer reserved capacity and long-term contracts that lock in rates. GPU efficiency continues improving. Training techniques like mixed-precision arithmetic and gradient checkpointing reduce resource requirements.

Data labeling, meanwhile, scales differently. Human capacity doesn’t double every 18 months. Expert availability remains constrained. Quality control can’t be infinitely parallelized.

The economics become stark when examining specific model development cycles. For models targeting specialized domains (legal, medical, scientific), the expertise premium compounds the problem. Finding qualified annotators takes time. Training them on annotation guidelines takes more time. Maintaining consistency across large teams requires sophisticated management.

Cost Variations by Annotation Task Type

Not all labeling tasks carry the same price tag.

RLHF Preference Labeling

Reinforcement learning from human feedback requires annotators to compare model outputs and indicate preferences. Tasks involve:

- Reading two or more model responses to the same prompt

- Evaluating quality across multiple dimensions (accuracy, helpfulness, safety, tone)

- Selecting the superior response or ranking multiple options

- Sometimes providing written justification for choices

Complexity varies wildly. Simple preference judgments on straightforward queries might cost $2-5 per comparison. Nuanced evaluations requiring domain expertise can run $20-100+ per comparison set.

With datasets requiring 50,000-200,000 comparisons, costs quickly reach six or seven figures.

Instruction Tuning Dataset Creation

Building instruction-following datasets demands different work. Annotators create:

- Diverse prompts spanning multiple task categories

- High-quality reference responses demonstrating desired behavior

- Variations covering edge cases and different phrasings

- Multi-turn conversations showing contextual understanding

Creating original, high-quality instruction-response pairs takes significantly more time than simple preference labeling. Rates of $10-50 per instruction pair are common for general tasks. Specialized domains (coding, mathematics, scientific reasoning) can command $50-200+ per example.

Classification and Entity Recognition

Traditional NLP labeling tasks remain relevant for specialized applications:

- Named entity recognition in domain-specific texts

- Sentiment classification with fine-grained categories

- Intent classification for conversational systems

- Relationship extraction from unstructured documents

These tasks generally cost less than RLHF or instruction tuning—often $0.05-$2.00 per item depending on complexity and required expertise.

Multimodal Annotation

Vision-language models need labeled image-text pairs, video annotations, and cross-modal alignment data. Complexity escalates with:

- Detailed image captioning requiring comprehensive descriptions

- Object detection and segmentation in complex scenes

- Video understanding tasks spanning temporal reasoning

- 3D annotation for spatial understanding

Computer vision labeling has its own cost structure, often higher than pure text annotation due to specialized tooling requirements and cognitive load.

Strategies to Reduce LLM Data Labeling Costs

Smart teams optimize annotation budgets without sacrificing quality.

Active Learning and Selective Annotation

Why label everything when models can identify their own weak points?

Active learning frameworks query the model to find examples where it’s most uncertain or where additional data would provide maximum value. This targets annotation effort where it matters most, potentially reducing labeling volume by 50-80% while maintaining comparable model performance.

The arXiv paper on cost-aware LLM-based online dataset annotation explores how automated systems can strategically select which examples require human labeling, balancing cost constraints against quality goals.

LLM-Assisted Annotation

Large language models can bootstrap the labeling process. Workflows include:

- Using GPT-4 or Claude to generate initial labels

- Human reviewers validate and correct LLM outputs

- Focusing expert time on difficult cases or quality assurance

- Building consensus mechanisms between LLM and human judgments

This approach can cut costs by 40-70% compared to full human annotation while maintaining quality standards, though careful validation remains essential to catch systematic LLM errors.

Tiered Labeling Workflows

Match annotator expertise to task complexity:

- Junior labelers handle straightforward cases at lower rates

- Senior annotators tackle ambiguous or challenging examples

- Domain experts focus exclusively on specialized content

- Automated quality checks route items to appropriate tiers

Sophisticated orchestration maximizes cost efficiency while preserving quality on items that truly need expert attention.

Dataset Reuse and Synthetic Augmentation

Every new project doesn’t need to start from zero. Organizations can:

- Build core datasets once and reuse across multiple model iterations

- License existing high-quality datasets when available

- Generate synthetic variations of labeled examples

- Share datasets across related projects within the organization

But watch out—dataset licensing can itself become expensive as providers recognize data’s strategic value. Recent deals between AI labs and content providers have reached hundreds of millions of dollars for access to proprietary text sources.

Cut Wasted Labeling Spend Before You Train

Data quality is where most LLM costs quietly grow. Fixing labeling issues after training is expensive, and poorly prepared datasets lead to more iterations, not better models. This is where AI Superior typically fits in – not as a labeling vendor, but as the layer that makes sure labeling actually translates into usable model performance.

They handle data collection, cleaning, and preprocessing as part of the model pipeline, so datasets are structured for training from the start, not patched later. That includes aligning data with the use case, reducing noise, and preparing it for fine-tuning workflows that don’t waste compute or budget. If your labeling costs keep increasing but model quality doesn’t, the issue is usually upstream. Fix the pipeline before you scale it – reach out to AI Superior and get clarity on what’s actually driving your costs.

The Strategic Implications for AI Development

Data labeling costs reshape how organizations approach LLM development.

Smaller companies face a challenging reality. Without resources to fund massive annotation efforts, competing with well-funded labs becomes difficult. This creates potential consolidation pressure in the AI industry—those with deeper pockets can afford better datasets and, consequently, better models.

The economics favor certain architectural choices too. Small language models (SLMs) with 1-15 billion parameters require less training data and can achieve strong performance on focused domains. While frontier LLMs cost over $100 million to train, SLMs reduce cost-per-million queries by over 100x and demand proportionally smaller annotation budgets for fine-tuning.

Organizations increasingly evaluate build-vs-buy decisions through a data lens. Fine-tuning existing foundation models often makes more economic sense than training from scratch—you’re essentially paying annotation costs without the massive pre-training compute bill.

This has accelerated fine-tuning adoption. According to analysis of model deployment patterns, fine-tuning can save 60-90% compared to full pre-training while achieving comparable task-specific performance.

| Approach | Compute Cost | Data Labeling Cost | Best For |

|---|---|---|---|

| Pre-training from scratch | $50M – $200M+ | Minimal (unsupervised) | Frontier model development |

| Fine-tuning foundation model | $10K – $1M | $50K – $15M | Domain specialization |

| Prompt engineering only | Near zero | $5K – $50K (few-shot examples) | Rapid prototyping, simple tasks |

| Small model training | $5K – $500K | $10K – $500K | Edge deployment, cost-sensitive apps |

Industry Trends and Future Outlook

What happens next with data labeling economics?

Growth rates will likely moderate from the extraordinary 88x jump seen from 2023 to 2024. Much of that spike came from rapid scaling at specific companies like Mercor. But the absolute dollar amounts continue climbing as more organizations pursue LLM development and existing labs iterate on model improvements.

Research directions that could change the economics include:

- Automated verification mechanisms: If models can reliably self-check or if cheap verification methods emerge, the cost of generating large labeled datasets could drop substantially. This remains an active research area.

- Reward models tolerating noisy data: Current RLHF implementations require high-quality preference labels. Techniques that work with lower-quality or partially automated labels would reduce costs.

- Constitutional AI and self-improvement techniques: Methods where models improve through self-critique and revision could reduce human annotation dependency.

- Better data efficiency: Research continues on extracting more value from less labeled data through improved algorithms and training techniques.

The question facing the industry: can automation offset growing quality demands and expanding use cases?

Community discussions on professional forums highlight how data labeling has become a real bottleneck in AI development. Organizations report spending months recruiting and training annotator teams. Quality inconsistencies cause project delays. Expert availability limits project timelines more than compute scheduling.

Practical Cost Planning for LLM Projects

Teams planning LLM initiatives should budget realistically for data labeling.

For a mid-scale project targeting domain-specific improvement:

- RLHF dataset (20,000 comparisons, moderate complexity): $100K – $400K

- Instruction tuning dataset (10,000 examples, general domain): $80K – $300K

- Quality assurance and validation (20% of data): $36K – $140K

- Project management and tooling: $25K – $100K

Total annotation budget: $241K – $940K

Fine-tuning compute for the same project might run $50K – $200K. The annotation costs dominate—exactly what the industry data predicts.

For larger initiatives targeting frontier capabilities, budgets scale accordingly. Projects with 100,000+ labeled examples and expert annotator requirements easily reach $5-15 million in labeling costs alone.

Choosing Data Labeling Providers

Selecting the right annotation partner significantly impacts both cost and quality.

Evaluation criteria should include:

- Quality track record: Request case studies and reference customers working on similar tasks. Ask about achieved accuracy rates and quality control mechanisms.

- Annotator expertise: Verify the provider can access domain experts relevant to the project. Generic crowdsourcing platforms struggle with specialized content.

- Tooling capabilities: Modern annotation platforms offer efficiency features that reduce per-item costs—intelligent task routing, automated quality checks, collaboration features, and integration with ML pipelines.

- Scalability: Can the provider ramp up to handle surge capacity? Do they have sufficient workforce depth for large or urgent projects?

- Security and compliance: For sensitive data, verify appropriate certifications, data handling protocols, and contractual protections.

- Pricing transparency: Beware providers who won’t discuss pricing until deep in the sales process. Cost predictability matters for project planning.

Leading providers in the space have built specialized workflows optimized for LLM training data. According to Scale AI’s resources, they maintain large, trained labeling workforces and proprietary tooling designed specifically for ML use cases.

The Data Economics Research Agenda

Academic and industry researchers are beginning to treat data as its own economic field.

A research agenda published on arXiv (The Economics of AI Training Data) notes that despite data’s central role in AI production, it remains the least understood input. As AI labs exhaust public data and turn to proprietary sources through deals reaching hundreds of millions of dollars, research has fragmented across computer science, economics, law, and policy.

Key open questions include:

- How should data be valued as a distinct factor of production?

- What market structures will emerge for training data exchange?

- How do intellectual property regimes affect data availability and cost?

- What are the welfare implications of data concentration?

- Can mechanisms ensure fair compensation for data creators?

These aren’t just theoretical concerns. They directly affect who can afford to build competitive AI systems and what those systems can do.

The shift from compute bottlenecks to data bottlenecks represents a fundamental change in AI economics. It’s harder to scale human expertise than to add more GPUs. It’s harder to automate nuanced judgment than to parallelize matrix multiplications.

This reality will shape the AI industry for years to come.

Frequently Asked Questions

How much does data labeling cost for a typical LLM fine-tuning project?

Data labeling costs for LLM fine-tuning vary widely based on task complexity and dataset size. A moderate-scale project with 20,000-30,000 labeled examples typically costs $200,000-$900,000. Simple classification tasks at the low end might run $0.05-$2 per item, while complex RLHF comparisons requiring domain expertise can cost $20-$100+ per comparison. Expert annotation for specialized domains (medical, legal, scientific) commands premium rates of $50-$200+ per hour.

Why have data labeling costs grown faster than compute costs?

Data labeling costs grew 88x from 2023 to 2024 while compute costs rose only 1.3x. This dramatic difference stems from post-training techniques (RLHF, supervised fine-tuning) becoming essential for competitive models. These methods require extensive human annotation, often from domain experts. Meanwhile, GPU efficiency continues improving and cloud providers offer more competitive rates, keeping compute costs relatively stable even as labeling expenses surge.

Can LLMs automate their own data labeling to reduce costs?

LLMs can assist with labeling but not fully automate it without quality concerns. Common approaches include using GPT-4 or Claude to generate initial labels, then having human reviewers validate outputs. This hybrid approach can reduce costs by 40-70% compared to full human annotation. However, careful quality control remains essential since LLMs can introduce systematic errors or biases. The arXiv paper on cost-aware annotation explores frameworks for optimally balancing automated LLM labeling against human verification costs.

What’s more expensive: training an LLM from scratch or fine-tuning an existing model?

Pre-training frontier models from scratch costs $50-200+ million primarily in compute, while fine-tuning existing models typically costs $10,000-$1 million in compute. However, fine-tuning requires substantial data labeling budgets—often $50,000 to $15 million depending on dataset size and task complexity. Despite higher annotation costs, fine-tuning still delivers 60-90% cost savings overall compared to pre-training while achieving strong task-specific performance. For most organizations, fine-tuning makes more economic sense.

How do Small Language Models (SLMs) compare to LLMs on cost?

SLMs with 1-15 billion parameters dramatically reduce both training and inference costs. Training SLMs costs $5,000-$500,000 in compute versus $50-200+ million for frontier LLMs. Data labeling requirements scale proportionally smaller, typically $10,000-$500,000 for focused domains. SLMs reduce cost-per-million queries by over 100x compared to large models. For applications with specific scope and edge deployment scenarios, SLMs offer compelling cost advantages while maintaining acceptable accuracy on targeted tasks.

What strategies effectively reduce data labeling costs without sacrificing quality?

Several proven strategies cut costs while maintaining quality: Active learning reduces labeling volume by 50-80% by identifying examples where annotation provides maximum value. LLM-assisted workflows use models to generate initial labels, with humans validating outputs—cutting costs 40-70%. Tiered workflows match annotator expertise to task difficulty, reserving expensive experts for truly complex cases. Dataset reuse amortizes annotation investment across multiple projects. Selective high-quality sampling often outperforms larger, lower-quality datasets for fine-tuning.

Will data labeling costs continue growing at the current rate?

The extraordinary 88x growth from 2023 to 2024 will likely moderate, as much of that spike reflected rapid scaling at specific companies. However, absolute labeling costs continue climbing as more organizations pursue LLM development and quality standards increase. Industry experts expect data labeling to remain the dominant marginal cost for frontier models through 2026 and beyond. Research into automated verification, noise-tolerant training, and self-improvement techniques could eventually reduce dependency on expensive human annotation, but breakthrough solutions haven’t yet emerged at scale.

Conclusion

The economics of LLM development have fundamentally shifted.

What was once a compute-dominated field now finds human annotation consuming the majority of marginal budgets. Data labeling costs have grown 88x in a single year while compute expenses increased only 1.3x. For organizations building or fine-tuning models, annotation now represents roughly 3x the marginal compute spend.

This isn’t a temporary anomaly. Post-training techniques requiring human feedback have proven essential for creating models that work reliably in production. RLHF, instruction tuning, and specialized fine-tuning all depend on carefully curated, expertly labeled datasets. Expert time costs money—lots of it.

The case studies tell the story clearly. MiniMax-M1 spent 28x more on annotation than training compute. SkyRL-SQL’s labeling budget ran 167x higher than its compute costs. These ratios reflect the new normal in AI development.

Smart teams optimize annotation budgets through active learning, LLM-assisted workflows, and tiered labeling strategies. But there’s no escaping the fundamental reality: building competitive LLMs requires investing heavily in high-quality human-labeled data.

For organizations planning LLM projects in 2026, budget accordingly. Data labeling will likely represent 45-60% of total project costs for serious initiatives. Partner with experienced annotation providers, invest in quality control, and plan for longer timelines than compute-only estimates would suggest.

The bottleneck has moved from silicon to human expertise. Understanding this shift—and planning for its financial implications—separates successful LLM initiatives from underfunded failures.

Need help planning your LLM data labeling budget? Understanding the true costs of annotation requires analyzing your specific use case, quality requirements, and scale. Connect with experienced providers to get accurate project estimates before committing resources.