Quick Summary: AI will not replace cybersecurity professionals but will transform their roles by automating repetitive tasks like threat detection and log analysis. According to CISA and NIST research, human expertise remains essential for strategic decision-making, ethical judgment, incident response, and addressing novel threats that AI systems cannot independently resolve. The future involves human-AI collaboration where professionals guide AI tools rather than compete with them.

The question keeps surfacing in professional circles, online forums, and cybersecurity conferences: will AI replace cybersecurity jobs? It’s a legitimate concern. After all, artificial intelligence already analyzes network traffic patterns, detects anomalies, and responds to certain threats faster than any human team could.

But here’s the thing—the relationship between AI and cybersecurity professionals isn’t a zero-sum game. The technology doesn’t eliminate human roles. Instead, it reshapes them in ways that demand new skills while preserving the irreplaceable value of human judgment.

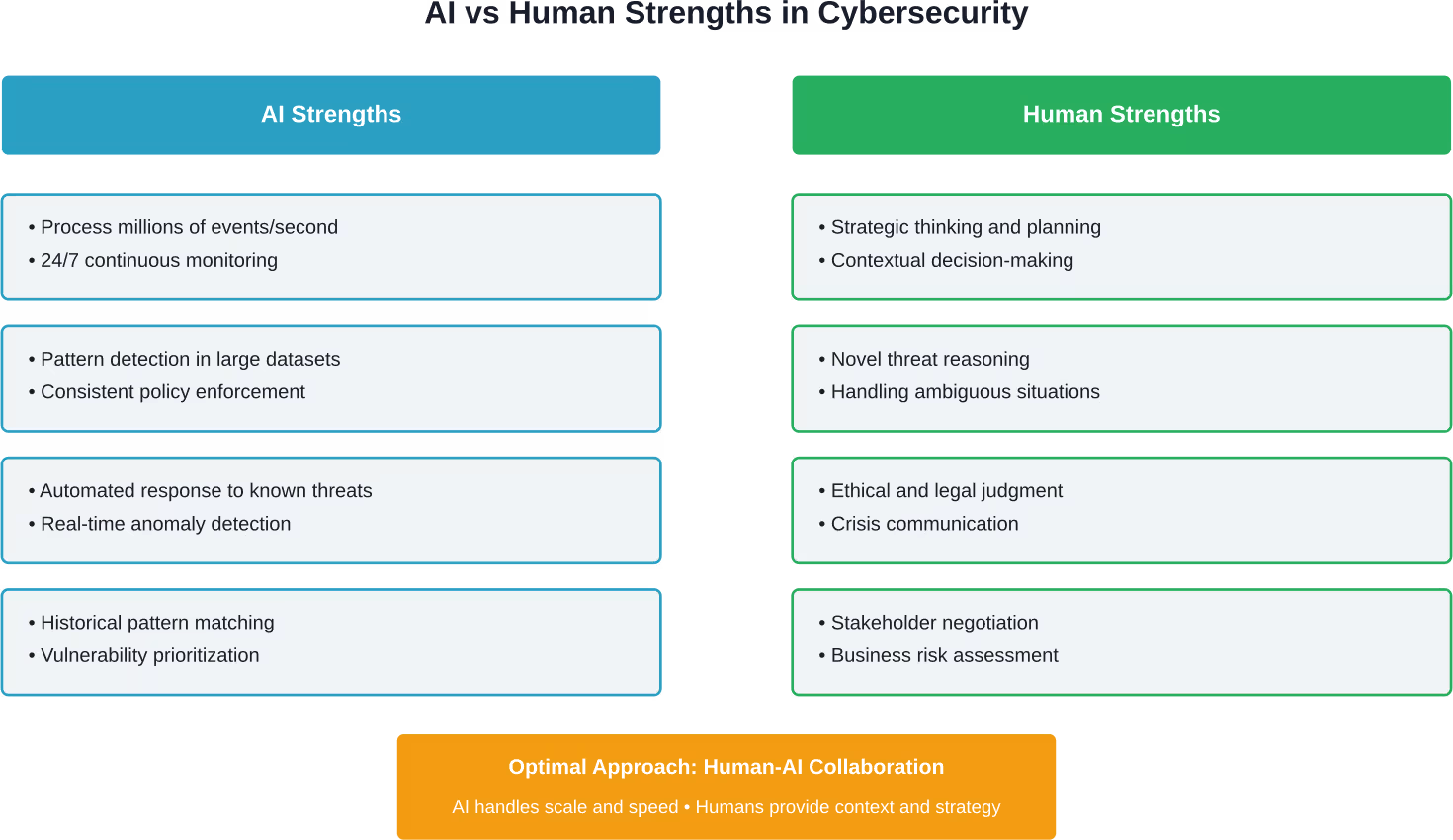

Real talk: AI excels at processing massive datasets and identifying patterns. It struggles with context, strategic thinking, and the ethical nuances that define effective security programs. That gap matters more than most people realize.

The Current State of AI in Cybersecurity Operations

According to CISA’s published use cases, AI tools have become pivotal components in modern security operations. From spotting anomalies in network data to enhancing threat intelligence, these systems supplement human capabilities rather than replace them.

CISA’s AI-driven automation initiatives focus on leveraging machine learning to automate processes and enhance operational efficiency. The Certified AI-Driven Automation Specialist (CAIAS) program, established in 2024, trains professionals to work alongside these systems—not compete against them.

Current AI applications in cybersecurity include:

- Behavioral analytics: Machine learning algorithms establish baseline user behavior patterns and flag deviations that might indicate compromised credentials or insider threats

- Automated vulnerability scanning: AI systems continuously assess cloud environments and on-premises infrastructure for configuration drift and security gaps

- Threat intelligence aggregation: Natural language processing analyzes security bulletins, dark web forums, and threat feeds to prioritize emerging risks

- Log analysis at scale: AI processes millions of log entries to identify suspicious patterns that would take human analysts days to uncover

- Policy enforcement automation: AI ensures consistent security configurations across distributed cloud accounts and detects configuration drift in real time

These applications share a common characteristic: they handle repetitive, data-intensive tasks that previously consumed enormous amounts of security team bandwidth. A Coastline College course on AI applications in cybersecurity, referenced in NICCS materials, emphasizes this collaboration between AI technologies and human oversight.
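To make the log-analysis item concrete, here is a minimal sketch of the kind of repetitive counting that gets automated. It assumes a simplified sshd-style log line ("Failed password for USER from IP ..."); the sample lines, the `noisy_sources` name, and the threshold are invented for illustration, and real tooling uses far richer models than a single regular expression.

```python
import re
from collections import Counter

# Assumed, simplified log format; real sshd logs have more variants.
FAILED_LOGIN = re.compile(
    r"Failed password for \S+ from (\d{1,3}(?:\.\d{1,3}){3})"
)


def noisy_sources(lines, min_failures=3):
    """Count failed logins per source IP and return the sources at or
    above `min_failures`, most active first."""
    counts = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(1)] += 1
    return [(ip, n) for ip, n in counts.most_common() if n >= min_failures]


# Hypothetical log excerpt using documentation-reserved IP ranges.
logs = [
    "sshd[101]: Failed password for root from 198.51.100.9 port 4242 ssh2",
    "sshd[102]: Failed password for root from 198.51.100.9 port 4243 ssh2",
    "sshd[103]: Accepted password for alice from 192.0.2.10 port 5000 ssh2",
    "sshd[104]: Failed password for admin from 198.51.100.9 port 4244 ssh2",
    "sshd[105]: Failed password for bob from 192.0.2.44 port 5100 ssh2",
]
print(noisy_sources(logs))
```

The script only surfaces the noisy source. Whether three failures from one address is an attack or a misconfigured service is still a call for an analyst.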

Where AI Falls Short in Cybersecurity

Despite impressive capabilities, AI systems face fundamental limitations that prevent full automation of security operations. Understanding these constraints explains why human expertise remains non-negotiable.

Context-Dependent Decision Making

AI excels at pattern recognition but struggles with context. A sudden spike in database queries might indicate a SQL injection attack—or it could reflect legitimate business activity during a product launch. AI flags the anomaly. Humans determine whether it’s a threat.

Security decisions often require understanding business objectives, regulatory requirements, and organizational risk tolerance. These aren’t data points an algorithm can process independently.

Novel Attack Vectors

Machine learning models train on historical data. When attackers develop genuinely novel techniques, AI systems lack reference points. The model might miss threats that don’t match known patterns.

Consider the finance worker in Hong Kong who transferred roughly $25 million after a video conference with what appeared to be the CFO and other executives, a case reported in February 2024. The deepfakes mimicked speech patterns and visual appearance convincingly enough to overcome the employee’s suspicion. It is the kind of attack that detection systems trained on earlier fraud patterns are poorly placed to catch.

Human security professionals, conversely, can reason about unfamiliar scenarios by applying fundamental security principles and understanding attacker motivations.

Adversarial AI and Model Manipulation

NIST research on AI security emphasizes that AI systems themselves become attack targets. Adversarial machine learning techniques can poison training data or craft inputs that fool classification models.

A security model trained to identify malware might misclassify malicious code if attackers subtly modify file characteristics. Defending against these attacks requires human security researchers who understand both AI vulnerabilities and attacker tactics.

NIST’s AI Model Validation and Test Automation for Critical Systems Essentials course, published in May 2025, addresses exactly these concerns—verifying AI reliability in high-assurance domains where failures carry serious consequences.

Ethical and Legal Judgment

Security incidents often involve privacy considerations, legal compliance, and ethical dilemmas. Should a company’s security team access employee personal devices to investigate a suspected data breach? How should incident response balance transparency with protecting sensitive information?

These questions don’t have algorithmic answers. They require human judgment informed by legal frameworks, ethical principles, and organizational values.

How AI Transforms Cybersecurity Roles

Rather than eliminating cybersecurity jobs, AI reshapes what those jobs entail. The transformation mirrors historical technological shifts—automation doesn’t destroy professions so much as redirect human effort toward higher-value activities.

From Manual Analysis to Strategic Oversight

Security analysts once spent hours manually reviewing firewall logs and correlating events across disparate systems. AI handles that grunt work now, processing data at speeds no human team could match.

This shift frees analysts to focus on strategic questions: Are current defenses aligned with emerging threat landscapes? How should security investments prioritize based on business risk? What gaps exist in incident response capabilities?

According to Brookings Institution research published in October 2024, more than 30% of workers could see at least half of their occupation’s tasks disrupted by generative AI, with the greatest impacts on middle- to higher-paid occupations and clerical roles. But the research emphasizes that exposure doesn’t equal replacement; it signals task transformation.

New Skill Requirements

NIST research on AI’s impact on the cybersecurity workforce (published June 2025) highlights how the NICE Workforce Framework adapts to these changes. Cybersecurity professionals increasingly need skills that bridge security expertise and AI literacy.

According to World Economic Forum projections cited in academic research, 39% of workforce skills are expected to change by 2030, necessitating significant adaptation and reskilling to meet evolving job market demands.

Critical emerging skills include:

- AI model evaluation: Understanding how machine learning models make decisions and identifying potential biases or vulnerabilities

- Prompt engineering for security: Effectively querying AI systems to extract relevant threat intelligence and security insights

- Adversarial AI defense: Protecting AI systems from manipulation and poisoning attacks

- Algorithm interpretation: Translating AI outputs into actionable security recommendations

- Human-AI workflow design: Structuring processes that optimize collaboration between automated systems and human analysts

These aren’t entirely new professions. They’re evolutions of existing security roles, enhanced by AI capabilities.

Elevated Incident Response

AI dramatically accelerates initial threat detection and containment. Automated systems can isolate compromised endpoints, block malicious IP addresses, and trigger predefined response playbooks within seconds of detecting an incident.

But complex breaches still require human coordination. Determining breach scope, managing stakeholder communication, coordinating with law enforcement, making disclosure decisions—these activities demand judgment that algorithms can’t replicate.

The symbiotic relationship means a shorter mean time to resolution (MTTR) combined with a more thoughtful strategic response. AI provides real-time insights. Humans make the critical calls.

| Security Task | AI Contribution | Human Contribution | Collaboration Outcome |
|---|---|---|---|
| Threat Detection | Analyzes network traffic and logs to identify anomalies in real time | Validates alerts, determines false positives, applies business context | Faster detection with fewer false positives |
| Vulnerability Management | Scans infrastructure, prioritizes based on exploitability and exposure | Assesses business impact, schedules remediation, manages exceptions | Risk-based prioritization aligned with business needs |
| Incident Response | Automates containment, executes response playbooks, collects forensic data | Directs investigation strategy, makes disclosure decisions, coordinates teams | Faster containment with strategic decision-making |
| Security Monitoring | Continuous 24/7 surveillance, pattern recognition across millions of events | Tunes detection rules, investigates complex scenarios, handles escalations | Comprehensive coverage without analyst burnout |
| Threat Hunting | Suggests hunting hypotheses based on threat intelligence, automates data queries | Develops creative hunt scenarios, interprets findings, pursues anomalies | Proactive threat discovery at scale |

Real-World AI and Cybersecurity Integration

Organizations already deploying AI in security operations demonstrate what this collaboration looks like in practice. These aren’t theoretical futures—they’re current implementations.

Automated Threat Intelligence

Security teams use AI to aggregate threat feeds from hundreds of sources, correlate indicators of compromise, and prioritize threats based on organizational exposure. The AI processes natural language from security bulletins, extracts relevant technical indicators, and maps them to internal assets.

Human analysts review prioritized threats, determine which require immediate action, and adjust defensive postures accordingly. Without AI, teams would drown in raw threat data. Without humans, the system couldn’t translate intelligence into context-appropriate action.
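As a rough illustration of the extract-and-map step, the sketch below pulls CVE identifiers and IPv4 addresses out of bulletin text and matches them against an asset inventory. The bulletin text, the `ASSETS` inventory, and the function names are hypothetical, and two regular expressions stand in for the natural language processing a production tool would use.

```python
import re

# Hypothetical asset inventory: hostname -> CVEs the host is exposed to.
ASSETS = {
    "web-01": {"CVE-2024-0001"},
    "db-01": {"CVE-2023-9999"},
}

CVE_RE = re.compile(r"CVE-\d{4}-\d{4,}")
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def extract_indicators(text):
    """Return the CVE IDs and IPv4 addresses mentioned in a bulletin."""
    return {
        "cves": set(CVE_RE.findall(text)),
        "ips": set(IPV4_RE.findall(text)),
    }


def affected_assets(indicators, assets=ASSETS):
    """List assets exposed to any CVE named in the bulletin, sorted by name."""
    return sorted(
        host for host, cves in assets.items() if cves & indicators["cves"]
    )


# Invented bulletin text for illustration only.
bulletin = (
    "Active exploitation of CVE-2024-0001 observed from 203.0.113.7. "
    "Patch immediately."
)
indicators = extract_indicators(bulletin)
print(indicators["cves"], indicators["ips"], affected_assets(indicators))
```

The output is a short list of affected hosts. Deciding which of them to patch first, and whether to take one offline, is the part left to the analyst.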

Cloud Security Posture Management

AI-powered tools continuously scan cloud environments for misconfigurations, overly permissive access controls, and policy violations. They automatically remediate certain classes of issues—closing publicly accessible storage buckets, for instance, or revoking unused credentials.

Security architects define the policies AI enforces. They determine acceptable risk levels, design exception processes, and ensure automated remediation aligns with business operations. The AI handles enforcement at scale. Humans handle strategy and governance.
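One way to picture that split between enforcement and governance is a small policy table. In this sketch the issue names, the `AUTO_REMEDIATE` set, and the `Finding` type are all made up for illustration and are not taken from any specific product.

```python
from dataclasses import dataclass

# Hypothetical policy written by a security architect: the classes of
# findings the tool may fix on its own. Everything else goes to a human.
AUTO_REMEDIATE = {"public_bucket", "unused_credential"}


@dataclass
class Finding:
    resource: str
    issue: str
    has_exception: bool = False  # set through a human-approved exception process


def decide(finding):
    """Return 'skip', 'auto_remediate', or 'escalate' for one finding."""
    if finding.has_exception:
        return "skip"
    if finding.issue in AUTO_REMEDIATE:
        return "auto_remediate"
    return "escalate"


# Invented findings for illustration.
findings = [
    Finding("s3://marketing-assets", "public_bucket", has_exception=True),
    Finding("s3://hr-exports", "public_bucket"),
    Finding("role/legacy-admin", "overly_permissive_role"),
]
for f in findings:
    print(f.resource, "->", decide(f))
```

The code never decides what belongs in `AUTO_REMEDIATE` or which buckets deserve an exception. Those choices are the policy work the architects own.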

User Behavior Analytics

Machine learning establishes baseline patterns for individual users and peer groups. When a user account exhibits unusual behavior—accessing systems at odd hours, downloading large datasets, or connecting from unfamiliar locations—AI flags the activity.

Security operations teams investigate flagged accounts, interviewing users if necessary and determining whether anomalous behavior reflects legitimate business activity or potential compromise. The AI identifies outliers. Humans determine intent.
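The baseline idea can be sketched with nothing more than a mean and a standard deviation. This is a deliberately simple z-score check, not how any particular analytics product works; the download volumes and the threshold of 3 are assumptions chosen for the example.

```python
from statistics import mean, stdev


def is_anomalous(history, today, threshold=3.0):
    """Flag today's value if it sits more than `threshold` standard
    deviations away from the user's own historical mean."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today != mu
    return abs(today - mu) / sigma > threshold


# Hypothetical daily download volumes (MB) for one user.
baseline = [120, 135, 110, 128, 140, 125, 131]
print(is_anomalous(baseline, 133))   # an ordinary day
print(is_anomalous(baseline, 2400))  # a large export
```

A flag here says only that the number is unusual for this user. It cannot tell a quarter-end report from data theft, which is why the investigation step stays with people.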

The Job Market Reality for Cybersecurity Professionals

Data from IEEE and academic research provides perspective on AI’s actual employment impact—which differs substantially from dystopian replacement scenarios.

IEEE Spectrum reporting from February 2024 discusses arguments that AI may create more job growth than disruption, potentially reviving middle-class jobs lost to earlier automation waves. The caveat? Society must guard against AI misuse, particularly disinformation and deepfakes.

IEEE Transmitter’s April 2025 analysis echoes this assessment, noting that AI replaces more tasks than jobs. The 2016 prediction by AI researchers that automation would transform work rather than eliminate it has largely held true nearly a decade later.

For cybersecurity specifically, demand continues outpacing supply. The field faces persistent talent shortages even as AI tools proliferate. Why? Because AI creates new security challenges even as it solves existing ones.

AI Security Itself Creates Jobs

Securing AI systems requires specialized expertise. As organizations deploy machine learning models for business operations, they need professionals who understand adversarial AI, model validation, and AI governance frameworks.

NIST’s concept note on an AI RMF Profile on Trustworthy AI in Critical Infrastructure (April 7, 2026) addresses safety, security, and reliability requirements for AI deployed in critical systems. Implementing these frameworks requires human expertise, which creates roles rather than eliminating them.

According to Brookings Institution analysis, AI infrastructure, models, research, and governance represented significant portions of total private AI funding globally between 2014 and 2025. That investment signals job creation, not destruction.

The Reskilling Imperative

That said, static skill sets won’t suffice. Cybersecurity professionals must continuously adapt as AI capabilities evolve. Organizations that invest in workforce development—training existing teams on AI integration rather than replacing them—tend to see better security outcomes.

The Certified AI-Driven Automation Specialist program represents one pathway. Similar initiatives from industry organizations, academic institutions, and government agencies help bridge the gap between traditional security skills and AI-augmented roles.

Risks AI Introduces to Cybersecurity

Ironically, AI’s integration into security operations creates new vulnerabilities. Understanding these risks explains why human oversight remains critical.

Adversarial Machine Learning

Attackers can manipulate AI systems through carefully crafted inputs. Adversarial examples—inputs designed to fool machine learning models—can cause misclassification of malware, bypass facial recognition systems, or evade intrusion detection.

According to RAND Corporation research on AI risks to security, published in December 2017, these vulnerabilities existed early in AI adoption and have only grown more sophisticated. Defending against adversarial AI requires security professionals who understand both machine learning mechanics and attacker methodologies.
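A toy example shows why small input changes can flip a model’s verdict. The sketch assumes a linear scorer with invented weights and features; real malware classifiers are far more complex, but the same principle of nudging each feature against the model’s weights applies.

```python
# Toy linear "malware" scorer: a positive score means malicious.
# Weights, bias, and feature meanings are invented for illustration.
WEIGHTS = [0.9, 0.6, -0.4]  # entropy, suspicious imports, signed-binary flag
BIAS = -0.5


def score(x):
    return sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS


def is_malicious(x):
    return score(x) > 0


def evade(x, epsilon):
    """Shift every feature by epsilon against the sign of its weight,
    the direction that lowers a linear score fastest for a bounded
    per-feature change."""
    return [xi - epsilon * (1 if w > 0 else -1) for w, xi in zip(WEIGHTS, x)]


sample = [0.7, 0.5, 0.0]
modified = evade(sample, epsilon=0.25)
print(is_malicious(sample), is_malicious(modified))
```

No feature moves by more than 0.25, yet the classification changes. Spotting that a model is this brittle, and deciding how to harden it, takes someone who understands both the math and the attacker.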

Data Poisoning

AI models train on historical data. If attackers compromise training datasets—injecting malicious examples or skewing distributions—the resulting models inherit those flaws. A poisoned threat detection model might systematically ignore certain attack signatures or generate excessive false positives.
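The effect is easy to reproduce on a toy model. The sketch below assumes a one-feature detector that learns a threshold halfway between the benign and malicious averages; the feature, the data points, and the function names are invented for illustration.

```python
from statistics import mean


def fit_threshold(samples):
    """Learn a 1-D decision threshold: the midpoint between the mean
    feature value of benign (label 0) and malicious (label 1) samples."""
    benign = [x for x, y in samples if y == 0]
    malicious = [x for x, y in samples if y == 1]
    return (mean(benign) + mean(malicious)) / 2


def predict(threshold, x):
    return 1 if x > threshold else 0


# Hypothetical feature: outbound connections per minute.
clean = [(2, 0), (3, 0), (4, 0), (20, 1), (22, 1), (24, 1)]
# The attacker slips high-volume samples labeled "benign" into training.
poison = [(30, 0), (32, 0), (34, 0)]

clean_t = fit_threshold(clean)
poisoned_t = fit_threshold(clean + poison)
attack = 18
print(clean_t, predict(clean_t, attack))
print(poisoned_t, predict(poisoned_t, attack))
```

Three mislabeled rows are enough to push the threshold past the attacker’s traffic level. Catching this requires someone to audit where training data comes from, not just how the model scores.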

According to Brookings Institution analysis from September 2025, inadequate AI security investment at the state level could significantly raise cybercrime costs. In 2021, cybercrime was estimated to cost African nations 10% of their gross domestic product, or $4.12 billion, a reminder of what is at stake economically when security, including AI security, falls short.

Overreliance and Deskilling

Organizations that lean too heavily on AI risk deskilling their human teams. If analysts stop developing manual investigation skills because AI handles routine analysis, they lose the capability to function when AI systems fail or face novel scenarios.

Maintaining human expertise alongside AI automation requires intentional workforce development. Teams need opportunities to practice fundamental skills even when AI could theoretically handle tasks faster.

Explainability and Accountability

Many AI models function as black boxes, producing outputs without transparent reasoning. When an AI system flags an account as compromised, security teams need to understand why. Without explainability, validating AI decisions becomes far harder.

This challenge intensifies in regulated industries where security decisions must be auditable and defensible. NIST’s research on trustworthy AI emphasizes that AI system security depends partly on explainability and accountability—attributes that require human governance.

Best Practices for Human-AI Collaboration in Security

Organizations successfully integrating AI into security operations follow certain patterns. These practices maximize AI benefits while preserving human expertise.

Define Clear Automation Boundaries

Establish explicit rules about which tasks AI handles autonomously versus which require human approval. Low-risk, high-volume activities like routine vulnerability scanning suit full automation. High-stakes decisions like incident disclosure demand human oversight.

Document these boundaries in security playbooks so teams understand when to trust AI outputs versus when to validate independently.
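A playbook boundary can be as plain as a lookup table. The task names and the `mode_for` helper below are hypothetical; the one design choice worth noting is that anything not listed falls back to human approval.

```python
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"
    HUMAN_APPROVAL = "human_approval"


# Hypothetical playbook entries; a real playbook is agreed on by the team.
BOUNDARIES = {
    "routine_vulnerability_scan": Mode.AUTONOMOUS,
    "block_known_bad_ip": Mode.AUTONOMOUS,
    "modify_production_policy": Mode.HUMAN_APPROVAL,
    "incident_disclosure": Mode.HUMAN_APPROVAL,
}


def mode_for(task):
    """Look up how a task may run. Unknown tasks fail closed and
    require human approval."""
    return BOUNDARIES.get(task, Mode.HUMAN_APPROVAL)


print(mode_for("routine_vulnerability_scan"))
print(mode_for("incident_disclosure"))
print(mode_for("something_new"))
```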

Implement Human-in-the-Loop Validation

For critical security decisions, design workflows that require human confirmation before AI-recommended actions execute. This might mean having analysts review high-severity alerts before automated blocking occurs, or requiring approval before AI systems modify production security policies.

Human-in-the-loop approaches slow response slightly but dramatically reduce the risk of false positives causing business disruption.
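A minimal sketch of such a gate follows. It assumes a 1-to-10 severity scale and a cutoff of 7, both invented here; the `ApprovalGate` class is illustrative and not taken from any particular SOAR platform.

```python
from dataclasses import dataclass, field


@dataclass
class ApprovalGate:
    """Runs low-severity actions immediately and holds the rest for review."""
    severity_cutoff: int = 7  # assumed scale: 1 (low) to 10 (critical)
    pending: list = field(default_factory=list)
    executed: list = field(default_factory=list)

    def submit(self, action, severity):
        """Queue high-severity actions; execute the others right away."""
        if severity >= self.severity_cutoff:
            self.pending.append(action)
            return "pending"
        self.executed.append(action)
        return "executed"

    def approve(self, action):
        """Called by an analyst; moves an action from pending to executed."""
        self.pending.remove(action)  # raises ValueError if not pending
        self.executed.append(action)


gate = ApprovalGate()
print(gate.submit("quarantine phishing email", severity=3))
print(gate.submit("block subnet 10.20.0.0/16", severity=9))
gate.approve("block subnet 10.20.0.0/16")
print(gate.executed)
```

The subnet block waits until an analyst calls `approve`, which is exactly the small delay the trade-off above describes.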

Continuous Model Monitoring

AI models degrade over time as threat landscapes evolve and training data becomes stale. Security teams must continuously monitor model performance, tracking metrics like false positive rates, detection accuracy, and prediction confidence.

When performance degrades, models need retraining with current data. This ongoing maintenance requires human oversight—AI systems can’t independently determine when they’re no longer effective.
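As one hedged illustration of that monitoring, the sketch below compares the false positive rate from analyst-labeled outcomes in two review windows. The data, the 0.05 tolerance, and the function names are assumptions for the example; a real program would track several metrics over time.

```python
def false_positive_rate(outcomes):
    """outcomes: list of (alerted, was_malicious) booleans, one per event.
    FPR = benign events that triggered an alert / all benign events."""
    benign = [alerted for alerted, malicious in outcomes if not malicious]
    if not benign:
        return 0.0
    return sum(benign) / len(benign)


def needs_retraining(baseline_fpr, current_fpr, tolerance=0.05):
    """Flag the model when FPR has risen more than `tolerance`
    (absolute) above the rate measured at deployment."""
    return current_fpr - baseline_fpr > tolerance


# Hypothetical analyst-labeled outcomes for two review windows.
at_deploy = [(True, True)] * 10 + [(True, False)] * 2 + [(False, False)] * 98
this_week = [(True, True)] * 8 + [(True, False)] * 15 + [(False, False)] * 85

base = false_positive_rate(at_deploy)
now = false_positive_rate(this_week)
print(base, now, needs_retraining(base, now))
```

The labels feeding this check come from analysts reviewing alerts, so the monitoring loop itself depends on human judgment.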

Invest in AI Literacy Training

Security professionals don’t need to become data scientists, but they do need baseline AI literacy. Training should cover how machine learning models work, common failure modes, and how to interpret AI outputs critically.

According to NIST’s workforce impact research from June 2025, organizations that invest in AI skills development for existing teams see better integration outcomes than those attempting to hire entirely new AI-specialized staff.

Maintain Manual Capabilities

Even with AI handling routine tasks, teams should periodically practice manual investigation and analysis. These exercises ensure skills don’t atrophy and prepare teams for scenarios where AI systems fail or prove inadequate.

Regular tabletop exercises that simulate AI system failures help teams prepare for degraded operations and maintain confidence in their fundamental capabilities.

The Future: Augmentation, Not Replacement

Evidence consistently points toward a future where AI augments cybersecurity professionals rather than replacing them. This isn’t wishful thinking—it reflects AI’s fundamental limitations and the enduring value of human judgment.

As AI capabilities expand, so do attack surfaces. Every new AI application creates security challenges that require human expertise to address. The deepfake incident in Hong Kong demonstrates how AI empowers attackers as much as defenders.

Look, the question “will AI replace cybersecurity jobs?” frames the issue incorrectly. The better question: how will cybersecurity professionals adapt to leverage AI effectively while addressing the new risks it creates?

Organizations need professionals who understand both traditional security principles and AI-era challenges. Those who develop hybrid skill sets—combining security fundamentals with AI literacy—will find themselves increasingly valuable.

The transformation resembles previous technological shifts. Cloud computing didn’t eliminate systems administrators; it changed what systems administration entails. Mobile technology didn’t eliminate software developers; it redirected what applications they build.

AI follows the same pattern. It eliminates specific tasks while creating new ones that require human judgment, creativity, and strategic thinking.

Frequently Asked Questions

Will AI completely replace cybersecurity analysts?

No. AI will automate repetitive tasks like log analysis and routine monitoring, but cybersecurity analysts remain essential for strategic decision-making, incident response coordination, and handling novel threats. According to CISA’s research on AI use cases, AI tools supplement human capabilities rather than replace them. Complex investigations, ethical judgment, and stakeholder communication require human expertise that AI cannot replicate.

What cybersecurity jobs are most at risk from AI automation?

Entry-level positions focused primarily on routine monitoring and manual log review face the greatest transformation. However, these roles evolve rather than disappear—junior analysts increasingly focus on validating AI outputs, tuning detection systems, and investigating escalated alerts. According to IEEE research from 2025, AI replaces more tasks than complete jobs, redirecting human effort toward higher-value activities.

What new cybersecurity roles does AI create?

AI creates specialized roles including AI security specialists who protect machine learning systems from adversarial attacks, model validation experts who verify AI system reliability, AI governance analysts who ensure responsible AI deployment, and prompt engineers who optimize security-focused AI interactions. NIST’s 2026 concept note on an AI RMF profile for trustworthy AI in critical infrastructure points to growing demand for professionals who understand both security and AI risk management.

Do cybersecurity professionals need to learn programming and data science?

Basic AI literacy helps, but deep programming expertise isn’t required for most cybersecurity roles. Professionals should understand how machine learning models work conceptually, recognize common failure modes, and interpret AI outputs critically. According to NIST workforce research from 2025, successful AI integration focuses more on developing hybrid skills that combine security fundamentals with practical AI understanding than on turning security professionals into data scientists.

How can cybersecurity professionals prepare for AI integration?

Professionals should pursue training in AI applications for security, such as the Certified AI-Driven Automation Specialist (CAIAS) program offered through CISA. Focus on understanding machine learning basics, adversarial AI techniques, and human-AI collaboration workflows. Maintain strong fundamentals in traditional security domains while developing familiarity with AI tools used in threat detection, vulnerability management, and incident response.

What are the biggest risks of using AI in cybersecurity?

Key risks include adversarial machine learning attacks that manipulate AI systems, data poisoning that corrupts model training, overreliance that causes deskilling of human teams, and lack of explainability that makes validation difficult. According to RAND research on AI security risks, organizations must address these vulnerabilities through careful AI governance, continuous monitoring, and maintaining human oversight of critical security decisions.

Will AI make cybersecurity jobs easier or more complex?

Both. AI handles tedious, repetitive tasks that previously consumed significant analyst time, reducing burnout and improving efficiency. However, AI also introduces new complexity—securing AI systems themselves, validating model outputs, and addressing AI-enabled threats like deepfakes. According to Brookings Institution research from 2024, more than 30% of workers face exposure to generative AI effects, but this exposure transforms work rather than eliminating it, shifting focus toward more strategic activities.

Conclusion: Embracing the Symbiotic Future

The evidence is clear. AI won’t replace cybersecurity professionals—it will redefine what those professionals do and which skills they need.

Organizations that treat AI as a tool for human augmentation rather than human replacement see better security outcomes. They invest in training existing teams on AI integration, maintain clear boundaries between automated and human-directed activities, and continuously monitor AI system performance.

Cybersecurity professionals who adapt by developing AI literacy while maintaining strong security fundamentals position themselves for long-term career success. The field needs experts who can guide AI systems, validate their outputs, and address the novel challenges AI creates.

So will AI replace cybersecurity jobs? The question itself misses the point. AI transforms the profession, creating opportunities for those who embrace change while maintaining the critical thinking and judgment that algorithms can’t replicate.

The future belongs to professionals who master the collaboration between human expertise and artificial intelligence—each contributing complementary strengths to defend against an evolving threat landscape.

Ready to future-proof your cybersecurity career? Start by exploring AI-focused training programs like CAIAS, develop hands-on experience with AI security tools, and build expertise in both traditional security domains and emerging AI challenges. The symbiotic future of cybersecurity demands professionals who can bridge both worlds.