Quick Summary: Predictive analytics in finance uses historical data, machine learning, and statistical models to forecast future outcomes like cash flow, fraud risk, and market trends. Financial institutions leveraging predictive analytics achieve better risk management, improved forecasting accuracy, and data-driven decision-making. As of 2024, 75% of financial firms already use some form of AI in operations, with adoption accelerating across banks, insurers, and asset managers.

Financial markets don’t reward guesswork.

Yet many finance teams still base critical decisions on gut feelings, backward-looking reports, and spreadsheets that can’t see around corners. That’s changing fast. Predictive analytics has shifted from boardroom buzzword to operational necessity—turning raw data into actionable forecasts that shape everything from fraud prevention to capital allocation.

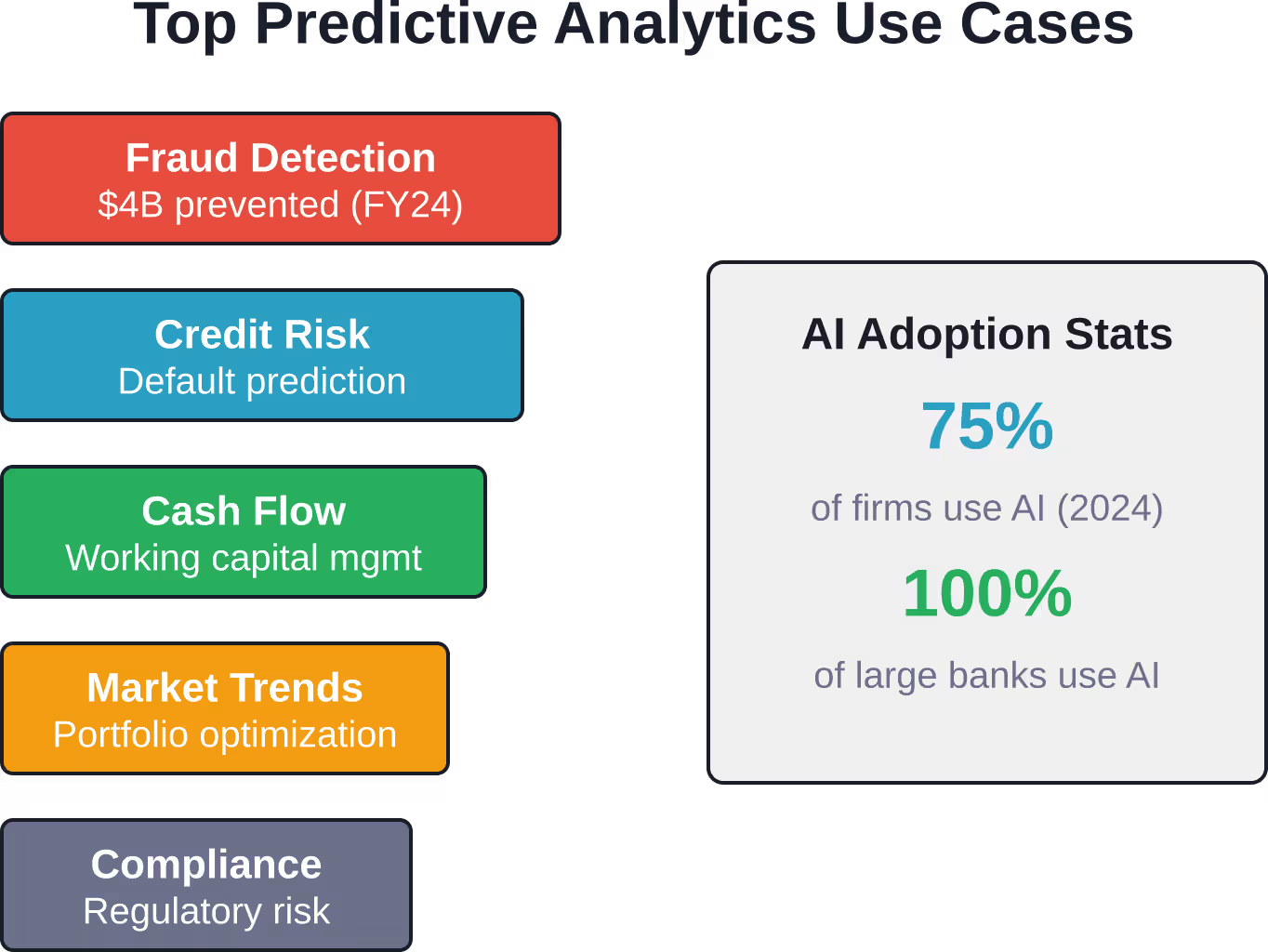

The numbers tell the story. From February to August 2023, over 15,000 check fraud reports were received, associated with more than $688 million in transactions (including both actual and attempted fraud). The U.S. Department of the Treasury’s enhanced use of AI and data analytics helped prevent and recover over $4 billion in fraudulent and improper payments during fiscal year 2024. Traditional methods couldn’t have touched those results.

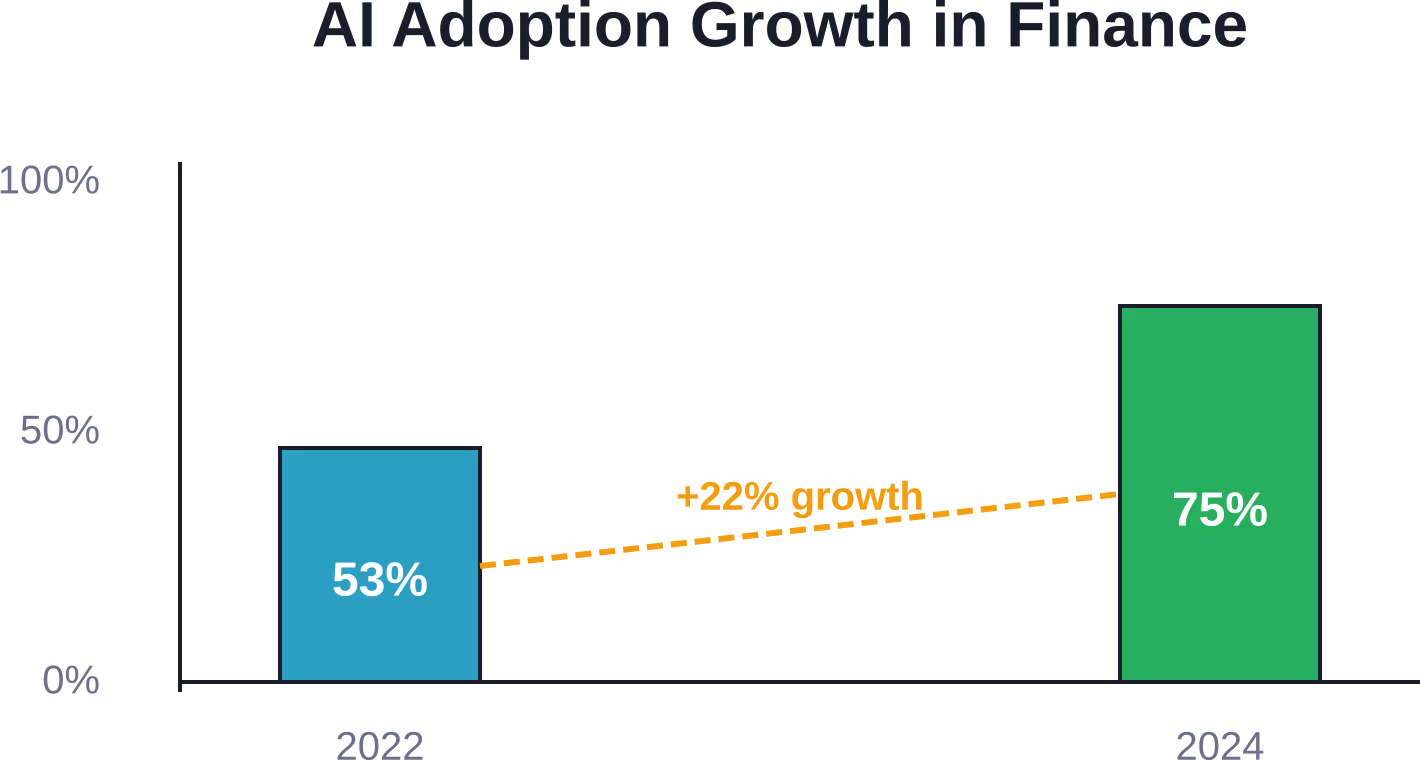

Here’s the thing though—adoption isn’t optional anymore. According to Bank of England research published in November 2024, 75% of firms surveyed were already using some form of AI in their operations, up from 53% in 2022. Every single large UK and international bank, insurer, and asset manager that responded? Already using AI.

This guide breaks down what predictive analytics actually means for finance teams, which models deliver results, and how to build frameworks that work without stumbling into common pitfalls.

What Predictive Analytics Means for Financial Operations

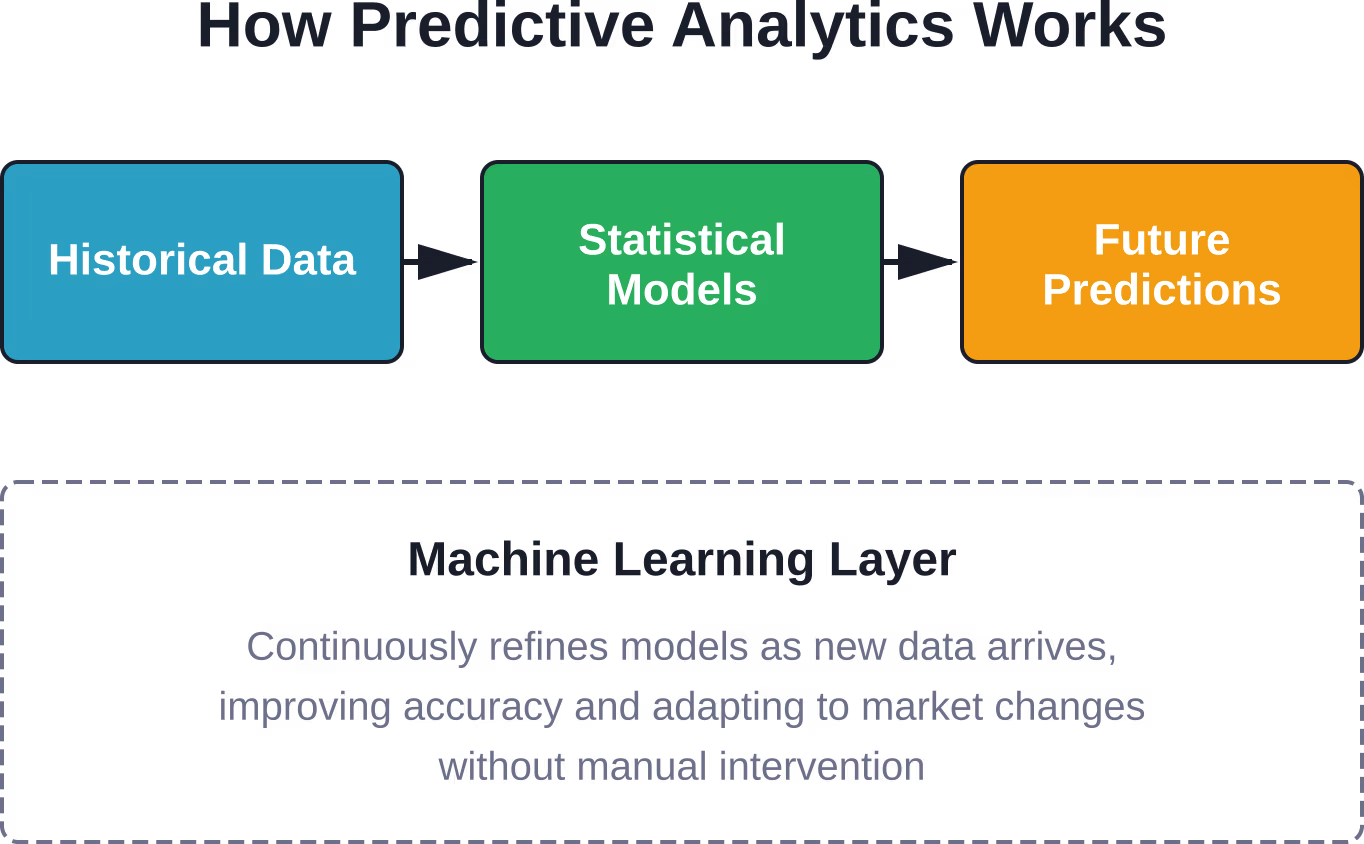

Predictive analytics combines historical data with statistical modeling, data mining techniques, and machine learning algorithms to forecast future outcomes. In finance, that translates to answering questions before they become problems: Will this customer default? Which invoices won’t get paid on time? Where will cash flow tighten next quarter?

The approach differs fundamentally from traditional financial analysis. Standard reporting tells you what happened last month or last quarter. Predictive analytics tells you what’s likely to happen next month—and quantifies the probability.

Three components make it work:

- Data collection: Historical transactions, market data, customer behavior, economic indicators, and external datasets feed the models

- Statistical modeling: Regression analysis, time series forecasting, and classification algorithms identify patterns humans miss

- Machine learning: Algorithms refine predictions as new data arrives, adapting to changing conditions as long as they are monitored and retrained

Real talk: predictive analytics isn’t magic. It’s math applied systematically to large datasets. But that systematic application surfaces insights buried in noise.

Core Models Finance Teams Actually Use

Not all predictive models fit financial use cases. Three categories dominate real-world implementations.

Regression Models for Continuous Outcomes

Linear regression predicts continuous variables: revenue forecasts, asset valuations, expense trajectories. (Logistic regression, despite the name, predicts the probability of a binary outcome, so it belongs with the classification models covered later.) Regression models establish relationships between independent variables (economic indicators, historical performance, seasonal factors) and dependent outcomes (next quarter’s revenue, portfolio returns).

Finance teams favor regression because the math is interpretable. When the model forecasts Q3 revenue of $12.4 million with an 85% prediction interval around that figure, analysts can trace exactly which inputs drove the number.
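To make the interpretability point concrete, here is a minimal sketch of a one-variable least-squares fit in plain Python. The data (marketing spend versus quarterly revenue) and the function name `fit_line` are hypothetical, chosen only for illustration. A real revenue model would use many more inputs and a statistics library.

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares for one predictor: y = intercept + slope * x."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope

# Hypothetical history: marketing spend ($M) and quarterly revenue ($M)
spend = [1.0, 2.0, 3.0, 4.0]
revenue = [5.0, 7.0, 9.0, 11.0]

intercept, slope = fit_line(spend, revenue)

# Forecast revenue for a planned spend of $5M
forecast = intercept + slope * 5.0
print(f"revenue = {intercept:.2f} + {slope:.2f} * spend; forecast = {forecast:.2f}")
```

The slope reads directly as "each extra $1M of spend is associated with this much extra revenue," which is the kind of traceability analysts rely on.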

Time Series Forecasting for Trend Analysis

ARIMA (AutoRegressive Integrated Moving Average) and exponential smoothing models excel at forecasting metrics with temporal patterns—stock prices, cash flow cycles, seasonal revenue fluctuations.

One implementation extended forecast periods from 3 months to 12 months by applying time series models to budget data, freeing employee time for value-added activities and improving budget decision accuracy.

Time series models shine when historical patterns contain predictive power. They struggle when market disruptions break established trends.
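As a rough illustration of the exponential smoothing idea, the sketch below implements simple (single) exponential smoothing by hand. The cash balances and the smoothing factor `alpha=0.5` are assumptions made for the example, not recommended settings. ARIMA and seasonal variants need a dedicated library.

```python
def exponential_smoothing(series, alpha):
    """Simple exponential smoothing; returns the one-step-ahead forecast.

    alpha near 1 weights recent observations heavily;
    alpha near 0 produces a slow-moving average of the history.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = series[0]
    for value in series[1:]:
        level = alpha * value + (1 - alpha) * level
    return level

# Hypothetical month-end cash balances ($K)
cash = [100.0, 110.0, 105.0, 120.0]
next_month = exponential_smoothing(cash, alpha=0.5)
print(f"next-month forecast: {next_month:.1f}")
```

Because the forecast is a weighted blend of past values, it cannot anticipate a break in the pattern, which is the weakness described above.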

Classification Models for Binary Outcomes

Decision trees, random forests, and neural networks classify outcomes into categories: Will this transaction be fraudulent? Will this customer default? Should we approve this loan?

DataVisor’s fraud detection engine, implemented by one of the largest U.S. banks, uses classification models with predictive capabilities to flag suspicious activity before losses occur. The system analyzes transaction patterns, behavioral anomalies, and network connections to assign fraud probability scores.

Classification accuracy matters enormously here. False positives block legitimate transactions and anger customers. False negatives let fraud slip through.
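The trade-off between false positives and false negatives can be sketched with a small confusion-matrix calculation. The labels below are made up for illustration (1 = fraud, 0 = legitimate), and `confusion_counts` is a hypothetical helper name.

```python
def confusion_counts(actual, predicted):
    """Count true/false positives and negatives for binary labels (1 = fraud)."""
    tp = fp = fn = tn = 0
    for a, p in zip(actual, predicted):
        if a and p:
            tp += 1      # fraud correctly flagged
        elif p:
            fp += 1      # legitimate transaction blocked
        elif a:
            fn += 1      # fraud that slipped through
        else:
            tn += 1      # legitimate transaction passed
    return tp, fp, fn, tn

# Hypothetical ground truth and model flags for eight transactions
actual    = [1, 0, 0, 1, 1, 0, 0, 0]
predicted = [1, 1, 0, 1, 0, 0, 0, 0]

tp, fp, fn, tn = confusion_counts(actual, predicted)
precision = tp / (tp + fp)            # share of flags that were real fraud
recall = tp / (tp + fn)               # share of real fraud that was flagged
false_positive_rate = fp / (fp + tn)  # share of legitimate activity blocked
print(precision, recall, false_positive_rate)
```

Lowering the flagging threshold usually raises recall at the cost of precision, so the right balance depends on what a blocked customer costs relative to a missed fraud.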

Eight High-Impact Use Cases Transforming Finance

Theory matters less than results. These use cases show where predictive analytics delivers measurable value.

Fraud Detection and Prevention

Financial institutions face escalating fraud sophistication. Traditional rule-based systems can’t keep pace. Predictive models analyze transaction patterns, user behavior, device fingerprints, and network graphs to identify anomalies indicating fraud.

The impact? During fiscal year 2024, the Treasury’s enhanced fraud detection, including machine learning AI, prevented and recovered over $4 billion in fraudulent and improper payments, including $1 billion recovered from Treasury check fraud alone. That’s not incremental improvement. That’s transformation.

Credit Risk Assessment and Loan Underwriting

Banks use predictive models to evaluate creditworthiness beyond traditional credit scores. Models incorporate payment history, employment stability, spending patterns, and alternative data sources to predict default probability.

Better risk assessment means fewer bad loans, lower capital reserves, and the ability to extend credit to qualified borrowers traditional scoring would reject.
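One way to picture how a default probability feeds a lending decision is a logistic score combined with the standard expected-loss identity (probability of default × loss given default × exposure at default). The weights, bias, and borrower features below are invented for the sketch. A real model would estimate them from data.

```python
import math

def default_probability(features, weights, bias):
    """Logistic model: maps a weighted sum of features to a probability in (0, 1)."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1 / (1 + math.exp(-z))

def expected_loss(prob_default, loss_given_default, exposure):
    """Expected loss = PD * LGD * EAD."""
    return prob_default * loss_given_default * exposure

# Hypothetical coefficients: [debt-to-income ratio, years employed]
weights = [3.0, -0.2]
bias = -1.0

borrower = [0.4, 5.0]   # 40% debt-to-income, 5 years employed
pd_estimate = default_probability(borrower, weights, bias)
loss = expected_loss(pd_estimate, loss_given_default=0.4, exposure=10_000.0)
print(f"PD = {pd_estimate:.3f}, expected loss = ${loss:,.0f}")
```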

Cash Flow Forecasting

Predictive analytics in accounts receivable provides timely insights into receivables that may constrain working capital. Models predict which invoices will pay late, which customers pose collection risk, and when cash crunches will hit.

Finance teams use these forecasts to optimize working capital management, time financing decisions, and negotiate better payment terms.
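A simple sketch of how invoice-level predictions roll up into a cash forecast: weight each open invoice by its predicted probability of paying on time. The invoice records, the `p_on_time` field, and the 0.6 risk threshold are hypothetical. In practice the probabilities would come from a trained model.

```python
# Hypothetical open invoices with model-predicted on-time payment probabilities
invoices = [
    {"id": "INV-001", "amount": 10_000.0, "p_on_time": 0.9},
    {"id": "INV-002", "amount": 5_000.0, "p_on_time": 0.5},
    {"id": "INV-003", "amount": 2_000.0, "p_on_time": 0.25},
]

def expected_collections(open_invoices):
    """Probability-weighted cash expected to arrive on time."""
    return sum(inv["amount"] * inv["p_on_time"] for inv in open_invoices)

def collection_risks(open_invoices, threshold=0.6):
    """Invoice IDs whose on-time probability falls below the threshold."""
    return [inv["id"] for inv in open_invoices if inv["p_on_time"] < threshold]

expected = expected_collections(invoices)
at_risk = collection_risks(invoices)
print(f"expected on-time cash: ${expected:,.0f}; follow up on: {at_risk}")
```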

Market Trend Analysis and Investment Strategy

Asset managers apply machine learning to identify market patterns, forecast price movements, and optimize portfolio allocation. Models process vast datasets—market microstructure data, sentiment analysis from news and social media, macroeconomic indicators—to generate trading signals.

Foundation models including large language models represent an emerging use case in finance, often deployed for market analysis and research.

Customer Lifetime Value Prediction

Predictive models estimate how much revenue each customer will generate over their relationship with the institution. Banks use these predictions to prioritize retention efforts, customize product recommendations, and allocate marketing spend.

High-value customers get white-glove service. Low-value prospects get automated channels. The economics work because predictions direct resources where they’ll generate returns.
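For intuition, here is one common simplified lifetime-value formula: discount each future year's margin by the chance the customer is still around. It assumes a constant annual margin, retention rate, and discount rate, which real customers rarely follow, so treat it as a sketch rather than a production method.

```python
def customer_lifetime_value(annual_margin, retention_rate, discount_rate, years):
    """Sum of expected, discounted margin over a fixed horizon.

    Year t contributes margin * retention_rate**t / (1 + discount_rate)**t.
    """
    return sum(
        annual_margin * retention_rate ** t / (1 + discount_rate) ** t
        for t in range(1, years + 1)
    )

# Hypothetical segments: same margin, different predicted retention
loyal = customer_lifetime_value(500.0, retention_rate=0.9, discount_rate=0.08, years=5)
flighty = customer_lifetime_value(500.0, retention_rate=0.6, discount_rate=0.08, years=5)
print(f"loyal: ${loyal:,.0f}  flighty: ${flighty:,.0f}")
```

The gap between the two segments shows why a predicted retention rate, not just current revenue, drives where retention budget goes.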

Regulatory Compliance and Risk Management

Financial institutions face complex regulatory requirements. Predictive analytics helps identify compliance risks before regulators do—flagging suspicious transactions for anti-money laundering review, monitoring trading patterns for market manipulation, and stress-testing portfolios against potential scenarios.

According to SEC proposals from July 2023, regulators increasingly scrutinize conflicts of interest associated with predictive data analytics use by broker-dealers and investment advisers. Compliance becomes both harder and more important.

Operational Process Optimization

About 41% of firms use AI to optimize internal processes—automating reconciliations, predicting processing bottlenecks, and streamlining workflows. Another 26% deploy AI to enhance customer support through chatbots and intelligent routing.

These applications don’t grab headlines. But they reduce costs and improve service quality measurably.

Budget Planning and Variance Analysis

Predictive models improve budget accuracy by incorporating more variables than traditional planning processes consider. Models account for market conditions, historical variance patterns, seasonal effects, and departmental performance trends.

Better budgets mean better capital allocation and fewer mid-year scrambles to cover shortfalls.

Building a Predictive Analytics Framework That Actually Works

Implementation separates winners from window dressing. A functional framework requires five components.

Data Infrastructure and Quality

Garbage in, garbage out. Predictive models demand clean, comprehensive, accessible data. That means:

- Centralized data repositories that aggregate information across systems

- Data governance policies that ensure consistency and accuracy

- Real-time data pipelines that feed models current information

- Validation processes that catch errors before they corrupt predictions

Most organizations underestimate the effort required. Data quality isn’t a one-time project—it’s ongoing discipline.
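The validation step in the list above can be as simple as a set of checks that run before records reach a model. The sketch below assumes a hypothetical transaction record with `txn_id`, `amount`, and `posted` fields. Real pipelines would add schema, range, and duplicate checks.

```python
from datetime import date

REQUIRED_FIELDS = ("txn_id", "amount", "posted")

def validate(record, today):
    """Return a list of data-quality problems found in one transaction record."""
    problems = [
        f"missing {field}"
        for field in REQUIRED_FIELDS
        if record.get(field) in (None, "")
    ]
    amount = record.get("amount")
    if amount not in (None, "") and not isinstance(amount, (int, float)):
        problems.append("amount is not numeric")
    posted = record.get("posted")
    if isinstance(posted, date) and posted > today:
        problems.append("posted date is in the future")
    return problems

today = date(2024, 6, 30)
clean = {"txn_id": "T1", "amount": 125.50, "posted": date(2024, 6, 1)}
dirty = {"txn_id": "T2", "amount": "12.50", "posted": date(2030, 1, 1)}
partial = {"txn_id": "", "amount": 5.0}

for rec in (clean, dirty, partial):
    print(rec.get("txn_id") or "<no id>", validate(rec, today))
```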

Model Selection and Validation

Different problems require different models. Classification problems need different approaches than regression forecasts. Time series data demands different techniques than cross-sectional analysis.

Validation guards against overfitting, where models memorize training data patterns that don’t generalize. Techniques include:

- Train/test splits that evaluate model performance on unseen data

- Cross-validation that tests robustness across multiple data subsets

- Backtesting that simulates how predictions would have performed historically

- A/B testing that compares model predictions against baseline approaches in production

Skip validation and you’ll deploy models that look brilliant in development and fail catastrophically in production.
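For time-ordered financial data, backtesting is often done as a walk-forward evaluation: train on everything up to a point, predict the next period, then roll forward. The sketch below uses a deliberately naive "last value" forecast as a stand-in for a real model. The series and function names are hypothetical.

```python
def walk_forward_splits(n_obs, min_train, horizon=1):
    """Yield (train_indices, test_indices); the test window always follows training."""
    start = min_train
    while start + horizon <= n_obs:
        yield list(range(start)), list(range(start, start + horizon))
        start += horizon

def backtest_last_value(series, min_train):
    """Mean absolute error of a 'repeat the last observation' forecast."""
    errors = []
    for train_idx, test_idx in walk_forward_splits(len(series), min_train):
        forecast = series[train_idx[-1]]   # stand-in for model.fit(...).predict(...)
        errors.extend(abs(series[i] - forecast) for i in test_idx)
    return sum(errors) / len(errors)

# Hypothetical monthly values
series = [10.0, 12.0, 11.0, 15.0, 14.0]
splits = list(walk_forward_splits(len(series), min_train=3))
baseline_mae = backtest_last_value(series, min_train=3)
print(splits, baseline_mae)
```

Unlike a random train/test split, this never lets the model see data from after the period it is predicting, which is the leak that most often inflates results on financial series.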

Technology Stack and Platform Selection

Platform choice matters less than fit with existing infrastructure and team capabilities. Options range from enterprise analytics suites to open-source frameworks.

Key considerations include:

- Integration with existing data sources and business systems

- Scalability to handle growing data volumes and model complexity

- Interpretability features that explain predictions to stakeholders and regulators

- Deployment capabilities that operationalize models efficiently

Verify pricing with platform providers for current costs and feature availability, as offerings change frequently.

Team Skills and Organizational Change

Technology enables analytics. People make it useful. Finance teams need data scientists who understand statistics and machine learning, domain experts who know what questions matter, and leaders who’ll act on predictions even when they contradict intuition.

Organizational change proves harder than technology implementation. Shifting from gut-feel decisions to data-driven forecasting requires cultural transformation.

Governance and Compliance Controls

Regulators scrutinize predictive analytics closely. The SEC’s July 2023 proposal targets conflicts of interest associated with predictive data analytics used by broker-dealers and investment advisers. Financial stability authorities worldwide monitor AI deployment for systemic risk.

Governance frameworks must address:

- Model risk management that documents methodology, validates accuracy, and monitors performance

- Bias detection that prevents discriminatory outcomes in credit, pricing, and service decisions

- Explainability requirements that justify predictions to customers and regulators

- Audit trails that document how models reach conclusions

According to a Bank for International Settlements analysis published in June 2025, the financial stability implications of AI call for robust governance frameworks as usage scales.

Common Pitfalls and How to Avoid Them

Implementation failures follow predictable patterns.

Overfitting and Model Degradation

Models that fit training data too perfectly often fail on new data. They’ve memorized noise instead of learning signals. Combat overfitting through regularization techniques, simpler model architectures, and rigorous validation.

Model performance degrades over time as market conditions shift. A model trained on pre-pandemic data won’t predict post-pandemic patterns accurately. Continuous monitoring and retraining keep models current.
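One widely used drift check is the population stability index (PSI), which compares how a model input or score was distributed at training time with how it is distributed now. The sketch assumes the data has already been binned into proportions. The bin values are invented, and the roughly 0.25 alert level mentioned in the comment is a rule of thumb, not a standard.

```python
import math

def population_stability_index(expected, actual, eps=1e-6):
    """PSI across matching bins; inputs are bin proportions that each sum to 1."""
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)   # avoid log(0) for empty bins
        psi += (a - e) * math.log(a / e)
    return psi

# Hypothetical credit-score distribution across four bins
training_bins = [0.25, 0.25, 0.25, 0.25]
current_bins = [0.10, 0.20, 0.30, 0.40]

psi = population_stability_index(training_bins, current_bins)
# Rule of thumb (an assumption, tune per model): above ~0.25 suggests retraining
print(f"PSI = {psi:.3f}")
```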

Data Quality and Availability Issues

Missing data, inconsistent formatting, and integration challenges plague most implementations. Address data quality early—before building models that rely on flawed inputs.

Ignoring Domain Expertise

Data scientists who don’t understand finance build technically impressive models that answer the wrong questions. Domain experts who don’t understand analytics dismiss valid predictions as black-box nonsense. Collaboration between both groups is non-negotiable.

Regulatory and Ethical Risks

Predictive models can perpetuate historical biases, create regulatory violations, and generate customer harm. Fair lending laws prohibit discrimination even when models discover correlations between protected characteristics and default risk.

Test models for disparate impact, document decision processes, and maintain human oversight of automated decisions.
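A first-pass disparate impact check compares approval rates between groups. The sketch below computes the adverse impact ratio and flags it against the four-fifths (0.8) threshold, a screening heuristic that originated in U.S. employment-selection guidance. It is not a legal test for lending, and the decision data is made up.

```python
def approval_rate(decisions):
    """Share of applications approved (1 = approved, 0 = declined)."""
    return sum(decisions) / len(decisions)

def adverse_impact_ratio(protected_group, reference_group):
    """Approval rate of the protected group relative to the reference group."""
    return approval_rate(protected_group) / approval_rate(reference_group)

# Hypothetical model decisions for two applicant groups
protected = [1, 0, 1, 0, 0]   # 40% approved
reference = [1, 1, 1, 0, 1]   # 80% approved

ratio = adverse_impact_ratio(protected, reference)
needs_review = ratio < 0.8    # four-fifths heuristic; threshold is an assumption
print(f"adverse impact ratio = {ratio:.2f}, needs review: {needs_review}")
```

A flagged ratio is a prompt for deeper analysis and human review, not proof of discrimination on its own.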

Implementation Without Clear ROI

Predictive analytics projects fail when they optimize metrics nobody cares about or solve problems that don’t impact business outcomes. Define success criteria before building models. If a prediction doesn’t change a decision, it’s trivia.

| Challenge | Impact | Mitigation Strategy |
|---|---|---|
| Overfitting | Poor real-world performance despite strong training metrics | Cross-validation, regularization, simpler models |
| Data quality | Inaccurate predictions, model failures | Governance frameworks, validation processes |
| Model drift | Degrading accuracy over time | Continuous monitoring, scheduled retraining |
| Regulatory risk | Compliance violations, fines, reputation damage | Bias testing, explainability, audit trails |
| Skill gaps | Poor model design, failed implementations | Cross-functional teams, training programs |

The Regulatory Landscape and Financial Stability

Regulators worldwide are tightening oversight of AI and predictive analytics in financial services.

The SEC proposed new requirements in July 2023 to address conflicts of interest associated with predictive data analytics by broker-dealers and investment advisers. The rules would require firms to eliminate or neutralize conflicts that place firm interests ahead of investor interests.

According to Bank of England research published in November 2024, 75% of firms surveyed were already using some form of AI in their operations, up from 53% in 2022. Every large UK and international bank, insurer, and asset manager surveyed reported AI deployment. That widespread adoption triggers financial stability concerns.

According to BIS analysis from January 2026, AI and digital finance create both opportunities and risks for financial stability. Concentration risk emerges when multiple institutions rely on similar models or data sources—correlated failures during market stress could amplify shocks.

The Federal Reserve highlighted in November 2024 that AI helps combat check fraud, which has become more prevalent. From February to August 2023, over 15,000 check fraud reports were received, associated with more than $688 million in transactions. The Treasury’s AI-enhanced fraud detection helped prevent and recover over $4 billion in fraudulent and improper payments during fiscal year 2024.

Financial institutions must balance innovation with risk management. Regulatory compliance isn’t optional—it’s the price of operating in the industry.

What’s Coming: The Evolution of Predictive Analytics

Several trends will shape the next phase of predictive analytics in finance.

Foundation Models and Large Language Models

Foundation models including large language models represent an emerging segment of AI use cases in finance. These models process unstructured data—earnings call transcripts, news articles, regulatory filings—to extract insights traditional analytics miss.

But LLMs introduce new risks. They can hallucinate facts, perpetuate biases in training data, and operate as black boxes that resist interpretation. According to GARP analysis from March 2024, financial institutions must assess whether LLMs truly outperform traditional modeling approaches before deploying them widely.

Real-Time Analytics and Streaming Data

Batch processing gives way to continuous analysis. Real-time fraud detection, instant credit decisions, and dynamic pricing require models that process streaming data and update predictions immediately.
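To show what "update predictions immediately" can look like, here is a sketch of a streaming anomaly score that keeps a running mean and variance (Welford's algorithm) and scores each new transaction without rereading history. The amounts and the class name `RunningStats` are illustrative. Production fraud systems use far richer features.

```python
import math

class RunningStats:
    """Incrementally tracks mean and variance of a stream (Welford's algorithm)."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0   # running sum of squared deviations from the mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    def zscore(self, x):
        """Standard deviations between x and the running mean (0 if undefined)."""
        if self.n < 2:
            return 0.0
        std = math.sqrt(self.m2 / (self.n - 1))
        return 0.0 if std == 0 else (x - self.mean) / std

stats = RunningStats()
for amount in [10.0, 12.0, 14.0]:   # hypothetical past transaction amounts
    stats.update(amount)

score = stats.zscore(20.0)          # score the incoming transaction first
stats.update(20.0)                  # then fold it into the running statistics
print(f"z-score of new transaction: {score:.1f}")
```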

Explainable AI and Model Transparency

Regulatory pressure and business requirements drive demand for interpretable models. Black-box neural networks face skepticism from regulators, auditors, and business leaders who need to understand how predictions arise.

Techniques like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) make complex models more transparent.
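The idea behind SHAP can be sketched without the library: a Shapley value averages a feature's marginal contribution over every order in which features could be "switched on" from a baseline. The toy scoring function and inputs below are hypothetical, and exact enumeration like this is only practical for a handful of features. The SHAP library uses approximations for real models.

```python
from itertools import permutations

def shapley_values(model, instance, baseline):
    """Exact Shapley attributions; absent features take their baseline value."""
    n = len(instance)
    totals = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        previous = model(current)
        for i in order:
            current[i] = instance[i]          # switch feature i on
            value = model(current)
            totals[i] += value - previous     # its marginal contribution
            previous = value
    return [t / len(orderings) for t in totals]

def toy_score(x):
    """Hypothetical risk score with an interaction between the first two inputs."""
    a, b, c = x
    return 2 * a + 3 * b + a * b + c

instance = [1.0, 2.0, 4.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_score, instance, baseline)
print(phi, sum(phi), toy_score(instance) - toy_score(baseline))
```

The attributions sum to the gap between the prediction and the baseline, which lets an analyst say how much each input moved a specific score.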

Automated Machine Learning and Democratization

AutoML platforms reduce the technical expertise required to build predictive models. Finance professionals without data science backgrounds can develop and deploy models using low-code tools.

Democratization creates opportunities and risks. More people building models means more innovation—and more poorly designed models entering production.

Measuring Success: Metrics That Matter

How do you know if predictive analytics delivers value? Track these metrics:

| Metric | What It Measures | Target |
|---|---|---|
| Forecast accuracy | Prediction error vs. actual outcomes (MAPE, MAE) | Better than baseline by 15%+ |
| Model precision | Share of positive predictions that are correct (fraud detection, defaults) | 85%+ for critical applications |
| Model recall | Percentage of actual positives identified | 90%+ for fraud, 75%+ for credit risk |
| Time to decision | Speed from data input to actionable prediction | Real-time for fraud, hourly for cash flow |
| ROI | Value generated vs. implementation and operating costs | 3:1 minimum within 18 months |
| Adoption rate | Percentage of decisions informed by predictions | 60%+ for target use cases |

Financial impact ultimately determines success. Models that improve forecast accuracy by 20% but don’t change decisions create zero value. Models that reduce fraud losses by $10 million justify substantial investment.
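The forecast accuracy row can be checked with two short functions. This sketch computes MAE and MAPE for a model and for a baseline, then the relative improvement. All figures are invented for the example.

```python
def mae(actual, forecast):
    """Mean absolute error, in the same units as the data."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)

def mape(actual, forecast):
    """Mean absolute percentage error; undefined when any actual value is 0."""
    return 100 * sum(abs((a - f) / a) for a, f in zip(actual, forecast)) / len(actual)

# Hypothetical quarterly revenue ($K): actuals, model forecasts, baseline forecasts
actual = [100.0, 200.0, 400.0]
model = [110.0, 190.0, 400.0]
baseline = [120.0, 180.0, 360.0]

model_mape = mape(actual, model)
baseline_mape = mape(actual, baseline)
improvement = (baseline_mape - model_mape) / baseline_mape
print(f"MAE {mae(actual, model):.2f}, MAPE {model_mape:.1f}% "
      f"vs baseline {baseline_mape:.1f}% ({improvement:.0%} better)")
```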

Frequently Asked Questions

What’s the difference between predictive analytics and traditional financial forecasting?

Traditional forecasting typically relies on linear projections from historical data and analyst judgment. Predictive analytics uses machine learning algorithms to identify complex patterns across multiple variables, adapting automatically as conditions change. While traditional methods might project next quarter’s revenue based on last year’s growth rate, predictive models incorporate dozens of factors—market conditions, customer behavior, competitor actions, economic indicators—to generate probabilistic forecasts with confidence intervals.

How much does implementing predictive analytics cost?

Implementation costs vary enormously based on scope, data infrastructure readiness, and team capabilities. Enterprise implementations at large financial institutions typically require significant investment for comprehensive frameworks spanning multiple use cases. The largest cost component is usually personnel (data scientists, engineers, and analysts) rather than technology licensing. Verify pricing with platform providers for current costs and feature availability, as offerings change frequently.

What skills do finance teams need to use predictive analytics effectively?

Effective teams blend three skill sets. Data scientists who understand statistics, machine learning algorithms, and programming languages like Python or R build and validate models. Domain experts with deep finance knowledge identify which questions matter and interpret predictions in business context. Business leaders act on those predictions, making data-driven decisions even when the output contradicts intuition. Many organizations start by hiring external specialists and gradually build internal capabilities through training programs.

How do you ensure predictive models remain accurate over time?

Model performance degrades as market conditions shift—a phenomenon called model drift. Prevent degradation through continuous monitoring that tracks prediction accuracy against actual outcomes, automated alerts when performance falls below thresholds, scheduled retraining that updates models with recent data, and validation testing that ensures refreshed models improve rather than harm accuracy. Leading institutions monitor model performance daily and retrain quarterly or when performance degrades significantly.

What are the biggest regulatory concerns around predictive analytics in finance?

Regulators focus on several areas. The SEC proposed rules in July 2023 targeting conflicts of interest when firms use predictive analytics in ways that prioritize firm interests over client interests. Fair lending regulations prohibit discrimination even when models discover correlations between protected characteristics and credit risk. Model risk management requirements demand documentation, validation, and governance. Financial stability authorities worry that widespread adoption of similar models could create correlated failures during market stress. Compliance requires explainable models, bias testing, audit trails, and human oversight.

Can small and mid-size financial institutions benefit from predictive analytics or is it only for large banks?

Cloud platforms and analytics-as-a-service offerings have democratized access. Small institutions can’t match the custom model development budgets of major banks, but they can deploy pre-built models for fraud detection, credit scoring, and cash flow forecasting at affordable costs. Many vendors offer tiered pricing that makes predictive capabilities accessible to institutions of all sizes. The key is starting with high-impact use cases that deliver measurable ROI rather than trying to build comprehensive analytics frameworks immediately.

How long does it take to see results from predictive analytics implementation?

Timeline depends on use case complexity and organizational readiness. Simple applications like fraud detection using vendor platforms can show results within 3-6 months. Complex custom models for portfolio optimization or integrated risk management typically require 12-18 months to develop, validate, and operationalize. Most organizations see measurable improvements within the first year for targeted use cases, with expanding benefits as they scale successful models to additional applications. Quick wins build organizational support for longer-term initiatives.

Taking the Next Step

Predictive analytics has shifted from competitive advantage to operational necessity. The 75% adoption rate among financial firms in 2024 will climb higher. The institutions that master data-driven forecasting will outperform those that don’t—not by small margins but by fundamental differences in risk management, capital efficiency, and strategic agility.

Start with use cases that deliver clear ROI. Fraud detection, credit risk assessment, and cash flow forecasting offer measurable benefits and manageable implementation complexity. Build data infrastructure and team capabilities incrementally rather than attempting comprehensive transformation immediately.

The technology works. The models perform. The question isn’t whether predictive analytics belongs in finance—it’s whether your organization will deploy it effectively or fall behind competitors who do.

The window for early adoption has closed. What separates institutions now is execution quality, not the decision to begin.