Quick Summary: LLM pricing varies widely across providers, with input tokens ranging from $0.10 to $5 per million and output tokens from $0.40 to $25 per million as of March 2026. OpenAI’s GPT models, Anthropic’s Claude, and Google’s Gemini dominate the market with different price-performance tiers. Understanding token-based pricing, context windows, and usage patterns is essential for optimizing costs while maintaining quality.

The explosion of large language model APIs has created a complex pricing landscape. Organizations face critical decisions about which models deliver the best value for their specific use cases.

Model choice isn’t just about finding the cheapest option, though. The economics of LLM inference involve multiple factors: token pricing, context window limits, latency requirements, and hidden costs that can multiply your bill by 2-3x.

This comparison analyzes pricing across major providers including OpenAI, Anthropic, Google, and emerging alternatives. The data reflects current pricing as of March 2026, though providers regularly adjust their rates.

Understanding Token-Based Pricing Models

LLM providers charge based on tokens processed. A token represents roughly 4 characters of text or about 0.75 words in English. For example, the string “ChatGPT is great!” is encoded into six tokens: [“Chat”, “G”, “PT”, ” is”, ” great”, “!”].
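The rules of thumb above can be turned into a quick estimator for early planning. This is a minimal sketch, not a real tokenizer: the function names are made up for illustration, and the ratios only hold roughly for English text. For billing-grade numbers, use the tokenizer your provider publishes.

```python
import math

CHARS_PER_TOKEN = 4      # rule of thumb quoted above
WORDS_PER_TOKEN = 0.75   # rule of thumb quoted above


def estimate_tokens_from_chars(text: str) -> int:
    """Rough token estimate from character count (English text)."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_tokens_from_words(text: str) -> int:
    """Rough token estimate from whitespace-separated word count."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


sample = "ChatGPT is great!"
print(estimate_tokens_from_chars(sample))  # 17 characters -> 5
print(estimate_tokens_from_words(sample))  # 3 words -> 4
```

Both estimates land below the six tokens the real tokenizer produces for this string, which shows why heuristics like these are only suitable for ballpark budgeting.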

Most providers split pricing into two components: input tokens (what developers send to the model) and output tokens (what the model generates). Output tokens typically cost 3-5x more than input tokens because generation requires more computational resources.

The total number of tokens in an API call affects three critical factors: how much the call costs, how long it takes to complete, and whether it fits within the model’s context window limits.

Context Windows and Caching

Context windows define the maximum tokens a model can process in a single request. As of early 2026, context windows have expanded dramatically. Anthropic’s Claude Opus 4.6 features a 1M token context window in beta, while most production models offer 128K-200K token windows.

Larger context windows enable more sophisticated applications but increase costs proportionally. A 100K token input at $3 per million tokens costs $0.30 per request—multiply that across thousands of daily queries and costs escalate quickly.

Prompt caching provides significant savings. OpenAI prices cached input at 25-50% of standard input costs, depending on the model. According to OpenAI’s pricing documentation, GPT-4.1 charges $2.00 per million input tokens but only $0.50 per million cached input tokens (a 75% discount), while GPT-4o charges $2.50 and $1.25 respectively (a 50% discount).
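To see what caching does to a single request, the sketch below splits the input into cached and uncached tokens and prices each part separately. The function name and the 10,000/8,000 token split are illustrative assumptions; the rates are the GPT-4.1 figures quoted above.

```python
def input_cost_usd(total_input_tokens: int, cached_tokens: int,
                   input_price_per_m: float, cached_price_per_m: float) -> float:
    """Input-side cost of one request when part of the prompt is cached."""
    if not 0 <= cached_tokens <= total_input_tokens:
        raise ValueError("cached_tokens must be between 0 and total_input_tokens")
    uncached_tokens = total_input_tokens - cached_tokens
    return (uncached_tokens * input_price_per_m
            + cached_tokens * cached_price_per_m) / 1_000_000


# Hypothetical request: 10,000 input tokens, 8,000 of them a cached system prompt.
with_cache = input_cost_usd(10_000, 8_000, 2.00, 0.50)
without_cache = input_cost_usd(10_000, 0, 2.00, 0.50)
print(f"with cache: ${with_cache:.4f}, without: ${without_cache:.4f}")
```

Under these assumptions the input cost drops from about $0.02 to about $0.008 per request. Output tokens are billed separately and are not affected by input caching.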

Major Provider Pricing Breakdown

The competitive landscape includes three dominant players and several emerging alternatives. Each provider offers multiple model tiers optimized for different use cases.

OpenAI Pricing Structure

OpenAI’s GPT models span multiple intelligence and cost tiers. As outlined in community discussions from January 2026, pricing continues evolving as new models launch.

| Model | Input (per 1M tokens) | Cached Input (per 1M tokens) | Output (per 1M tokens) | Context Window |
|---|---|---|---|---|
| GPT-4.1 | $2.00 | $0.50 | $8.00 | 128K |
| GPT-4o | $2.50 | $1.25 | $10.00 | 128K |
| GPT-4-32k (deprecated) | $60.00 | N/A | $120.00 | 32K |

OpenAI deprecated GPT-4-32k models with shutdown scheduled for June 6, 2025. According to OpenAI’s deprecation documentation, existing users had limited time to migrate to newer models like GPT-4o.

The GPT-5.4 model family represents OpenAI’s latest advancement. Released in March 2026, GPT-5.4 mini became available to Free and Go users through ChatGPT’s “Thinking” feature. For paid users, GPT-5.4 mini serves as a rate limit fallback for GPT-5.4 Thinking.

Anthropic Claude Pricing

Anthropic’s Claude models have emerged as strong competitors to OpenAI, particularly for coding and agentic tasks. The company released Claude Opus 4.6 in February 2026 and Claude Sonnet 4.6 shortly after.

Claude Opus 4.6 maintains pricing at $5 per million input tokens and $25 per million output tokens despite significant capability improvements. According to Anthropic’s announcement, this pricing remains unchanged from the previous Opus 4.5 version.

Claude Sonnet 4.6 offers more accessible pricing at $3 per million input tokens and $15 per million output tokens—the same rate as Sonnet 4.5. Anthropic describes Sonnet 4.6 as approaching Opus-level intelligence at a more practical price point for everyday tasks.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context Window | Best For |
|---|---|---|---|---|
| Claude Opus 4.6 | $5.00 | $25.00 | 1M (beta) | Complex reasoning, coding, agents |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 1M (beta) | Balanced performance and cost |
| Claude Opus 4.5 | $5.00 | $25.00 | 200K | Legacy applications |

The 1M token context window in Claude Opus 4.6 represents a first for Anthropic’s Opus-class models. This expansion enables handling entire codebases or extensive documents in single requests.

Google Gemini Pricing

Google’s Gemini models compete aggressively on price, particularly for high-volume use cases. The Gemini family includes multiple tiers optimized for different performance requirements.

Pricing structures for Gemini models vary significantly based on tier and usage volume. Google positions Gemini as a cost-effective alternative for applications requiring strong performance without premium pricing.

Hidden Costs and Pricing Mechanics

The advertised per-token price tells only part of the story. Several hidden factors can dramatically increase actual costs.

Output Token Multipliers

Output tokens consistently cost 3-5x more than input tokens across all providers. An application that generates long responses will face disproportionately higher costs than one processing large inputs but generating concise outputs.

Setting maximum output tokens (max_tokens parameter) helps control costs. If set too low, responses get cut off before completion. If set too high, the model may generate unnecessary content, especially at higher temperature settings that encourage creativity.

Rate Limits and Fallback Costs

Most providers implement rate limits based on requests per minute, tokens per minute, or both. When applications hit these limits, they either fail or fall back to alternative models.

OpenAI’s GPT-5.4 rollout in ChatGPT illustrates the fallback pattern. According to OpenAI’s model release notes from March 2026, paid users are served GPT-5.4 mini when GPT-5.4 Thinking rate limits are reached. This maintains service continuity, though potentially at a different cost structure.
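For API-based applications, the fail-or-fall-back behavior described in this section usually has to be built by the developer. The sketch below shows one common shape: retry the primary model with exponential backoff, then switch to a cheaper fallback. `RateLimitError`, `call_model`, and the model names are hypothetical placeholders, not any provider's actual SDK; in practice you would catch whatever exception your client library raises for HTTP 429 responses.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for a provider's rate limit (HTTP 429) error."""


def call_with_fallback(call_model, primary, fallback,
                       max_retries=3, base_delay=1.0, sleep=time.sleep):
    """Retry the primary model with exponential backoff, then fall back."""
    for attempt in range(max_retries):
        try:
            return call_model(primary)
        except RateLimitError:
            # Wait roughly 1s, 2s, 4s, ... plus a little jitter so that
            # many clients do not all retry at the same instant.
            sleep(base_delay * 2 ** attempt + random.uniform(0, 0.1))
    # The primary model is still rate limited: degrade to the fallback.
    return call_model(fallback)
```

The `sleep` parameter is injectable so the logic can be tested without real delays. Note that a fallback model changes both the quality and the per-token price of the response, so it is worth logging which model actually served each request.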

Context Window Economics

Larger context windows enable more sophisticated applications but increase costs linearly. Memory requirements grow as well: with a context length of 128K tokens, the half-precision KV cache of Llama2-7B reaches 64GB, calculated as num_layers × num_kv_head × head_dim × seqlen × sizeof(fp16) × 2, where the final factor of 2 accounts for storing both keys and values.
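The KV cache formula above can be checked numerically. The sketch below plugs in the published Llama2-7B configuration (32 layers, 32 key/value heads, head dimension 128); those values are not stated in this article and are supplied here as assumptions, as is the reading of "128K" as 128 × 1024 tokens.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """KV cache size for one sequence; the trailing 2 covers keys and values."""
    return num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value * 2


# Assumed Llama2-7B configuration with fp16 (2 bytes per value).
size = kv_cache_bytes(num_layers=32, num_kv_heads=32, head_dim=128,
                      seq_len=128 * 1024)
print(size / 2**30)  # size in GiB -> 64.0
```

Because `seq_len` enters the product directly, doubling the context length doubles the cache, which is the linear growth the surrounding text describes.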

Research on LLM decoding efficiency indicates the KV cache size grows linearly with sequence length, creating memory bottlenecks during decoding that translate to higher operational costs.

Enterprise Pricing Considerations

Enterprise deployments face different economics than individual developers or small teams. Volume discounts, custom pricing, and deployment options significantly impact total cost of ownership.

Cloud API vs. Self-Hosted Deployment

Organizations can subscribe to commercial LLM services or deploy models on their own infrastructure. Research published on arXiv analyzing on-premise LLM deployment found that breaking even with commercial services requires careful analysis of usage patterns and infrastructure costs.

The study defined four criteria for model selection: performance parity within 20% of top commercial models, operational compatibility, security requirements, and cost efficiency at scale. For high-volume applications, self-hosting can reduce costs, but upfront infrastructure investment remains substantial.

Hierarchical Architecture Cost Optimization

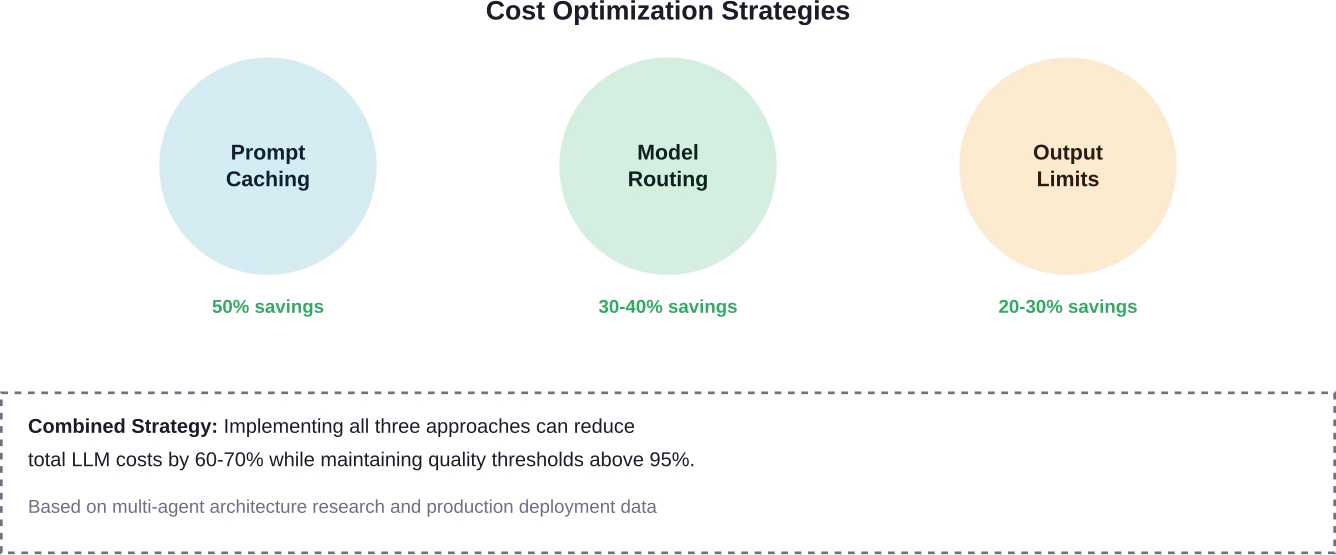

Recent benchmarking research on multi-agent LLM architectures for financial document processing revealed that hierarchical architectures provide the best cost-accuracy tradeoff. These systems achieved 97.7% of reflexive architecture accuracy at 60.9% of the cost.

The research demonstrated that semantic caching, model routing, and adaptive processing can significantly reduce operational costs without sacrificing quality. These techniques become increasingly important as applications scale to millions of daily requests.
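Of the techniques mentioned, model routing is the simplest to illustrate. The sketch below is a deliberately naive router and is not the architecture from the cited research: the `Tier` class, the `route` function, the `needs_deep_reasoning` flag, and the length threshold are all hypothetical. The prices are the Claude Sonnet 4.6 and Opus 4.6 rates listed earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    input_price_per_m: float   # USD per 1M input tokens
    output_price_per_m: float  # USD per 1M output tokens


MID_TIER = Tier("mid-tier", 3.00, 15.00)   # Sonnet 4.6 rates
FLAGSHIP = Tier("flagship", 5.00, 25.00)   # Opus 4.6 rates


def route(prompt: str, needs_deep_reasoning: bool,
          long_prompt_chars: int = 20_000) -> Tier:
    """Send only hard or very long requests to the expensive tier."""
    if needs_deep_reasoning or len(prompt) > long_prompt_chars:
        return FLAGSHIP
    return MID_TIER


print(route("Summarize this paragraph.", needs_deep_reasoning=False).name)
print(route("Refactor this module.", needs_deep_reasoning=True).name)
```

A production router would typically decide based on a classifier or on confidence signals from a first-pass model rather than a hand-set flag, but the cost logic is the same: the fewer requests that reach the flagship tier, the lower the blended price.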

Emerging Alternatives and Regional Pricing

Beyond the major three providers, several alternatives offer competitive pricing for specific use cases.

DeepSeek and Open Source Models

DeepSeek has gained attention for aggressive pricing on capable models. The company positions itself as a cost-effective alternative for applications that don’t require absolute cutting-edge performance.

Open source models deployed through cloud GPU providers like RunPod offer another path. These services charge by GPU hour rather than per token, making costs more predictable for high-volume applications.
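To compare GPU-hour billing with per-token API pricing, it helps to convert an hourly rate into an effective price per million tokens. The sketch below does that conversion. The function name is made up, and the $2.00 hourly rate, 1,000 tokens/second throughput, and 50% utilization are hypothetical numbers chosen only to show the arithmetic, not quotes from any provider.

```python
def self_hosted_cost_per_million(gpu_hourly_usd: float,
                                 tokens_per_second: float,
                                 utilization: float = 1.0) -> float:
    """Effective USD per 1M tokens for a GPU billed by the hour."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return gpu_hourly_usd / tokens_per_hour * 1_000_000


# Hypothetical: $2.00/hour GPU, 1,000 tokens/s aggregate throughput,
# busy only half the time.
cost = self_hosted_cost_per_million(2.00, 1_000, utilization=0.5)
print(f"${cost:.2f} per million tokens")
```

Utilization is the term that usually decides the comparison: an idle GPU still costs the full hourly rate, so the effective per-token price doubles every time utilization halves.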

Specialized Model Providers

Mistral, Meta’s Llama family, and NVIDIA’s models each serve specific niches. According to a model comparison analysis published in August 2025, model selection should consider design purpose, technical specifications, and optimal use cases beyond just pricing.

The analysis emphasizes that different models excel at different tasks. Choosing based solely on lowest cost often leads to poor results and expensive reprocessing.

Practical Cost Calculation Framework

Estimating actual costs requires understanding application-specific usage patterns. The four critical parameters are: average input tokens per request, average output tokens per request, expected requests per day, and chosen model tier.

A simple calculation: (Input tokens × Input price + Output tokens × Output price) × Daily requests × 30 days = Monthly cost.

For example, an application processing 10K input tokens and generating 2K output tokens per request, running 1,000 requests daily on Claude Sonnet 4.6: (10,000 × $0.000003 + 2,000 × $0.000015) × 1,000 × 30 = $1,800 per month.
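The same formula is easy to wrap in a small function so different models and volumes can be compared side by side. This is a minimal sketch: the function and parameter names are illustrative, prices are expressed per million tokens as in the tables above, and the 30-day month matches the formula in the text.

```python
def monthly_cost_usd(input_tokens: int, output_tokens: int,
                     input_price_per_m: float, output_price_per_m: float,
                     requests_per_day: int, days: int = 30) -> float:
    """Monthly spend for a fixed per-request token profile."""
    # Price-weighted tokens per request, still scaled by 1M.
    per_request_scaled = (input_tokens * input_price_per_m
                          + output_tokens * output_price_per_m)
    return per_request_scaled * requests_per_day * days / 1_000_000


# The worked example: 10K input + 2K output tokens, 1,000 requests/day,
# Claude Sonnet 4.6 at $3 / $15 per million tokens.
print(monthly_cost_usd(10_000, 2_000, 3.00, 15.00, 1_000))  # 1800.0
```

Swapping in the Claude Opus 4.6 rates ($5 / $25) for the same workload gives $3,000 per month, which makes the tier decision concrete.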

Real talk: most applications underestimate actual token usage by 2-3x during planning phases. Buffer estimates accordingly.

Performance vs. Price Considerations

The cheapest model rarely delivers the best value. According to research analyzing the economics of AI inference, the “marginal cost” of LLM inference varies significantly based on compute efficiency and model architecture.

Studies analyzing query approximation using lightweight proxy models demonstrated that strategic model selection can achieve 100x cost and latency reduction. The research showed proxy models scoring above 90% accuracy while dramatically reducing costs for specific query types.

Local deployment on consumer hardware presents another option. Research examining local language model efficiency found that local LMs can accurately respond to 88.7% of single-turn chat and reasoning queries, though with significant latency tradeoffs compared to data center deployments.

Latency and Cost Tradeoffs

Faster models typically cost more or require premium tiers. Applications with strict latency requirements may need to accept higher per-token costs to meet performance SLAs.

Latency expectations vary by model and deployment: flagship models typically deliver 20-40 tokens/second, mid-tier models achieve 40-80 tokens/second, and optimized models can exceed 100 tokens/second on dedicated infrastructure.
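Those throughput figures translate directly into waiting time for a response. The sketch below estimates generation time for a 2,000-token answer at several speeds taken from the ranges above. It is a simplification that ignores time to first token, network overhead, and queueing, and the function name is illustrative.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to stream a response, excluding time to first token."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Speeds sampled from the ranges quoted above.
for label, speed in [("flagship", 20), ("mid-tier", 40), ("optimized", 100)]:
    print(f"{label}: {generation_seconds(2_000, speed):.0f} s")
```

At these rates the same 2,000-token response takes 100, 50, and 20 seconds respectively, which is why output length caps matter for latency as well as for cost.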

Compare Models Carefully and Build Around the Right One

Comparing 15+ LLMs on price alone rarely gives the full picture. The real cost comes from how models are implemented – data quality, fine-tuning strategy, and infrastructure choices all shape what you actually pay over time. AI Superior works across the full lifecycle, from data preparation and model selection to training, optimization, and deployment, helping teams choose and configure models based on real use cases rather than surface-level pricing.

In practice, this often means avoiding overpowered models where they are not needed, or combining approaches like fine-tuning and hybrid setups instead of relying on a single model or API. The focus is on building systems that run efficiently in production, not just comparing benchmarks. If you are evaluating multiple LLMs and trying to understand what they will actually cost in use, it makes sense to review your setup early. Reach out to AI Superior to align model choice with real cost, not just listed pricing.

Future Pricing Trends

LLM pricing continues evolving rapidly. Several clear trends emerged through 2025 and into 2026.

Context windows expanded dramatically while per-token prices held steady or declined. Claude Opus 4.6 and Sonnet 4.6 both feature 1M token context windows (in beta) at the same per-token pricing as the previous 200K window models, a fivefold increase in context capacity with no change in rates.

Model deprecation cycles accelerated. OpenAI’s deprecation of GPT-4-32k variants within 12-18 months of release signals faster iteration cycles. Organizations must plan for regular model migrations and associated development costs.

The gap between flagship and mid-tier models narrowed. Claude Sonnet 4.6 approaches Opus-level intelligence at 60% of the cost, according to Anthropic’s announcements. This compression of capability across price tiers benefits cost-conscious deployments.

FAQ

What’s the cheapest LLM for production use in 2026?

DeepSeek and Google Gemini offer the lowest per-token costs among major providers, but “cheapest” doesn’t always mean best value. Total cost depends on accuracy requirements, reprocessing needs, and context window demands. For many applications, mid-tier models like Claude Sonnet 4.6 at $3/$15 per million tokens provide better overall economics than rock-bottom pricing with lower quality outputs.

How much does prompt caching actually save?

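OpenAI’s cached input pricing discounts repeated prompt segments by 50% on GPT-4o ($1.25 vs. $2.50 per million tokens) and by 75% on GPT-4.1 ($0.50 vs. $2.00 per million tokens). For applications with consistent system prompts or reference documents, this can translate to a total cost reduction of roughly 30-50%, depending on how much of each request is cached; output tokens are not discounted. The savings compound most in applications making thousands of similar requests with shared context.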

Should enterprises self-host LLMs or use APIs?

Research on on-premise deployment economics suggests breaking even requires consistent high-volume usage and appropriate technical infrastructure. Applications processing less than 100M tokens monthly typically find API pricing more economical. Above that threshold, self-hosting becomes viable, but factor in DevOps overhead, model updates, and infrastructure management costs beyond raw compute expenses.

Why do output tokens cost more than input tokens?

Generation requires significantly more computational resources than processing the prompt. Input tokens are processed together in a single parallel pass, while output tokens are generated one at a time, each requiring its own forward pass to predict the next token. Providers reflect this difference in pricing, with output tokens typically costing 3-5x more than input tokens.

How do I estimate token usage for my application?

Use tokenizer tools provided by each model vendor to measure typical requests. OpenAI, Anthropic, and Google all offer tokenizer APIs or web tools. Test with representative sample data, multiply by expected request volumes, and add a buffer of at least 50% for variation. Because most planning estimates undercount actual usage by 2-3x, a larger margin is safer for applications without production data.

What happens when I hit rate limits?

The response depends on provider and tier. Some implementations queue requests, others return rate limit errors requiring retry logic, and some fall back to alternative models. In ChatGPT, for example, GPT-5.4 Thinking falls back to GPT-5.4 mini for paid users when rate limits are reached. Check specific provider documentation for tier-specific rate limit handling.

Are there volume discounts for LLM APIs?

Most providers offer enterprise pricing with volume discounts, though terms aren’t publicly listed. Organizations processing 1B+ tokens monthly should contact sales teams directly. Discounts typically range from 10-30% depending on commitment levels and usage volumes. Anthropic, OpenAI, and Google all maintain enterprise sales programs with custom pricing.

Conclusion

LLM pricing landscapes remain complex and rapidly evolving. As of March 2026, per-token costs range from under $1 per million to $25 per million depending on model tier and token type.

The economics favor strategic model selection over simply choosing the cheapest option. Claude Sonnet 4.6 at $3/$15 per million tokens delivers near-flagship performance for everyday tasks. OpenAI’s GPT-4.1 at $2/$8 provides strong general reasoning at competitive rates. Claude Opus 4.6 commands premium pricing at $5/$25 but leads for complex coding and agentic tasks.

Hidden costs matter as much as headline pricing. Prompt caching saves 50-75% on repeated inputs at the OpenAI rates listed above. Output token management prevents cost explosions from verbose responses. Hierarchical architectures cut costs by roughly 39% (running at 60.9% of the cost of reflexive architectures) while retaining 97.7% of their accuracy.

Organizations should calculate total cost of ownership including rate limit handling, model deprecation cycles, and quality-related reprocessing needs. The cheapest per-token price often generates the highest total cost.

Start by benchmarking representative workloads across candidate models. Measure not just accuracy but total tokens consumed per successful task completion. Factor in specific usage patterns, latency requirements, and context window needs. Then make an informed decision based on true value rather than sticker price alone.